13 Learning from practice

mentoring, feedback, and portfolios

Competence An integrated body of knowledge, skills, and (professional) attitudes enabling proficient performance in certain real-life settings.

Mentoring (coaching or supervising) One-to-one learning activity with the goal of stimulating the learning and professional development of a learner.

Metacognition See main Glossary, p 341.

Multi-source feedback (also known as 360-degree feedback) A type of performance assessment that provides feedback on various tasks and behaviours from various reviewers. Ideally, it gathers information from people who are qualified and have credibility to judge clinical practice, such as: (1) peers familiar with a similar domain of practice; (2) members of the health care team such as nurses and physician assistants; (3) patients as the recipients of health care.

Personal development plan A list of educational needs, development goals, and actions and processes, compiled by learners and used in systematic management and periodic reviews of learning.

Portfolio See main Glossary, p 341.

Reflection; reflective learning See main Glossary, p 342.

Outline

In this chapter, we focus on learning from practice in medical workplaces, for which self-directed assessment seeking and reflection are critical and a mentor is of great importance. First, we introduce two exemplar routines for learning from practice that mentors can use to stimulate self-directed assessment seeking and reflection. Next, we elaborate on strategies for providing feedback. Finally, we describe instruments that can be used for self-directed assessment seeking and reflection: multi-source feedback (MSF) and portfolios.

Introduction

Constructivist theories are dominant in the contemporary learning sciences and underlie many educational reforms. In constructivist theories, the view of learning is that people construct knowledge and understanding by interpreting information, processes, and experiences, building on what they already know (Bransford et al, 2000). This implies that what people consider “reality” is in fact their own construction of reality based on their personal knowledge. It is this personal reality that guides a person’s actions and perceptions. Bransford and his colleagues emphasise that constructivism is a theory of knowing and not a theory of pedagogy (teaching). However, it does have major consequences for teaching. A logical extension of the view that new knowledge must be constructed from existing knowledge is that teachers need to pay attention to incomplete understandings, false beliefs, and naive renditions of concepts that learners may have about a given subject. Teachers then need to build on these ideas in ways that help each student achieve a more mature understanding. If students’ initial ideas are ignored, the understandings they develop can be very different from those that the teacher intends (Bransford et al, 2000, p 10). This does not imply that teachers should never tell students anything directly (teaching by telling can be very effective in some situations) but Bransford and his colleagues emphasise the importance of helping people take control over their own learning. People must learn to recognise when they understand and when they need more information. They refer to people’s abilities to predict their performances on various tasks and monitor their current level of mastery and understanding as metacognition. Teaching practices congruent with a metacognitive approach to learning include those that focus on self-assessment and reflection.

Self-assessment, which is considered in detail in Chapter 8, is widely propagated in the field of medical education as a tool for improvement. It is a self-regulatory proficiency that is powerful in selecting and interpreting information in ways that provide feedback (Hattie and Timperley, 2007). Generally, effective learners create internal feedback and cognitive routines while they are engaged in academic tasks. Less effective learners have minimal self-regulation strategies and they depend much more on external factors (such as the teacher or the task) for feedback. Learners with well-developed self-assessment skills can evaluate their levels of understanding, their effort and strategies used on tasks, their attributions and the opinions of others about their performance, and their improvement in relation to their goals and expectations. Recent reviews, however, highlight that, for cognitive reasons (information neglect and memory biases) and socio-biological reasons (doctors being adaptive to maintain an optimistic view of themselves), the adequacy of self-assessment as an individually conducted internal activity is limited (Davis et al, 2006; Loftus, 2003). Eva and Regehr (2008) propose that learners make better use of external information about their performance through self-directed assessment seeking, which they describe as a process by which one takes personal responsibility for looking outward and explicitly seeking feedback and information from external sources of assessment data to direct performance improvements that can help validate one’s self-assessment.

Reflection (see also Chapter 2) is a strategy which Eva and Regehr describe as “a conscious and deliberate reinvestment of mental energy aimed at exploring and elaborating one’s understanding of the problem one has faced (or is facing) rather than aimed at simply trying to solve it” (Eva and Regehr, 2008, p 15). Hatton and Smith (1995) distinguish three types of reflection. The first type is concerned with the means to achieve certain ends. The second type is not only about means but also about goals, the assumptions upon which they are based, and the actual outcomes. The third type of reflection is referred to as critical reflection. Here, moral and ethical criteria are also taken into consideration. Judgements are made about whether professional activity is equitable, just, and respectful or not. Hatton and Smith emphasise that these three types of reflection should not be viewed as hierarchical. Different (educational) contexts and situations lend themselves more to one kind of reflection than to another. Other authors writing about reflection emphasise the impact of reflection on action. Among them Schön’s work on reflection by professionals is undoubtedly the most influential (Schön, 1983). He distinguished between reflection-in-action and reflection-on-action. Reflection-in-action implies conscious thinking and modification during task performance. Reflection-on-action implies what we recently described as “letting future behaviour be guided by a systematic and critical analysis of past actions and their consequences” (Driessen et al, 2008, p 827). Most learners tend to find reflecting on their own learning difficult. For example, students arriving at university fresh from secondary education are not used to reflecting deliberately on their learning. Outside the domain of medical education, there is substantial evidence that it takes considerable effort for learners to learn to reflect on their actions or learning (Ertmer and Newby, 1996). 
Korthagen et al (2001) observed that some resistance was common when students were first introduced to reflective learning because their prior experiences were with education in which the transfer of knowledge was the goal. Thus, students’ images and expectations of education tend not to be in keeping with learning from reflection.

Mentoring gives a push in the right direction by asking the right questions and thus focusing the learner’s thinking processes. Mentors can also help learners with self-directed assessment seeking. Of course, they can give feedback on performance themselves, but they can also show learners where else to seek information and how to present it. Finally, after self-directed assessment seeking and reflection, they can help learners identify and define learning goals. An effective mentor can have a positive influence on maximising the learner’s performance and developing their talent (Jowett and Stead, 1994). A mentor needs to be good at providing constructive feedback, helping learners think about themselves by asking questions and confronting them with discrepancies, and creating a challenging but safe learning environment (Driessen et al, 2008).

In the next section, we introduce two examples of routines for learning from practice that mentors can use to stimulate self-directed assessment seeking and reflection.

Routines for learning from practice

Various routines for learning from practice have been proposed in previous publications (e.g. Kolb, 1984; Korthagen et al, 2001; NHS, 2001). All of them have in common that they include experience and reflection, and most of them also include a phase of systematic evaluation. In this section we describe two of those routines: the National Health Service (NHS) appraisal routine and Korthagen’s model for cyclic professional development. We use the description of the latter to formulate suggestions for mentors who aim to stimulate their mentees’ self-directed assessment seeking, reflection, and deliberate practice.

Appraisal

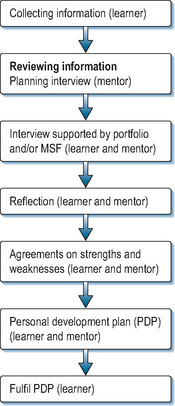

In 2001, the UK National Health Service (NHS, 2001) introduced an appraisal routine for consultants and general practitioners. This routine was defined as “a structured process of self-reflection”. Appraisal sets out to enhance professional development and learning. It invites doctors (called “appraisees”) to review their professional activities comprehensively and to identify areas of strength and need for development. The essence of appraisal is a confidential conversation, led by a trained appraiser (the term that is used for a mentor in appraisal procedures), supported by preparatory documentation such as a portfolio which is based on the General Medical Council’s (GMC) components of good medical practice (General Medical Council, 2006). Since 2007, MSF tools (see later) have also been introduced to inform NHS consultant appraisal. The appraisal conversation should be followed by a period of reflection, after which the appraiser gives feedback. Then, an action plan is agreed, which the appraiser can use to steer development and learning (see Figure 13.1).

Early research findings have shown that the majority of appraisees feel encouraged and supported in their professional development by the appraisal process (Boylan et al, 2005). The most significant perceived benefit has been the opportunity to reflect on individual performance with a supportive colleague. There are, however, repeated concerns about time, confusion with revalidation, and worries about covering health and probity queries (Lewis et al, 2003). Reported changes in performance have included: being more reflective, updating medical bags, and improved record keeping. It is not yet clear whether appraisal truly meets expectations and leads to an improvement of patient care, not least because appropriate outcome measures are not yet available.

The ALACT model

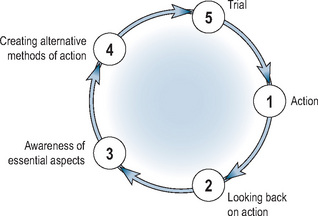

Korthagen et al (2001) developed a model for cyclic professional development, aimed at stimulating reflection on experience. They refer to this model as the “ALACT model”, named after the first letter of the five phases they distinguished, namely Action, Looking back on action, Awareness of essential aspects, Creating alternative methods of action, and Trial (Figure 13.2).

Action

The cycle starts with an action undertaken for a specific purpose (e.g. to develop a specific competence). Learners can be helped to improve their existing routines and, concurrently, acquire new ones by pre-selecting experiences from which they can learn, for example a mixture of patients who are more or less easy to diagnose.

Looking back on action: self-assessment seeking behaviour

The ALACT cycle then moves to the stage where learners look back on a previous action, usually when that action was not successful or something unexpected happened. This looking back on action is assumed to be accompanied by an evaluation of whether the goals were realised and the learner’s part in this. In many cases this can be regarded as a form of self-assessment.

The role of mentors is to encourage learners to seek information about their performance from various sources. Information can come from external sources like assessments, practice guidelines, or formal feedback. Careful scrutiny of their own performance may be challenging for learners. Effective mentors have an important role in creating a safe environment by distinguishing between learners as individuals and their performance. If learners feel unsafe, they will be reluctant to acknowledge learning needs. Mentors need to help learners focus on descriptions of what happened and stimulate learners to be concrete. When learners give more general evaluations about a situation and their performance, mentors should ask questions such as, “What went well?”, “What went wrong?”, “How did you solve that?”, and “What effect did it have?” Furthermore, it is important that the mentor stimulates learners to take a broader perspective than their own. To realise this, Korthagen et al propose questions for mentors such as:

Awareness of essential aspects: reflection

After conclusions have been drawn about the quality of performance and the characteristics of the situation, the next step in the ALACT model is to foster awareness of essential aspects. In this phase, learners try to develop a new and better understanding of what has happened: that is, they reflect on their performance. In this phase, a mentor is also essential to ensure that learners integrate external feedback into their self-concepts. The mentor can help the learner examine the data, see patterns, and identify cause–effect associations. Two strategies that are effective in making learners aware of essential aspects are confrontation and generalisation. A mentor can confront the learner with discrepancies between: self-assessment and external feedback; verbal and non-verbal expressions; how the learner sees himself and how the mentor sees the learner; and what the learner says he does and what he actually does. This confrontation can lead to an “Aha-moment”, which is a catalyst for reflection. Communication strategies a mentor can use include statements such as:

Mentors can also help learners see general patterns. Because of their experience and a certain distance, it is easier for mentors to recognise patterns in the data. Learners are often too immersed in one situation to see similarities with other situations. Questions that help learners generalise across experiences are

The perspective of reflection can be on means, goals, or moral/ethical considerations (see earlier). The mentor can focus on the means a learner used to achieve a goal and try to understand why the strategy was successful or not. A mentor can ask questions like: “Which strategies did you consider?”, “Why did you select this strategy?”, “What are the advantages and disadvantages of the strategy you used?”, “Which part of your strategy was effective and which part was not effective?”, “Why was it effective or not?”, and “Would this strategy have been more or less effective in a different situation?” The perspective can also be on whether the learner had selected a suitable goal for this particular situation, leading to questions such as: “What did you want to achieve?”, “Were you successful?”, “What do you consider successful?”, “Why is this particular goal important?”, and “Why did you pursue this goal?” Finally, learners may consider what they want to achieve from a moral or ethical perspective. A mentor can stimulate moral or ethical reflection by asking questions like: “Do you think patients/patients’ families/medical colleagues/nurses/administrators are satisfied with these outcomes?” and “What are their primary interests?”

Creating or identifying alternative methods of action

Reflection may trigger a search for alternative strategies or abandonment of original goals. It is important to explain (new) goals and alternative strategies. Reflection (awareness of essential aspects), stimulated by mentoring, is often not enough to induce behavioural change by itself. Barriers to change often comprise diverse personal, professional, and contextual factors. Goal setting, follow-up, and reminders are important strategies that can be used by mentors to motivate learners to improve their actual daily practice. The fact that people who are less oriented to goals and outcomes are less likely to take positive steps to change has been highlighted (Shute, 2008). Ericsson’s research predicts that expertise will grow not just from the weight of experience but also from engaging in activities specifically designed or selected to improve performance (Ericsson, 2006).

The mentor has an important task here. Learners who work with a mentor set more specific goals and improve more than those who do not work with a mentor (Smither et al, 2003). A personal development plan (PDP), which records what was agreed between mentor and learner about what should be done differently and which goals should be achieved, can be useful. This PDP can be on the agenda at the next meeting between mentor and learner, when what has already been achieved and what areas still need work can be discussed. A problem is that plans in PDPs are often too vague. It is therefore important that mentors stimulate learners to be concrete. To ensure future change in practice, mentors need to commit learners to: (1) identify the goals, (2) develop a SMART (Specific, Measurable, Acceptable, Realistic, and Time-bound) plan to achieve their goals (Box 13.1), (3) explore factors that can impede improvement, and (4) provide ways to overcome barriers.

Trial

The last step in the ALACT cycle is trialling, which starts a new cycle in the spiral of professional development. It is well known that, even when adequate goals are set, feedback is not necessarily followed by change. Barriers such as lack of time and support, or even the belief that changed behaviour will have negative consequences, can impede the implementation of change. If, as a mentor, you are convinced that goals are SMART, you should help learners to identify barriers that might stand in the way of improvement. Mentors and learners should also consider whether any additional resources are needed to overcome the barriers explored. Even when changes in practice are made, learners can relapse into old routines and improvements can decline because of lack of time, resources, and information. Reminders have been shown to be very effective in preventing relapse into old routines and decline of performance improvement. Reminders are meant to prevent busy, sometimes forgetful students or doctors from falling back into routines when their workloads are heavy. Mentors can organise such reminders, which do not need to be cumbersome; a 10-minute phone call asking how things are going at this moment can be effective.

Providing feedback

We have seen that feedback is essential for stimulating reflection. In this section, we highlight the goals of formative feedback and factors that are essential for it to exercise its full effects.

Goals of formative feedback

Before mentors can stimulate learners to reflect on and learn from their experiences, learners should have information about their performance with respect to those experiences. In other words, learners should receive feedback on their performance in particular situations. In general, formative feedback should address the accuracy of a learner’s response to a problem or task and may touch on particular errors and misconceptions (Shute, 2008), the latter representing more specific or elaborated types of feedback. Formative feedback should also permit the comparison of actual performance with some established standard of performance. The main purpose of feedback is, therefore, to reduce the discrepancy between current and desired practices or understandings (Hattie and Timperley, 2007). In some cases, mentors will provide feedback before the phase of promoting reflection starts. In others, learners have already received the feedback from clinical supervisors or teachers.

Providing formative feedback

Several meta-analyses have found that feedback generally improves learning; however, there is wide variability in its effects and there are gaps in the literature, particularly relating to how task characteristics, instructional contexts, and learner characteristics interact to mediate its effects. In other words, we simply do not know “what feedback works”. Nevertheless, a recent review (Shute, 2008) has given some insights. In Table 13.1, we summarise some important conditions under which feedback is well known to improve learning and performance. In order for feedback to fulfil its purpose, three fundamental questions for the learner need to be addressed (Hattie and Timperley, 2007): “Where am I going?”, “How am I going?”, and “Where to next?”

Table 13.1 Formative feedback guidelines to enhance learning (things to do)

| Prescription | Description |
|---|---|
| Focus feedback on the task, not the learner | Feedback to the learner should address specific features of his or her work in relation to the task, with suggestions on how to improve |
| Provide elaborated feedback to enhance learning | Feedback should describe the what, how, and why of a given problem. This type of cognitive feedback is typically more effective than verification of results |
| Present elaborated feedback in manageable units | Provide elaborated feedback in small enough pieces that it is not overwhelming and discarded. Presenting too much information may not only result in superficial learning but also may invoke cognitive overload |
| Be specific and clear with the feedback message | If feedback is not specific or clear, it can impede learning and frustrate learners. If possible, try to link feedback clearly and specifically to goals and performance |
| Keep feedback as simple as possible (based on learner needs and instructional constraints) | Simple feedback is generally based on one cue. Keep feedback as simple and focused as possible. Generate only enough information to help students and not more |
| Reduce uncertainty between performance and goals | Formative feedback should clarify goals and seek to reduce or remove uncertainty in relation to how well learners are performing on a task and what needs to be accomplished to attain the goal(s) |
| Give unbiased, objective feedback | Feedback from a trustworthy source will be taken more seriously than other feedback, which may be disregarded |
| Promote a “learning” goal orientation via feedback | Formative feedback can be used to alter goal orientation – from a focus on performance to a focus on learning. This can be facilitated by crafting feedback, emphasising that effort yields increased learning and performance, and mistakes are an important part of the learning process |

(adapted from Shute, 2008)

To address the first question, clearly defined goals should be available and learners should have clear understanding of desired practice or competence. Without goals, they are less likely to engage in properly directed action. The second question requires concrete information from an assessment of performance. It is essential that clearly defined indicators of whether a task has been completed are available. The final question informs the learner what actions need to be taken to close the gap between actual and desired performance. Therefore, an action plan is necessary, giving specific information about how to proceed.

How effectively feedback addresses the three questions for learners depends on which aspects of performance are addressed. There are four foci for feedback: the task, the process underlying the task, self-regulation, and the person of the learner.

Feedback on a task, often called corrective feedback or knowledge of results, is the easiest type to give and consequently the most frequently given. It should concentrate on performance of a task rather than the knowledge required to perform it. Feedback that focuses on the process underlying a task encourages a deeper appreciation of performance. Such feedback is relevant to the detection and correction of error and helps learners develop a facility for self-feedback. Feedback that focuses on self-regulation addresses the interplay between commitment, control, and confidence. It addresses the way students monitor, direct, and regulate actions toward learning goals and implies a measure of autonomy, self-control, self-discipline, and self-direction. Learners’ attributions of success and failure can have more impact than actual success or failure. In other words, feedback that does not explain the cause of poor performance or relate poor performance to identifiable circumstances is likely to engender personal uncertainties and decrease performance. It is essential that a supervisor directs feedback to observed performance, while being aware of the impact it has on the learner’s self-efficacy, such that attention is directed back to the task, leading learners to invest more effort in it. Feelings of self-efficacy are important mediators in feedback situations. From their major review, Kluger and DeNisi (1996) concluded that feedback is effective to the extent to which it directs information to enhanced self-efficacy and to more effective self-regulation. Finally, feedback that focuses on the person of the learner usually does not have any educational value. It concentrates on the personal attributes of the learner and does not contain any task-related information, strategies to improve commitment to the task, or a better understanding of self or the task itself. This focus of feedback is usually not very effective. 
Rather, it can have an adverse effect on learners, particularly when negative feedback is given at a personal level.

Emotional reactions to feedback

Published research shows that people are poor at self-assessment (Davis et al, 2006). Self-directed assessment seeking, which can for instance take the form of MSF (see later), may be more accurate. Studies have shown, however, that external feedback from peers and others that is inconsistent with self-perceptions may be discounted and not used to inform one’s self-assessment. Learners who overrate themselves report more negative reactions to feedback and view it as inaccurate (Brett and Atwater, 2001). Negative (emotional) reactions to negative feedback, which may reflect transitory mood states, influence how learners use feedback. Mentors have an important role to play in this process. Emotion is one of the themes inherent within the processes of reconciling, assimilating, accepting, and using external feedback. It is important for mentors to acknowledge emotional reactions during the process of giving feedback (Sargeant et al, 2008).

Multi-source feedback

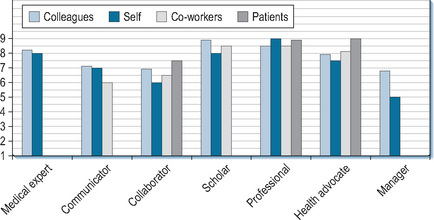

MSF, also known as 360-degree feedback, is a type of assessment that includes feedback on performance from various reviewers. It was originally developed for managers in the business sector in response to an increasing demand for managerial and professional performance in complex environments. MSF has the potential to provide feedback on a broad range of competencies (particularly generic ones) from people who can observe performance directly. Generic competencies in the medical domain include: communication with patients; collaboration and communication with clinical colleagues; aspects of professionalism; and management. A strength of MSF, compared to a single assessment by one assessor, is that several assessments are aggregated, increasing the reliability of the rating. Moreover, observations are (at least ideally) based on a greater period of time than a single encounter, thus improving the validity of the assessment. When used effectively, MSF can generate structured feedback, which can facilitate the different steps of the reflective process. MSF is especially effective at informing learners about their performance (Step 2 of Korthagen’s ALACT cycle) and making them aware of their strengths and weaknesses (Step 3).

MSF is being used on an increasingly large scale in medicine for both summative and formative purposes in undergraduate, postgraduate, and continuing education settings. Residency programmes in the United States and all Foundation Programmes in the United Kingdom use multi-source evaluations to assess their residents and fellows (ACGME, 2009; NHS, 2009). In the Netherlands and the United States, MSF is used to assess undergraduate students during their clerkships. In Canada, a programme has been developed to assess the performance of physicians routinely, with the primary purpose of improving the quality of medical practice. This programme also provides a way of identifying doctors for whom detailed assessment of practice performance or medical competence is needed (Hall et al, 1999).

Research on the impact of MSF on future clinical practice is still in its infancy and relies predominantly on doctors’ self-reports of changes. A randomised controlled trial compared subsequent MSF ratings between one group of residents who received an MSF report and a control group who did not. The trial showed that nurse ratings increased for the MSF group compared to the group who did not receive MSF reports. The difference in change between ratings was statistically significant for communicating effectively with the patient and family (35%), timeliness of completing tasks (30%), and demonstrating responsibility and accountability (26%). Other researchers have shown that between 61% and 72% of doctors report a change in behaviour as a consequence of receiving MSF. For one-third of doctors, however, MSF does not seem to add any value.

Procedure

MSF systems vary in terms of purpose, number of assessors, content of the questionnaires, frequency of evaluation, and mechanism for feeding back to learners. Ideally, MSF is used to gather information from qualified people who have the credibility to judge clinical practice, such as (1) peers familiar with a similar domain of practice; (2) members of the health care team; and (3) patients as the recipients of health care. In this section we will refer to persons who give feedback as “raters”.

With most MSF tools, learners complete a self-assessment questionnaire using the same items as the peer questionnaire. This allows a mentor to contrast the learner’s self-assessment with collated external assessments and signal inconsistencies. Earlier, we wrote that mentors should do this in step 3 of the ALACT cycle: identifying essential aspects (Korthagen et al, 2001). This exercise can be especially helpful for learners who lack insight into difficulties they are having, and learners who lack confidence and rate themselves less well than their colleagues.

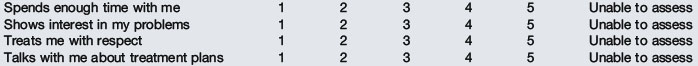

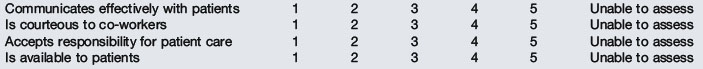

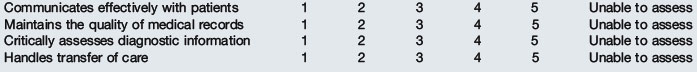

Next, questionnaires are developed for the different categories of rater: for instance, patients, colleagues, and supervisors. Items are included relating to domains best observed by the group in question. For example, patient questionnaires include more items addressing communication, while co-workers’ questionnaires mainly include items about collaboration (examples of questions are given in Box 13.2). In the example in the Box, completion of one questionnaire takes on average 5 minutes. A learner who is going to be assessed using MSF is usually sent a pack containing the rater questionnaires. In some systems, learners receive a password for a web-based system where they can select their raters. Raters are expected to return the completed forms or respond via a web-based system within a couple of weeks.

Box 13.2 Examples of questionnaire items of multi-source feedback (available on www.par-program.org)

Patient Questionnaire

Based on ALL OF YOUR VISITS to your doctor’s office, how do you feel about your doctor’s attitude and behaviour towards you? My doctor:

(1 = strongly disagree, 2 = disagree, 3 = neutral, 4 = agree, 5 = strongly agree)

All ratings are aggregated and compiled in a feedback report that includes mean scores for each reviewer group on all the items, and also tables and graphs to compare learners’ scores with group scores. The total number of respondents is also documented in the report. In many systems, the feedback report contains free text comments as well (see Figure 13.3 for an example of an MSF report).
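As a rough illustration of the aggregation step, the following sketch computes the mean score and the number of ratings for each rater group and item. The data layout, group names, and item names are assumptions made for the example; real MSF systems will differ in how they store and report ratings.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical ratings: (rater group, questionnaire item, score on the 1-5 scale).
ratings = [
    ("patient", "communication", 5),
    ("patient", "communication", 4),
    ("peer", "collaboration", 3),
    ("peer", "collaboration", 4),
    ("peer", "communication", 4),
]

def build_report(ratings):
    """Return the mean score and the number of ratings per (group, item)."""
    scores = defaultdict(list)
    for group, item, score in ratings:
        scores[(group, item)].append(score)
    return {
        key: {"mean": mean(values), "n": len(values)}
        for key, values in scores.items()
    }

report = build_report(ratings)
```

Reporting the count alongside each mean matters because a mean based on one or two ratings says much less than one based on a full group of respondents.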

Web-based systems for the application of MSF are relatively new in medicine. A few countries have implemented them for use with clerks, registrars, and practising doctors. Studies in business have shown that using electronic MSF systems does not influence the consistency of the raters or the feedback scores themselves. It has been found that online feedback mechanisms promote anonymity, which increases learners’ perceptions of trust in the authenticity of their feedback.

Quality of MSF

Validity and reliability

Content validity of MSF is established by mapping questionnaires to one of the frameworks that have been developed for medical students, doctors in training, and fully trained doctors (such as Good Medical Practice in the United Kingdom or CanMeds; Frank et al, 1996). By showing instruments’ ability to discriminate between specialties and levels of experience, studies have also shown that MSF instruments have construct validity (Overeem et al, 2007).

The reliability of assessment instruments concerns their internal consistency and stability (inter-rater reliability, intra-rater reliability, or generalisability). Generally, instruments applied with MSF have reasonable internal consistency, with Cronbach’s alpha varying from 0.83 to 0.98 (Overeem et al, 2007). The generally accepted threshold of reliability for high-stakes judgement is a generalisability coefficient of 0.8. For formative purposes, a generalisability coefficient of 0.7 is appropriate. Achieving this level is influenced by the size of the group of respondents. For example, more patients than nurses or peers are required in order to achieve reliable results. Overall, reasonably reliable results can be achieved with the assessments of 8–12 peers or co-workers and 15–25 patients.
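The two ideas in this paragraph can be made concrete with a short sketch. The first function computes Cronbach’s alpha from a table of ratings using the standard formula. The second uses the Spearman-Brown formula to project how reliability grows with the number of raters; this is a simplification of a full generalisability analysis, and the single-rater reliability of 0.25 is an invented value chosen only for illustration.

```python
from statistics import variance

def cronbach_alpha(rows):
    """Cronbach's alpha for a table with one row per respondent, one column per item."""
    k = len(rows[0])
    item_variances = [variance(column) for column in zip(*rows)]
    total_variance = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)

def projected_reliability(single_rater_reliability, n_raters):
    """Spearman-Brown projection of the reliability of the mean of n raters."""
    r = single_rater_reliability
    return n_raters * r / (1 + (n_raters - 1) * r)

# Hypothetical questionnaire data: 4 respondents rating 3 items on a 1-5 scale.
rows = [
    [4, 5, 4],
    [3, 3, 2],
    [5, 5, 5],
    [2, 3, 3],
]
alpha = cronbach_alpha(rows)

# Assumed single-rater reliability of 0.25 (illustrative only).
with_8_raters = projected_reliability(0.25, 8)
with_12_raters = projected_reliability(0.25, 12)
```

Under these assumed numbers, 8 raters would give a projected reliability of about 0.73 and 12 raters about 0.80, which shows why the required number of respondents depends on how consistently individual raters in a group judge the same learner.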

Rater credibility

Learners receiving MSF are more likely to use the feedback when they perceive their raters to be well informed about their practice. To ensure that credible raters are selected – raters who have been able to observe the learner’s behaviour for an extended period of time – learners should be involved in selecting them. Although it may seem counter-intuitive, there is evidence that this procedure does not distort ratings (Overeem et al, 2007).

One general assumption of MSF is that ratings reflect a rater’s best and specific judgement about a learner and the dimension on which the learner is being assessed. This assumption needs further attention, as researchers have pointed out that peer ratings tend towards leniency to minimise bad feelings. Further, there is evidence that a “halo effect” exists in MSF (Mount and Scullen, 2001), whereby a rater perceives one factor to be of paramount importance and rates the learner based on this one factor. Rater training programmes have been developed to eliminate common rating errors such as the halo effect and rater leniency. Research to date, however, is inconclusive about the effect of such programmes on rater accuracy (Mount and Scullen, 2001). Nevertheless, an important condition for valid and credible feedback is that raters understand what they are supposed to do and what happens with their feedback. Further, raters should be assured of the anonymity and confidentiality of the process so their ratings are credible. In addition, an anonymous feedback procedure is required whereby only mentors can gain access to raters’ identities, and everyone involved must have clear information about the confidentiality and safety of the procedures.

Specificity

Effective assessment feedback is specific in nature. A qualitative study with 15 family physicians in Canada showed that mailed MSF reports, which contained only numerical scores, were often inadequate to point towards useful improvements. Narrative comments in which respondents provide more specific explanations of their ratings and suggestions to improve performance are more informative and satisfactory to learners (Overeem et al, 2010). Research has also shown that recipients of feedback pay more attention to comments than quantitative ratings (Brett and Atwater, 2001). Raters should be encouraged to be constructive rather than destructive when making comments.

Mentoring MSF

Emotional reactions are the main impediment to using raters’ judgements to support personal advancement. Recipients of negative MSF, first timers in particular, go through phases of shock, anger, and rejection of the results before being able to accept them. A decline in subsequent multi-source evaluations has even been described (Brett and Atwater, 2001). Mentors can help MSF recipients through the stages of acceptance, as we have described earlier. Mentors must be especially sensitive to emotional reactions when: (1) feedback is largely negative, (2) incongruity exists between (inflated) self-ratings and ratings from others, (3) feedback concerns personal character traits instead of behaviour, and (4) feedback is judgemental. The utility of MSF for reflection and performance improvement depends enormously on the mentor facilitating the feedback. Mentors should take care not to focus on minutiae or isolated adverse comments in MSF reports. Rather, the discussion of feedback should identify areas of strength and weakness and, very importantly, help the learner identify where developmental work would be useful.

Steps 4 and 5 of the ALACT cycle create an ideal opportunity for goal setting, continuous feedback, and follow-up. Learners can inform people in their working environment about their improvement goals and seek feedback to foster their continuing efforts. Studies have shown that feedback recipients who perceive support from co-workers hold more positive attitudes towards the feedback system and are more involved in job development (Maurer et al, 2002). Table 13.2 overviews the factors that promote successful use of MSF.

Table 13.2 Factors promoting the success of MSF

| Factor | Recommendation |
|---|---|
| Implementation | An anonymous feedback procedure is required whereby only mentors can gain access to ratings. Ensure that everyone involved has clear information about procedures that guarantee confidentiality and safety. Make protected time and resources available |
| Content of MSF | Include narrative comments in the questionnaires. Provide learners with feedback reports that combine statistical data with narrative comments |
| Credibility and specificity of MSF | Provide clear information for raters about the criteria for credible and specific feedback. Let learners choose their raters so they include people who have observed performance |
| Mentoring | Provide mentoring by trained teachers, supervisors, or peers. Ensure that mentors are trained in how to encourage reflection and goal setting, and are sensitive to emotional reactions |
| Follow-up | Focus on the output of an MSF process. Formulating concrete goals for change and arranging follow-up interviews advances the use of MSF for practice improvement |

Portfolios

Portfolios vary in their content and format but, basically, they report on work done, feedback received, progress made, and plans for improving competence. Portfolios may be digital or paper-based and content may be prescribed or left to the students’ discretion. They can hold copies of materials, tests, photographs, observation reports, videotapes, handwritten notes, reports of evaluations, and so forth. Compared to assessment instruments like paper-and-pencil tests, OSCEs, and Mini-Clinical Examinations, portfolios offer an opportunity for assessors to see the wide range of a student’s work and consider the limitations and opportunities of varying performance contexts when making judgements.

Portfolio as a multi-purpose instrument

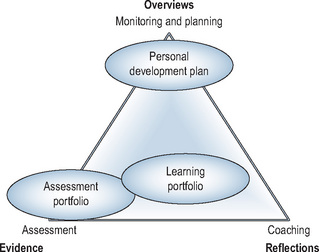

Portfolios are promoted as an excellent instrument for authentic assessment as well as to stimulate reflective thinking. Working on a portfolio can activate reflection, because collecting work samples, evaluations, and other types of illustrative materials compels learners to look back on what they have done and analyse what they have and have not accomplished (ALACT Step 2: Looking back on action). Another way in which portfolios can stimulate reflective thinking is written reflections, the inclusion of which is usually mandatory. Examples are: reflective journals or diaries, reflective essays, mission statements, self-evaluations, and descriptions of steps taken to achieve improvement. In many cases, portfolios are assembled over a long period of time. That is why they can be used as an instrument to support planning and monitoring in professional development (ALACT Step 4: Creating alternative methods of action). One way to do so is to document learning objectives and the trail of related learning activities and accomplishments. Portfolios that are primarily geared to assessment will remain organised around artefacts and other kinds of materials, which provide “evidence” of competencies. Portfolios that are primarily used to monitor and plan students’ development will give overviews centre stage. Portfolios whose primary objective is to foster learning by stimulating learners to reflect on and discuss their development will be organised around learners’ reflections.

Inevitably, these developments have widened the applicability of the label “portfolio” to a broad range of instruments. Some portfolios might as well be labelled “Personal Development Plan” or “Reflective Essay”. Because of that tremendous variety, critical appraisal of the strengths and weaknesses of different systems is advisable before deciding which one to implement in a particular setting. The question to be answered is whether a certain portfolio is fit for its intended purpose. Just like shoes, portfolios come in different shapes and sizes (Spandel, 1997); and just as someone else’s shoes are unlikely to fit comfortably, portfolios tailored to one particular institution may not fit into the educational configuration(s) of another institution. An ill-fitting portfolio will inevitably be discarded sooner or later. The triangle in Figure 13.4 helps clarify the nature of a portfolio to determine whether it is appropriate for its intended purpose. It does so by positioning the portfolio where it is most likely to achieve its intended principal objectives.

Obviously, a portfolio can achieve more than one goal. When it serves a combination of goals, its position in the triangle will shift towards the centre as its strengths are distributed more evenly across evidence, overviews, and reflections. In practice, the majority of portfolios are not situated at one of the corners of the triangle. PDPs are a notable exception.

The use of portfolios in medical education

Since their introduction in medical education in the early 1990s, portfolios have been increasingly used in all stages of the medical education continuum: in undergraduate medical education, postgraduate specialist training, and the continuing medical education (CME) of practising doctors. Portfolios in medicine have been the subject of much educational research (Buckley et al, 2009; Driessen et al, 2007; Tochel et al, 2009), which confirms the theory-based notion that a portfolio has the potential to be a useful instrument to develop and assess competencies that are difficult to develop or assess with other instruments, especially reflective skills or performance in the workplace. However, the success of portfolios is conditional on fulfilment of certain prerequisites (Table 13.3).

Table 13.3 Implementing portfolio learning

| Factor | Recommendation |
|---|---|
| Goals | Clearly introduce the goals of working with a portfolio. Combine goals (learning and assessment) |
| Introducing the portfolio | Provide clear guidelines about the procedure, the format, and the content. Be cautious about problems with information technology |
| Mentoring/interaction | Provide mentoring by either teachers, trainers, supervisors, or peers |
| Assessment | Incorporate safeguards in the assessment procedure, such as intermittent feedback cycles, involvement of relevant resource persons (including the student), and a sequential judgement procedure. Use assessment panels of 2–3 assessors depending on the stakes of the assessment. Train assessors. Use holistic scoring rubrics (global performance descriptors) |
| Portfolio format | Use a hands-on introduction with a briefing on the portfolio’s purpose and procedures. Keep the portfolio format flexible. Avoid being overly prescriptive about the portfolio content. Avoid too much paperwork |
| Position in the curriculum | Integrate the portfolio in other educational activities in the curriculum. Be moderately ambitious for early undergraduate portfolio use |

Mentoring

The single most decisive factor is mentoring. If no provision is made for learner–mentor contacts in which portfolio content can be discussed and feedback given, all the time and effort expended on the portfolio will probably be wasted. First, as previously discussed, many learners are initially reluctant to engage in reflection because its purpose is not self-evident. A teacher, supervisor, or mentor is needed to convince learners that reflection is worthwhile. Second, reflection does not come naturally to most learners. As stated earlier, mentors have a supportive role to play in the various steps of the reflective process.

A systematic review showed that portfolio processes often fail to include adequate support and mentoring despite evidence of its positive impact (Driessen et al, 2007). There were also examples of portfolios being used without training mentors or giving them time to perform their mentoring task properly. It was concluded that, although mentoring is possible without a portfolio, it is hardly possible to use a portfolio to promote reflection without mentoring (Driessen et al, 2007; van Tartwijk and Driessen, 2009).

The format of portfolios

Another important point is that a portfolio must be “lean”. Both learners and mentors have an aversion to large portfolios whether on paper or screen. There are too many instances of portfolios comprising a huge collection of materials, which indicates lack of clarity on the part of teachers as well as learners about the objectives. Because of such uncertainty, learners are sometimes advised to produce extensive portfolios, but there are good reasons to require selective and purposeful collection of materials. All parties involved stand to gain from portfolios that are tailored to their intended purposes so designers should create portfolios that are fit for the objectives to be achieved. When summative assessment is the sole objective of a portfolio, students can be asked to include only materials related to the competencies to be assessed and mark which parts of the portfolio have special relevance to which specific competencies (so-called captions). In that way, assessors do not have to peruse the full contents but can focus on what is relevant to the assessment.

When the portfolio’s objective is to promote reflection, an open structure based on clear guidelines is preferable. When portfolio content is too rigidly prescribed, however, reflections tend to become superficial and students may even make up topics. A study in teacher training showed that trainees reflected more superficially on topics that were less important for their day-to-day practice (Mansvelder-Longayroux, 2006). It is therefore essential that learners have opportunities to adjust the content of portfolios to the experiences they have in day-to-day learning practice. On the other hand, it is important that students, for whom a portfolio is new, are offered support and clarity as to what is expected from them. This can be achieved by organising a portfolio along the lines of professional roles or a competency profile (e.g. the CanMeds roles: Frank et al, 1996), supported by guiding questions. Further guidance can be offered by a well-informed mentor, who introduces the portfolio and explains the objectives and how they can be attained.

Position in the curriculum

A rigidly predefined structure becomes even more counterproductive when learners do not have enough new experience on which to reflect. Since reflection starts by looking back on action, learners must encounter enough new events to provide objects of reflection. In the absence of new events, students reflect for the sake of reflecting and may resort to fantasy. In the early stages of medical school, the curriculum is mainly theoretical and students have few real practical experiences. Therefore, a portfolio used in the early undergraduate curriculum years should not be too ambitious.

Ever since the introduction of portfolios, a controversial issue has been whether or not it is acceptable to have a single portfolio serving both summative assessment and reflection. An argument against this dual function is that assessment jeopardises the quality of reflection and detracts from the portfolio’s value in supporting effective mentoring. Learners may be reluctant to expose their less successful efforts or reflect on strategies for addressing weaknesses if they believe they are at risk of having “failures” turned against them in summative assessments. Unassessed portfolios, on the other hand, do not “reward” learners for the time and energy they have invested in them, which leads to them taking the portfolio and associated learning activities less seriously. We favour the middle ground and agree with the observation by Snyder and colleagues that: “The tension between assessment for support and assessment for high stakes decision making will never disappear. Still, that tension is constructively dealt with daily by teacher educators throughout the nation” (Snyder et al, 1998). Striking the right balance between support and judgement is the challenge facing mentors with whom students talk about their portfolios.

Assessment

Portfolios are seen by many as subjective and not suitable for high-stakes decisions. Early reports suggested we needed to temper our expectations for achieving strict psychometric criteria of validity and reliability because portfolios are individualistic and non-standardised. Low inter-rater reliabilities in portfolio assessment were a particular cause for concern. Educational developers responded by standardising assessment procedures to an excessive degree, with concomitant drawbacks. It was Snadden (1999) who first asked whether we should continue trying to fit non-standardised portfolios to psychometric criteria. Webb et al (2003) came up with the idea of using criteria derived from qualitative research. Instead of a quantitative psychometric approach, which looks at consistency across repeated assessments, a qualitative approach would add information to the judgement process until saturation was reached. Assessment procedures based on qualitative research criteria can incorporate safeguards, such as intermittent feedback cycles, involvement of relevant resource persons (including learners), and a sequential judgement procedure. It is also recommended that portfolio assessment procedures make use of holistic scoring rubrics, small groups of trained assessors, and specific rater training, including benchmarking and discussion between assessors.

Some final thoughts

The clinical workplace is an indispensable learning environment for the starting doctor. There, doctors learn to put into practice all the knowledge and skills they acquired at medical school and merge them into what one could refer to as competence. Research findings consistently show that teacher characteristics such as experience (Rivkin et al, 2005), scores on certification exams (Ferguson, 1991), and teacher training (Angrist and Lavy, 2001; Steinert et al, 2006) have a major impact on student learning. The strategies and tools available to teachers are also very important. In this chapter, we have introduced some of the tools and strategies the clinical teacher can use in the role of a mentor. Teaching tasks, however, have to compete with other tasks such as patient care and, sometimes, research. An investment of teaching time is an absolute requirement for successful use of the instruments we have presented.

Implications for practice

Self-assessment through “self-directed assessment seeking” and reflection, particularly “reflection-on-action”, are important elements of learning from practice. Routines to facilitate learning from practice, such as the UK NHS appraisal process and the ALACT model for cyclical CPD, depend upon the input of a mentor who can support these processes since there is good evidence that, generally speaking, people are poor at self-assessment and many do not naturally reflect. A good mentor creates a safe environment in which to ask challenging questions of the learner, using techniques such as confrontation and generalisation. They support the learners’ reflection, whether focused on means, goals, or ethical and moral perspectives. They provide constructive feedback, pointing the learner towards sources of information about their performance, facilitate goal-setting, possibly through creation of a SMART PDP, and help with follow-up including providing reminders about proposed actions.

Although research has not yet fully answered the question “What feedback works?”, guidelines for providing formative feedback have been described. These help the learner address the key questions, “Where am I going?”, “How am I going?”, and “Where to next?”, and may focus on the task, the process, self-regulation, or the person. Generally, feedback that focuses on the person has little useful value and, if critical or negative, may be damaging. There is always an emotional component to people’s reactions to feedback and mentors must recognise and work with this.

MSF has shown potential to provide people with useful information about a wide range of competencies, including strengths and weaknesses. Validity and reliability can be enhanced through procedures such as blueprinting and ensuring an appropriate number of respondents, and factors promoting success of MSF have been identified. The structured feedback provided can support reflection and action, and although research on its effect on future performance is still at an early stage, MSF seems to be acceptable to users. Mentors play an important role in helping people make the most of the process.

Portfolios are effective instruments both for promoting reflection (in support of professional development), and for assessment, although there are potentially conflicting issues that may jeopardise use of a single portfolio for both purposes. A wide variety of formats and approaches have been described and it is important to critically appraise strengths and weaknesses in the context of intended purpose before adopting a particular model. Notwithstanding the differences, the essential element in all portfolios is the documentation and collation of learning, usually in the form of written artefacts. The content of a portfolio must be kept to a minimum, and learners need support in how to use it. Again, the support of a mentor appears to be the most important factor in a portfolio’s effectiveness.

ACGME. Homepage of the Accreditation Council for Graduate Medical Education. 2009. Available at: http://www.acgme.org/ Accessed October 16

Angrist J.D., Lavy V. Does teacher training affect pupil learning? Evidence from matched comparisons in Jerusalem public schools. J Labor Econ. 2001;19(2):343-369.

Boylan O., Bradley T., McKnight A. GP perceptions of appraisal: professional development, performance management, or both? Br J Gen Pract. 2005;55:544-545.

Bransford J., Brown A.L., Cocking R.R. How people learn: brain, mind, experience, and school. Washington DC: National Academy Press, 2000. Expanded ed

Brett J.F., Atwater L.E. 360 degree feedback: accuracy, reactions, and perceptions of usefulness. J Appl Psychol. 2001;86(5):930-942.

Buckley S., Coleman J., Davison I., et al. The educational effects of portfolios on undergraduate student learning: a Best Evidence Medical Education (BEME) systematic review: BEME Guide No. 11. Med Teach. 2009;31(4):282-298.

Conlon M. Appraisal: the catalyst of personal development. BMJ. 2003;327:389-391.

Davis D.A., Mazmanian P.E., Fordis M., et al. Accuracy of physician self-assessment compared with observed measures of competence: a systematic review. JAMA. 2006;296(9):1094-1102.

Driessen E.W., van Tartwijk J., Van der Vleuten C.P.M., et al. Portfolios in medical education: why do they meet with mixed success? A systematic review. Med Educ. 2007;41(12):1224-1233.

Driessen E.W., van Tartwijk J., Dornan T. The self-critical doctor: helping students become more reflective. BMJ. 2008;336:827-830.

Ericsson K.A. The influence of experience and deliberate practice on the development of expert performance. In: Ericsson K.A., Charness N., Feltovich P.J., et al, editors. The Cambridge handbook of expertise and expert performance. New York: Cambridge University Press; 2006:683-704.

Ertmer P.A., Newby T.J. The expert learner: strategic, self-regulated, and reflective. Instr Sci. 1996;24(1):1-24.

Eva K.W., Regehr G. “I’ll never play professional football” and other fallacies of self-assessment. J Contin Educ Health Prof. 2008;28(1):14-19.

Ferguson R.F. Paying for public education: new evidence on how money matters. Harvard J Legis. 1991;28(2):465-498.

Frank J., Jabbour M., Tugwell P., et al. Skills for the new millennium: report of the societal needs working group. Ottawa: Royal College of Physicians and Surgeons of Canada, 1996.

General Medical Council. Guidance on good medical practice. 2006. http://www.gmc-uk.org/guidance/good_medical_practice/index.asp. Accessed September 23, 2009

Hall W., Violato C., Lewkonia R., et al. Assessment of physician performance in Alberta: the physician achievement review. CMAJ. 1999;161:52-57.

Hattie J., Timperley H. The power of feedback. Rev Educ Res. 2007;77(1):81-112.

Hatton N., Smith D. Reflection in teacher education: towards definition and implementation. Teach Teach Educ. 1995;11(1):33-49.

Jowett V., Stead R. Mentoring students in higher education. Educ Train. 1994;36:20-26.

Kluger A.N., DeNisi A. The effects of feedback interventions on performance: a historical review, a meta-analysis, and a preliminary feedback intervention theory. Psychol Bull. 1996;119(2):254-284.

Kolb D.A. Experiential learning: experience as the source of learning and development. Englewood Cliffs, NJ: Prentice Hall, 1984.

Korthagen F.A.J., Kessels J., Koster B., et al. Linking theory and practice: the pedagogy of realistic teacher education. Mahwah, NJ: Lawrence Erlbaum Associates, 2001.

Lewis M., Elwyn G., Wood F. Appraisal of family doctors: an evaluation study. Br J Gen Pract. 2003;53:454-460.

Loftus E.F. Our changeable memories: legal and practical implications. Nat Rev: Neurosci. 2003;4:231-234.

Mansvelder-Longayroux D.D. The learning portfolio as a tool for stimulating reflection by student teachers. Leiden: Leiden University, 2006.

Maurer T.J., Mitchell D., Barbeite F.G. Predictors of attitudes toward a 360-degree feedback system and involvement in post-feedback management development activity. J Occup Organ Psychol. 2002;75:87-107.

Mount M.K., Scullen S.E. Multisource feedback ratings. In: London M., editor. How people evaluate others in organisations. Mahwah, NJ: Lawrence Erlbaum Associates, 2001. Chapter 7

NHS. NHS appraisal toolkit. 2001. Available from https://www.appraisals.nhs.uk/menu.html Accessed October 15, 2009

NHS. Homepage of the Foundation Programme. 2009. Available from www.foundationprogramme.nhs.uk Accessed October 16

Overeem K., Faber M.J., Arah O.A., et al. Doctor performance assessment in daily practice: does it help doctors or not? A systematic review. Med Educ. 2007;41(11):1039-1049.

Overeem K., Lombarts M.J.M.H, Arah O.A., et al. Three methods of multisource feedback compared. A plea for narrative comments and co-workers’ perspectives. Med Teach. 2010;32(2):141-147.

Rivkin S.G., Hanushek E.A., Kain J.F. Teachers, schools, and academic achievement. Econometrica. 2005;73(2):417-458.

Sargeant J., Mann K., van der Vleuten C.P.M., et al. Directed self-assessment: practice and feedback within a social context. J Cont Educ Health Prof. 2008;28(1):47-54.

Schön D. The reflective practitioner: how professionals think in action. New York: Basic Books, 1983.

Shute V.J. Focus on formative feedback. Rev Educ Res. 2008;78(1):153-189.

Smither J.W., London M., Flautt R., et al. Can working with an executive coach improve multisource feedback ratings over time? A quasi-experimental field study. Pers Psychol. 2003;56:23-44.

Snadden D. Portfolios – attempting to measure the unmeasurable? [Commentary]. Med Educ. 1999;33(7):478-479.

Snyder J., Lippincott A., Bower D. The inherent tensions in the multiple uses of portfolios in teacher education. Teach Educ Q. 1998;25(1):45-60.

Spandel V. Reflections on portfolios. In: Phye G.D., editor. Handbook of academic learning: construction of knowledge. San Diego: Academic Press; 1997:573-591.

Steinert Y., Mann K., Centeno A., et al. A systematic review of faculty development initiatives designed to improve teaching effectiveness in medical education: BEME Guide No. 8. Med Teach. 2006;28(6):497-526.

Tochel C., Haig A., Hesketh A., et al. The effectiveness of portfolios for post-graduate assessment and education: BEME Guide No. 12. Med Teach. 2009;31(4):299-318.

van Tartwijk J., Driessen E.W. Portfolios for assessment and learning: AMEE Guide No. 45. Med Teach. 2009;31(9):790-801.

Webb C., Endacott R., Gray M.A., et al. Evaluating portfolio assessment systems: what are the appropriate criteria? Nurse Educ Today. 2003;23:600-609.