11 Learning and teaching clinical procedures

Contextualising learning See main Glossary, p 338.

Hybrid simulation The seamless linking of simulators (e.g. bench top urinary catheter model or virtual reality endoscopy simulator) with simulated patients.

SP (simulated patient) See main Glossary, p 341.

Technical skills The psychomotor and dexterity skills, and the associated knowledge, required for performing procedures.

Outline

This chapter focuses on educational issues around learning, teaching, and assessing clinical procedures. We limit our scope to procedures carried out on conscious patients and exclude surgical operations. We start with a brief historical overview, reviewing key drivers which are changing the landscape of clinical care and health care education. We identify changes to procedural skills training, highlighting the move from apprenticeship models to competency-based curricula, and shifts from doctor-focused and task-focused care to patient-centred and team-based approaches. We outline themes in contemporary medical curricula, framing the emergence of skills centres as a central factor in the teaching and assessment of procedural skills. We summarise procedural skills assessments, including the simulation-based objective structured clinical examination (OSCE), the objective structured assessment of technical skill (OSATS), and the direct observation of procedural skills (DOPS) in workplace-based assessment. The next section outlines selected theories relevant to skills-based teaching. Here, our aim is to raise awareness of the extensive literature which bears upon our topic, without providing a comprehensive critique. Much of the chapter deals with simulation, whose role in procedural skills training is key but whose uncritical acceptance has led to problems. A critique of current approaches identifies their key limitations. From this, we put forward our own ‘learner-centred, patient-focused’ approach to procedural skills training, using hybrid simulation. We use the integrated procedural performance instrument (IPPI) as an example of how to weigh the strengths and limitations of innovative approaches. We conclude by proposing a ‘layered learning’ approach, which combines scenario design, hybrid simulation, and contextualised training within a learner-centred framework that includes patients as they would be encountered in real clinical practice.

Introduction

Medical education has witnessed many upheavals during its long history, and recent years have been characterised by continual flux (Calman, 2007; Ludmerer, 1999). The twentieth century saw a series of profound changes, punctuated by influential reports (Flexner, 1910; General Medical Council, 1947, 1957, 2003; Medical Education Committee of the British Medical Association, 1948). From the perspective of procedural skills, the shift from apprenticeship to competency-based programmes has been especially influential.

The historical apprenticeship approach to learning and teaching clinical procedures

In the apprenticeship model, medical students went on a prolonged ‘journey’ stretching from undergraduate training into their postgraduate years. This extended time frame was crucial because it allowed them to absorb a particular way of practising medicine, heavily based on their master’s style. This approach flourished in an era that has now passed, when patients commonly spent days or weeks in hospital for routine procedures and a culture of learning ‘on’ patients was widely accepted. In the United Kingdom, this took place within consultant-led ‘firms’, which were stable and relatively independent, and where medical students were treated as inexperienced but legitimate members of a community of practice (Lave and Wenger, 1991; Wenger, 1998). Procedural skills (such as taking blood, intravenous cannulation, and inserting urinary catheters) formed part of a learner’s routine duties; they were learnt under the tutelage of junior doctors and integrated within clinical care. Again, the culture of the time meant that patients generally acquiesced in a system where being ‘practised on’ was the norm.

Apprenticeship under pressure

The contemporary climate is radically different. The number of medical students has greatly increased, placing further pressure on dwindling opportunities for clinical experience. At the same time, the ethical climate has changed profoundly, and it is no longer acceptable for unskilled novices to practise on patients. Progressive restrictions on working hours have fragmented the traditional ‘firm’ structure, forcing a change towards shift systems. And the dizzying pace of technological development means that patients no longer spend many days in hospital but are processed through specialist units as rapidly as possible. As a result, the slow process by which learners gradually acquired a range of procedural skills is a thing of the past. There is growing concern that a mismatch between book knowledge and procedural skills experience leaves graduating doctors lacking confidence in what they can do. Many doctors now graduate with minimal real-world clinical experience (Boots et al, 2009; Cave et al, 2007; Wu et al, 2008), which makes this mismatch even more challenging. Contemporary developments around revalidation and relicensing of registered practitioners make this debate relevant to professionals at all stages of their careers.

Competency-based education

The notion of competencies has exerted a powerful influence on educational thinking. The move towards competency-based programmes is, in part, a response to the changing influences described above. Such programmes set out specified learning outcomes, stating explicitly what learners are expected to know and be able to do at graduation, and providing learners at the start of their medical education with a clear sense of where they are going (General Medical Council, 2003). In theory at least, making it clear what competencies are expected should help new doctors meet the demands of their roles in a complex health service. The notion of competency-based education has particular relevance for clinical procedures, which are ‘observable’ and therefore ‘measurable’. However, defining competencies is more difficult than might at first appear. One particular challenge is accounting for the variability of clinical practice. Although a new doctor might be expected to perform a procedural skill such as suturing competently, outcomes are often written in broad terms and assumed to be generic. Yet suturing varies in complexity and is affected by many factors (the depth and site of the wound; the availability of suitable equipment and facilities; and the characteristics of the individual patient). ‘Generic’ skills do not necessarily transfer across different contexts. Across higher education, a key driver of competency-based education is the production of ‘workforce-ready’ graduates. A further limitation of this approach is its focus on achieving minimum standards of safe practice. Although baseline competence is a sine qua non, there is a strong case for pursuing excellence rather than minimal competence (Tooke, 2008). Finally, in competency-based education, there is a risk that a long list of individual competencies may overshadow the ‘whole’ professional practitioner (de Cossart and Fish, 2005).
In clinical practice, the whole is much greater than the sum of its parts. A reductionist approach can result in educators becoming over-focused on fine-grained components, causing learners and teachers to lose sight of the bigger picture.

Fragmentation of curricula

At the same time, there is increasing emphasis on professionalism, patient safety, and a range of other attributes which medical students are expected to develop. In the United Kingdom, these learning outcomes are codified by the General Medical Council, and all medical schools have to adopt them (Box 11.1). One consequence of the competency movement has been the splitting off of procedural skills into a separate educational stream. Medical curricula are now often built around themes, one of which is ‘clinical skills’. Learners are expected to develop a repertoire which is aligned with the GMC’s specifications. The learning and teaching of procedural skills has acquired an identity of its own. Although this has helped focus attention on clinical skills training, such training risks becoming removed from the broader context in which the skills are performed in clinical practice. We will return to this tension between fragmented skills and whole-patient care in a later section. Factors shaping procedural skills training are summarised in Box 11.2.

Box 11.1 Clinical and practical skills the UK General Medical Council expects of new doctors

Clinical and practical skills

Communication skills

Graduates must be able to communicate clearly, sensitively, and effectively with patients and their relatives, and colleagues from a variety of health and social care professions. Clear communication will help them carry out their various roles, including clinician, team member, team leader, and teacher.

Graduates must know that some individuals use different methods of communication, for example, Deafblind Manual and British Sign Language.

Graduates must be able to do the following:

Students must have opportunities to practise communication in different ways, including spoken, written, and electronic methods. There should also be guidance about how to cope in difficult circumstances. Some examples are listed as follows:

http://www.gmc-uk.org/education/undergraduate/undergraduate_policy/tomorrows_doctors.asp#Clinical%20and%20practical%20skills

Teaching of procedures

Simulation is steadily gaining ground as a means of learning procedural skills in a safe setting. In the United Kingdom, skills centres, which provide a range of bench top models, are now a part of every university and most hospitals. Although most models are fairly unsophisticated, a spectrum of simulators is available, with highly complex mannequins and virtual reality systems becoming more widespread. The high cost of the more complex simulators often puts them beyond the reach of undergraduate training. See the following references for an overview of simulators in procedural skills (Bradley, 2006; Kneebone and Bello, 2005; Maran and Glavin, 2003; Vozenilek et al, 2004; Windsor, 2009). The aim is that all learners should receive an initial introduction to the techniques of clinical procedures away from actual patients, in a setting where they can master the basics and make mistakes without causing harm. Many current simulators are fairly crude representations of body parts, such as models for practising venepuncture or urinary catheterisation. The focus is on the equipment and techniques of each procedure, and the patient is ‘absent’. Such decontextualised learning runs the risk of oversimplification, implying that there is always one ‘correct’ way to perform any procedure. Simulation of this kind is becoming widely accepted as an alternative to learning at the bedside, developing its own identity as an educational approach. This has obvious benefits, such as allowing novices to learn and practise the basics of a practical procedure in a protected setting where undivided attention can be given to the learner and where patient care is not in jeopardy. But there is a danger that simulation may be seen as an alternative rather than an adjunct to clinical practice.

Assessment of procedures

In former times, there was little or no assessment of procedural skills within undergraduate education. There was a widespread assumption that new graduates would have acquired the skills they needed for clinical practice by the time they finished their course. As evidence to the contrary began to emerge, simulation began to be used increasingly for assessment of procedural skills, providing a proxy for clinical observation. One of its attractions was the ease with which components of a procedural skill could be isolated and examined. Several approaches are in use:

Objective structured clinical examination

OSCEs have been widely used for over three decades for formative and summative assessment. There are several excellent descriptions of the process and reports of validity and reliability (Harden, 1990; Harden and Gleeson, 1979; Hodges, 2003; Regehr et al, 1999; Reznick et al, 1998; Townsend et al, 2001). Although OSCEs vary, learners usually work through a series of stations, in each of which they perform a task. This may form part of a procedure, examination, communication, or other clinical activity. The task may involve a real patient or a simulated patient (SP), simulator kit, medical equipment, patient records, or other relevant items. The format is formal, with whistles and bells signalling when learners start and finish stations. An assessor (examiner) is present at each station, recording judgements on paper forms. SPs may also be asked to make judgements (Cleland et al, 2009; Homer and Pell, 2009; Whelan et al, 2005). Feedback to learners often depends on the purpose of the OSCE, with high-stakes assessments usually offering limited feedback while formative assessments provide a richer learning experience (Nestel et al, in press). The number and length of stations vary widely, with published examples ranging from 4 to 30 stations, each lasting between 5 and 20 minutes. Although the literature contains many descriptions of OSCEs, there are few references to an underpinning theoretical framework. Tasks are often reduced to component parts, which are then used as representations of the whole. On Miller’s pyramid for assessing clinical competence (Miller, 1990), the OSCE addresses the ‘shows how’ of isolated tasks. Although this may be an important component of mastering technique, we argue that it is also important for learners to practise, and be assessed on, any skill in the context in which it will be used.

Objective structured assessment of technical skill

This influential approach, developed by Reznick’s Toronto group, addresses the technical aspects of surgical procedures (Faulkner et al, 1996; MacRae et al, 2000; Martin et al, 1997; Regehr et al, 1998; Reznick and MacRae, 2006; Szalay et al, 1998). A series of procedural stations (using bench top models or human cadavers) allows learners to be directly observed as they carry out operative procedures. A combination of checklists and global rating scales is used to make judgements about the skills observed. The use of global assessments by experts has proved especially valuable, because expert judgements can accommodate variation in individual technique (the failure to do so is a major criticism of the reductionist OSCE approach). OSATS has been extensively validated and is widely used for assessing ‘technical’ surgical skill.

Direct observation of procedural skills

There has been a major shift within postgraduate education towards assessment in the workplace (Norcini, 2003; Norcini and Burch, 2007). For procedural skills, this has been through DOPS, where doctors are observed and assessed as they conduct procedures on selected patients (Box 11.4). The Foundation Programme for junior doctors in the United Kingdom is usually undertaken in the first 2 years after medical school (The Foundation Programme, 2010a). Foundation doctors are expected to undertake a series of work-based assessments covering a spectrum of knowledge, attitudes, and skills relevant to practising as a junior doctor. The assessments include case-based discussions, observations of junior doctors performing clinical examinations and procedural skills, and multi-source feedback. For procedural skills, a nominated assessor observes the junior doctor performing a selected procedure on a patient (Box 11.3). In real time, the assessor uses a six-point rating scale on a generic rating form, the DOPS form (The Foundation Programme, 2010b), which consists of 11 items (Box 11.4). Foundation doctors arrange their own assessments, selecting which procedure they will be assessed on from the Foundation Programme curriculum and choosing a suitable patient and assessor. This work-based assessment represents a significant shift in procedural skills education for junior doctors, ensuring that actual practice, the highest level (‘does’) of Miller’s pyramid, is observed. The DOPS form provides a framework relevant to many procedures, encouraging systematic thinking about skills and promoting dialogue between learner and assessor with the intention of supporting learning. A key aim is to encourage junior doctors to take responsibility for managing their learning in the clinical environment.

Box 11.3 The procedural skills doctors in the UK Foundation Programme are expected to undertake in workplace-based assessments

There are, of course, limitations. Although doctors are encouraged to sample widely from possible procedures, assessors, and patients, it is likely that a narrow sample is often chosen. It seems natural, for example, that doctors being assessed should choose to demonstrate a straightforward procedure on a co-operative patient. Doctors’ responses to challenging situations and behaviours are therefore rarely captured. Yet it is those very challenges that become crucial in clinical care. There are anecdotal reports of inaccurate completion of forms, unwillingness of assessors to report underperformance, and difficulties using the observation as a learning opportunity. The last of these reflects limited time, limited understanding of the purpose of the assessment, and limited teaching skill. However, the Foundation Programme provides a formal curriculum structure in what had often been an unstructured and service-oriented experience for junior doctors, providing first steps towards supportive monitoring of doctors’ continuing professional development in a diverse health service.

Theory

In order to make rational choices about how best to learn, teach, and assess procedural skills, it is necessary to have an awareness of relevant learning theory. Such theories have emerged from many disciplines, including education, psychology, sociology, philosophy, and anthropology. Chapter 2 is wholly devoted to learning theory, but here we identify theories which we believe are especially relevant to a discussion of procedural skills. We do not attempt to be comprehensive or to provide a formal critique of these positions. Learning theories are often classified as behaviourist, cognitivist, or constructivist. As with most classifications, the categories overlap. Behaviourism is mainly concerned with learning manifested as changes in behaviour. The environment is seen as critical for shaping learning, and issues of contiguity (proximity of learning to application) and reinforcement (rewards or punishments) are central to promoting learning. Unlike behaviourism, where factors external to the individual are seen as of primary importance, cognitivism emphasises an individual’s capacity to influence learning through memory (information processing), prior knowledge, and experience. Constructivism also focuses on individuals but not on ‘memory’. It emphasises the ways in which individuals create new knowledge for themselves by engaging with others through talk, activities, and problem-solving. It also emphasises the social environment within which learning occurs.

Expertise

It is clear that expertise does not just ‘happen’. Indeed, it has become a mantra that its acquisition in any field requires sustained deliberate practice over many years. Ericsson’s pivotal work (Ericsson, 2004, 2005) has done much to highlight the crucial role of practice in the development of elite performance across a range of domains and disciplines. His work suggests, for instance, that elite international performers have typically engaged in around 10,000 hours of deliberate practice – not just doing, but doing with the express intention of improving. Traditional models of expertise, especially around procedural tasks, are based on a sequential process with defined stages (Dreyfus and Dreyfus, 1986; Fitts and Posner, 1967). Although the details vary according to which model is used, the learner progresses from a cognitive stage (learning what is to be done), through an associative stage (learning how to do it), and finally to an automatisation stage (where the task falls from conscious awareness). Although this provides helpful insights into the acquisition of surgical skill, it leaves questions unanswered. The final stage of expertise demands particular attention. Cognitivists describe automaticity as the effortless completion of tasks, where the learner no longer has to think about the steps in completing the task or procedure. This reduces demands on their cognitive capacity and enables them to deal with other stimuli. The concept is often illustrated with driving skills. For a newcomer to driving, simply steering the car at low speed can be completely overwhelming, let alone accelerating, braking, and indicating. Reversing and parking may seem far too complex. With practice, however, these component skills come together, allowing the driver to process the broad range of stimuli with which they are confronted. In clinical practice, learners commonly block out seemingly obvious cues as they focus on completing the steps of a clinical procedure they are learning.
If the procedure is taught ‘out of context’, then the learner does not have an opportunity to rehearse the skill as it might be performed in real practice, surrounded by the range of factors that can impinge on the clinician’s performance.

An alternative framing is the distinction between routine and adaptive expertise. Routine experts become good at performing the same task over and over again. Adaptive experts, on the other hand, remain alive to the complexities of their setting and continually challenge themselves to come up with creative solutions to new circumstances. Although routine expertise is essential, especially for repetitive tasks, we must not lose sight of the need for adaptive expertise, which is demanded by the real world, where the unexpected is the norm. According to Bereiter and Scardamalia, adaptive experts reinvest their freed-up attentional resources into progressive problem-solving, setting out to find situations which go beyond what they already know (Bereiter and Scardamalia, 1993, 2003; Scardamalia and Bereiter, 2003). As they become more comfortable with tasks which initially required all their attention, they are able to extend their learning in different directions. Returning to the motoring parallel, the novice’s attention is at first wholly absorbed by trying to co-ordinate key controls and functions. As these become more familiar, the driver can concentrate more on managing the traffic and learning to read the road. However, once this in turn becomes second nature (automatised), most people use their freed-up attentional resources to do things unrelated to driving, such as listening to the radio or talking to other people in the car. But those who wish to become professional drivers channel these attentional resources into extending their range of driving skills rather than dissipating them in other activities. They seek out ways to corner at speed without losing control, to recover from a skid, and so on. In terms of clinical expertise, any educational framework should allow learners to continually develop and hone their skills at the edges of their existing competence, aiming to achieve excellence.

Experiential learning

There are two ways of thinking about ‘experiential learning’. One relates to deliberately providing learners with an experience (learning activity) which enables them to gain knowledge and skills. The second relates to learning which occurs simply as the result of ‘living’. Both are important, but it is the former which teachers can influence directly. Kolb and Fry (1975) describe a learning cycle which individuals may enter at any point. Key waypoints include ‘concrete experience’ (learner does something that produces an outcome), ‘observation and reflection’ (learner makes sense of what they have done, especially the particular circumstances that led to the outcome), ‘forming abstract concepts’ (learner extracts key elements of the action and the outcome to predict what might happen next time), and ‘testing in new situations’ (learner tests their prediction). This cycle is continuous and allows an individual to build a progressive repertoire of knowledge and skills. It follows that the more experiences learners have, the more likely they are to learn. However, we know that experience is only one of the variables that influence learning. The cycle has obvious application to teaching and learning procedural skills, especially training that provides opportunities for ‘concrete experiences’ in simulated settings.

Reflection

Schön’s concepts of reflection-in-action (immediate ‘thinking on your feet’) and reflection-on-action (later analysis of actions in the light of outcome, prior experience, and new knowledge) describe the responses of practitioners to unexpected events (Schön, 1983, 1987a,b). Schön argued that practitioners seek to place new and unexpected experiences within a personal framework by identifying similar past experiences, and then consider possible outcomes as they select new actions. Because reflection-on-action takes place after the event, it is obviously a process that peers and teachers can facilitate, whereas reflection-in-action requires an immediate response, especially in emergency clinical situations. Although Schön’s approach has been criticised by some theorists, reflection-on-action is widely embodied in training programmes (e.g. portfolios and critical incident reports). Much literature focuses on what teachers know and how they might impart their knowledge to learners. But of course there is more to learning than the acquisition of propositional knowledge or psychomotor skills.

Boud et al extend Schön’s concept of reflection-on-action, highlighting the importance of teachers working with learners’ experiences. Boud argues that it is especially important to explore adult learners’ experiences in order to help locate new knowledge and skills within their breadth of experience (Boud et al, 1996). Boud describes a ‘critical reflective’ approach which is ‘context conscious’. ‘Critical reflection’ promotes and values both learning content and process. This approach includes both cognitive and affective elements of learning. In order to locate new knowledge and skills in a learner’s experience, the process of learning must be active. The teacher designs the learning experience to make use of what the learner brings to and takes from the interaction. The teacher also acknowledges the role of the environment in which learning takes place (the ‘learning milieu’). Vicarious and experiential learning merge imperceptibly, and ‘observing’ and ‘doing’ both offer potential value in supporting learning. Another feature of Boud’s approach is that it is highly structured, addressing three elements: returning to the experience, attending to feelings, and then re-evaluating the experience. In re-evaluation, he further describes:

Vygotsky and the zone of proximal development

A useful framework for simulation-based learning is provided by Vygotsky (1978; Wertsch and Sohmer, 1995). Working with young children in the early twentieth century, he identified the critical role of social interaction in learning. Although formulated decades before simulation became established, Vygotsky’s notion of the zone of proximal development (ZPD) is useful in conceptualising how learners gain and absorb skills. The ZPD highlights the importance of learning supported by peers and what he termed ‘more knowledgeable others’. Vygotsky defines it as:

the distance between the actual development level as determined by independent problem solving and the level of potential development as determined through problem solving under adult guidance or in collaboration with more capable peers.

The ZPD is therefore an intermediate zone between what learners can do on their own and what they cannot yet do even with help. Here, the role of a teacher is crucial because guidance enables learners to gain and internalise knowledge and skills for themselves. The zone of current development is where the learner currently ‘resides’ and where tuition can move learning forward. From there, the learner can move with assistance from a ‘more knowledgeable other’ into their ZPD. From a contemporary standpoint, the ZPD can be thought of as a ‘learning space’, populated not only by teachers but also by educational resources such as simulation and e-learning (Boud et al, 1996; Schön, 1983, 1987a).

Several theorists have extended Vygotsky’s work, developing the importance of instructional conversation between the learner and teacher. Bruner described the concept of ‘scaffolding’, where it is as important for teachers to know when to step back from supporting learners as it is to provide that support (Bruner, 1986, 1990, 1991; Wood, 1998). Tharp and Gallimore point out that learning is not static but prone to decay, and that when skills are lost, the learner must loop recursively back through earlier phases (Tharp and Gallimore, 1988, 1991).

Communities of practice

The ZPD focuses on learning by individuals but clinicians do not learn or function in isolation. Lave and Wenger’s work on communities of practice and learning highlights how newcomers gradually become part of a professional group, and how learning and clinical practice both take place through gradual absorption into a shared activity with common goals.

Learning viewed as situated activity has as its central defining characteristic a process that we call legitimate peripheral participation. By this we mean to draw attention to the point that learners inevitably participate in communities of practitioners and that the mastery of knowledge and skill requires newcomers to move toward full participation in the sociocultural practices of a community. ‘Legitimate peripheral participation’ provides a way to speak about the relations between newcomers and old-timers, and about activities, identities, artefacts, and communities of knowledge and practice. It concerns the process by which newcomers become part of a community of practice. A person’s intentions to learn are engaged and the meaning of learning is configured through the process of becoming a full participant in a sociocultural practice. This social process includes, indeed it subsumes, the learning of knowledgeable skills.

Chapter 2 contains a more detailed discussion of Lave and Wenger’s theoretical perspective but, for present purposes, their work makes it clear that procedural skills must be part of a wider picture of teamworking and shared approaches to learning and to practice.

Activity theory

Several authors provide stimulating and often challenging perspectives on medical education, drawing on literature around activity theory and actor network theory (Bleakley, 2006a,b; Bligh and Bleakley, 2006; Engeström, 2001; Engeström et al, 1999; Lingard, 2007). Activity theory provides a framework for considering ways in which people act. Derived from the work of Vygotsky and other influential scholars in the former Soviet Union, the framework provides a way of looking at the production and shaping of knowledge by individuals within social systems. It is easy to see applications within clinical settings, especially highly specialised and contained ones (e.g. operating theatres). Again, the topic is covered more fully in Chapter 2, but its significance for this chapter lies in viewing individuals within the dynamic social environment they inhabit, recognising that their thinking is influenced by those around them even as they, in turn, shape that environment.

Threshold concepts

Elsewhere, it has been argued that personal development as a clinician involves transitions (Kneebone, 2005). Such transitions include developing from a medical student to a doctor and from a registrar to a consultant. Meyer and Land’s work on threshold concepts is illuminating here (Meyer and Land, 2003, 2005). According to their approach, threshold concepts provide new ways of looking at a subject. Such concepts tend to be challenging and require learners to reconfigure their views of the world. This process can be uncomfortable and lead to a sense of alienation and anxiety. This is especially evident in health care education, where part of the development process as a clinician involves coming to terms with thresholds. Dealing with uncertainty, with ambiguity, and with lack of confidence is part of every clinician’s experience, but such feelings are seldom expressed. According to Meyer and Land, many teachers become ensnared by ‘enchantment’, providing an oversimplified version of a complex reality in the hope of helping learners. In fact this can be counter-productive, leading to long-term difficulties in mastering complex domains and interfering with deep understanding. We return to the concept of transition later.

Patient-centred learning and simulated patients

The role of real patients

A significant proportion of undergraduate medical education takes place within clinical care provided by the health service of the country in which it takes place. Taking the United Kingdom as an example, the National Health Service (NHS) placed medical education alongside health care provision at its inception, seeing education as one of its fundamental components and expecting patients to participate and be ‘taught and learned on’ (General Medical Council, 1947, 1957). The role of patients in learning and teaching procedural skills has traditionally been passive (Howe and Anderson, 2003; Spencer et al, 2000; Wykurz, 1999). Learners have been guided by clinicians, with the patient’s contribution limited to simply being present. The same language is less likely to be used to describe the role of patients in medical education today. Although the UK GMC and other regulatory bodies encourage active roles for patients in undergraduate education, each patient’s choice is now paramount. This has triggered a radical shift in the relationship between patients and clinicians, with major implications for education. Practices that were once commonplace (e.g. vaginal examination of anaesthetised female patients without their consent) are no longer acceptable (Coldicott et al, 2003; Nestel and Kneebone, 2003). This is especially the case with invasive procedures, where there is real risk of causing harm. With the advent of patient-centred care and acknowledgement of patients’ own expertise, medical educators have to find ways to represent patient perspectives in curricula. As societal values change, educators must respond by developing methods acceptable to the public. High-profile cases of medical malpractice and doctors’ unprofessional behaviours have focused a spotlight on the medical profession. Medical education has also come under scrutiny from within and outside the profession. 
Most medical curricula now have lay or patient representation, whose roles include considering the acceptability of programme content and educational methods and raising the profile of patient perspectives. The ethics of patient involvement in medical education are fully explored in Chapter 1.

The role of simulated patients

For many years, SPs have worked as ‘substitutes’ or ‘proxies’ for real patients, an approach which offers many benefits. SPs are trained to portray patients and to provide feedback to learners. They have the potential to raise the profile of patient perspectives, especially as they relate to communication and other professional behaviours (Bokken et al, 2009; Cleland et al, 2009; Nestel and Kneebone, 2009). Additional benefits of trained SPs include having scenarios that pose predetermined levels of challenge reflecting curriculum goals, the opportunity to tailor learning to individual learner needs, and the provision of standardised scenarios to assess learners in a range of clinical skills (Adamo, 2003; Barrows, 1968; Boulet et al, 2009; Hoppe, 1995; Ker et al, 2005; Nestel et al, 2006). The use of SPs offers major benefits in ‘grounding’ procedural skills training in holistic practice.

Simulation

We have argued earlier that simulation allows learners to gain procedural skills within a changing clinical landscape where traditional methods of learning on patients are no longer acceptable. We also alluded to some of the difficulties and dangers which may follow an uncritical acceptance of simulation. We have highlighted some theoretical positions that we believe can help develop a more integrated approach to simulation; one which helps to bridge the gap between formulaic, impoverished model-based training and the richness and unpredictability of clinical practice. We now move on to a critique of current simulation-based training.

Benefits of simulation

A key advantage of simulation is that it allows procedural skills to be practised and assessed outside the clinical arena, away from the complexities of actual care. This reductionist approach ensures safety. Yet there is increasing acceptance that clinical practice must be holistic and patient-centred, integrating clinical knowledge and skills with professionalism, communication, and patient safety. At the heart of this tension is confusion about what is meant by clinical and procedural skills. From one viewpoint, clinical skills encompass history taking, physical examination, and the skills of performing diagnostic and therapeutic procedures. From another, clinical skills are perceived as procedural skills which are learnt and assessed in a skills lab setting. To us, procedures are simply another form of clinical encounter. At the centre of this encounter lies a technical intervention which requires specific kinds of knowledge and dexterity. But that intervention must take place within a wider arc of care, which starts by ensuring that the patient understands and consents to the procedure and which ends with agreeing a plan for future action. Fragmentation of this process into isolated components can lead to over-focusing on the technical elements of the procedure.

Current approaches to assessment tend to exacerbate this imbalance. Learners who ask ‘Shall I show you how I do it for the OSCE, or how I do it on the ward?’ are highlighting an artificial distinction which has grown up between skills centres and clinical practice. The link between skills taught in centres and actual clinical practice is not readily grasped by learners. The limitations of widely available simulators (crude body parts) entrench this view further, suggesting that all venepunctures are the same. So, the attractions of simulation (bench top models) lead to a distorted perception of what clinical skills are about, and ‘skills’ become synonymous with labs in skills centres rather than patients.

Reconciling expert and novice perspectives

Somewhere in all this, the link between skills labs and real clinical practice becomes lost. This is probably because experts (for whom the skills labs trigger a wealth of clinical experience) make assumptions about novices (who have not yet gained that clinical experience, and who therefore engage with skills lab models at a completely different level). Kneebone has used the ha-ha as a metaphor for the difference in perspective between experts and novices (Kneebone, 2009). The ha-ha is a device used in eighteenth-century English landscape gardening. A deep ditch surrounds a country house and its garden, separating them from the surrounding parkland with its deer and cattle. Viewed from the house, this ditch is invisible, providing a powerful illusion that the house is in the midst of untamed nature. Seen from the park, however, the ditch is very evident, presenting an unscalable barrier. According to this metaphor, experts are in the house, looking out at novices in the park and wondering why they do not simply walk across and join in. From the novices’ perspective, however, they are separated from the experts by a gulf which they cannot yet cross.

In the case of procedural skills, experts (who design the training that the novices undertake) have often lost sight of where the key challenges lie during the earlier stages of learning. Because of their extensive experience with a wide variety of patients, experts no longer find any example of cannulation especially challenging. For the novice, on the other hand, there is a huge difference between an ‘easy’ and a ‘difficult’ cannulation. This difference in perspective lies behind some of the problems we see with conventional skills-based training. It is therefore important to return to first principles when designing educational programmes aimed at novices, ensuring that notions of simplicity and relevance reflect learners’ perspectives as well as those of their teachers and mentors. Observation, peer feedback, and clinical expert supervision are all characteristic of contemporary approaches to the development of procedural skills.

Today, a significant proportion of medical education is delivered in the service arena, where there is a focus on the delivery of safe and cost-effective care to patients. There has been a shift from the traditional distinction between pre-clinical and clinical phases of medical curricula to curriculum designs in which early clinical placements are commonplace. The breadth of health services in which medical students are educated has also expanded. These changes influence the potential timing and settings for procedural skills education. Clinical skills are seen as crucial and are taught early in the curriculum. For obvious reasons, novices cannot practise on patients, so they are exposed to skills labs early on. As a result, learners come to associate clinical skills with skills labs and OSCEs.

Managing danger and experiencing risk

The issue of managing danger is central here. At one level, it is clearly essential to protect patients from unnecessary risk of harm, especially when undergoing invasive procedures. Yet, from the learner’s point of view, recognising and managing danger is an essential element of becoming an effective clinician. If that sense of danger is stripped out of simulation-based training, how are learners to recognise premonitory signs of trouble and gain insight into their own capacities and responses? It seems to us that effective simulation should be able to recreate in the learner those real-world experiences of uncertainty and fear which are an inseparable component of clinical care. Only by doing so can learners recognise such responses in themselves, and develop the skills and maturity to deal with them effectively.

Too great an emphasis on simulation as a proxy for the real world holds more subtle dangers too. Although it may appear self-evident that initial learning in a safe environment is desirable, clinical practice is inherently risky. Managing such risk is crucial to becoming a mature and flexible practitioner, and dealing with uncertainty, ambiguity, anxiety, and even fear, is part of this process. A risk-averse culture that shies away from any potential danger could have a counter-productive effect, resulting in doctors who are ill-equipped for the realities of practice.

Simulation of clinical procedures therefore offers an alternative to clinical reality which is safe but does not reflect the complexities of authentic practice. Most educators are compelled to work within the limitations of existing models, whose crudeness dramatically reduces the correspondence with clinical reality. This reductionist approach is driven partly by a need to simplify a complex picture and to measure what is most easily measured. Yet a balance needs to be struck between swamping a novice with unmanageable levels of complexity before they have grasped the basics, and creating a sense of infantilism which prevents the learner from developing expertise to cope with a complex and sometimes dangerous reality. Workplace-based assessment, on the other hand, provides selective glimpses into authentic clinical practice but cannot sample the wide range of potential clinical challenges which a clinician should be expected to manage. Partly this is because there are such large numbers of medical students. Partly it is because most observed procedures are carried out on relatively straightforward patients, and so do not address the difficulties and challenges which require true expertise.

Procedural skills training, especially at a novice level, usually takes place within institutions which have to cater for large numbers of learners. Institutions and learners have different agendas. Immediately there appears a tension between the needs of the individual learner to experience individual variation and clinical complexity, and the needs of the institution to create conditions of reproducibility and consistency. One major criticism of model-based simulation is that it does not recreate the variability of clinical experience, assuming for example that all instances of venepuncture are similar. In a sense, this is a conflict between validity and reliability. Ideally, every individual’s learning experience would be based on unique encounters with real patients but set in a context which optimises learning. From an institutional standpoint, however, there is increasing pressure to provide a controlled and reproducible exposure for all learners within a given cohort, allowing teaching to be planned and resourced and providing the conditions for formative and summative assessment in an equitable setting which meets the requirements of the curriculum. So, if there are limitations to skills centre practice, how might these be overcome? In the next section, we describe an approach we have developed, which addresses some of those issues.

Learning and teaching procedural skills

In the first section of this chapter, we outlined historical approaches to learning and teaching procedural skills, introduced key conceptual frameworks, and described the now well-established role of clinical skills centres for practising specific techniques on bench top models. We pointed out the combination of pressures and constraints acting upon clinical education. In this section, we make the case for integrating technical skills with other crucial aspects of clinical care and explore possible solutions, which use simulation in innovative ways.

Traditional model-based teaching of procedural skills places the technical aspects of each procedure at the heart of learning. The rationale for this approach lies in the need to build a foundation of technical mastery. And of course such mastery is essential. The question that follows logically is whether technical mastery alone is sufficient. Practice using isolated bench top models offers obvious benefits, especially to novices. At the earliest stages of learning, it is essential to grasp the basics of technique, the instruments and equipment that are being used, and the fundamentals of each procedure. By practising the technical components of a procedure away from the pressures of clinical care, learners (so the argument runs) can give their undivided attention to the task in hand, without fear of causing damage. In one sense, all simulation relies upon simplification. Simulation demands the abstraction of key elements of real life and their representation in a format that allows learning to take place.

But simplification is a double-edged sword. In real life, attention is never undivided, and technical skills cannot be isolated from their clinical context. Too much emphasis on the technical can mask the fact that practising procedures is only relevant because procedures are carried out on patients. A defining characteristic of clinical practice is its complexity. Although much educational energy is directed towards learning ‘generic’ skills of history taking, physical examination, and diagnosis, each clinical encounter is unique and must be managed on its merits. The technical elements of procedures carried out on conscious patients are only one part (although a crucially important part) of a wider clinical encounter which includes communication, professionalism, and much else besides. Addressing this complexity is a key challenge. This applies as much to invasive procedures as to any other area. Inserting an intravenous cannula, for example, might be seen as a straightforward procedure requiring relatively low levels of expertise. That is true of inserting a cannula in a co-operative patient with ‘easy’ veins but certainly not true when it comes to inserting a cannula in an elderly, demented, and unco-operative patient with low blood pressure. Being able to do the first does not imply an ability to do the second. To conceive of the procedure simply as ‘cannulation’ is to oversimplify a complex picture.

Patient-focused simulation

Invasive procedures occupy a special position in clinical education, as they can cause harm. The dangers of inexpert attempts to take blood, insert a urinary catheter, or perform a lumbar puncture are obvious. Sooner or later, of course, every learner must perform their first procedure on a real patient, but there is a strong argument for getting as far along the learning curve as possible before this happens. In that sense, the advantages of simulation are obvious. From the patient’s perspective, it is not acceptable to be used as a guinea pig for a purely technical exercise. This raises the question of how technical and clinical elements can be integrated before learners reach real patients. We propose a conceptual model which shifts the emphasis from a technical skill per se to a clinical encounter which involves a procedure. The technical element, although no less important, becomes modulated by the clinician–patient relationship. For this purpose, we require a proxy for clinical practice which satisfactorily represents all the key elements of the encounter.

As a focus for discussion, we use our own work on patient-focused (hybrid) simulation (Higham et al, 2007; Kneebone et al, 2002, 2003, 2005, 2006a). For several years, we have been exploring the potential of combining SPs with bench top models to create realistic quasi-clinical encounters which combine the benefits of simulation (safety, the opportunity for repeated practice, a framework for feedback and assessment) with the complexities of clinical practice. In our initial work, we aligned existing bench top models with SPs to create the illusion of performing a procedure on a real person (Figures 11.1 and 11.2). The model acted as a proxy for that part of the patient on which the procedure was performed, while the SP acted as a proxy for the whole person. Rather to our surprise, we discovered that integrating the two proxies could create high levels of perceived realism despite the apparent crudeness of the technical model. This high level of tolerance to apparently unrealistic elements raises important issues about priorities within simulation design. The crucial factor appears to be the presence of a real person within a procedurally focused encounter. In ways we are still exploring, this seems to break down the ‘skills lab mentality’, foregrounding the clinical nature of the encounter, and moving it away from being seen as a technical exercise.

As highlighted earlier, there is an extensive literature on SPs. Much has also been written about the wide variety of models and computer simulators which are now available. Much less has been written about the intersection zone between SPs and bench top models. We see hybrid simulation as a means to reconcile some of the imbalances of clinical care (where the patient’s needs are paramount and the learner becomes subsidiary) and skills centre activity (where the learner’s needs are central but the patient ‘disappears’). Our model provides a balance between those two drivers, while allowing additional elements (such as other clinical team members) to be included.

Integrated procedural performance instrument

After exploring the concept of patient-focused simulation (PFS) in various settings and with a range of procedures, we developed the IPPI. This consists of eight 10-minute scenarios built around the clinical procedures expected of new medical graduates and set out in Tomorrow’s Doctors (General Medical Council, 2003; Box 11.1). These included intravenous cannulation, urinary catheterisation, injections, suturing, and venepuncture. We have reported the IPPI concept elsewhere (Kneebone et al, 2006b, 2008; Leblanc et al, 2009; Moulton et al, 2009; Nestel et al, 2008a) and now use our experience with this technique as a focus for discussion. Each procedure was performed within a clinical encounter which specified the clinical scenario, the patient’s role, and what the participant (junior doctor) was required to do. Each patient’s role was played by SPs (professional actors) and was designed to reflect situations which new graduates might be expected to deal with. Alongside the technical procedure were specific challenges relating to communication, patient safety, and professionalism. These included patients who were distressed, hostile, visually or aurally impaired, unable to speak English, or accompanied by anxious relatives. The procedures themselves were performed on models attached to or aligned with SPs, using the hybrid approach described earlier. Each participant rotated through all eight scenarios. Encounters were recorded using a small video camera. There was no observer physically present in the scenario room. Each encounter was rated by expert clinicians, by the SP (acting as a proxy for the patient), and by the participant themselves. A combination of global ratings, checklists, and free text comments was used. Because the primary purpose was formative, participants were able to access their assessments via the web and review each of their videotaped performances.

We set out to create a panel of tasks which, taken together, would sample a range of clinical skills required at a given level of experience. We wished to create scenarios which moved away from the OSCE, with its tacit assumption that there is a formulaic set of steps which, if memorised and displayed, will satisfy the examiners. Instead, we tried to offer clinical situations where there was no single right answer, but rather a need to make choices and follow up their consequences. From the perspective of educational design, this posed a problem. From a practical point of view, it is clearly unacceptable to provide a set of free-range scenarios with no guidance on how to conduct them. On the other hand, too rigid a framework would constrain the very sense of authenticity and real-world uncertainty we were trying to achieve. We therefore designed scenarios to provide a consistent format (10-minute scenario) and a standardised opening gambit, but considerable latitude for how the encounter might evolve. Rather as the pieces on a chessboard start each game in the same positions but rapidly develop a unique disposition, simulations of this kind begin from a standard opening but can then develop in many possible directions depending on the relationship between the patient and clinician.

This unpredictability raises obvious potential difficulties. What might happen, for example, if a clinician lacked the skills and insight to defuse a hostile situation caused by an abusive patient? It is certainly better to address such issues within a simulation than in a real encounter, but situations must not be allowed to get out of control or result in physical violence. By using professional actors, we were able to set limits in advance, ensuring that the scenario would be terminated if a certain point was reached. The actors’ detachment and professionalism provided a backstop which allowed us to trade realism against safety. We aimed for each scenario to be a managed microcosm of clinical reality, where participants could display a range of skills and behaviours while experiencing a range of feelings. In particular, we wished to create conditions for displaying adaptive expertise. Although individual scenarios might be challenging or upsetting, our aim was to provide these within a supportive overall matrix where participants’ educational needs were our priority. The primary focus of the IPPI is procedural skills but we believe that this integrated approach allowed us to sample a wide range of relevant behaviours.

To gain further insights, we explored learners’ responses to both a formative OSCE and the IPPI immediately prior to a high stakes OSCE (Nestel et al, 2009). Learners valued both assessments, identifying that they met different needs. The OSCE prepared learners for their forthcoming exam, while the IPPI prepared them more for clinical practice, since it was perceived as ‘holistic’ and included ‘patients’. Learners reported that the IPPI provided an opportunity to ‘think on their feet’ while dealing with a patient’s problems. Almost all learners thought this was good preparation for their future as a doctor, providing them with an opportunity to work beyond their limits and explore the boundaries of their competence, which they were unable to do ‘safely’ in real practice. Learners did not believe that their course had prepared them for the IPPI style of assessment, and reported that the IPPI scenarios forced them to think and be creative. The IPPI seemed to be providing a means of moving between ‘routine’ and ‘adaptive’ expertise.

Since it is acknowledged that assessment drives learning, medical educators need to design assessments that reflect the needs of safe and effective clinical practice. Our experience with this study confirms the widely held view that learners adjust their learning to task-focused assessments. Contextualised and patient-focused assessments are more likely to align learning with real clinical practice, in line with the values underpinning Good Medical Practice (GMC, 2006) and the NHS.

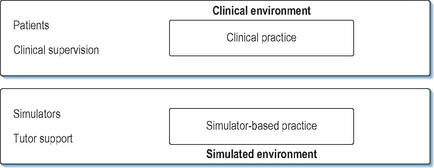

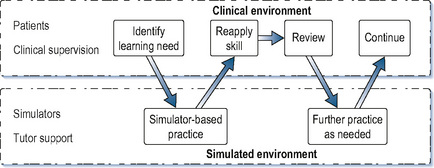

A continuum of learning

We have previously put forward a conceptual model of simulation as complementing clinical practice rather than taking place in isolation from it (Kneebone et al, 2004). Instead of an impermeable barrier between the clinical and simulated settings, we see a porous membrane, which allows simulation to reflect and support specific aspects of clinical practice (Figures 11.3 and 11.4). Current developments are placing such an approach within reach. We now suggest a continuum model for procedural skills (Figure 11.5). On the left are isolated bench top models, allowing novices to become oriented to a new procedure and learn its basics. On the right lie complex simulations designed to challenge learners by recreating real-world situations with no right or wrong answers. Between those poles lies a range of intermediate stages. Towards the left are formulaic, prescriptive settings whose primary focus is technical skill, while towards the right lie non-linear encounters which reflect the realities of clinical work. All are provided within a supportive, learner-centred setting which allows for feedback and debriefing and is sensitive to each participant’s individual requirements and level of development. Such a continuum would allow the needs of learners to be systematically mapped against their level of clinical experience. In the initial stages of training, or when learning a new procedure, learners would spend time towards the left. As their skills and confidence increased, they would spend more time within a quasi-clinical context towards the right.

Transitions

We have alluded earlier to the work of Meyer and Land on threshold concepts, highlighting how they may relate to issues of transition between states (between the medical student and doctor, for example, or registrar and consultant). We described their notion of ‘enchantment’, where oversimplified pictures of complex realities may be presented by a teacher with the misguided intention of aiding learning. There is a danger that such a strategy may backfire, providing a partial view that interferes with full understanding. In our view, oversimplification of clinical procedures by over-focusing on models for technical tasks can lead to a similar enchantment, providing learners with a false sense of security which is abruptly overthrown when they are confronted by real patients. For this reason, simulation should set out to recreate all the necessary components of complex clinical encounters, rather than stripping them down to simple components. Simulation, if imaginatively designed and rigorously applied, can support learners in crossing the many thresholds which lie along the path to maturation as a competent and caring clinician. Such a model would take into account the transitions described earlier.

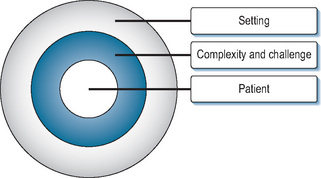

Layered learning

We end by proposing a conceptual model for simulation-based learning of procedural skills. The touchstone of clinical learning must be the real world of patient care. Simulation can only be useful if it is an adjunct to clinical experience. Without clinical care as its aim, simulation loses all meaning. Such care is, of course, driven by the needs of individual patients; the learning which takes place around such care must necessarily be subordinate to the care itself. The clinical needs of the patient must always take priority over the educational needs of the learner. This of course affects the balance between what may be desirable educationally and what is acceptable clinically. It may therefore be useful to consider what compromises can and should be made in order to achieve the best of both these worlds. Our proposed model is onion-like, consisting of concentric layers which mediate between clinical and educational agendas (Figure 11.6). The model’s core is the learner’s interaction with a patient while performing an invasive procedure. The simulation sets out to recreate as accurately as possible the characteristics of a real clinical encounter. Conceptually, therefore, a real patient stands at the focal point. In reality, of course, compromises have to be made to maximise the educational benefits and minimise the risk of harm (to the patient and learner). These compromises take several forms and can be seen as a series of protective layers surrounding the ‘idea’ of the real patient.

Layer 1: the patient

First is the need to represent patients themselves. Presenting a real person (in the form of an SP) provides a high level of face validity and overcomes many of the problems around engagement and willing suspension of disbelief which beset the use of mannequins. A drawback is that the SP is not really a patient and may not respond authentically in a given scenario. This can be overcome to a large extent through rigorous role development and SP training. Although SP roles are often based on individual patients or on ‘combinations’ of patients, they are usually crafted by clinicians and educators (Morris, 2006; Nestel and Kneebone, 2009). This is often a result of the pressure to produce new roles for teaching and assessment purposes. These composite roles may therefore be quite different from the authentic experiences of individuals. We know that those who deliver health care experience it differently from those who receive it. Therefore, we must take care that SP roles reflect authentic rather than clinician-interpreted experiences. Real patients can contribute directly to the development of SP roles and scenarios (Black et al, 2006; Nestel et al, 2008b). In our experience, involving real patients in scenario design has been salutary for SPs, clinicians, and educators involved in the process of role development and SP training. The line between authenticity and drama is a fine one, requiring careful management (Nestel and Kneebone, 2009).

Alongside the patient there must be a convincing representation of the interventional procedure. In essence, an appropriate model is attached to or aligned with the SP. To minimise the effort of suspending disbelief, the join between SP and model should be as realistic as possible. Careful design ensures that no harm can come to the SP, even from clumsy or inexpert intervention. As described earlier, technology in this area is developing rapidly, and new approaches to linking physical models with SPs are starting to emerge (see later). This combination of SP and model provides a working proxy for a real patient. In this sense, the actual patient is protected by a representation of that patient. At least two aspects of the SP's safety must be ensured. One is safety from physical harm, ensured as described above. The other is safety from mental distress caused by the conditions of education (as opposed to clinical care). Such distress might arise from: tiredness (from repeated encounters with many learners); anxiety (if inexperienced learners mistakenly suggest the presence of cancer or other serious disease); reluctance to offend (if invited to give feedback); learners' reactions to feedback; and pressure to comply with being 'taught on' (especially given the power gradient around clinical care).

Layer 2: complexity and challenge

The second layer concerns the level of challenge and complexity presented. As noted earlier, even an apparently simple procedure can become challenging if the patient or their circumstances are perceived as difficult. Such challenges might include patient behaviour (aggression, hostility, anxiety, confusion, or intoxication), environmental problems (missing, malfunctioning, or unfamiliar equipment), team working issues (dysfunctional relationships with colleagues), or personal issues (tiredness and lack of confidence in one's own skill). Simulation offers the opportunity to control each of these elements and align it with the learner's needs. This high degree of control allows simulations to be calibrated to the level of challenge required by individual learners and to be used sequentially to build up an evolving picture of their skills.

Layer 3: the setting

The final layer relates to the educational setting in which an encounter takes place. Such settings should support a learner-centred approach that provides opportunities for experience, feedback, and developing a systematic approach to acquiring skills. From the institution's point of view, learners must be able to practise as required, and their learning must be scheduled within the practical constraints of the curriculum.

Using SPs as a mirror for clinical encounters can satisfy many of these desiderata. SPs are able to 'sit on their own shoulder' while playing a role, monitoring the learner's response as well as providing detailed, systematic feedback tailored to the needs and capacities of individual learners. Locating simulations within purpose-designed facilities allows video recording to be used. Recording has a powerful effect in consolidating learning, allowing learners to view their own performance as others have seen it. It also provides a resource for documenting the acquisition of expertise and building up longitudinal portfolios. Such issues lie beyond the scope of this chapter but are likely to become increasingly prominent. This deliberate design also allows safeguards to be embedded, so that scenarios can be monitored and stopped if they generate unduly high levels of anxiety or provoke potentially destructive behaviours.

Future directions

In our view, a major limiting factor in the development of effective simulation has been the scarcity of satisfactory models for procedural practice. Interesting possibilities are now emerging. Imaginative use of prosthetic techniques from the film and television industries seems likely to yield new solutions to the limitations of current simulators, and hybrid simulation is likely to be developed widely, offering increasing levels of realism and engagement. We are now, for example, working towards much higher levels of perceived realism through the use of sophisticated materials which can be seamlessly joined to SPs. Seamlessness between realistic models and SPs eliminates much of the artificiality of crude simulators, creating a 'believable patient' in whom both the person and the procedure are convincing enough to engage clinicians. This minimises obstacles to suspending disbelief and makes it much easier to engage with the scenario as a clinical encounter. Crucially, this approach allows bespoke simulations to be developed in response to educational need, rather than being constrained by whichever models happen to be available.

Implications for practice

In this chapter, we have outlined key issues affecting how procedural skills are learnt, taught, and assessed. Providing learner-centred education within an increasingly embattled health care system creates new complexities and challenges for which solutions must be found. We have put forward a conceptual model and described practical ways of encouraging excellence while protecting patients from harm. Procedural skills must take their place within a wider curriculum, supported by all the elements of educational design which underpin health care education. We end on a note of caution. However seductive the attractions of simulation, our touchstone must remain clinical practice. Simulation cannot be an end in itself but must serve an educational need. At the heart of every simulation lies a patient, either explicitly or implicitly. Simulation can only ever be an adjunct to clinical experience, never a substitute. The challenge is to ensure that simulation remains rooted in clinical experience and does not develop into a self-referential universe which loses touch with reality (Bligh and Bleakley, 2006). Technology has much to contribute to extending what simulation can do, but it must remain a tool in the service of education.

Adamo G. Simulated and standardized patients in OSCEs: achievements and challenges 1992–2003. Med Teach. 2003;25:262-270.

Barrows H.S. Simulated patients in medical teaching. Can Med Assoc J. 1968;98:674-676.

Bereiter C., Scardamalia M. Surpassing ourselves: an inquiry into the nature and implications of expertise. Chicago, IL: Open Court, 1993.

Bereiter C., Scardamalia M. Learning to work creatively with knowledge. In: De Corte E., et al, editors. Unravelling basic components and dimensions of powerful learning environments. EARLI Advances in Learning and Instruction Series, 2003.

Black S., Nestel D., Horrocks E., et al. Evaluation of a framework for case development and simulated patient training for complex procedures. Simul Healthc. 2006;1:66-71.

Bleakley A. Broadening conceptions of learning in medical education: the message from teamworking. Med Educ. 2006;40:150-157.

Bleakley A. A common body of care: the ethics and politics of teamwork in the operating theater are inseparable. J Med Philos. 2006;31:305-322.

Bligh J., Bleakley A. Distributing menus to hungry learners: can learning by simulation become simulation of learning? Med Teach. 2006;28:606-613.

Bokken L., Linssen T., Scherpbier A., et al. Feedback by simulated patients in undergraduate medical education: a systematic review of the literature. Med Educ. 2009;43:202-210.

Boots R., Egerton W., McKeering H., et al. They just don't get enough! Variable intern experience in bedside procedural skills. Intern Med J. 2009;39:222-227.

Boud D., Keogh R., Walker D. Promoting reflection in learning. In: Edwards R., Hanson A., Raggatt P., editors. Boundaries of adult learning. New York: Routledge, 1996.

Boulet J., Smee S., Dillon G., et al. The use of standardized patient assessments for certification and licensure decisions. Simul Healthc. 2009;4:35-42.

Bradley P. The history of simulation in medical education and possible future directions. Med Educ. 2006;40:254-262.

Bruner J.S. Actual minds, possible worlds. Cambridge, MA: Harvard University Press, 1986.

Bruner J.S. Acts of meaning. Cambridge, MA: Harvard University Press, 1990.

Bruner J.S. The narrative construction of reality. Crit Inq. 1991;18:1-21.

Calman K. Medical education: past, present and future. Edinburgh: Churchill Livingstone Elsevier, 2007.

Cave J., Goldacre M., Lambert T., et al. Newly qualified doctors’ views about whether their medical school had trained them well: questionnaire surveys. BMC Med Educ. 2007;7:38.

Cleland J., Abe K., Rethans J., et al. The use of simulated patients in medical education: AMEE Guide No 42. Med Teach. 2009;31:477-486.

Coldicott Y., Pope C., Roberts C., et al. The ethics of intimate examinations – teaching tomorrow’s doctors. [Commentary]: Respecting the patient’s integrity is the key. [Commentary]: Teaching pelvic examination – putting the patient first. BMJ. 2003;326:97-101.

De Cossart L., Fish D. Cultivating a thinking surgeon. Shrewsbury: tfm Publishing, 2005.

Dreyfus H., Dreyfus S. Mind over machine: the power of human intuition and expertise in the era of the computer. Oxford: Basil Blackwell, 1986.

Engeström Y. Expansive learning at work: toward an activity-theoretical reconceptualisation. London: Institute of Education, 2001.

Engeström Y., Miettinen R., Punamäki R., editors. Perspectives on activity theory. Cambridge: Cambridge University Press, 1999.

Ericsson K. Deliberate practice and the acquisition and maintenance of expert performance in medicine and related domains. Acad Med. 2004;79:S70-S81.

Ericsson K. Recent advances in expertise research: a commentary on the contributions to the special issue. Appl Cognit Psychol. 2005;19:233-241.

Faulkner H., Regehr G., Martin J., et al. Validation of an objective structured assessment of technical skill for surgical residents. Acad Med. 1996;71:1363-1365.

Fitts P., Posner M. Human performance. Belmont, CA: Brooks/Cole Publishing Co, 1967.

Flexner A. Medical education in the United States and Canada: a report to the Carnegie Foundation for the Advancement of Teaching. New York: Carnegie Foundation for the Advancement of Teaching, 1910.

General Medical Council. Recommendations as to the medical curriculum. London: HMSO, 1947.

General Medical Council. Recommendations as to the medical curriculum. London: GMC, 1957.

General Medical Council. Tomorrow’s doctors. London: General Medical Council, 2003.

General Medical Council. Good medical practice. London: General Medical Council, 2006.

Harden R.M. Twelve tips for organizing an objective structured clinical examination (OSCE). Med Teach. 1990;12:259-264.

Harden R.M., Gleeson F.A. Assessment of clinical competence using an objective structured clinical examination (OSCE). Med Educ. 1979;13:41-54.

Higham J., Nestel D., Lupton M., et al. Teaching and learning gynaecology examination with hybrid simulation. Clin Teach. 2007;4:238-243.

Hodges B. Validity and the OSCE. Med Teach. 2003;25:250-254.

Homer M., Pell G. The impact of the inclusion of simulated patient ratings on the reliability of OSCE assessments under the borderline regression method. Med Teach. 2009;31:420-425.

Hoppe R.B. Standardized (simulated) patients and the medical interview. In: Lipkin M., Putnam S., Lazare A., editors. The medical interview. New York: Springer-Verlag, 1995.

Howe A., Anderson J. Involving patients in medical education. BMJ. 2003;327:326-328.

Ker J.S., Dowie A., Dowell J., et al. Twelve tips for developing and maintaining a simulated patient bank. Med Teach. 2005;27:4-9.

Kneebone R.L. Clinical simulation for learning procedural skills: a theory-based approach. Acad Med. 2005;80:549-553.

Kneebone R. Perspective: simulation and transformational change: the paradox of expertise. Acad Med. 2009;84:954-957.

Kneebone R., Bello F. Technology in surgical education. In: Taylor I., Johnson C., editors. Recent advances in surgery, 28. London: Royal Society of Medicine Press, 2005.

Kneebone R., Kidd J., Nestel D., et al. An innovative model for teaching and learning clinical procedures. Med Educ. 2002;36:628-634.

Kneebone R.L., Nestel D., Moorthy K., et al. Learning the skills of flexible sigmoidoscopy – the wider perspective. Med Educ. 2003;37(Suppl. 1):50-58.

Kneebone R.L., Scott W., Darzi A., et al. Simulation and clinical practice: strengthening the relationship. Med Educ. 2004;38:1095-1102.

Kneebone R.L., Kidd J., Nestel D., et al. Blurring the boundaries: scenario-based simulation in a clinical setting. Med Educ. 2005;39:580-587.

Kneebone R., Nestel D., Wetzel C., et al. The human face of simulation: patient-focused simulation training. Acad Med. 2006;81:919-924.

Kneebone R., Nestel D., Yadollahi F., et al. Assessing procedural skills in context: exploring the feasibility of an Integrated Procedural Performance Instrument (IPPI). Med Educ. 2006;40:1105-1114.

Kneebone R., Nestel D., Bello F., et al. An Integrated Procedural Performance Instrument (IPPI) for learning and assessing procedural skills. Clin Teach. 2008;5:45-48.

Kolb D., Fry R. Toward an applied theory of experiential learning. In: Cooper C., editor. Theories of group process. London: Wiley, 1975.

Lave J., Wenger E. Situated learning: legitimate peripheral participation. Cambridge: Cambridge University Press, 1991.

Leblanc R., Tabak D., Kneebone R., et al. Psychometric properties of an integrated assessment of technical and communication skills. Am J Surg. 2009;197:96-101.

Lingard L. The rhetorical ‘turn’ in medical education: what have we learned and where are we going? Adv Health Sci Educ Theory Pract. 2007;12:121-133.

Ludmerer K. Time to heal. Oxford: Oxford University Press, 1999.

MacRae H., Regehr G., Leadbetter W., et al. A comprehensive examination for senior surgical residents. Am J Surg. 2000;179:190-193.

Maran N., Glavin R. Low- to high-fidelity simulation – a continuum of medical education? Med Educ. 2003;37:22-28.

Martin J.A., Regehr G., Reznick R., et al. Objective structured assessment of technical skill (OSATS) for surgical residents. Br J Surg. 1997;84:273-278.

Medical Education Committee of the British Medical Association. The training of a doctor: a report of the Medical Education Committee of the British Medical Association. London: HMSO, 1948.

Meyer J., Land R. Threshold concepts and troublesome knowledge: linkages to ways of thinking and practising within the disciplines. In: Enhancing teaching and learning environments in undergraduate courses. Edinburgh: School of Education, University of Edinburgh, 2003.

Meyer J., Land R. Threshold concepts and troublesome knowledge 2: epistemological considerations and a conceptual framework for teaching and learning. High Educ. 2005;49:373-388.

Miller G. The assessment of clinical skills/competence/performance. Acad Med. 1990;65:S63-S67.