Chapter 16 Clinical Effectiveness Skills in Practice

Introduction

Keeping professional knowledge and skills up to date and ensuring best practice by monitoring and reviewing the ongoing effect of our activity and making changes accordingly (Health Professions Council 2005) is easier said than done.

The challenge is not so much about finding the right evidence to do the right practice at the right time in the right place; it is about being the right person to deliver the practice, about feeling enabled to implement new practice and having the resources to keep up to date with all the new evidence being generated.

Being clinically effective is non-negotiable. So how can effective practice become the norm and something that all practitioners feel able to engage with? This chapter explores the core components of clinical effectiveness, highlights the barriers to engagement and provides tools to assist practitioners to contribute to the clinical effectiveness agenda in their workplace. Effective practice is not just about clinical practice, though; it is about all practice and includes managers, educationalists, researchers, clinicians, care providers and service users. Clinical effectiveness interlinks with several other skills for practice and this chapter should therefore be read and used in conjunction with other chapters within this book, as many of them discuss and present particular aspects of effective practice. Clinical effectiveness doesn’t just happen. It requires reflection, planning and action. This chapter, however, is not an academic appraisal or ‘how to’ guide to clinical effectiveness. It is an introduction to the topic and reference point for further reading with direct links to everyday occupational therapy practice.

Context

Policy, both in the UK (NHS Executive 1998, NHS Wales 1998, Scottish Executive 1998, College of Occupational Therapists 1999) and internationally, has seen an increasing emphasis on the quality of health service provision and the drive for evidence-based interventions over the last 20 years. Within the UK this is set against a backdrop of high-profile cases where serious malpractice has injured the credibility of health-care practitioners (Department of Health 2002a, 2002b, 2004) and led to the rise of a process by which every part of the health service was able to quality assure its decisions. More recently there has been progress from a measuring and assuring process to a more mature approach to developing and improving services which involves working in partnership across organisations and with service users and their carers. This transparency is promoting a culture of improvement that is driven by learning from and with partners (NHS Plan 2000, Scottish Executive 2005).

Clinical effectiveness is a tool or a group of tools that can be used to improve individual practice and whole services. There are many available resources that assist in overcoming barriers and developing practitioners’ clinical effectiveness skills, and ensure that they become part and parcel of everyday practice (see Tables 16.1, 16.2).

Table 16.1 Overcoming Barriers to Implementing Clinical Effectiveness

| Barriers | Possible solutions |
|---|---|
| Lack of theoretical and practical clinical effectiveness skills | Consult colleagues with good current clinical effectiveness skills: for example new graduates, research practitioners. Involve your local clinical effectiveness departments who will support development of skills through education. Join or form a journal club where skills can be developed and practised. |
| Access to resources/information | Many electronic databases are now available and easily accessible from your department, local hospital or community library. Get involved in specific practice networks, for example special interest groups and local, national and international practice development networks. |
| Support – local, organisational | Develop links with peers. Use or create coffee break support groups, join formal and informal networks, managed clinical networks, etc. Find out about local clinical effectiveness departments and their agendas. Highlight significant areas within your organisation’s plan which support your clinical effectiveness work and present this to managers and peers. Ensure that there are clinical effectiveness objectives within your personal development plans. |
| Time | There is no easy answer to this but consider how you use your time. Use or negotiate CPD sessions – at least ½ session per month is recommended. |
| Authority to act | Clarify this via supervision. Ensure your work fits with your organisation’s strategic plan. Establish links with your clinical effectiveness departments/organisational development or change and innovation departments. Seek out champions who are both credible and influential within the practice context and/or who will support/champion your work within their area. |
| Resources | Use electronic resources. Make links with higher education institutes. There are a number of grants that can be accessed both nationally and locally. Check your professional body, local clinical effectiveness departments, and government health departments. Think small change. |
| Colleagues’ apathy | The model for improvement can show colleagues the possibilities. |
| Motivation | Buddy systems. Coffee break CE groups. |

Table 16.2 Critical appraisal Primary filters (Adams 1999b)

Frequently practitioners are involved in clinical effectiveness activities of some shape and form but do not always label them as such. Prior to commencing an allied health professions clinical effectiveness network project in Scotland, allied health professionals were questioned about their involvement and confidence with clinical effectiveness. The responses highlighted that specific clinical effectiveness activities were patchy with a large number of respondents stating that they needed assistance to undertake such projects. A limited number of respondents highlighted that they were already implementing guidelines within their practice (Holdsworth et al 2005). The barriers to engaging with clinical effectiveness are highlighted later in this chapter but many of the issues that stopped practitioners in this study from practising clinical effectiveness activities related to their confidence.

What is clinical effectiveness?

Various definitions of clinical effectiveness exist:

The extent to which specific clinical interventions, when deployed in the field for a particular patient or population, do what they are intended to do – that is, maintain and improve health and secure the greatest possible health gain from available resources (NHS Executive 1996).

There are a significant number of issues and methodologies concerned with the quality of clinical care, for example clinical audit, R&D, education and training, continuous quality improvement, integrated care pathways, clinical guidelines. The real world definition and application of these terms varies, sometimes for organisational or historical reasons …. The umbrella term ‘clinical effectiveness’ refers to activities that have as their focus the measuring, monitoring and improvement of clinical care. Clinical Effectiveness is a component of Clinical Governance (Scottish Executive 1999).

The Chartered Society of Physiotherapy’s definition of clinical effectiveness has been widely used and states that it is, ‘the right person (you) doing: the right thing (evidence based practice) in the right way (skills and competence) at the right time (providing treatment/services when the patient needs them), in the right place (location of treatment/services), with the right result (clinical effectiveness/maximising health gain)’ (Chartered Society of Physiotherapists 2007).

The NHS Scotland online education resource states that clinical effectiveness has been actively used to improve the quality of treatments and services since the late 1980s. Health professionals are involved in audits and improvement projects as an integral part of promoting good clinical practice. Clinical effectiveness is reliant on the wealth of expertise, knowledge and skill of those who work with patients and who have an insight and understanding as to how the service works and how it could be improved (NHS Scotland 2007).

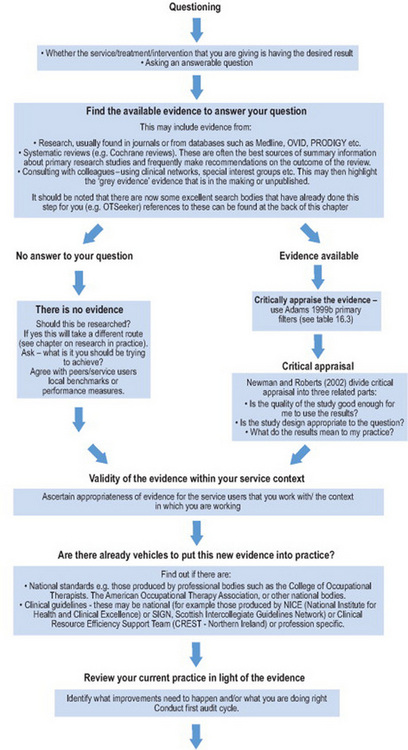

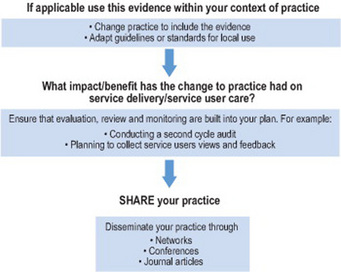

All these definitions highlight that clinical effectiveness is about practitioners continually assessing and analysing what they do, in the context of the evidence that is available to improve care and practice. Clinical effectiveness is a cycle that entails a series of steps. These can at times seem onerous, until you realise the resources that are available for each step (see Figure 16.1 and Table 16.2).

‘A successful clinical effectiveness initiative not only identifies the best information about those interventions which work but also makes that information available in an accessible and understandable format and ensures that it is used in practice’ (McClarey and Duff 1997). But in reality how does all this work? Vignette 16.1 provides one example.

Vignette 16.1

In 2002 a group of occupational therapists within an NHS Board in Scotland set out to ensure that the records they were keeping met the standards set by the College of Occupational Therapists (2002, 2005). The relevance of this standard in the context of clinical effectiveness has been further highlighted more recently by the Department of Health. It states:

‘Records are a valuable resource because of the information they contain. High quality information underpins the delivery of high quality evidence based health care…. Information is of greatest value when it is accurate, up to date and accessible. An effective records management service ensures that information is properly managed and is available when needed.’

(Department of Health 2006, section 2)

What is the clinical question?

The occupational therapists asked the question ‘Do our records meet the required standard as stated within the College of Occupational Therapists Code of Ethics and Professional Conduct (2005) and the record keeping core standard (2000)?’

What is the best evidence for good practice?

The group undertook a literature review on record keeping using Medline and CINAHL electronic databases and identified 98 relevant articles. They also included a letter in OT News that attracted interest and information from as far afield as Hong Kong.

Is the evidence applicable for our area of practice?

The available literature was read and appraised. A retrospective audit tool was designed which differed from the COT tool in that it had additional responses. The tool was piloted in four different geographical areas and across three different specialisms. This highlighted areas for amendment and the need for a guidance sheet to be developed in order to ensure uniformity of interpretation.

Is there local evidence of implementing good practice in relation to record keeping?

There were no local examples of auditing occupational therapy record keeping within this health board area so there was no sound evidence of good practice. The audit tool and guidance notes were distributed across the region and services were audited retrospectively against the standards.

Did the audit confirm that the OT service was providing good practice?

The audit was returned by 78% of occupational therapists and was analysed anonymously by the audit resource department within the health board. The results showed that the audit tool was successful in identifying both good practice and areas requiring improvement.

Is a change in practice required?

The audit identified areas requiring change. These and other areas of good practice were fed back to the services via the heads of department. Offers of facilitation to develop and implement local action plans ahead of the second cycle were also given.

A second cycle was developed and implemented and showed that improvements had occurred. The additional benefits were also highlighted by the group.

Dissemination and sharing of practice

The learning from this project was not only shared locally amongst occupational therapists but also within the local health board record keeping group set up by the clinical governance department. Nationally it was presented at a conference and submitted to the Clinical Improvements (CLIP) database (www.eguidelines.co.uk). A poster was made and has been presented at a variety of conferences. The tool has been disseminated internationally (Gibson et al 2004).

Room for improvement?

Looking at this piece of work (Vignette 16.1) several years on it is clear that there is a missing component in the process – the engagement and involvement of service users. The project team highlighted this in their conclusions. If this tool was examined today one would expect to find that it also supported service users, both in the development of the audit tool and in reporting their involvement with their clinical records. This could take the form of a questionnaire regarding their involvement with the treatment process, particularly asking for evidence of collaborative goal setting, or ease of access to their occupational therapy notes when requested. It may also include a question on service users’ ease of access to local standards of record keeping.

The ingredients for success

There were obviously a number of factors that contributed to the success of the record-keeping project.

Success factors

The above list is not exhaustive but these elements form some of the important aspects that are key to the success of effective practice. However this level of support and influence is not always available. The next section, therefore, explores other models of participation.

‘But I don’t have the time to do that’

The record-keeping project, although relatively simple, was complex in its involvement of a whole health board occupational therapy service. What could you have done if you did not have the support of your colleagues or manager, a major barrier to implementing effective practice?

The literature search and review

Could you link with higher education establishments and request to work with undergraduate students as a possible project? Could this be carried out within monthly clinical professional development sessions? Could you form a journal club? Reviewing with peers may also have the desired effect of motivating others to take part.

Development of the audit tool

In the UK most health and social care establishments have clinical effectiveness, clinical governance or quality departments. These resources can be invaluable as they provide tools to use in the workplace but they are also a very useful resource to support practitioners’ skills in audit and practice development. In the original project the team collaborated with their local clinical effectiveness department to develop their record-keeping audit tool. This is a sound method of undertaking a high-quality piece of work and also ensures that local clinical effectiveness departments are engaged in the programme.

Start small

Having considered the evidence – start small. Practitioners should look at their own practice and record keeping and find out if they are achieving the standards. Working with another colleague enables the audit of each other’s notes, highlighting any issues and developing an action plan.

Change management

Making changes within a service can be very difficult, especially when attempted alone. Change management skills are crucial within clinical effectiveness projects. There is an extensive evidence base that suggests practitioners should take a multifaceted approach to changing practice (Grol 1997, Glanville et al 1998, West et al 2001). Grol (1997) specifically highlights that practitioners need to identify their barriers to change before they begin. Another useful change management model (the model for improvement) was developed by Langley et al (1992). The benefit of this approach is that it looks at very small and manageable changes.

The model for improvement

The model was developed by Langley et al (1992) and is now being used extensively within organisations. It works on a series of small change cycles that can grow into fairly substantive improvements. Because it takes a change cycle at a time it is less intrusive and less risky than implementing major change. It allows practitioners to test their work as they go along to ensure that it is really making an improvement to the service they are delivering.

The model has two parts – thinking and doing.

Thinking

Ask three fundamental questions:

Doing: Plan, Do, Study, Act (The PDSA cycle)

The PDSA process allows practitioners to test out their ideas about the changes that they believe are required to make an improvement (Plan), working on one idea at a time. The secret of success is to focus on very small-scale changes (Do). This means that there is very little altered and it is easy to revert to the original way of working if something is unsuccessful. It also means that changes can be made and feedback received very quickly – how motivating is that! If it does work and information supports the change it can go ahead and be fully implemented within the service.

The ‘Study’ part of the cycle gives space to reflect on and learn from what happened.

The ‘Act’ part allows practitioners to question whether they re-run the same cycle again to gather more evidence, adapt what they have done following reflection, or develop further cycles to move the project forward.

In relation to record keeping (Vignette 16.1; see Chapter 13) it might be that an organisation wants to ensure that service users are involved in their own goal planning and that this is easily identifiable within their occupational therapy notes. Trying this out with one service user allows the approach to be easily and speedily tested.

It can be helpful to use this model to examine a hunch about something that might make a difference. Once the PDSA cycle is complete it will be clear if the hunch was correct. One example of this is when a team of practitioners decided to introduce a two-page assessment which they thought would assist with the implementation of a low back pain guideline. When they took this to the multidisciplinary team there were concerns that it would lengthen the initial assessment. It was agreed that two clinics would trial this assessment. The results of these trials highlighted that the assessment form had in fact speeded up the initial assessment process and was easy to use. The rest of the team then adopted this new process. The PDSA approach supported the practitioners’ initial hunch but was tested before full-scale adoption, a far less risky approach. It is useful to record your PDSA reasoning at each stage as this supports the rationale for change which can then be more easily shared with colleagues as in the example above.

The barriers – reality laced with possible solutions

There is a massive volume of literature out there – it really is no wonder that many practitioners feel overwhelmed and paralysed. The reality is that evidence-based practice is not yet part and parcel of everyday practice (Holdsworth et al 2005) and although there has been progress there are still a large number of practitioners who are practising without a sound basis of evidence. That does not always mean that what they are doing is wrong but practitioners are not in a strong position to justify its existence if they do not make any attempt to prove its validity and value. There are of course practitioners who do consciously develop clinical effectiveness strategies and these are highly valued (see Vignette 16.2).

Vignette 16.2

During a 3-year project to set up allied health professions clinical effectiveness networks many clinicians were adamant that they were not involved in clinical effectiveness but when questioned further were in fact doing some really innovative work. One group of senior practitioners had begun to meet informally on a regular basis for coffee and peer support. The support was very much welcomed and as staff got to know each other they began to speak about wider issues like new treatment approaches and interesting journal articles. When the Scottish Intercollegiate Guidelines Network (SIGN) guidelines for stroke came out they found themselves discussing the implications for their area of practice. The next session one of them brought an occupational therapy journal article on implementing guidelines that they read and discussed. A couple of them admitted feeling rusty on critical appraisal and so the following coffee break meeting they had a practical review session and from that session on they became a clinical effectiveness group for neurology. The group evolved from a basis of mutual respect and trust where all participants felt safe to explore practice. This foundation has grown confident practitioners who run monthly journal clubs, implement professional guidelines and have presented their work at conferences. One member of the group has become a regional practice development network representative.

Much has been written on the barriers to implementing clinical effectiveness and evidence-based practice (Grol 1997, Haynes et al 1998, Nolan et al 1998, SIGN 2008). Local, national and international resources have been established to assist clinicians in overcoming these barriers (Glanville et al 1998, West et al 2001, Holdsworth et al 2005). Table 16.1 highlights some of these barriers and outlines possible solutions which are detailed further in the resources listed in Table 16.3 in the Resources Section.

Table 16.3 Resources to support clinical effectiveness

| Resource | Description |
|---|---|
| Clinical effectiveness and research information pack http://www.dh.gov.uk/prod_consum_dh/groups/dh_digitalassets/@dh/@en/documents/digitalasset/dh_4042462.pdf | Achieving effective practice – a clinical effectiveness and research information pack for nurses, midwives and health visitors |
| Clinical Effectiveness Support Unit (CESU) http://www.cesu.wales.nhs.uk/ | Clinical Effectiveness Support Unit (CESU) for Wales supporting the Welsh Office Clinical Effectiveness Initiative and implementation of clinical governance |
| Clinical Governance – Educational resource http://www.clinicalgovernance.scot.nhs.uk/section2/introduction.asp | This website aims to help you use clinical governance and risk management quality improvement methods in your work. The website can be used in 3 ways, as a: programme of learning; reference source; training resource |
| Cochrane Collaboration www.cochrane.org | The Cochrane Collaboration produces core content for The Cochrane Library, including the Cochrane Database of Systematic Reviews |
| Clinical Governance Support Team (CGST) http://www.cgsupport.nhs.uk/ | This site has been developed for healthcare professionals, and is a central resource for clinical governance. It provides information and guidance to assist organisations in understanding and successfully implementing clinical governance |
| Clinical Resource Efficiency Support Team (CREST) http://www.crestni.org.uk/ | The Clinical Resource Efficiency Support Team (CREST) group comprises 19 health care professionals from the health service in Northern Ireland with an active interest in promoting clinical efficiency |
| The Critical Appraisal Skills Programme (CASP) http://www.phru.nhs.uk/casp/critical_appraisal_tools.htm | This programme helps to develop the skills to find and make sense of research evidence |
| Centre for evidence-based medicine http://www.cebm.net/critical_appraisal.asp | Provides information on how to find and appraise evidence |
| Crombie (1996) The pocket guide to critical appraisal | A pocket guide which provides a basic introduction to the principles underlying critical appraisal and details the process of appraisal, identifying the types of questions to be asked of each paper |
| Effectiveness Matters www.york.ac.uk/inst/crd/pdf/em51.pdf | Volume 5, Issue 1 of this regular publication from the NHS Centre for Reviews and Dissemination, University of York provides information on how to access and interpret clinical effectiveness resources efficiently |
| E-library (although Scottish based is available online worldwide) www.elib.scot.nhs.uk | Huge resource of electronic information, databases, library facilities. Also houses the following: |
| Communities of practice | Communities of Practice are ‘groups of people who share a concern, a set of problems, or a passion about a topic, and who deepen their knowledge and expertise by interacting on an ongoing basis’ (Wenger et al: Cultivating Communities of Practice: Harvard Business School Press, 2002). Communities of Practice reflect the fact that much of the knowledge within the health service lies in the heads of its staff, rather than in databases. Communities are therefore a natural place to seek out and access specialised knowledge |
| The knowledge exchange | The knowledge exchange service provides a virtual workspace which Communities of Practice are invited to use in a way that integrates knowledge support with their day to day practice and service modernisation |
| Managed Knowledge Networks MKN – elibrary | The name given to large groups of healthcare staff who need to access, share and apply knowledge in a common broad area of interest – e.g., cancer, coronary heart disease, mental health, diabetes, stroke, healthcare associated infections. Overall aim of developing MKNs is to ensure that knowledge is managed effectively across boundaries of discipline, organisation and sector to support patient care and delivery of health services |
| Evidence-Based Occupational Therapy Web-portal http://www.otevidence.info/ | This Evidence-Based Occupational Therapy Web-portal has been designed to provide strategies, knowledge and resources to aid occupational therapists in finding out about and using evidence |
| Health Exec TV www.healthexectv.tv | An online television channel for managers and professionals across the NHS. Using best practice case studies, high profile interviews, expert panel discussions and field reports to examine the latest developments in health-care management |
| The Joanna Briggs Institute (JBI) www.joannabriggs.edu.au | An international collaboration involving nursing, medical and AHP researchers, clinicians, academics and quality managers across 40 countries. The role is to improve the feasibility, appropriateness, meaningfulness and effectiveness of health-care practices and health care outcomes by facilitating international collaboration between collaborating centres, groups, expert researchers and clinicians throughout the world |
| The Model for Improvement Langley, Nolan et al | Tried and tested approach to achieving successful change |
| NHS Quality Improvement Scotland (NHS QIS) www.nhshealthquality.org | The role of NHS QIS is to lead on improving quality of care and treatment delivered by the health service |
| Practice Development Unit http://www.nhshealthquality.org/nhsqis/1977.html | A dedicated unit within NHS QIS works to promote a consistent and cohesive approach to health care by: issuing Best Practice Statements; organising Practice Development programmes; supporting networking for nurses, midwives and allied health professionals; encouraging the sharing of best practice |
| The Practice Development Network for Allied Health Professions | The network is made up of uniprofessional networks. You can access information about the occupational therapy network via the NHS QIS website |
| National Institute for Health and Clinical Excellence (NICE) www.nice.org | The National Institute for Health and Clinical Excellence (NICE) is the independent organisation responsible for providing national guidance on the promotion of good health and the prevention and treatment of ill health |
| National Library for Health http://www.library.nhs.uk | Includes: Evidence based reviews, Bandolier, Cochrane Library etc; Guidance – NICE, care pathways etc; Specialist libraries; Evidence updates; NICE/Published clinical guidelines |
| OT Seeker http://www.otseeker.com/ | OTseeker is a database that contains abstracts of systematic reviews and randomised controlled trials relevant to occupational therapy |
| Social Care Institute for Excellence (SCIE) www.scie.org.uk | SCIE’s aim is to improve the experience of people who use social care by developing and promoting knowledge about good practice in the sector, using knowledge gathered from diverse sources and a broad range of people and organisations |

A common barrier in this lack of engagement is time. And despite many clinical effectiveness initiatives this issue does not seem to be going away; so how do we get around it? Consider a meeting where a senior health board executive told the audience that the key components of effective practice were IDEAS, WILL and EXECUTION.

His advice was to forget the IDEAS, as the organisation ‘is awash with ideas’. What was needed, the audience were informed, was the WILL to change and the EXECUTION of the agreed health board priorities.

Whilst an initial feeling of indignation may appear justified (after all, clinicians and service users surely need to contribute their expertise, knowledge and skill to generate IDEAS too) it may be that he had a point! Perhaps the best way to overcome the barriers listed above is to have the WILL to work in partnership; teaming up people with different skills and the tenacity to EXECUTE the ideas together.

There are some really good examples of how partnerships have worked in this way – senior clinicians working with undergraduates to look at the evidence underpinning certain aspects of their everyday work; new graduates being encouraged to use their research and critical appraisal skills (Table 16.2) from the moment they graduate so that the skills are not lost and their organisation fully uses the strengths of its workforce; managers using their skills in change management to bring on the implementation of effective practice; and in some dynamic practices the teaming up with an often underestimated partner, the service users and carers themselves. When it comes to change management and influencing skills the power of a personal narrative can really change hearts and minds.

Practice development

There is an increasing interest in the concept of practice development which addresses the complex arenas in which practitioners work. It recognises that clinical effectiveness requires additional scope so that practice can be examined and valued even when the same effect is not regularly produced. It has been suggested that practice development is a prerequisite to clinical effectiveness (Manley 2000).

The broad approach that practice development adopts is perhaps closer to what many practitioners feel they are involved in. It has been described as,

‘a continuous process of improvement towards increased effectiveness in person-centred care… brought about by helping health care teams to develop their knowledge and skills and to transform the culture and context of care. It is enabled and supported by facilitators committed to systematic, rigorous continuous processes of emancipatory change that reflect the perspectives of service users’ (Garbett and McCormack 2002: 88).

Practice development was initially a nursing concept but there is growing interest from other professionals.

Page (2002) describes practice development as

This approach is frequently viewed as both motivating and acceptable by practitioners. It identifies with commonly held values and practices and allows practitioners to feel that they have something to offer in the development of effective practice. Becoming part of a practice development network enables practitioners to meet with others working in the same field to discuss approaches, identify underpinning research which may be influencing ways of working and even begin to develop some rigorous multisited research projects. McCormack et al (2004) is an excellent resource for practitioners interested in developing their understanding of practice development.

Resources

Conclusions

This chapter has provided an introduction and overview of clinical effectiveness in practice. The references and resources provided in this chapter are an opportunity to examine your own practice and recognise areas where you are already developing effective practice and others where more can still be achieved. However, these resources are merely tools and in themselves cannot make the necessary changes. At the end of the day the key to clinically effective practice is you.

Acknowledgements

Thanks to Fiona Duncan, Sue Young and Michael Sykes for allowing me to use the case study highlighted in Vignette 16.1.

Adams C. Clinical effectiveness: Part two. Finding the best evidence. Community Practitioner. 1999;72(7):205-207.

Adams C. Clinical effectiveness: Part three. Interpreting your evidence. Community Practitioner. 1999;72(9):289-292.

Armstrong D, Jones R, Reyburn H. A study of general practitioners’ reasons for changing their prescribing behaviour. British Medical Journal. 1996;312:949-952.

Chartered Society of Physiotherapists. Clinical Effectiveness. http://www.csp.org.uk/director/effectivepractice/clinicaleffectiveness.cfm. Accessed on 3 November 2007.

College of Occupational Therapists. Position Statement on Clinical Governance. London: College of Occupational Therapists, 1999.

College of Occupational Therapists. Occupational therapy record keeping core standard. Standards for practice (SP002). London: College of Occupational Therapists, 2000.

College of Occupational Therapists. College of occupational therapists code of ethics and professional conduct. London: College of Occupational Therapists, 2005.

Crombie IK. The pocket guide to critical appraisal. London: BMJ Books, 1996.

Department of Health. The Shipman inquiry Safeguarding Patients: Lessons from the Past – Proposals for the Future. London: The Stationery Office, 2002.

Department of Health. Learning from Bristol: the Department of Health’s Response to the Report of the Public Inquiry into Children’s Heart Surgery at the Bristol Royal Infirmary 1984–1995. London: The Stationery Office, 2002. Command Paper CM 5363

Department of Health. The Shipman Inquiry Fifth Report Safeguarding Patients: Lessons from the Past – Proposals for the Future, Command paper. London: The Stationery Office, 2004.

Department of Health. Records Management: NHS Code of Practice. 2006:5. Part 1, section 2. 14

Department of Health and Social Services in Northern Ireland. Fit for the Future – a new approach. London: The Stationery Office.

Doumit G, Gattellari M, Grimshaw J, et al. Local opinion leaders: effects on professional practice and health care outcomes. Cochrane Database of Systematic Reviews. 2006; Issue 3. Art. No.: CD000125. DOI: 10.1002/14651858.CD000125.pub3.

Garbett R, McCormack B. A concept analysis of practice development. Journal of Research in Nursing. 2002;7(2):87-100.

Gibson F, Sykes M, Young S. Record Keeping in Occupational Therapy: Are we meeting the standards set by the College of Occupational Therapists? British Journal of Occupational Therapy. 2004;67(12):547-550.

Glanville J, Haines M, Auston I. Getting research findings into practice. Finding information on clinical effectiveness. British Medical Journal. 1998;317:200-203.

Grol R. Beliefs and Evidence in Changing Clinical Practice. British Medical Journal. 1997;315:418-421.

Haynes B, Haines A. Getting research findings into practice. Barriers and bridges to evidence based clinical practice. British Medical Journal. 1998;317:273-276.

Health Professions Council. Standards of Proficiency for Occupational Therapy. London: Health Professions Council, 2005.

Holdsworth L, Blair A, Miller J. The Scottish physiotherapy clinical effectiveness network: Supporting clinical effectiveness activity? Clinical Governance: An International Journal. 2005;10(2):148-164.

Langley GJ, Nolan KM, Norman CL, et al. The Improvement Guide: A Practical Approach to Enhancing Organisational Performance. San Francisco, CA: Jossey-Bass, 1992.

Lomas J, Enkin M, Anderson G. Opinion leaders versus audit and feedback to implement practice guidelines. Journal of the American Medical Association. 1991;266(9):1217.

Laming Lord. The Victoria Climbie Inquiry – Report of an inquiry. London: HMSO, 2003.

Manley K. Operationalising an advanced practice/consultant nurse role: an action research study. Journal of Clinical Nursing. 1997;6:179-190.

Manley K. Organisational culture and consultant nurse outcomes: part 1. Organisational culture. Nursing Standard. 2000;14(36):34-38.

McClarey M, Duff L. Clinical effectiveness and evidence-based practice. Nursing Standard. 1997;11(51):31-35.

McCormack B, Manley K, Garbett R. Practice Development in Nursing. Oxford, UK: Blackwell Publishing Ltd, 2004.

Newman M, Roberts T. Critical Appraisal 1: is the quality of the study good enough for you to use the findings? In: Craig JV, Smyth RL, editors. The Evidence-based Practice Manual for Nurses. Edinburgh, UK: Churchill Livingstone, 2002.

Nolan M, Morgan L, Curran M, et al. Evidence-based care: can we overcome the barriers. British Journal of Nursing. 1998;7(20):1273-1278.

NHS Executive. Promoting Clinical Effectiveness – A framework for action in and through the NHS. London: Department of Health, 1996.

NHS Executive. A first class service; Quality in the new NHS. London: HMSO, 1998.

NHS Executive. NHS Plan. London: HMSO, 2000.

NHS Scotland. Educational Resource: Clinical Governance. 2007. http://www.clinicalgovernance.scot.nhs.uk/section2/introduction.asp. Accessed on 3 November 2007.

NHS Wales. Putting Patients First. London: The Stationery Office, 1998.

Page S. The role of practice development in modernising the NHS. Nursing Times. 2002;98(11):34-36.

Scottish Executive. Designed to Care – Renewing the National Health Service in Scotland. Edinburgh, UK: Scottish Executive Health Department, 1998.

Scottish Executive. Goals for Clinical Effectiveness MEL. Edinburgh, UK: Scottish Executive Health Department, 1999.

Scottish Executive. Building a Service Fit for the Future – A national framework for service change in the NHS Scotland. Edinburgh, UK: Scottish Executive Health Department, 2005.

Scottish Intercollegiate Guidelines Network. SIGN 50: A guideline developers’ handbook. 2001. http://www.sign.ac.uk/guidelines/fulltext/50/index.html. Accessed on 3 November 2007.

Scottish Intercollegiate Guidelines Network. SIGN 50: A guideline developers’ handbook. Edinburgh, UK: SIGN Executive, 2008.

Wenger E, McDermott R, Snyder W. Cultivating Communities of Practice: A guide to managing knowledge. Boston: Harvard Business School Press, 2002.

West BJM, Wimpenny P, Duff L, et al. An educational initiative to promote the use of clinical guidelines in nursing practice. Aberdeen, UK: The Centre for Nurse Practice Research and Development, 2001.