7 Creating a learning environment

Approaches to participation: expansive approach Fuller and Unwin describe a continuum of approaches to participation. At one end of the spectrum is an expansive approach. This approach has the following characteristics: departments offer their learners broad access to multiple communities of practice and stimulate boundary crossing; learners receive enough time for reflection and off-the-job time; departments aim to help learners become rounded experts and strive to align the development of learners’ and organisational capabilities; a named individual is present to support learners; the status of learner is explicitly recognised.

Approaches to participation: restrictive approach A restrictive approach represents the other end of the continuum from an expansive one. This approach has the following characteristics: departments offer their learners narrow access to learning in terms of tasks, knowledge, and different locations; there is limited boundary crossing; learners have practically no opportunities to reflect; departments aim to help learners become partial experts, tailored to organisational needs. Ad hoc support is offered to learners and there is ambivalent recognition of their status as learners.

Dundee Ready Educational Environment Measure (DREEM) An instrument widely used to measure undergraduate learning environments.

Dutch Residency Educational Climate Test (D-RECT) An instrument to measure the postgraduate learning climate.

Herzberg’s motivation-hygiene theory Herzberg developed his motivation-hygiene theory in 1959 according to which ‘hygiene factors’ (like working conditions or physical surroundings) prevent dissatisfaction, while ‘motivation factors’ (like recognition of achievement or the work itself) induce satisfaction. Dissatisfaction and satisfaction are, thus, not two ends of a continuum; they are separate entities. His work implies that some interventions will reduce dissatisfaction, but not necessarily generate satisfaction.

Outline

Imagine that you are a medical student on your first day in hospital. You are feeling excited but also anxious. You are keen to get started but, when you arrive on the ward, you find it busier than you ever imagined. Your consultant does not expect you (in fact, he is not even on the ward yet), there is nowhere safe for you to put your bag, and the nurses tell you to stop getting in the way. You are put in an office and asked to wait until the consultant finishes the ward round. How would that influence your subsequent learning experience?

This is an example of a learning environment that is poorly organised at a number of levels, but probably all too familiar to many of our students. It shows how such an environment can have a negative impact on the learners within it. To be able to learn effectively, students need to be welcomed and supported. They need to feel that they belong to their place of learning and are not simply ‘spare parts’ getting in the way of other people. Learning environments are increasingly identified as having an influence on those within them – just as ‘good’ or ‘bad’ teachers can affect learners’ experiences, so too can learning environments. This chapter explores learning environments in undergraduate and postgraduate medical education. It describes why they are important and what factors combine to make up one. It then explores some of those factors in detail before considering how learning environments can be measured. Towards the end of the chapter, we emphasise how important it is for organisations to invest in optimal learning environments, and offer some suggestions as to how organisations may optimise them. By aiming to develop learning environments that are positive, welcoming, and supportive to learners, institutions, organisations, and departments may avoid replicating the situation described above.

Why are we interested in learning environments?

From as early as primary school, learning environments have been identified as an important influence on the learners within them (DfES, 2006). Just as children’s environments are vital to their development, so too are the environments in which our students and junior doctors learn. By gaining a better understanding of them and the particular influence they may exert upon students, it might be possible to alter them to improve learning experiences. Equally, by looking at the outcomes of individual environments, it may be possible to identify relationships between outcomes and the environments that produced them. If such a relationship can be shown, then it is reasonable to postulate that, by adjusting one, we may influence the other.

Many medical schools, specialty boards, and governments expect, and increasingly inspect, the quality of their medical education. Policy makers want insight into the educational functioning of medical schools and teaching hospitals. Using those insights, institutions become aware of their strengths and weaknesses and are able to do something about them. Often, quality improvement efforts are doomed to fail because it is an arduous task to change daily routines. Evaluation of the current situation, however, is an important first step on the way towards better medical education. Of course, institutions should see to it that succeeding steps follow; they should make plans to address the shortcomings identified, actually make the improvements, and evaluate again to see whether the situation has really improved. That is, in fact, a description of the famous Plan-Do-Check-Act cycle, which Deming designed in the 1950s to promote quality improvements (Tague, 2004, pp 390–392). So, in conclusion, learning environments are important because they influence the learners working within them (and vice versa) and because measuring their climates shows how institutions are functioning educationally.

What makes a learning environment?

Meanings of the terms ‘learning’ and ‘environment’

There are many published descriptions of the importance of learning environments (often interchangeably referred to as educational climates, educational environments, or learning climates) within the curricula of institutions but fewer publications explore what makes up a learning environment. The word ‘environment’ can mean physical space (with synonyms like surroundings, terrain, settings) and it can also mean atmosphere or ambiance. The term learning environments has several connotations, which include physical space and settings, teacher–learner relationships, and feelings. A big criticism of the term, however, is its all-embracing nature. People use it to describe purely material facilities such as the number of available computers or the architecture of a building or – at completely the opposite end of the spectrum – everything that happens on a campus or in a department (Genn, 2001; Hutchinson, 2003). For the purpose of this chapter, we must therefore be explicit about what we mean by learning environments.

First, it is important to dwell on our understanding of learning. As discussed in Chapters 2 and 12, Sfard describes two major outlooks on learning using two metaphors (Sfard, 1998). The first, traditional, metaphor is ‘acquisition’, which implies acquiring and possessing knowledge. The last few decades have seen a new metaphor of learning grow apace in medical education: the ‘participation’ metaphor. This term refers to learning by doing and becoming part of a greater whole. In this chapter, we have deliberately chosen not to use one metaphor because we agree with Sfard that both metaphors have value in understanding learning.

Formal and informal environments

Roughly, two types of environment exist within medical education. The first environment exists within universities and is predominantly designed for learning. Students attend classes, learn in small and large group formats, and are regularly assessed on their competence. The first and foremost aim of the students is to learn. The second environment is in (teaching) hospitals and community health facilities, where the focus is on working. Medical students in the clinical phase of their training and residents learn their craft through participating in daily practice. Their predominant aim is to work. Although most learners acknowledge the importance of learning by doing, there is always a tension between service delivery and education. Formal learning environments may have different characteristics from informal ones, but in our opinion non-clinical and clinical environments show many similarities when it comes to considering them as learning environments. In this chapter, therefore, we describe several general aspects that are equally applicable to both environments. In the section that considers how to measure learning environments, we list measures that are applicable to undergraduate and postgraduate environments.

Four aspects of learning environments

As was explained earlier, you can consider several aspects of learning environments. First, their material aspects: What kind of facilities exist to support learners? What aspects should be addressed in order to improve them? What are the organisational arrangements to facilitate learning? Second, the social world is an important facet of learning environments. During the last decade, interest in socio-cultural perspectives on learning has grown apace, as discussed in Chapter 2. Those understandings draw heavily on the work of Vygotsky, who stated that all learning is social (Vygotski, 1962). For example, Vygotsky described children pointing their fingers. First, this is an accidental movement, but when they see the reaction of others, they start using it purposefully. Vygotsky’s work led on to Lave and Wenger’s conceptualisations of situated learning (Lave and Wenger, 1991; Wenger, 1998). Their theory stressed (even more) the importance of local contexts (i.e. learning environments). They stated that all learning is situated and that knowledge is intimately connected to participation in activities. The importance of ‘experience-based learning’ has been replicated in recent studies among medical students (Dornan et al, 2007) and residents (Teunissen et al, 2007). People learn from other human beings and together construct new understandings of the world. Third, it is important to look at learning environments’ influence on learners’ behaviour, emotions, and practical competences. As learners grow and develop in social worlds, learning environments have an impact on the intra-psychological ‘worlds’ of individual learners, which are evident in their behaviour, emotions, and practical competences, such as skills, knowing how to learn, and applied knowledge. Fourth, you can get an indication of the educational functioning of departments or medical schools through measuring learning environments. These four aspects will be described in further detail below.

Learning environments at different organisational levels

Finally, learning environments exist at a number of different organisational levels ranging from large national institutions to small groups of students in a single department. Each of those levels is subject to different pressures and influences and able to exert different degrees of influence on other organisational levels. Learning environments may encompass variable numbers of people – from single learners to entire universities or hospitals. They come in all shapes and sizes. Whilst general impressions or overviews of learning environments exist, individual students will experience their own unique personal versions of them. This presents a challenge for those who seek to measure and alter learning environments, as any one institution or department may be made up of multiple smaller learning environments that combine to make the whole.

Organisational levels

Material facilities

Learning environments are shaped by organisational arrangements that facilitate or impede learners’ participation, and learners’ perceptions of the quality of those arrangements may not accord with faculty’s perceptions of them. When teaching sessions are arranged off the ward at a very busy time, for example, students may be pulled away from valuable ‘informal’ activities to attend ‘formal’ activities that they perceive (rightly or wrongly) to be less valuable. Papers referring to learning environments and how they should be improved often suggest focusing on material aspects; for instance, the need to buy more computers. This section describes the importance of material contexts.

The influence of material context has been studied by researchers interested in satisfaction and motivation. Two psychologists, Maslow and Herzberg, have been highly influential in this regard. In 1943, Maslow described a hierarchy of needs for reaching ‘self-actualisation’ (Maslow, 1943; see also Chapter 9) and the need for each level to be fulfilled before it is possible to progress to the next level. The first level consists of ‘basic physiological needs’ such as getting enough food, sleep, and shelter. After those needs are fulfilled, one can move up to the next level and attain ‘feelings of security and justice’. Only if these needs are satisfied can one attain ‘belongingness needs’, such as having a partner, a family, and good relationships with friends or peers. Having fulfilled these needs, a person ‘strives for achievement’ and a reasonable amount of status. And only after fulfilment of those last needs does one reach ‘self-actualisation’. This influential theory is used in many management courses. Few studies have, however, confirmed the strict hierarchy as originally proposed; for example, people can be motivated without basic needs being met.

Herzberg proposed his motivation-hygiene theory in 1959 (Herzberg, 1968; Herzberg et al, 1959). He claimed that job satisfaction is the consequence of motivation factors, whereas job dissatisfaction is the consequence of hygiene factors. According to his theory, job satisfaction and job dissatisfaction are not two ends of a continuum – they are separate entities. Hygiene factors are, for instance, salary, working conditions, and physical environment. If those factors are improved, they have no structural influence on job satisfaction but they prevent dissatisfaction. Motivation factors are, for instance, achievement, recognition of achievement, the work itself, and responsibility. They lead to increased job satisfaction. This theory implies that many interventions aimed at improving job satisfaction (like salary rises or improvements in physical surroundings) serve only to reduce job dissatisfaction. To improve job satisfaction, Herzberg proposes enriching jobs by offering more responsibility, accountability, and acknowledgement of work delivered. The theory is based on data collected using the critical incident technique, where people are asked to recount vivid examples of situations under study. A diverse group of people – engineers, accountants, hospital maintenance personnel, nurses, and others – contributed. The distinction between job dissatisfaction and job satisfaction has been criticised and may be a result of the research technique used, although other studies have seemed to confirm the distinction. Also, many authors criticise the assumption that more satisfied workers get more work done, which is prevalent in the work of both Maslow and Herzberg.

The work of those two researchers sheds light on material components of educational environments. It is clear that the physiological needs of learners have to be fulfilled for a learning environment to stand any chance of being optimal. If medical students have not slept for days or are feeling hungry or cold, they will be less inclined to learn. Also, so-called hygiene factors are important to prevent dissatisfaction with the material context of learning environments. Learning environments can be enhanced by good facilities, although they are not a sine qua non. Institutions aiming to improve their learning environments often begin by investing in physical surroundings (relatively easy to change, ‘just’ costing time and money), while they fail to make long-lasting investments in the organisational and social context (which are much harder to change). Those relatively superficial changes may not lead to an improved perception of the learning environments (think back to Herzberg’s hygiene factors).

To sum up, the material context is an important determinant of learning environments, which influences (at least) motivation. Departments should support learners’ basic needs and offer amenities to facilitate learning. Efforts to improve learning environments should not, however, be restricted to investing in material aspects alone and should include attention to participation and interaction as well.

Organisational priority for education

Organisations exert a strong influence on the learning environments within them. An organisation that values teachers and teaching activities highly will provide its students with learning environments that reflect those values and will differ from other organisations where teaching is valued less. If learners see teachers being rewarded – for example by promotion – this may encourage them to strive to become good teachers themselves. Equally, showing that poor-quality teaching or unprofessional behaviour on the part of teachers is not tolerated is a positive feature of learning environments. Still, academic promotion is often awarded to researchers rather than teachers (Chandran et al, 2009). That is partly because educators’ contributions are not so visible (Simpson et al, 2007), which has led to the development of analytic tools to help evaluators see educators’ achievements (Chandran et al, 2009; Simpson et al, 2007).

As stated earlier, learning environments exist at different organisational levels; for example at institutional and departmental levels, each of which is subject to different internal and external influences and potential conflict between them. The pressures on a medical school dean will be very different from those on first year medical students and the learning environments that surround them will reflect this. It is also true that the influence exerted by people in one learning environment on the development of others will be affected by their different hierarchical levels. Medical students will be able to shape their own learning environments and exert influence on their peers but will struggle to make major changes at an institutional level. Deans can influence learning environments around and downstream from them, but will find it harder to influence learning environments at a national level. Students themselves clearly have a role to play in this ‘regulation’ of learning environments. By asking them to complete a learning environment measurement tool and become more discerning about positive and negative elements of their learning environments, they will be better placed to shape their own micro-learning environments, whilst also having a greater impact on the holistic learning environments within their institution.

Social processes

Participation

Lave and Wenger firmly put participation on the map by writing their landmark book, Situated learning: legitimate peripheral participation (Lave and Wenger, 1991). They described five case studies among midwives, Vai Gola tailors, quartermasters, butchers, and reformed alcoholics, showing how newcomers gradually became involved in daily practice and learned their craft ‘just’ through situated participation. Their work, also reviewed in Chapters 2 and 12, has had a strong influence on conceptions of learning today. Regarding learning environments, Fuller and Unwin (2003) built on Lave and Wenger’s work to propose a continuum of approaches adopted by institutions offering apprenticeships in the United Kingdom. At one end of their continuum lies an expansive approach, which includes broad access to activities (boundary crossing), explicit acknowledgement of students’ status as learners, and the aim of becoming a full participant. At the other end lies a restrictive approach, with access to a more limited range of activities, a greater focus on the benefits of work to the institution than on students’ learning needs, and lack of explicit recognition of students’ status as learners (see Table 7.1 for a complete overview). Both approaches imply learning through participation. The expansive approach, however, offers a richer learning environment that is more focused on learners’ growth than the restrictive approach. Recent work in the undergraduate medical setting by Dornan and colleagues showed the importance of supported participation, which resembles the expansive approach above (Dornan et al, 2007).

Table 7.1 Characteristics of approaches to participation

| Expansive approach | Restrictive approach |
|---|---|
| Participation in multiple communities of practice in and outside the workplace | Restricted participation in multiple communities of practice |
| Primary community of practice has shared ‘participatory memory’: cultural inheritance of apprenticeship | Primary community of practice has little or no ‘participatory memory’: little or no tradition of apprenticeship |
| Breadth: access to learning fostered by cross-company experiences built into the programme | Narrowness: access to learning restricted in terms of tasks/knowledge/location |
| Access to range of qualifications including knowledge-based vocational qualifications | Access to competence-based qualification only |
| Planned time off the job including attending college and for reflection | Virtually all on job: limited opportunities for reflection |
| Gradual transition to full participation | Fast transition |
| Apprenticeship aim: rounded expert – full participant | Apprenticeship aim: partial expert – full participant |
| Post-apprenticeship vision: progression of career | Post-apprenticeship vision: static |
| Explicit institutional recognition of, and support for, apprentice’s status as learner | Ambivalent institutional recognition of, and support for, apprentice’s status as learner |
| Named individual acting as dedicated support to apprentices | No dedicated individual; ad hoc support |
| Apprenticeship used as a vehicle for aligning the development of individual and organisational capability | Apprenticeship used to tailor individual capability to organisational need |
| Apprenticeship design fosters opportunities to extend identity through boundary crossing | Apprenticeship design limits opportunities to extend identity: little boundary crossing |
| Reification of apprenticeship highly developed (e.g. through documents, symbols, language, tools) and accessible to apprentices | Limited reification of apprenticeship, patchy access to reificatory aspects of practice |

After Fuller and Unwin (2003).

In summary, the availability of diverse opportunities for participation is an important property of learning environments. Newcomers should be able to move from the periphery to a more central position in a community of practice and, eventually, become full participants. All activities should be oriented towards reaching this status. For example, a resident in specialist training should be involved in the whole diversity of patient care and, in addition, the managerial and supervisory tasks of a specialist.

Teacher–learner relationships

Within learning environments, innumerable interactions take place every day. Interactions in medical education are between teachers and learners, teachers and patients, and learners and patients. Those interactions are complex and multi-faceted, and can contribute either positively or negatively to learners’ experiences of their learning environments. Relationships between learners and teachers are a particularly influential aspect of any learning environment. The importance of those relationships was acknowledged by Hippocrates, who began his famous oath with the statement that one should “…hold him who has taught me this art as equal to my parents…” and, later on, one should “…give a share of precepts and oral instruction and all the other learning to my sons and to the sons of him who has instructed me and to pupils who have signed the covenant and have taken the oath according to medical law, but to no one else” (North, 2002). Those statements show that learning and teaching have been central to the medical profession since at least ancient Greek times, and they remain a powerful influence on learning to this day.

Tiberius et al (2002) describe changes in our understanding of teacher–learner relationships over the last half century. First, there was acceptance of the notion that teachers could be trained; rather than believing that ‘good teachers are born, not made’, people started to believe that didactic skills were trainable. In the 1960s and 1970s, objectivist models using metaphors like transfer and malleability became popular views of teaching and learning. Teachers possessed knowledge, which they could transfer to learners as if they were vessels waiting to be filled. Moreover, learners were seen as raw material that teachers could mould into any preferred form or shape. If learners did not learn enough, flaws in the material were to blame (‘a leak in the vessel’). Those metaphors show similarities to the ‘acquisition metaphor’ described earlier in this chapter and in Chapter 2. At the end of the 1970s and during the 1980s, interactionist and constructivist metaphors like growth and conversation came increasingly to the fore. According to this line of thought, the social context is pivotal to learning. Learners construct meaning through interaction with others in social settings. Teachers should arrange learning material in such a way that it engages learners’ interest and helps them connect it to their earlier experiences. Teachers must interact with learners to find out how to incite their interest and, so, induce growth. The final understandings of teaching and learning Tiberius et al describe are relational models using the inclusion and transformation metaphors. Like good patient–doctor relationships, good teacher–learner relationships are the vehicle of learning. The difference between these last two understandings is the focus on individual relationships between learners and teachers in the latter and the roles of groups and community in the former. Both, however, have similarities to the earlier described participation metaphor.

Teaching as a feature of learning environments

Research on teachers predominantly focuses on clinical as opposed to science teaching. There have been many studies describing the roles of ideal clinical teachers. Ullian et al (1994) published a categorisation of four roles: the supervisor; physician; teacher; and person. A recent qualitative study among obstetric-gynaecologic residents showed the importance of the person role; almost half of all remarks related to this role. Apparently, residents value direct and personal interaction (Boor et al, 2008b).

Lyon’s (2003, 2004) research on interactions between teachers and learners in the operating room (OR) has been very informative. She performed an extensive qualitative study including in-depth interviews, periods of observation in the OR, and student surveys. One important finding was that students had “…to negotiate the social relations of work, to find a legitimate role to play in order to participate in the ‘community of practice’, constituted by the operating theatre and its personnel.” In further exploring this theme, she found that trust, legitimacy, involvement, and participation were factors facilitating positive learning experiences. In addition, she described the process of ‘sizing up’, where learners and surgeons continuously re-evaluated one another. Students sought out student-friendly surgeons, and procedures that offered possibilities for them to participate. Moreover, they presented themselves as pro-active, motivated students who deserved attention and teaching. Surgeons, for their part, observed students’ motivation, interest, and professional conduct and then decided how to distribute valuable teaching time and participation opportunities (Lyon, 2003, 2004). Other authors have also stressed the importance of students trying to show ‘good’ behaviour in order to be rewarded with more opportunities to participate (Boor et al, 2008a; Sheehan et al, 2005).

It is important to realise that not only do learning environments influence learners but also learners influence learning environments. Moran and Volkwein (1992) have given a historical overview of understandings of organisational climates over the last few decades. First, organisational climates were seen to exist apart from the participants working in them. Then, organisational climates were seen as products of participants’ perceptions of the climates. Third came an interactive view, where participants and organisations together formed emerging organisational climates. We favour this last view, which has also been replicated in qualitative studies among medical students (Boor et al, 2008a) and residents (Boor, 2009). A learning environment changes through its ‘inhabitants’.

How teachers interact with their patients is an important influence on learning environments, which may have a direct impact on how learners then treat patients. By acting as role models (either implicitly or explicitly), teachers impart information to students about how they themselves should treat patients. Bandura has performed groundbreaking research on modelling. In his studies of aggressive behaviour, he showed how people could impart their behaviour to young children observing them (Bandura et al, 1961). This research from the 1960s has had a major impact on discussions about aggressive television shows and computer games as well as aggressive behaviour within families (for instance, children who witness abuse within their family have higher chances of becoming abusive themselves). Often, unintended behaviour is imitated; think of smoking or swearing. That has implications for teacher–learner relationships in medical education; teachers have to be aware of their position as role models all the time. They can say that it is important to take time to listen to patients but if they do not show that behaviour, students will copy their actual behaviour rather than follow their directions. In addition, Bandura suggested four steps in learning through modelling:

1. Attention: learners must notice the behaviour being modelled.
2. Retention: they must remember what they have observed.
3. Reproduction: they must be able to perform the behaviour themselves.
4. Motivation: they must have a reason to adopt the behaviour.

This work has clear implications for learning environments. Teachers and learners must be aware of the central role their interactions (not only with each other but also with patients and colleagues) play in the development of medical learning environments. If teachers want to serve as role models, they must be constantly aware of their behaviour and give learners opportunities to learn the behaviour by facilitating the above-described four steps. In turn, learners need to be aware of their own behaviour, as well as that of their teachers, and be pro-active in recognising those who may act as positive (and negative) role models. Ultimately, these learners will become teachers, and it is therefore important that they develop the attributes of positive role models.

Behaviour, emotions, and practical competences

Learning environments influence learners’ behaviour and emotional well-being (Seabrook, 2004). Recent research that included in-depth exploration of undergraduate medical students’ and staff’s narratives suggests that learning environments have an important emotional element. Many students described learning environments using emotional language such as ‘feel’ and ‘safe’, and staff felt that it was important to make students ‘feel welcome’ (Isba, 2009). It is possible, therefore, that emotion represents a common final pathway between other elements. That is unlikely to be a static relationship, and the learning environment may then ‘feed back’. It appears that emotional aspects of learning environments are more prominent in the perceptions of students than was previously thought, and this fits with work emerging from other areas of medical education research on the value of emotions in learning (Isba, 2009). Observations relating to the role played by emotions in learning environments have huge implications for those responsible for delivering medical education, and may mean that a radical re-think of the ‘How, why, and where’ of teaching is on the horizon.

Another qualitative study amongst medical students clearly showed how learning environments affect learners’ behaviour, emotions, and practical competences (Boor et al, 2008a). Students described, for instance, how they lost their motivation and enthusiasm in a department with a restrictive approach towards participation (Boor et al, 2008a). They felt discouraged from taking any initiatives. In addition, they described how they lacked practical skills (in this case obstetric and gynaecologic abilities). Another study showed how ‘participation was influenced by, and influenced, respondents’ emotions, which reached high peaks and low troughs’: another example of learning environments’ influencing students’ emotions (Dornan et al, 2007). Organisational psychology also suggests a relation between environments and intra-psychological changes. Research shows a slight relationship between organisational climate and job performance (DeCotiis and Summers, 1987; Pritchard and Karasick, 1973), as well as a manifest influence on motivation (DeCotiis and Summers, 1987) and job satisfaction (DeCotiis and Summers, 1987; Pritchard and Karasick, 1973). It may, however, be difficult to prove a relationship between organisational culture and performance, since the former is such a multi-faceted construct (Scott et al, 2003).

Measuring medical learning environments

It is important to those involved in the delivery of undergraduate and postgraduate medical education to be able to quantify learning environments within their institutions for a number of reasons. By measuring them, it may be possible to identify strengths, weaknesses, and priority areas for improvement or resource allocation. Measuring learning environments can therefore act as a powerful curriculum evaluation and development tool, collecting data from those on the receiving end of a curriculum. By asking learners what they think about their learning environment, you are indicating that you value their opinion, and that is an important part of learner–institution interactions. An additional benefit of measuring learning environments is that their quantification allows comparison with other institutes. In addition, as stated in the introduction, measuring learning environments is the beginning of a quality cycle that should then be followed by interventions and new evaluations.

Having said earlier that learning environments are many things to many different people, and operate at so many different levels, is it even possible to measure them? Research in the area of learning environments has, like many areas of medical education research, benefited from the duality of ‘mixed methods’. By using qualitative approaches such as focus groups alongside quantitative methods, it has been possible to get a more detailed impression of learning environments than would have been possible from a single methodological approach alone (Boor, 2009; Isba, 2009). For the purpose of this chapter, however, we focus on quantitative instruments currently available for measuring learning environments. When considering an instrument, it is vital that it not only measures what it sets out to measure, but does so in a valid and reliable way. The American Psychological and Education Research Associations have published standards identifying five sources to support validity (American Education Research Association and American Psychological Association, 1999), summarised in Table 7.2. Reliability coefficients are used to estimate measurement error and are a means of quantifying an instrument’s measurement consistency. The most commonly used measures of reliability are Cronbach’s α (calculated from a single administration of an instrument, and indicating internal consistency), the Kappa statistic (a chance-corrected agreement coefficient that indicates inter-rater reliability), ANOVA (also indicating inter-rater reliability), and generalisability theory (an estimate of the concurrent effects of multiple sources on reliability).
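To make the idea of a reliability coefficient concrete, the following is a minimal sketch of how Cronbach’s α can be computed from the variances of the individual items and of respondents’ total scores. The function name and the example responses are hypothetical and are not taken from any of the studies cited in this chapter.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Estimate Cronbach's alpha for a set of questionnaire responses.

    `responses` is a list of respondents, each a list of k item scores
    (hypothetical data layout chosen for this sketch).
    """
    n_items = len(responses[0])
    if n_items < 2 or len(responses) < 2:
        raise ValueError("need at least two items and two respondents")
    if any(len(row) != n_items for row in responses):
        raise ValueError("every respondent must answer every item")

    # Variance of each item across respondents (columns of the table).
    item_columns = zip(*responses)
    sum_item_variances = sum(variance(column) for column in item_columns)

    # Variance of each respondent's total score.
    total_variance = variance([sum(row) for row in responses])
    if total_variance == 0:
        raise ValueError("total scores do not vary; alpha is undefined")

    return n_items / (n_items - 1) * (1 - sum_item_variances / total_variance)


# Hypothetical scores (0 to 4) from five respondents on four items.
example = [
    [3, 4, 3, 4],
    [2, 2, 1, 2],
    [4, 4, 4, 3],
    [1, 2, 1, 1],
    [3, 3, 2, 3],
]
print(round(cronbach_alpha(example), 2))
```

Values closer to 1 indicate that the items vary together, which is what is meant by internal consistency; the sketch says nothing about validity, which has to be argued from the sources of evidence discussed in this section.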

Table 7.2 Five sources of validity evidence

| Validity evidence source | Definition |
|---|---|
| Content | The relationship between a test’s content and the construct it is intended to measure. Refers to themes and wording of items. Includes experts’ input. Also includes development strategies to ensure appropriate content representation |
| Response process | Analyses of responses, including the strategies and thought processes of individual respondents. Differences in response processes may reveal sources of variance that are irrelevant to the construct being measured. Also includes instrument security, scoring, and reporting of results |
| Internal structure | The degree to which items fit the underlying construct. Most often reported as measures of internal consistency and factor analysis |
| Relation to other variables | The relationship between scores and other variables relevant to the construct being measured. Relationships may be positive (convergent or predictive) or negative (divergent or discriminant) |
| Consequences | Surveys are intended to have some desired effect, but they also have unintended effects. Evaluating such consequences can support or challenge the validity of score interpretations |

After Beckman et al (2005).

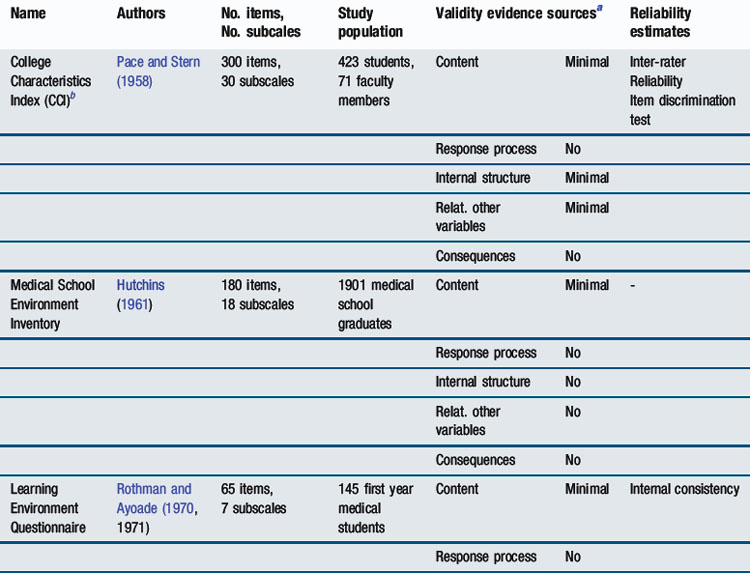

A number of instruments have been developed to quantify learning environments from the perspective of those learning within them – be they students, residents, or specialists. Each of those instruments has had its own set of strengths and weaknesses, both with regard to their design and also reliability and validity. A summary of survey instruments that have been developed to measure learning environments (all but one in medical education) over the last half century appears in Table 7.3.

Whilst some of the measurement instruments that appear in Table 7.3 are no longer widely used, some remain in extensive use across the world, despite not having the most robust psychometric qualities. Arguably, the most widely used instrument for measuring undergraduate medical learning environments at the current time is the Dundee Ready Educational Environment Measure (DREEM). DREEM has spawned multiple other questionnaires like PHEEM (Roff et al, 2005), ATEEM (Holt and Roff, 2004), and STEEM (Cassar, 2004) (see Table 7.3) mostly developed by researchers working in Dundee, Scotland, United Kingdom. DREEM will now be explored in detail as an example of a tool to measure learning environments. There are, in addition, a number of newer learning environment measurement tools, which have set out to include rigorous testing of reliability and validity as part of their development; one will be described in more detail.

Undergraduate learning environments: DREEM

Undergraduate medical education takes place before qualifying as a doctor under the auspices of a medical school within a parent university. DREEM was developed in 1997 by a team of medical education researchers based in Dundee, Scotland, along with more than 80 collaborators around the world (Roff et al, 1997). Since then, it has been widely translated and remains the cornerstone of quantitative learning environment research in undergraduate medical education. It was developed as a generic tool for measuring learning environments in any country and was reported to be ‘culture-free’ and therefore transferable between any culture or curricular style. It uses a five-point Likert scale format, with 50 items each being assigned a score between 0 and 4. Combining those scores gives a total score out of a possible 200. The items can also be subgrouped into five headings – perceptions of learning, perceptions of teachers, academic self-perceptions, perceptions of atmosphere, and social self-perception – each containing a different number of items. However, recent work has shown these subscales are not reproducible across study cohorts (Isba, 2009).
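The scoring arithmetic described above can be sketched as follows. This is an illustrative sketch only: the function name is hypothetical, and the optional `reverse_items` argument rests on the assumption that negatively worded items are reverse-scored before summing; the text above does not specify which items, if any, are treated in that way.

```python
def dreem_total(item_scores, reverse_items=()):
    """Sum 50 DREEM item scores (each 0 to 4) into a total out of 200.

    `reverse_items` holds 1-based item numbers to reverse-score; which
    items belong there is an assumption left to the caller.
    """
    if len(item_scores) != 50:
        raise ValueError("DREEM has 50 items")

    total = 0
    for number, score in enumerate(item_scores, start=1):
        if score not in range(5):
            raise ValueError(f"item {number}: score must be 0, 1, 2, 3 or 4")
        # A reversed item scored 0 contributes 4, and so on.
        total += (4 - score) if number in reverse_items else score
    return total


# A respondent who gives the most favourable answer to every item.
print(dreem_total([4] * 50))
```

Because each item contributes between 0 and 4, the total is bounded by 0 and 200, which is what allows scores to be compared between cohorts and institutions.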

Like any other instrument, DREEM has strengths and weaknesses. Whilst it is relatively easy to understand, there are some ambiguous items. It allows easy comparison with curricular outcomes like exam results, although reports of its reliability have not been uniformly positive. It has the potential to drive curriculum development because it represents student feedback but it may provide only a very broad overview of a learning environment. In sum, there is only minimal (and equivocal) evidence for its validity and reliability (Oliveira Filho et al, 2005).

Postgraduate learning environment: D-RECT

In 2008, the Dutch Residency Educational Climate Test (D-RECT) was developed with the specific aim of overcoming some of the above-mentioned psychometric shortcomings of existing scales (Boor, 2009). First, D-RECT built on earlier qualitative research among residents from various specialties and with different levels of experience. This research showed that an ideal learning climate integrated work and training while attuning to residents’ personal needs. Those themes provided a basis for the preliminary D-RECT (75 items). Two complementary approaches safeguarded the psychometric quality of the instrument: in multiple anonymous rounds, a Delphi panel consisting of 38 experts (residents, specialists, educationalists, policy makers) (dis)approved of items to be included in it; at the same time, 1278 residents from 26 specialties filled out the preliminary questionnaire. Six hundred randomly selected questionnaires were used for an exploratory factor analysis. The outcomes from this combined with input from the Delphi panel led to a 50-item questionnaire, consisting of 11 subscales whose Cronbach’s α coefficients varied from 0.64 to 0.85. The remaining surveys were used for a confirmatory factor analysis to confirm the subscale structure. This analysis showed a good fit. Generalisability analyses showed D-RECT to be reliable when 11 residents participated (although most scales could be interpreted reliably with the input of only eight residents). The 11 subscales cover subjects such as ‘supervision’, ‘coaching and assessment’, ‘teamwork’, and ‘professional relations between consultants’. D-RECT’s aim is formative, which means that departments can get information on the strengths and weaknesses of their clinical learning environments as a basis for improvement efforts. Two sources of validity evidence (content and internal structure) seem well accounted for and D-RECT’s reliability has been carefully established.
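The split of returned questionnaires into one random subset for exploratory factor analysis and a separate remainder for confirmatory factor analysis can be sketched as below. The function name, the seed, and the use of integer identifiers are assumptions made for illustration; the source does not describe how the random selection was actually carried out.

```python
import random


def split_for_factor_analyses(questionnaire_ids, n_exploratory=600, seed=0):
    """Randomly divide questionnaires into two non-overlapping subsets.

    Returns (exploratory, confirmatory). The fixed seed only makes this
    sketch reproducible; it is not part of the published method.
    """
    shuffled = list(questionnaire_ids)
    if n_exploratory > len(shuffled):
        raise ValueError("not enough questionnaires for the exploratory set")
    random.Random(seed).shuffle(shuffled)
    return shuffled[:n_exploratory], shuffled[n_exploratory:]


# 1278 returned questionnaires, identified here by hypothetical numbers.
exploratory, confirmatory = split_for_factor_analyses(range(1278))
print(len(exploratory), len(confirmatory))
```

Keeping the two subsets separate means that the subscale structure suggested by the exploratory analysis is tested on responses that played no part in deriving it.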

Summary

Learning environments are of interest to those with responsibility for delivering medical education at all levels because they influence the ongoing development of medical students and qualified doctors and may have an impact upon curricular or programme outcomes. Learning environments are made up of, and influenced by, many things. Certain aspects of learning environments may be grouped into themes – preconditions, processes, and outcomes. Perhaps the most valuable element of any learning environment, however, remains the people within it – learners, teachers, and patients. People can have profound impacts – either positive or negative – upon learners and play a large part in the process of learning. Other elements of learning environments include physical space, opportunities, resources, and experiences.

Learning environments lend themselves to exploration in a number of different ways, both quantitative and qualitative. Whilst there are a number of quantitative instruments available to measure learning environments in postgraduate and undergraduate medical education, no one tool in current use is supported by the robust evidence and rigorous psychometric testing now expected in medical education research. However, new measurement tools are being developed. By measuring learning environments, it is possible to identify their strengths and weaknesses, which can lead on to improvement efforts. This is of particular importance as it offers institutions the opportunity to target their (often limited) resources on areas that learners have identified as important.

Much research remains to be done, particularly the further development of measurement instruments. Exploratory work also needs to be done to examine relationships between learning environments and learning outcomes. If such relationships exist, there is potential for harnessing learning environments to improve outcomes. Whilst learning environments are complex and hitherto poorly understood, they offer considerable potential for future development in medical education.

Implications for practice

Optimising learning environments

Each aspect of learning environments explored earlier in the chapter can, potentially, be improved. We now consider them in turn, suggesting how it might be possible to improve them to the benefit of the learning environment as a whole. Material aspects are the most easily optimised, though budgetary constraints will determine how close it is possible to get to the ideal. Learning environments need to be conducive to legitimate participation. Learners must feel they ‘belong’ and their learning is supported. Thinking back to the poor learning environment described at the start of this chapter, it is quite easy to see ways in which it could be improved. Learners need to be expected and welcomed, and supported emotionally as well as intellectually. They need to have positive role models and see how the environment encourages professional, ethical behaviour, and good clinical practice.

The evolution of learning environments is shaped by the multiple, complex interactions that occur within them, which opens the way to improving them by optimising teacher–learner relationships. ‘Teach the teacher’ courses and mentoring schemes that allow learners to align themselves with teachers for whom they feel a professional affinity have a clear role to play. Teaching and learning activities offered to learners, including supervision and feedback, may also be optimised. Finally, if it is possible to differentiate one learning environment from another and quantify their different strengths and weaknesses, then it should be possible for positive elements of one learning environment to be emulated by another.

References

American Education Research Association and American Psychological Association. Standards for educational and psychological testing. Washington, DC: American Education Research Association, 1999.

Bandura A., Ross D., Ross S.A. Transmission of aggression through imitation of aggressive models. J Abnorm Soc Psychol. 1961;63:575-582.

Beckman T.J., Cook D.A., Mandrekar J.N. What is the validity evidence for assessments of clinical teaching? J Gen Intern Med. 2005;20(12):1159-1164.

Boor K. The clinical learning climate. Amsterdam: VU Medical Center, 2009.

Boor K., Scheele F., van der Vleuten C.P.M., et al. How undergraduate clinical learning climates differ: a multi-method case study. Med Educ. 2008;42(10):1029-1036.

Boor K., Teunissen P.W., Scherpbier A.J., et al. Residents’ perceptions of the ideal clinical teacher – a qualitative study. Eur J Obstet Gynecol Reprod Biol. 2008;140(2):152-157.

Cassar K. Development of an instrument to measure the surgical operating theatre learning environment as perceived by basic surgical trainees. Med Teach. 2004;26(3):260-264.

Chandran L., Gusic M., Baldwin C., et al. Evaluating the performance of medical educators: a novel analysis tool to demonstrate the quality and impact of educational activities. Acad Med. 2009;84(1):58-66.

DeCotiis T.A., Summers T.P. A path analysis of a model of the antecedents and consequences of organizational commitment. Hum Relat. 1987;40(7):445-470.

DfES. Early years foundation stage consultation document. Nottingham: DfES, 2006.

Dornan T., Boshuizen H., King N., et al. Experience-based learning: a model linking the processes and outcomes of medical students’ workplace learning. Med Educ. 2007;41(1):84-91.

Feletti G.I., Clarke R.M. Construct validity of a learning environment survey for medical schools. Educ Psychol Meas. 1981;41:875-882.

Fuller A., Unwin L. Learning as apprentices in the contemporary UK workplace: creating and managing expansive and restrictive participation. J Educ Work. 2003;16(4):407-426.

Genn J.M. AMEE Medical Education Guide No. 23 (Part 2): curriculum, environment, climate, quality and change in medical education – a unifying perspective. Med Teach. 2001;23(5):445-454.

Herzberg F. One more time: how do you motivate employees? Harv Bus Rev. 1968;46(1):53-62.

Herzberg F., Mausner B., Snyderman B.B. The motivation to work. New York: Wiley, 1959.

Holt M.C., Roff S. Development and validation of the Anaesthetic Theatre Educational Environment Measure (ATEEM). Med Teach. 2004;26(6):553-558.

Hutchins E.B. The 1960 medical school graduate: his perception of his faculty, peers, and environment. J Med Educ. 1961;36:322-329.

Hutchinson L. Educational environment. BMJ. 2003;326(7393):810-812.

Isba R. DREEMs, myths and realities: learning environments within the University of Manchester Medical School. Manchester: University of Manchester, 2009.

Kanashiro J., McAleer S., Roff S. Assessing the educational environment in the operating room – a measure of resident perception at one Canadian institution. Surgery. 2006;139(2):150-158.

Lave J., Wenger E. Situated learning: legitimate peripheral participation. Cambridge: Cambridge University Press, 1991.

Lyon P. A model of teaching and learning in the operating theatre. Med Educ. 2004;38(12):1278-1287.

Lyon P.M. Making the most of learning in the operating theatre: student strategies and curricular initiatives. Med Educ. 2003;37(8):680-688.

Marshall R.E. Measuring the medical school learning environment. J Med Educ. 1978;53(2):98-104.

Maslow A.H. A theory of human motivation. Psychol Rev. 1943;50:370-396.

Moran E.T., Volkwein J.F. The cultural approach to the formation of organizational climate. Hum Relat. 1992;45(1):19-47.

Mulrooney A. Development of an instrument to measure the Practice Vocational Training Environment in Ireland. Med Teach. 2005;27(4):338-342.

Nagraj S., Wall D., Jones E. The development and validation of the mini-surgical theatre educational environment measure. Med Teach. 2007;29(6):e192-197.

North M. Hippocratic oath. Bethesda: National Library of Medicine, 2002.

Oliveira Filho G.R., Vieira J.E., Schonhorst L. Psychometric properties of the Dundee Ready Educational Environment Measure (DREEM) applied to medical residents. Med Teach. 2005;27(4):343-347.

Pace C.R., Stern G.G. An approach to the measurement of the psychological characteristics of college environments. J Educ Psychol. 1958;49(5):269-277.

Pololi L., Price J. Validation and use of an instrument to measure the learning environment as perceived by medical students. Teach Learn Med. 2000;12(4):201-207.

Pritchard R.D., Karasick B.W. The effects of organizational climate on managerial job performance and job satisfaction. Organ Behav Hum Perform. 1973;9:126-146.

Roff S., McAleer S., Harden R.M., et al. Development and validation of the Dundee Ready Education Environment Measure (DREEM). Med Teach. 1997;19:295-299.

Roff S., McAleer S., Skinner A. Development and validation of an instrument to measure the postgraduate clinical learning and teaching educational environment for hospital-based junior doctors in the UK. Med Teach. 2005;27(4):326-331.

Rotem A., Godwin P., Du J. Learning in hospital settings. Teach Learn Med. 1995;7:211-217.

Rothman A.I., Ayoade F. The development of a learning environment: a questionnaire for use in curriculum evaluation. J Med Educ. 1970;45:754-759.

Scott T., Mannion R., Marshall M., et al. Does organisational culture influence health care performance? A review of the evidence. J Health Serv Res Policy. 2003;8(2):105-117.

Seabrook M.A. Clinical students’ initial reports of the educational climate in a single medical school. Med Educ. 2004;38(6):659-669.

Sfard A. On two metaphors for learning and the dangers of choosing just one. Educ Res. 1998;27(2):4-13.

Sheehan D., Wilkinson T.J., Billett S. Interns’ participation and learning in clinical environments in a New Zealand hospital. Acad Med. 2005;80(3):302-308.

Simpson D., Fincher R.M., Hafler J.P., et al. Advancing educators and education by defining the components and evidence associated with educational scholarship. Med Educ. 2007;41(10):1002-1009.

Tague R.N. The quality toolbox, ed 2. ASQ Quality Press, 2004.

Teunissen P.W., Scheele F., Scherpbier A.J.J.A., et al. How residents learn: qualitative evidence for the pivotal role of clinical activities. Med Educ. 2007;41(8):763-770.

Tiberius R.G., Sinai J., Flak E.A. The role of teacher-learner relationships in medical education. In: Norman G.R., van der Vleuten C.P., Newble D.I., editors. International handbook of research in medical education. Dordrecht: Kluwer Academic Publishers; 2002:462-498.

Ullian J.A., Bland C.J., Simpson D.E. An alternative approach to defining the role of the clinical teacher. Acad Med. 1994;69(10):832-838.

Vygotski L.S. Thought and language. Cambridge, MA: MIT Press, 1962.

Wenger E. Communities of practice. Learning, meaning, and identity. Cambridge: Cambridge University Press, 1998.