Clinical Chemistry

In both human and veterinary medical practice, current trends indicate a move toward greater point-of-care capabilities. This translates into better customer service and enhances the practice of veterinary medicine. Determinations of levels of the various chemical constituents in blood can be an important aid in the formulation of an accurate diagnosis, prescription of proper therapy, and documentation of the response to treatment. The chemicals being assayed are generally associated with particular organ functions; they may be enzymes associated with those functions or metabolites and metabolic by-products that are processed by certain organs. Analysis of these components usually requires a carefully collected blood serum sample, although plasma may be used in some cases. Chemical measurements should be completed within 1 hour after blood collection. If testing will be delayed, freezing the sample will preserve the integrity of most of the constituents; however, freezing may interfere with some test methods. Certain anticoagulants may also interfere with particular chemical analyses. Many factors other than disease influence the results of chemistry tests. These factors may be preanalytical, analytical, or postanalytical (see Chapter 1).

Many veterinary practices own or lease chemistry analyzers to perform routine chemical assays. This focus on in-house laboratory work makes the veterinary technicians’ laboratory skills perhaps their biggest asset to the practice.

As the person most likely to be in charge of the laboratory, the veterinary technician must become familiar with the types of analytic instruments available (see Chapter 1), the variety of testing procedures used, and the rationale underlying the analyses. The most important contribution the technician can make to the practice laboratory is accurate and reliable test results. In vitro results must reflect, as closely as possible, the actual in vivo levels of blood constituents.

SAMPLE COLLECTION

Most chemical analyses require collection and preparation of serum samples. Whole blood or blood plasma may be used for some test methods or with specific types of equipment. The instructions accompany the chemistry analyzers and should be consulted for the type of sample required. Collection of a high-quality sample on which to perform an assay has a direct effect on the quality of test results. Most adverse influences on sample quality can be avoided with careful consideration of sample collection and handling.

Specific blood collection protocols vary depending on the patient species, volume of blood needed, method of restraint, and types of samples needed. Chapter 2 contains additional information on blood collection protocols, supplies, and equipment. Blood samples for chemical testing should always be collected before treatment is initiated. Administration of certain medications and treatments may affect results of biochemical testing. Preprandial samples, or samples from an animal that has not eaten for 12 hours, are preferred. Postprandial samples, or samples collected after an animal has eaten, can produce false values for a number of blood components, including glucose, urea, and lipase. Regardless of the method of blood collection, the sample must be labeled immediately after it has been collected. The tube should be labeled with the date and time of collection, the owner’s name, the patient’s name, and the patient’s clinic identification number. If the sample is submitted to a laboratory, it should be accompanied by a request form that includes all necessary sample identification and a clear indication of which tests are requested.

Plasma

Plasma is the fluid portion of whole blood in which the cells are suspended. It is composed of approximately 90% water and 10% dissolved constituents, such as proteins, carbohydrates, vitamins, hormones, enzymes, lipids, salts, waste materials, antibodies, and other ions and molecules. Procedure 3-1 describes obtaining a plasma sample. The sample must not be contaminated with any cells from the bottom of the tube after centrifugation. If the sample cannot be centrifuged within 1 hour, it must be refrigerated. If heparinized plasma has been stored overnight after separation or has been frozen, the sample should be centrifuged again to remove any fibrin strands that may have formed. Freezing may affect certain test results; the test instructions should be consulted for all the tests that must be run before a plasma sample is frozen.

Serum

Serum is plasma from which fibrinogen, a plasma protein, has been removed. During the clotting process, the soluble fibrinogen in plasma is converted to an insoluble fibrin clot matrix. When blood clots, the fluid that is squeezed out around the cellular clot is serum. Obtaining a serum sample is described in Procedure 3-2. Centrifuging at speeds greater than 2000 to 3000 rpm or for a prolonged time may result in hemolysis. Serum separator tubes (SSTs) contain a gel that forms a physical barrier between serum or plasma and blood cells during centrifugation. The inside walls of the tube also contain silica particles that assist in clot activation. Blood collected into an SST should be mixed by inverting the tube several times and then allowed to clot for 30 minutes before centrifugation. SST transport tubes are also available. These contain approximately double the amount of gel in a standard SST. The additional gel barrier helps minimize any interaction between the serum and cells after centrifugation so that test results are not likely to be affected if tests are delayed. Any prolonged delays in testing require that the serum be removed from the SST and placed in a sterile tube. The tube can then be refrigerated or frozen. Freezing may affect some test results; therefore the test instructions should be consulted for all the tests that must be run before a serum sample is frozen.

Factors Influencing Results

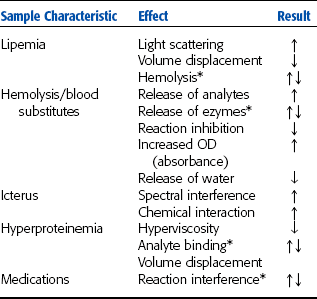

Many factors other than disease influence the results of chemistry tests. Hemolysis, lipemia, certain medications, and inappropriate sample handling can all lead to inaccurate results. Effects of sample compromise are summarized in Table 3-1.

TABLE 3-1

*Variable effect depending on analyte and test method.

From Sirois M: Principles and practice of veterinary technology, ed 2, St Louis, 2004, Mosby.

Hemolysis

Hemolysis may result when a blood sample is drawn into a moist syringe, mixed too vigorously after sample collection, forced through a needle when being transferred to a tube, or frozen as a whole blood sample. A syringe must be completely dry before it is used because water in the syringe may cause hemolysis. The needle from a syringe should be removed before blood is transferred to a tube. Forcing blood through a small needle opening may rupture cells. When transferring a blood sample to a tube, the veterinary technician should expel the blood slowly from the syringe without causing bubbles to form. Hemolysis can also result when excess alcohol is used to clean the skin and not allowed to dry before beginning the blood collection procedure.

Hemolysis, regardless of cause, can greatly alter the makeup of a serum or plasma sample. For example, fluid from ruptured blood cells can dilute the sample, resulting in falsely lower concentrations of constituents than are actually present in the animal. Certain constituents normally not found in high concentrations in serum or plasma escape from ruptured blood cells, causing falsely elevated concentrations in the sample. Hemolysis may elevate levels of potassium, organic phosphorus, and certain enzymes in the blood. Hemolysis also interferes with lipase activity and bilirubin determinations. Therefore, plasma or serum is frequently the preferred sample over whole blood, and serum is frequently preferred over plasma.

Chemical Contamination

Sterile tubes are not necessary for collection of blood samples for routine chemical assays. However, the tubes must be chemically pure. Detergents must be completely rinsed from reusable tubes so that the detergents do not interfere with test results.

Improper Labeling

Serious errors may result if a tube containing the sample is not labeled immediately after the sample is collected. The tube should be labeled with the date, time of collection, patient’s name, and clinic number. The veterinary technician should double check the sample identification with the request form, if one is used, as the sample is prepared and the test is run.

Improper Sample Handling

Ideally, all chemical measurements should be completed within an hour of sample collection, which is not always feasible. In this case, samples must be properly handled and stored so that levels of their chemical constituents approximate those in the patient’s body at the time of collection. Samples must not be allowed to become too warm. Heat may be detrimental to a sample, destroying some chemicals and activating others, such as enzymes. If a serum or plasma sample has been frozen, it must be thoroughly mixed after thawing to avoid concentration gradients.

Patient Influences

If practical, a sample should be obtained from a fasting animal. The blood glucose level can be elevated and the inorganic phosphorus level decreased immediately after a meal. Also, postprandial (after-eating) lipemia results in a turbid or cloudy plasma or serum. Kidney assays are also affected because of the transient increase in glomerular filtration rate (GFR) after eating. Water intake need not be restricted before obtaining a blood sample.

REFERENCE RANGES

Reference ranges are also known as normal values. The reference range for a particular blood constituent is a range of values derived when a laboratory has repeatedly assayed samples from a significant number of clinically normal animals of a given species by specific test methods. Numerous medicine and clinical pathology books list the reference ranges of blood constituents for domestic species. Alternatively, reference ranges may be formulated by local diagnostic laboratories or in individual practice laboratories.

Establishing reference range values for any laboratory is time-consuming and expensive. To establish a list of reference values for the laboratory, the veterinary technician would have to assay samples from a significant number of clinically normal animals. Some investigators recommend analysis of at least 20 animals and others recommend more than 100 animals with similar characteristics. Other considerations include the variety of breeds and species most often seen in the veterinary practice; the gender and sexual status, such as intact or neutered, of the tested animals; the environment, including husbandry and nutrition, of these animals; and climate. Climate is a consideration because drastic seasonal changes may also affect assay results.
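As a rough illustration of the arithmetic involved, the following sketch derives a reference range from assay results of clinically normal animals. It assumes the range is taken as the mean plus or minus 2 standard deviations, which is one common convention and is not a method prescribed in this chapter. The function name, the minimum sample size parameter, and the sample values are hypothetical.

```python
from statistics import mean, stdev


def reference_range(values, n_sd=2.0, min_animals=20):
    """Return (low, high) as the mean +/- n_sd sample standard deviations.

    Assumption: a mean +/- 2 SD interval. The chapter does not state
    which statistical method a laboratory should use.
    min_animals mirrors the lower recommendation of at least 20 animals.
    """
    if len(values) < min_animals:
        raise ValueError(
            f"need at least {min_animals} animals, got {len(values)}"
        )
    center = mean(values)
    spread = stdev(values)
    return (center - n_sd * spread, center + n_sd * spread)


# Hypothetical total protein results (g/dl) from 20 clinically normal dogs.
total_protein = [6.0, 6.2, 6.4, 6.1, 6.3, 5.9, 6.5, 6.2, 6.0, 6.4,
                 6.1, 6.3, 6.2, 5.8, 6.6, 6.2, 6.1, 6.3, 6.0, 6.4]

low, high = reference_range(total_protein)
print(f"Reference range: {low:.2f} to {high:.2f} g/dl")
```

A range computed this way applies only to the species, test method, and population from which the samples were drawn.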

PROTEIN ASSAYS

Plasma proteins are produced primarily by the liver and the immune system, consisting of reticuloendothelial tissues, lymphoid tissues, and plasma cells. Proteins have many functions in the body, and alterations in plasma protein concentrations occur in a variety of disease conditions, especially disease of the liver and kidneys. More than 200 plasma proteins exist. Some plasma protein concentrations change markedly during certain diseases and can be used as diagnostic aids. Other protein concentrations change little during disease. Age-related changes in plasma protein concentrations are also seen. Plasma protein functions include the following:

• Helping form the structural matrix of all cells, organs, and tissues

• Maintaining osmotic pressure

• Serving as enzymes for biochemical reactions

• Acting as buffers in acid-base balance

• Functioning in blood coagulation

• Defending the body against pathogenic microorganisms

• Serving as transport/carrier molecules for most constituents of plasma

The plasma protein assays commonly performed in veterinary medicine include total protein, albumin, and fibrinogen.

Total Protein

Total plasma protein measurements include fibrinogen values, whereas total serum protein determinations measure all the protein fractions except fibrinogen, which is removed during the clotting process. The total protein concentration may be affected by altered hepatic synthesis, altered protein distribution, and altered protein breakdown or excretion, as well as dehydration and overhydration.

Total protein concentrations are especially valuable in determining an animal’s state of hydration. A dehydrated animal usually has a relatively elevated total protein concentration (hyperproteinemia), whereas an overhydrated animal usually has a relatively decreased total protein concentration (hypoproteinemia). Total protein concentrations also are useful as initial screening tests for patients with edema, ascites, diarrhea, weight loss, hepatic and renal disease, and blood clotting problems.

Two methods are commonly used for determination of total protein levels: the refractometric method and the biuret photometric method. The refractometric method measures the refractive index of serum or plasma with a refractometer (see Chapter 1). The refractive index of the sample is a function of the concentration of solid particles in the sample. In plasma, the primary solids are the proteins. This method is a good screening test because it is fast, inexpensive, and accurate. The biuret method measures the number of molecules containing more than three peptide bonds in serum or plasma. This method is commonly used in analytic instruments in the laboratory. It is a simple method and yields accurate results. Other chemical tests to measure protein include dye-binding methods, precipitation methods, and the Lowry method. These tests are not commonly performed in veterinary practice. They are usually used to measure a small amount of protein in urine and cerebrospinal fluid (CSF). Specialized tests to separate the various protein populations are performed in some reference laboratories and research facilities. These methods include salt fractionation, chromatography, and gel electrophoresis (see Chapter 8).

The aforementioned tests can be performed on samples other than serum and plasma (e.g., urine, CSF). Other tests also used include the sulfosalicylic acid test, Pandy test, and Nonne-Apelt test. For the Pandy test, 1 drop of CSF is added to 1 ml of saturated aqueous phenol. Turbidity is observed before and after the mixture is shaken. After shaking, the CSF disperses as small droplets in the phenol, which should not be confused with a positive reaction (i.e., development of turbidity). If the sample is normal, no appreciable immunoglobulin is present and the solution remains clear (at most, slightly turbid), which is considered a negative result. If immunoglobulin is present at a concentration of 25 mg/dl or more, the solution becomes cloudy white. The degree of turbidity may be subjectively graded from 1+ to 4+, corresponding to increasing immunoglobulin concentration. For the Nonne-Apelt test, 1 ml of saturated ammonium sulfate solution is overlaid carefully with 1 ml of CSF and allowed to stand undisturbed for 3 minutes. The junction between the two fluids remains clear with normal CSF. However, if CSF immunoglobulin concentration is increased, a white-gray zone forms at the junction. This reaction may be graded subjectively from 1+ to 4+, reflecting increasing immunoglobulin concentration. The sulfosalicylic acid test is described in Chapter 5.

Albumin

Albumin is one of the most important proteins in plasma or serum. It makes up 35% to 50% of the total plasma protein in most animals, and any significant state of hypoproteinemia is most likely caused by albumin loss. Hepatocytes synthesize albumin, and any diffuse liver disease may result in decreased albumin synthesis. Renal disease, dietary intake, and intestinal protein absorption also may influence the plasma albumin level. Albumin is the major binding and transport protein in the blood and is responsible for maintaining osmotic pressure of plasma. The primary photometric test for albumin is the bromcresol green dye-binding method.

Globulins

The globulins are a complex group of proteins. Alpha globulins are synthesized in the liver and primarily transport and bind proteins. Two important proteins in this fraction are high-density lipoproteins and very-low-density lipoproteins. Beta globulins include complement (C3, C4), transferrin, and ferritin. They are responsible for iron transport, heme binding, and fibrin formation and lysis. Gamma globulins (immunoglobulins) are synthesized by plasma cells and are responsible for antibody production (immunity). Immunoglobulins (Ig) identified in animals are IgG, IgD, IgE, IgA, and IgM.

Direct chemical measurements of globulin are rarely performed. Globulin concentration is normally estimated by determining the difference between the total protein and albumin concentrations.

Albumin/Globulin Ratio

An alteration in the normal ratio of albumin to globulin (A/G) is frequently the first indication of a protein abnormality. The ratio is analyzed in conjunction with a protein profile. The A/G can be used to detect increased or decreased albumin and globulin concentrations. Many pathologic conditions alter the A/G. However, if the albumin and globulin concentrations are reduced in equal proportions, such as with hemorrhage, no alteration in A/G will be present.

The A/G is determined by dividing the albumin concentration by the globulin concentration. In dogs, horses, sheep, and goats, the albumin concentration is usually greater than the globulin concentration (A/G is more than 1.00). In cattle, pigs, and cats, the albumin concentration is usually equal to or less than the globulin concentration (A/G is 1.00 or less).
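The two calculations described in this chapter, globulin estimated as total protein minus albumin and the A/G as albumin divided by globulin, can be sketched as follows. The function names and the patient values are hypothetical and serve only to show the arithmetic.

```python
def globulin(total_protein, albumin):
    """Estimate globulin (g/dl) as total protein minus albumin."""
    if albumin > total_protein:
        raise ValueError("albumin cannot exceed total protein")
    return total_protein - albumin


def ag_ratio(total_protein, albumin):
    """Return the albumin/globulin ratio from total protein and albumin."""
    glob = globulin(total_protein, albumin)
    if glob == 0:
        raise ValueError("globulin is zero; the ratio is undefined")
    return albumin / glob


# Hypothetical canine results in g/dl.
tp, alb = 6.5, 3.5
print(f"Globulin: {globulin(tp, alb):.1f} g/dl")
print(f"A/G: {ag_ratio(tp, alb):.2f}")
```

Because globulin is obtained by subtraction, any error in the total protein or albumin measurement carries directly into the ratio.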

Fibrinogen

Fibrinogen is synthesized by hepatocytes. It is the precursor of fibrin, the insoluble protein that forms the matrix of blood clots, and is one of the factors necessary for clot formation. If fibrinogen levels are decreased, blood does not form a stable clot or does not clot at all. Fibrinogen makes up 3% to 6% of the total plasma protein content. Because it is removed from plasma by the clotting process, no fibrinogen is found in serum. Acute inflammation or tissue damage may elevate plasma fibrinogen levels. The most common method of fibrinogen evaluation is the heat precipitation test described in Chapter 2. The fibrinogen value is calculated by subtracting the total plasma protein value of the heated tubes from that of the unheated tubes. (The value for the heated tubes should be lower because fibrinogen has been removed from the plasma.) Plasma is the only sample that may be used because serum does not contain fibrinogen. Plasma collected with ethylenediaminetetraacetic acid (EDTA) is preferred. Heparinized plasma may yield falsely low results.
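The subtraction used in the heat precipitation test can be sketched as follows. The function name and the readings are hypothetical. Reporting the result in mg/dl is an added assumption; it relies only on the unit conversion 1 g = 1000 mg.

```python
def fibrinogen_mg_dl(tp_unheated_g_dl, tp_heated_g_dl):
    """Fibrinogen by heat precipitation, reported in mg/dl.

    Subtracts the total plasma protein of the heated tubes from that of
    the unheated tubes (both in g/dl), then converts g/dl to mg/dl.
    """
    if tp_heated_g_dl > tp_unheated_g_dl:
        raise ValueError("heated reading should not exceed unheated reading")
    return (tp_unheated_g_dl - tp_heated_g_dl) * 1000


# Hypothetical total plasma protein readings in g/dl.
result = fibrinogen_mg_dl(7.0, 6.7)
print(f"Fibrinogen: {result:.0f} mg/dl")
```

A heated reading higher than the unheated reading is treated as an error here because heating can only remove protein from the plasma.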

HEPATOBILIARY ASSAYS

The liver is the largest internal organ and is complex in structure, function, and pathologic characteristics. It has many functions, including metabolism of amino acids, carbohydrates, and lipids; synthesis of albumin, cholesterol, plasma proteins, and clotting factors; digestion and absorption of nutrients related to bile formation; secretion of bilirubin, or bile; and elimination, such as detoxification of toxins and catabolism of certain drugs. These functions are run by enzymatic reactions. The gallbladder is closely associated with the liver, both anatomically and functionally. Its primary function is as a storage site for bile. Malfunctions in the liver or gallbladder result in predictable clinical signs of jaundice, hypoalbuminemia, problems with hemostasis, hypoglycemia, hyperlipoproteinemia, and hepatoencephalopathy.

Hepatic cells exhibit extreme diversity of function and are capable of regeneration if damaged. As a result, more than 100 different types of tests are available to evaluate liver function. Usually liver disease is well advanced before clinical signs appear. Liver function tests are designed to measure substances that are produced by the liver, modified by the liver, or released when hepatocytes are damaged, as well as enzymes whose serum concentrations are altered as a result of cholestasis. Liver cells also compartmentalize the work so that damage to one zone of the liver may not affect all liver functions. Liver function tests are usually performed as serial determinations, and several different types of liver tests are completed to assist in verifying the functional status of the organ. No single test is superior to any other for detecting hepatobiliary disease. New tests are being developed to allow detection of hepatic disease before the liver is severely damaged. The primary tests used in veterinary medicine for evaluation of the liver and gallbladder are summarized in Box 3-1.

Enzymes Released from Damaged Hepatocytes

With this type of liver disease, the hepatocytes are damaged and enzymes leak into the blood, causing a detectable rise in blood levels of enzymes associated with liver cells. These components, commonly referred to as the “leakage enzymes,” include the transferase enzymes alanine aminotransferase (ALT) and aspartate aminotransferase (AST) and the dehydrogenase enzymes sorbitol dehydrogenase (SDH) and glutamate dehydrogenase (GLDH). Transferases catalyze the reactions that transfer amine groups from amino acids to keto acids in the production of new amino acids. The enzymes are therefore found in tissues that have high rates of protein catabolism. Although other transferases are present in hepatocytes, the only readily available tests are for ALT and AST. Dehydrogenases catalyze the transfer of hydrogen groups, primarily during glycolysis. Transferases and dehydrogenases are found either free in the cytoplasm of hepatocytes or bound to the cell membrane. The serum levels of these enzymes vary in different species, and most also have nonhepatic sources.

Alanine Aminotransferase

ALT was formerly known as serum glutamic pyruvic transaminase (SGPT). In dogs, cats, and primates, the major source of ALT is the hepatocyte, where the enzyme is found free in the cytoplasm. ALT is considered a liver-specific enzyme in these species. Horses, ruminants, pigs, and birds do not have enough ALT in the hepatocytes for this enzyme to be considered liver specific. Other sources of ALT are renal cells, cardiac muscle, skeletal muscle, and the pancreas. Damage to these tissues may also result in increased serum ALT levels. Administration of corticosteroids or anticonvulsant medications may also lead to increases in serum ALT. ALT is used as a screening test for liver disease because it is not precise enough to identify specific liver diseases. No correlation exists between the blood levels of the enzyme and the severity of hepatic damage. Increases in ALT are usually seen within 12 hours of hepatocyte damage and peak levels are seen in 24 to 48 hours. The serum levels will return to reference ranges within a few weeks unless a chronic liver insult is present.

Aspartate Aminotransferase

AST was formerly known as serum glutamic oxaloacetic transaminase (SGOT). AST is present in hepatocytes, both free in the cytoplasm and bound to the mitochondrial membrane. More severe liver damage is required to release the membrane-bound AST. AST levels tend to rise more slowly than do ALT levels and return to normal levels within a day, provided chronic liver insult is not present. AST is found in significant amounts in many other tissues, including erythrocytes, cardiac muscle, skeletal muscle, the kidneys, and the pancreas. An increased blood level of AST may indicate nonspecific liver damage or may be caused by strenuous exercise or intramuscular injection. The most common causes of increased blood levels of AST are hepatic disease, muscle inflammation or necrosis, and spontaneous or artifactual hemolysis. If the AST level is elevated, the serum or plasma sample should be examined for hemolysis. Creatine kinase activity should also be assessed to rule out muscle damage before attributing an AST increase to liver damage.

Sorbitol Dehydrogenase

The primary source of SDH is the hepatocyte. Smaller amounts of the enzyme are found in the kidney, small intestine, skeletal muscle, and erythrocytes. SDH is present in the hepatocytes of all common domestic species but is especially useful for evaluating liver damage in large animals such as sheep, goats, swine, horses, and cattle. Large animal hepatocytes do not contain diagnostic levels of ALT, so SDH offers a liver-specific diagnostic test. The plasma level of SDH rises quickly with hepatocellular damage or necrosis. SDH assay can be used in all species to detect hepatocellular damage or necrosis, thus eliminating the need for other tests, such as the ALT assay. The disadvantage of SDH analysis is that SDH is unstable in serum and its activity declines within a few hours. If testing is delayed, samples should be frozen. SDH tests are not readily available to the average veterinary laboratory. Samples sent to outside laboratories should be packed in ice for transport.

Glutamate Dehydrogenase

GLDH is a mitochondrial-bound enzyme found in high concentrations in the hepatocytes of cattle, sheep, and goats. An increase in this enzyme is indicative of hepatocyte damage or necrosis in cattle and sheep. GLDH could be the enzyme of choice for evaluating ruminant and avian liver function, but no standardized test method has been developed for use in a veterinary practice laboratory.

Enzymes Associated with Cholestasis

Blood levels of certain enzymes become elevated with cholestasis (bile duct obstruction), metabolic defects in liver cells, and administration of certain medications and also as a result of the action of certain hormones, especially those of the thyroid. These enzymes are primarily membrane bound. The exact mechanism that induces increased levels of these enzymes in cholestasis is not well documented.

Alkaline Phosphatase

Alkaline phosphatase (AP) is present as isoenzymes in many tissues, particularly osteoblasts in bone; chondroblasts in cartilage; intestine; placenta; and cells of the hepatobiliary system in the liver. The isoenzymes of AP tend to remain in circulation for approximately 2 to 3 days, with the exception of the intestinal isoenzyme that circulates for just a few hours. A corticosteroid isoenzyme of AP has been identified in dogs with exposure to increased endogenous or exogenous glucocorticoids. Because AP occurs as isoenzymes in these various tissues, the source of an isoenzyme or location of the damaged tissue may be determined by electrophoresis and other tests performed in commercial or research laboratories.

In young animals, most AP comes from osteoblasts and chondroblasts because of active bone development. In older animals, nearly all circulating AP comes from the liver as bone development stabilizes. The assays used for AP in a practice laboratory determine the total blood AP concentration. AP concentrations are most often used to detect cholestasis in adult dogs and cats. Because of wide fluctuations in normal blood AP levels in cattle and sheep, this test is not as useful for detecting cholestasis in these species.

Gamma Glutamyltranspeptidase

Gamma glutamyltransferase (GGT or γGT) is sometimes referred to as gamma glutamyltranspeptidase. GGT is found in many tissues, including renal epithelium, mammary epithelium (particularly during lactation), and biliary epithelium, but its primary source is the liver. Cattle, horses, sheep, goats, and birds have higher blood GGT activity than dogs and cats. Other sources of GGT include the kidneys, pancreas, intestine, and muscle cells. The blood GGT level is elevated with liver disease, especially with obstructive liver disease.

Hepatocyte Function Tests

Many substances are taken up, modified, produced, and/or secreted by the liver. Alteration in the ability to perform these specific functions provides an overview of liver function. Tests of hepatocyte function performed in veterinary practice include bilirubin and bile acids. Other substances produced by hepatocytes are less-sensitive indicators of liver function because test results may not show abnormalities until two thirds to three fourths of liver tissue is damaged. These less-sensitive tests include albumin, ammonia, and cholesterol.

Bilirubin

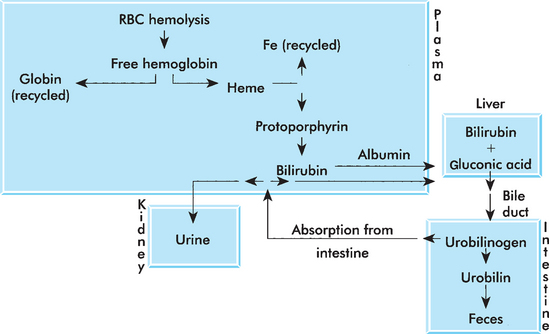

Bilirubin is an insoluble molecule derived from the breakdown of hemoglobin by macrophages in the spleen. The molecule is bound to albumin and transported to the liver. The hepatic cells metabolize and conjugate the bilirubin to the molecule bilirubin glucuronide. This molecule is then secreted from the hepatocytes and becomes a component of bile. Bacteria within the gastrointestinal system act on the bilirubin glucuronide and produce a group of compounds collectively referred to as urobilinogen. Urobilinogen is broken down to urobilin before being excreted in feces. Bilirubin glucuronide and urobilinogen may also be absorbed directly into the blood and excreted by the kidneys (Fig. 3-1).

Figure 3-1 Bilirubin metabolism. (From Sirois M: Principles and practice of veterinary technology, ed 2, St Louis, 2004, Mosby.)

Measurements of the circulating levels of these various populations of bilirubin can help pinpoint the cause of jaundice. Differences in the relative solubility of each of these molecules allow them to be individually quantified. In most animals, the prehepatic (bound to albumin) bilirubin comprises approximately two thirds of the total bilirubin in serum. Increases in this population indicate problems with uptake (hepatic damage). Increases in conjugated bilirubin indicate bile duct obstruction.

Assays can directly measure total bilirubin (conjugated bilirubin plus unconjugated bilirubin) and conjugated bilirubin. Conjugated bilirubin is sometimes referred to as direct bilirubin because test methods directly measure the amount of conjugated bilirubin in the sample. Unconjugated bilirubin is sometimes referred to as indirect bilirubin because its concentration is indirectly calculated by subtracting the conjugated bilirubin concentration from the total bilirubin concentration of the sample.
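The indirect calculation can be sketched as follows. The function name and the values are hypothetical; only the subtraction itself comes from the description above.

```python
def unconjugated_bilirubin(total, conjugated):
    """Indirect (unconjugated) bilirubin = total minus conjugated (direct).

    Both inputs must be in the same units (for example, mg/dl).
    """
    if conjugated > total:
        raise ValueError("conjugated bilirubin cannot exceed total bilirubin")
    return total - conjugated


# Hypothetical results in mg/dl.
total_bili, direct_bili = 0.6, 0.2
indirect_bili = unconjugated_bilirubin(total_bili, direct_bili)
print(f"Unconjugated (indirect) bilirubin: {indirect_bili:.1f} mg/dl")
```

Because the unconjugated value is never measured directly, it inherits the measurement error of both assays.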

Bilirubin is assayed to determine the cause of jaundice, evaluate liver function, and check the patency of bile ducts. Blood levels of conjugated (direct) bilirubin are elevated with hepatocellular damage or bile duct injury or obstruction. Blood levels of unconjugated (indirect) bilirubin are elevated with excessive erythrocyte destruction or defects in the transport mechanism that allow bilirubin to enter hepatocytes for conjugation.

Bile Acids

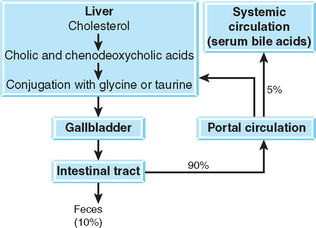

Bile acids serve many functions. They aid in fat absorption (enabling the formation of micelles in the gastrointestinal system) and modulate cholesterol levels by bile acid synthesis. Bile acids are synthesized by hepatocytes from cholesterol and are conjugated with glycine or taurine. Conjugated bile acids are secreted across the canalicular membrane and reach the duodenum by way of the biliary system. The gallbladder stores bile acids (except in the horse) until contraction associated with feeding. When bile acids reach the ileum, they are transported to the portal circulation and travel back to the liver. Ninety percent to 95% of the bile acids are actively resorbed in the ileum. The remaining 5% to 10% is excreted in the feces. The reabsorbed bile acids are carried to the liver where they are reconjugated and excreted as part of the enterohepatic circulation of bile acids (Fig. 3-2).

Spillover bile acids that escape from enterohepatic circulation may be detected in normal animals; serum concentrations of bile acids correlate with portal concentrations. As a result, postprandial serum bile acid (SBA) concentrations are higher than fasting concentrations. Any process that impairs the hepatocellular, biliary, or portal enterohepatic circulation of bile acids results in elevated SBA levels. The great advantage of SBA determinations as a liver function test is that they evaluate the major anatomic components of the hepatobiliary system and are stable in vitro.

The SBA level is normally elevated after a meal because the gallbladder has contracted and released increased amounts of bile into the duodenum. Paired serum samples collected after 12 hours of fasting and 2 hours postprandially are needed to perform the test. The difference in the bile acid concentration of the samples is reported. In horses, a single sample is tested. Inadequate fasting or spontaneous gallbladder contraction can increase fasting bile acid levels. Exposing the patient to even the aroma of food can result in spontaneous gallbladder contraction. Prolonged fasting and diarrhea can decrease bile acids.

Elevated SBA levels usually indicate liver diseases such as congenital portosystemic shunts, chronic hepatitis, hepatic cirrhosis, cholestasis, or neoplasms. Bile acid levels are nonspecific regarding the type of liver problem that exists and are therefore used as a screening test for liver disease. Bile acid levels may detect liver problems before an animal becomes icteric. They also may be used to follow the progress of liver disease during treatment. Increased bile acid concentrations can also result from extrahepatic diseases that secondarily affect the liver. Decreased bile acid concentration may be seen in intestinal malabsorptive diseases. In horses, increased bile acid concentrations can be the result of hepatobiliary disease or decreased feed intake. The reference ranges for bile acids in cows are widely variable. Bile acid testing is not a sensitive indicator of disease in cows.

Bile acids may be determined by several methods; the most commonly used is an enzymatic method. The 3-hydroxy bile acids react with 3-hydroxysteroid dehydrogenase and then with diformazan. Color generation is measured by end point spectrophotometry. Lipemic postprandial samples must be cleared by centrifugation to avoid interference with spectrophotometry. A bile acid test that uses immunologic methods (enzyme-linked immunosorbent assay) is now available for use in the veterinary clinic.

Cholesterol

Cholesterol is a plasma lipoprotein produced primarily in the liver, as well as ingested in food. Cholestasis causes an increase in serum cholesterol in some species. However, large differences exist in lipoprotein profiles of different species, and the clearance of lipoproteins is not well characterized in most veterinary species. A number of automated analyzers are available that provide cholesterol and other lipoprotein values. Hyperlipidemia is often secondary to other conditions (Box 3-2). Primary hyperlipidemia is rare and associated with inherited conditions in some breeds.

Cholesterol assay is sometimes used as a screening test for hypothyroidism. Thyroid hormone controls synthesis and destruction of cholesterol in the body. Insufficient thyroid hormone, or hypothyroidism, results in hypercholesterolemia because the rate of cholesterol destruction is relatively slower than the rate of synthesis. Other diseases associated with hypercholesterolemia include hyperadrenocorticism, diabetes mellitus, and nephrotic syndrome. Dietary causes of hypercholesterolemia are rare but may include high-fat diets or postprandial lipemia.

Cholesterol by itself does not cause the grossly lipemic plasma seen after eating; triglycerides also are usually present. Administration of corticosteroids also may cause an elevated blood cholesterol concentration. Fluoride and oxalate anticoagulants may elevate enzymatic method results.

Other Tests of Liver Function

Although not commonly performed in the veterinary practice, several additional tests are available at reference laboratories and research facilities. The tests are based on the ability of the liver to excrete waste and foreign substances and include the dye excretion tests, ammonia tolerance test, and caffeine clearance test.

Dye Excretion

The two available dye excretion tests are the bromsulfophthalein (BSP) excretion and indocyanine green (ICG) excretion tests. Both require administration of a dye that binds to a protein in serum. The dyes are taken up by the hepatocytes and excreted into the bile. The disappearance of the dye from the plasma requires functional hepatocytes, adequate hepatic blood flow, and bile flow. The dye used for BSP is no longer used in human medicine, and its availability is limited. The overall complexity and expense of the testing have relegated their use primarily to research facilities.

Bromsulfophthalein Excretion: BSP excretion is a sensitive hepatic function test that is especially useful for detecting chronic lesions or portosystemic shunts with no leakage of liver enzymes. Coagulation defects and mysterious anemias sometimes may be explained by BSP test results. In horses, the test is handy to differentiate the jaundice of hepatic disease from that of simple anorexia, and hepatoencephalopathy from “wobbler syndrome.” Specific indications in ruminants include fascioliasis, liver abscesses, ketosis, and photosensitization. BSP clearance may aid in diagnosis of aflatoxicosis in swine. Hepatic lesions delay BSP excretion. Delays caused by hepatocellular injury indicate a loss of at least 55% of the liver’s functional mass. The magnitude of delay is poorly correlated to both the extent of hepatic lesions, when mild, and the clinical signs of liver dysfunction.

Delayed BSP clearance can be erroneous. Slowed BSP excretion results from perivascular dye injection (which can be painful), poor hepatic perfusion (shock, heart failure, dehydration), and fever. Ascites also interferes with BSP clearance because the dye lingers in pooled fluids. Certain inborn defects of BSP metabolism occur without liver disease, notably in some Southdown and Corriedale sheep. Obesity prolongs BSP retention because the amount of BSP per unit of body mass is relatively increased. Finally, bilirubin competes with BSP for excretion by the liver. For this reason, the BSP test should not be performed in animals with hyperbilirubinemia of 3 mg/dl or more. The results reveal nothing more than the already apparent jaundice.

Two conditions may disguise liver disease by speeding BSP clearance. Because albumin carries BSP in the plasma, hypoalbuminemia (nephrotic syndrome, protein-losing gastroenteropathies, extreme liver disease) speeds clearance by increasing hepatic access to the free dye. Phenobarbital use also hastens BSP clearance. BSP clearance has no correlation with the extent of fatty infiltration of the liver.

Indocyanine Green Clearance: ICG is an organic dye similar to BSP. Introduced to human medicine because of occasional reactions to BSP, it is used to estimate hepatic blood flow.

Preinjection plasma is required to prepare a blank and a standard solution. This test can be used in both fed and fasted animals, but the latter is preferred. An intravenous dosage of 0.8 to 1.1 mg/kg body weight is recommended for horses, whereas 1 mg/kg body weight is recommended for dogs and 1.5 mg/kg body weight for cats. Usually five to six plasma samples are taken between 0 and 30 minutes after injection (e.g., at 0, 5, 10, 15, and 30 minutes). ICG concentration is measured photometrically at 805 nm, and the half-life is determined. Normal ICG clearances are as follows: dogs, 8.4 ± 2.3 minutes; horses (fed), 3.5 ± 0.67 minutes; and horses (fasted), 1.6 ± 0.57 minutes. Normal 30-minute retention is 14.7% ± 5% in dogs and 7.3% ± 2.9% in cats. Delayed ICG clearance has been reported in a variety of disorders, such as hyperbilirubinemia, hypoproteinemia, decreased hepatic blood flow, hepatic necrosis, and extrahepatic bile duct obstruction.
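One way the half-life might be derived from serial plasma readings is a least-squares line through the logarithm of concentration against time. The sketch below assumes first-order (single-exponential) disappearance of the dye, which the text does not state; the function name and the synthetic data are hypothetical.

```python
import math


def half_life_minutes(times_min, concentrations):
    """Estimate half-life from a log-linear least-squares fit.

    Assumes single-exponential decay; pass post-injection samples only.
    """
    if len(times_min) != len(concentrations) or len(times_min) < 2:
        raise ValueError("need at least two paired time/concentration points")
    logs = [math.log(c) for c in concentrations]  # fails on non-positive values
    n = len(times_min)
    mean_t = sum(times_min) / n
    mean_y = sum(logs) / n
    sxx = sum((t - mean_t) ** 2 for t in times_min)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_min, logs))
    slope = sxy / sxx  # negative while the dye is being cleared
    if slope >= 0:
        raise ValueError("concentration is not decreasing")
    return math.log(2) / -slope


# Synthetic data generated with an 8-minute half-life, for illustration only.
times = [5, 10, 15, 30]
conc = [10.0 * 0.5 ** (t / 8.0) for t in times]
print(round(half_life_minutes(times, conc), 2))  # prints 8.0
```

Real plasma curves may not follow a single exponential, in which case this estimate would only be approximate.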

Ammonia Tolerance

Ammonia is produced by enteric microflora and during amino acid metabolism and transported to the liver through the portal circulation. Enzymes in the liver convert the ammonia to urea for excretion. Any condition that reduces the uptake of ammonia or conversion of ammonia to urea can lead to increased plasma ammonia concentration. However, normal compensatory mechanisms of the liver may result in normal fasting plasma ammonia concentrations. Impaired ammonia uptake is best identified with an ammonia tolerance test. A fasting sample is collected to provide baseline data. Patients identified with increased plasma ammonia in fasting samples should not undergo the tolerance test because of the high risk of nervous system damage. After collection of the fasting sample, ammonium chloride is administered either rectally or orally by stomach tube or in a gelatin capsule. An additional sample is collected 30 minutes later. In patients with adequate hepatic function, the postadministration results may be the same as the fasting sample or moderately increased. Patients with urea cycle enzyme deficiencies (argininosuccinate synthetase) and abnormal portal blood flow, particularly congenital portovascular anomalies, often demonstrate a threefold to tenfold increase in plasma ammonia concentrations above the baseline.

The test’s chief limitation is in the handling of blood samples. Samples must be collected in ammonia-free heparin and the blood must be centrifuged immediately. The plasma should be placed on ice and analyzed within 30 minutes of collection or frozen at −20° C. Ammonia levels are stable in frozen plasma for a few days. Whole blood cannot be tested because its ammonia content increases with storage. Although the test can dramatically elevate the blood ammonia level, it neither causes nor worsens neurologic signs. Occasional vomiting with oral administration of the ammonium chloride is the only problem.

Caffeine Clearance

This is a specific assay of hepatic microsomal function. Demethylation of caffeine depends only on the specific P448 microsomal system of healthy hepatocytes; therefore it accurately reflects aberrations in hepatocellular function. This test is now used in human medicine; few experimental studies have been performed in canine species.

Caffeine sodium benzoate is dissolved in 2 ml sterile water (50:50 wt/wt), and 7 mg of caffeine/kg body weight are then injected intravenously. Plasma samples are collected from 15 to 480 minutes, and the caffeine concentration (in milligrams per milliliter) is measured by automated enzyme immunoassay. Plasma caffeine clearance and half-life are calculated from those values.

Normal half-life values in dogs are 6 ± 0.6 hours, and clearance is 1.7 ± 0.1 ml/min per kilogram of body weight. Elimination of caffeine is prolonged in hepatic insufficiency, with a clearance of 0.8 ± 0.1 ml/min per kilogram of body weight.

KIDNEY ASSAYS

The kidneys play a major role in maintaining homeostasis in animals. Their primary functions are to conserve water and electrolytes in times of a negative balance and increase water and electrolyte elimination in times of a positive balance; excrete or conserve hydrogen ions to maintain blood pH within normal limits; conserve nutrients, such as glucose and proteins; remove the end products of nitrogen metabolism, such as urea, creatinine, and allantoin, so that blood levels of these end products remain low; produce renin (an enzyme involved in controlling blood pressure), erythropoietin (a hormone necessary for erythrocyte production), and prostaglandins (fatty acids used to stimulate contractility of uterine and other smooth muscle, lower blood pressure, regulate acid secretion in the stomach, regulate body temperature and platelet aggregation, and control inflammation); and aid in vitamin D activation.

The kidneys receive blood from the renal arteries. The blood enters the glomerulus of the nephrons where nearly all water and small dissolved solutes pass into the collecting tubules. Each nephron contains sections that function to reabsorb or secrete specific solutes. Resorption of glucose occurs in the proximal convoluted tubule. Secretion and reabsorption of mineral salts occur in the ascending limb of the loop of Henle and distal convoluted tubule. The nephron has a specific resorptive capability for each substance called the renal threshold. Most water is reabsorbed as well. As a result of water reabsorption, the volume excreted is less than 1% of the volume that originally entered the kidney. Blood returns from the kidneys to the rest of the body through the renal veins, which connect to the caudal vena cava. Urine and blood may be analyzed to evaluate kidney function. Chapter 5 details urinalysis procedures. The primary serum chemistry tests for kidney function are urea nitrogen and creatinine. Other tests include various assays designed to evaluate the rate and efficiency of glomerular filtration.

Blood Urea Nitrogen

Some references use the term serum urea nitrogen (SUN) instead of blood urea nitrogen (BUN). Urea is the principal end product of amino acid breakdown in mammals. BUN levels are used to evaluate kidney function on the basis of the ability of the kidney to remove nitrogenous waste (urea) from blood. Under normal conditions, all urea passes through the glomerulus and enters the renal tubules. Approximately half of the urea is reabsorbed in the tubules and the remainder excreted in the urine. If the kidney is not functioning properly, sufficient urea is not removed from the plasma, leading to increased BUN levels.

Contamination of the blood sample with urease-producing bacteria (e.g., Staphylococcus aureus, Proteus spp., and Klebsiella spp.) may result in decomposition of urea and subsequently decreased BUN levels. To prevent this, analysis should be completed within several hours of collection or the sample should be refrigerated. A variety of photometric tests are available for measurement of urea nitrogen. All have an acceptable level of accuracy and precision. Chromatographic tests are also available and provide a semiquantitative serum urea nitrogen result. These methods tend to be less accurate and should be used only as quick screening tests.

Urea is an insoluble molecule and must be excreted in a high volume of water. Dehydration results in increased retention of urea in the blood (azotemia). High-protein diets and strenuous exercise may cause an elevated BUN level because of increased amino acid breakdown, not because of decreased glomerular filtration. Differences in rate of protein catabolism in male versus female animals, as well as young and older animals, will also affect BUN levels.

Serum Creatinine

Creatinine is formed from creatine, which is found in skeletal muscle, as part of muscle metabolism. Creatinine diffuses out of the muscle cell and into most body fluids, including blood. If physical activity remains constant, the amount of creatine metabolized to creatinine remains constant and the blood level of creatinine remains constant. The total amount of creatinine is a function of the animal’s total muscle mass. Under normal conditions, all serum creatinine is filtered through the glomeruli and eliminated in urine. Any condition that alters the glomerular filtration rate (GFR) will alter the serum creatinine levels. Creatinine also may be found in sweat, feces, and vomitus and may be decomposed by bacteria.

Blood creatinine levels are used to evaluate renal function on the basis of the ability of the glomeruli to filter creatinine from blood and eliminate it in urine. Like BUN, creatinine is not an accurate indicator of kidney function because nearly 75% of the kidney tissue must be nonfunctional before blood creatinine levels rise. Commonly used test methods for serum creatinine include the Jaffe method, as well as several enzymatic methods. Postprandial decreases in creatinine occur from transient increase in the GFR after a meal.

BUN/Creatinine Ratio

Because BUN and creatinine both have a wide range of reference intervals, their use as indicators of renal function is limited. The GFR may be decreased as much as four times below normal before changes are seen in the BUN or serum creatinine levels. In addition, healthy animals often have values below the reference ranges. In renal disease, hyperplasia of renal tissue may mask early signs of renal failure. The ratio of BUN to creatinine is used in human medicine for diagnosis of renal disease. Although this is not yet well established in veterinary species, it can be used to assess patient status during treatment.

BUN and creatinine have an inverse logarithmic relationship. The reciprocal of creatinine tracked over time can be used to track progress of disease and effectiveness of treatment. A disproportionate increase in BUN can indicate dehydration, dietary treatment failure, or owner noncompliance with treatment regimens.
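Tracking the reciprocal of creatinine over time can be sketched as a simple straight-line fit. The function name, the use of days as the time unit, and the sample values are all hypothetical; the text does not prescribe a fitting method, so ordinary least squares is an assumption here.

```python
def reciprocal_creatinine_trend(days, creatinine_mg_dl):
    """Return (reciprocals, slope per day) for serial creatinine values.

    A negative slope means 1/creatinine is falling, i.e. creatinine is rising.
    """
    if len(days) != len(creatinine_mg_dl) or len(days) < 2:
        raise ValueError("need at least two paired day/creatinine points")
    recips = [1.0 / c for c in creatinine_mg_dl]
    n = len(days)
    mean_d = sum(days) / n
    mean_r = sum(recips) / n
    sxx = sum((d - mean_d) ** 2 for d in days)
    sxy = sum((d - mean_d) * (r - mean_r) for d, r in zip(days, recips))
    return recips, sxy / sxx


# Illustrative serial results only, not patient data.
recips, slope = reciprocal_creatinine_trend([0, 30, 60], [2.0, 2.5, 4.0])
print([round(r, 2) for r in recips], round(slope, 5))
```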

Urine Protein/Creatinine Ratio

Quantitative assessment of renal proteinuria is of diagnostic significance in renal disease. In the absence of inflammatory cells in the urine, proteinuria indicates glomerular disease. For accurate determination of proteinuria, a 24-hour urinary protein value should be determined. This is a tedious task, and errors are common. A mathematical method that compares the urine protein level with the urine creatinine levels in a single urine sample is more accurate and comprehensive. This urine protein to creatinine (P/C) ratio is based on the concept that the tubular concentration of urine increases both the urinary protein and creatinine concentrations equally.

This method has been validated for the canine species. Usually 5 to 10 ml of urine are collected between 10 am and 2 pm, preferably by cystocentesis. The urine sample should be kept at 4° C or stored at −20° C. The sample is centrifuged and the supernatant is used. The protein and creatinine concentrations for each sample can be determined by a variety of photometric methods. The urine P/C ratio for healthy dogs should be less than 1. A urine P/C between 1 and 5 may have prerenal (hyperglobulinemia, hemoglobinemia, myoglobinemia) or functional (exercise, fever, hypertension) origin, whereas urine P/C greater than 5 is caused by renal disease.
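The ratio and the canine cutoffs given above can be sketched as follows. The function names are hypothetical, both concentrations are assumed to be in the same units, and treating exactly 1 and exactly 5 as part of the middle band is an assumption because the text does not say how the boundaries are handled.

```python
def urine_pc_ratio(protein_mg_dl: float, creatinine_mg_dl: float) -> float:
    """Urine protein/creatinine ratio from a single sample (same units for both)."""
    if creatinine_mg_dl <= 0:
        raise ValueError("creatinine concentration must be positive")
    return protein_mg_dl / creatinine_mg_dl


def interpret_canine_pc(ratio: float) -> str:
    """Map a canine urine P/C ratio onto the three bands described in the text."""
    if ratio < 1:
        return "within the range described for healthy dogs"
    if ratio <= 5:  # boundary handling is an assumption
        return "possible prerenal or functional origin"
    return "consistent with renal disease"


# Illustrative values only.
ratio = urine_pc_ratio(60.0, 120.0)
print(ratio, "-", interpret_canine_pc(ratio))
```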

Uric Acid

Uric acid is a metabolic by-product of nitrogen catabolism and is found mainly in the liver. Uric acid is usually transported to the kidneys bound to albumin. In most mammals, the compound passes through the glomerulus and is largely reabsorbed by the tubule cells. It is then converted to allantoin and excreted in the urine. In Dalmatian dogs a defect in uric acid uptake into hepatocytes results in decreased conversion to allantoin. Therefore this breed excretes uric acid, and not allantoin, in urine.

Uric acid is the major end product of nitrogen metabolism in avian species. It constitutes approximately 60% to 80% of the total nitrogen excreted in avian urine and is secreted actively by the renal tubules. Measurement of plasma or serum uric acid is used as an index of renal function in birds. Uric acid can also be increased artifactually in samples from toenail clippings because of fecal urate contamination. Uric acid concentrations will increase after a meal in carnivorous birds. With renal disease, uric acid concentrations increase when the kidney has lost more than 70% of its functional capacity.

Tests of Glomerular Function

In patients with azotemia or those that are symptomatic for renal disease without azotemia, several additional tests can be performed to evaluate kidney function. These clearance studies require collection of timed, quantified urine samples along with concurrent plasma samples. Two primary types of clearance studies are performed: the effective renal plasma flow (ERPF) and GFR. The ERPF uses test substances eliminated by both glomerular filtration and renal secretion, typically the amide p-aminohippuric acid. The GFR uses test substances eliminated only by glomerular filtration, typically creatinine, inulin, or urea. The test substance is administered and urine and plasma samples collected. The ERPF or GFR are then calculated as follows:

$$\text{Clearance} = \frac{U_x \times V}{P_x}$$

where $U_x$ represents the concentration of the substance in urine (in milligrams per milliliter), $V$ represents the volume of urine collected over a defined period (in milliliters per kilogram per minute), and $P_x$ represents the plasma concentration of the substance.
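The clearance calculation, urinary excretion of the substance divided by its plasma concentration, can be sketched in Python. The function and parameter names are hypothetical. Urine flow is taken here in milliliters per minute per kilogram, an assumption needed for the units to yield a clearance in the same terms.

```python
def renal_clearance(urine_conc_mg_ml: float,
                    urine_flow_ml_min_kg: float,
                    plasma_conc_mg_ml: float) -> float:
    """Clearance (ml/min/kg) = urine concentration x urine flow / plasma concentration.

    Urine and plasma concentrations must share the same units.
    """
    if plasma_conc_mg_ml <= 0:
        raise ValueError("plasma concentration must be positive")
    return urine_conc_mg_ml * urine_flow_ml_min_kg / plasma_conc_mg_ml


# Illustrative values only, not reference data.
print(round(renal_clearance(1.0, 0.03, 0.01), 2))  # prints 3.0
```

Whether the result estimates ERPF or GFR depends only on which test substance was measured, not on the arithmetic.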

Creatinine Clearance Tests

Endogenous Creatinine Clearance: Because creatinine appears in the glomerular filtrate with negligible tubular secretion, it is a natural tracer of glomerular filtration. Fortunately, its short-term blood concentrations are stable enough to satisfy the clearance formula used for steady infusion studies of inulin and p-aminohippuric acid. The test is relatively simple (Box 3-3). A measure of blood creatinine and an accurate, timed urine collection are required for this test. Precision is of the utmost importance. Sloppy bladder catheterization and sampling ruin the results, especially with the briefer methods. The bladder must be rinsed before and after the test, saving the after-rinses with the urine for creatinine analysis. Clearance is calculated by dividing urinary creatinine excretion (urine creatinine concentration × urine volume) by plasma creatinine concentration. The estimate, if imprecise, is practical.

To avoid errors, plasma creatinine should be determined by the combination creatinine PAP test instead of the Jaffe method. The combination creatinine PAP test is an enzymatic chromogenic method to determine creatinine concentration. The Jaffe method also determines noncreatinine chromogens in plasma, which do not appear in urine. Excess serum ketones, glucose, and proteins all falsely elevate GFR estimates because of chromatic interference and cross-reactivity.

Exogenous Creatinine Clearance: Exogenous creatinine clearance is an accurate method to measure GFR in small animals. Plasma creatinine concentration increases, making plasma noncreatinine chromogens concentration negligible. This allows the application of the Jaffe method to determine creatinine concentrations (Box 3-4). Avoiding dehydration in the animal is critical in the performance of this test; free access to water must be ensured before any glomerular filtration tests.

Single-Injection Inulin Clearance

Inulin is excreted entirely by glomerular filtration, without tubular secretion, reabsorption, or catabolism. As a result, inulin clearance tests that use a constant infusion rate and quantitative urine sampling may be considered the best method to evaluate GFR. Single-injection inulin clearance is a simpler method that alternatively may be used. After a 12-hour fast (free access to water is permitted during the test), inulin is injected intravenously at a dosage of 100 mg/kg or 3 g/m² (body surface calculation gives more accurate results); serum samples are then obtained at 20, 40, 80, and 120 minutes. Total inulin clearance is calculated from the decrease of serum inulin concentration by using a two-compartment model. Normal dogs present a GFR of 83.5 to 144.3 ml/min per square meter of body surface area.

Sodium Sulfanilate

Sodium sulfanilate is removed only by glomerular filtration in dogs; its disappearance from the plasma is an index of glomerular filtration. The test can detect unilateral nephrectomy and diminished renal function in dogs before azotemia develops. The half-life of sodium sulfanilate is prolonged up to five times in horses with glomerulonephritis. Although the test also is performed in cats, the mode of sodium sulfanilate excretion is not confirmed in this species. This test is no longer widely used.

Phenolsulfonphthalein Clearance

Phenolsulfonphthalein is an organic dye excreted by the renal tubules. Nonetheless, its clearance is accepted as a measure of renal blood flow because this usually limits its efflux more than tubular secretion rates. Phenolsulfonphthalein clearance is decreased only when more than two thirds of the nephrons are nonfunctional or when renal perfusion is compromised. This test is no longer widely used.

Other Estimates of Glomerular Filtration Rate and Effective Renal Plasma Flow

These methods are performed at reference and research centers and require specialized equipment. The double-isotope method involves injection of a tracer solution and uses a gamma camera for nuclear imaging of kidneys at serial time intervals. The nonisotope method is similar, but after injection of contrast media serial blood samples are collected and analyzed with x-ray fluorescent laboratory assays.

Water-Deprivation Tests: Polyuria or polydipsia may lead to suspicions about the kidney, which may be erroneous. Diuresis and subsequent polydipsia may mean failing nephrons or kidney function disrupted by hyperadrenocorticism (Cushing’s disease), diabetes mellitus, or nephrogenic diabetes insipidus. The kidneys may be normal but not receive the signal to concentrate urine, as in neurogenic diabetes insipidus. Finally, the diuresis may be a totally appropriate renal compensation for pathologic water intake (psychogenic polydipsia).

Vasopressin or antidiuretic hormone (ADH), from the neurohypophysis, signals the kidneys to retain water by increasing the duct’s permeability to water. Water in the urine passes out of the collecting duct and into the hypertonic renal medulla, concentrating the urine that remains behind in the collecting duct. If the system fails (e.g., inappropriate diuresis), either the neuroendocrine pathway that releases ADH in response to hypovolemia/plasma hyperosmolarity has been interrupted or the nephrons are unable to respond.

Water-Deprivation Test: This test is performed by observation of the response to endogenous or exogenous ADH. The basis for this test is to dehydrate the patient safely until a definite stimulus exists for endogenous ADH release (usually at approximately 5% body weight loss). That end point may vary. When denied water, patients dehydrate at different rates and must be monitored for weight loss, clinical signs of dehydration, and increased urine osmolarity or specific gravity. At the end point, the kidney should be under strictest endocrine orders to concentrate urine. Continued diuresis and dilute urine indicate lack of endogenous ADH or unresponsive nephrons. In dogs with kidney failure, this unresponsiveness precedes azotemia.
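The weight-loss end point can be monitored with a small calculation. This sketch covers only the body weight criterion; the function names are hypothetical, the 5% default follows the approximate figure in the text, and clinical signs and urine concentration still have to be assessed separately.

```python
def percent_weight_loss(start_kg: float, current_kg: float) -> float:
    """Percentage of starting body weight lost so far."""
    if start_kg <= 0:
        raise ValueError("starting weight must be positive")
    return (start_kg - current_kg) / start_kg * 100.0


def reached_end_point(start_kg: float, current_kg: float,
                      target_percent: float = 5.0) -> bool:
    """True once weight loss meets the target (about 5% per the text)."""
    return percent_weight_loss(start_kg, current_kg) >= target_percent


# Illustrative weights only.
print(reached_end_point(20.0, 19.5))  # 2.5% lost, prints False
print(reached_end_point(20.0, 18.8))  # about 6% lost, prints True
```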

Contraindications to this test include dehydration and azotemia. Dehydrated patients risk hypovolemia and shock. They already should have maximal ADH release; if they could concentrate urine, they would. The test then is useless and dangerous, especially in animals with diabetes insipidus or neurogenic diabetes insipidus. Azotemia already attests to kidney dysfunction. Again, the test reveals nothing new and adds a prerenal component to the azotemia.

Vasopressin Response: When patients demonstrate the above-mentioned signs or a previous water-deprivation test has failed, a vasopressin response test is indicated. The vasopressin response test is simply a challenge with exogenous ADH; it focuses on the kidneys’ abilities to respond. Urine osmolarity or specific gravity is the index of function. Normal kidneys should concentrate urine with this technique despite the patient’s free access to water. Vasopressin must be handled carefully because it is a labile drug and settles out in oil suspensions. Test failures may result from use of old or poorly mixed solutions. Also, intramuscular vasopressin injection causes pain. Because of vasopressin’s vasomotor activity, its use is theoretically contraindicated in pregnancy.

In both tests, even normal kidneys may be unable to concentrate urine to normal extremes. Diuresis quickly washes solutes from the renal medulla, weakening the osmotic gradient that draws water from the collecting ducts. Gradual water deprivation over a 3- to 5-day period before use of the water deprivation test is recommended to renew renal solutes and allow an evaluation of the impact of dehydration on the animal.

The basic water deprivation and vasopressin response tests may be combined in a single protocol that may differentiate several causes of polyuria/polydipsia (Box 3-5). The modified water-deprivation test is specifically contraindicated in patients with known renal disease, uremia resulting from prerenal or primary renal disorder, or suspected or obvious dehydration.

Fractional Clearance of Electrolytes: The fractional clearance (FC), also referred to as fractional excretion (FE), of electrolytes is a mathematical manipulation that describes the excretion of specific electrolytes (particularly sodium, potassium, and phosphorus) relative to the GFR. The most commonly used FE test is that of sodium. Bicarbonate and chloride FE testing is rarely performed. The tests can differentiate prerenal from postrenal azotemia. Random, concurrent blood and urine samples are required. The FEX is calculated as follows:

$$FE_X = \frac{U_X}{P_X} \times \frac{P_{CR}}{U_{CR}} \times 100$$

where X is the electrolyte measured, which can be any of the four (sodium, potassium, phosphorus, and chloride); $U_X$ and $P_X$ are the urine and plasma concentrations, respectively, of that specific electrolyte; and $P_{CR}$ and $U_{CR}$ are the plasma and urine concentrations of creatinine, respectively. The result is expressed as a percentage. Normal results are as follows:

Urethral Pressure Profilometry: Urinary incontinence is a common complaint in canine medicine. Most cases are caused by sphincter mechanism incompetence. The specific functional test to explore the urethral sphincter mechanism is the urethral pressure profilometry. This test requires appropriate equipment and is restricted to referral institutions. A double-sensor microtip pressure transducer catheter is used. The catheter is inserted through the urethra into the urinary bladder. The tip sensor measures intravesical pressure at the same time as the other sensor records urethral resistance to perform a continuous comparison.

Inorganic Phosphorus: Serum inorganic phosphorus (Pi) is usually the reciprocal of serum calcium. Normally, serum Pi is reabsorbed in kidney tubules. This mechanism is under hormonal control (parathyroid hormone) and is affected by serum pH. Initially, renal damage that alters the GFR leads to decreased urinary Pi and increased serum Pi. Subsequent alteration in calcium and Pi leads to increase in serum calcium and decrease in serum Pi. See the electrolyte information later in this chapter for additional information on testing for Pi.

Enzymuria: Many of the chemical tests performed on serum or plasma can also be performed on urine samples. Enzymes that may be present in urine of patients with renal disease include urinary GGT and urinary N-acetyl-d-glucosaminidase (NAG). Urinary GGT and NAG are enzymes released from damaged tubule cells. Comparison of the units of GGT or NAG per milligram of creatinine can indicate the extent of renal damage. Both GGT and NAG increase rapidly with nephrotoxicity, and increases occur sooner than changes in serum creatinine, creatinine clearance, or fractional excretion of electrolytes.

PANCREAS ASSAYS

The pancreas is actually two organs, one exocrine and the other endocrine, held together in one stroma.

The exocrine portion, also referred to as the acinar pancreas, comprises the greatest portion of the organ. This portion secretes into the small intestine an enzyme-rich juice that contains the enzymes necessary for digestion. The three primary pancreatic enzymes are trypsin, amylase, and lipase. These digestive enzymes are released into the lumen of other organs through a duct system. Trauma to pancreatic tissue is often associated with pancreatic duct inflammation that results in a backup of digestive enzymes into peripheral circulation.

Interspersed within the exocrine pancreatic tissue are arrangements of cells that, in a histologic section, take on the appearance of “islands” of lighter-staining tissue. These are called the islets of Langerhans. Four types of islet cells are present but they cannot be distinguished on the basis of their morphologic characteristics. The four cell types are designated α, β, δ, and PP cells. The δ and PP cells comprise less than 1% of the islet cells and secrete somatostatin and pancreatic polypeptide, respectively. β-Cells comprise approximately 80% of the islet and secrete insulin. The remaining area, nearly 20%, consists of α-cells that secrete glucagon and somatostatin. The pancreas has little regenerative ability. When pancreatic islets are damaged or destroyed, pancreatic tissue becomes firm and nodular with areas of hemorrhage and necrosis. These islets are no longer able to function. Diseases of the pancreas may result in inflammation and cellular damage that causes leakage of digestive enzymes or insufficient production or secretion of enzymes.

Exocrine Pancreas Tests

The tests commonly performed to evaluate the acinar functions of the pancreas include amylase and lipase. Trypsinlike immunoreactivity and serum pancreatic lipase immunoreactivity are also available as tests for pancreatic function. In cats, serum amylase and lipase activities have been shown to have limited clinical significance in the diagnosis of pancreatitis. In experimentally induced pancreatitis in cats, serum amylase actually decreases. Serum activities of both enzymes are frequently normal in cats with pancreatitis.

Amylase

The primary source of amylase is the pancreas, but it is also produced in the salivary glands and small intestine. Increases in serum amylase are nearly always caused by pancreatic disease, especially when accompanied by increased lipase levels. The rise in blood amylase level is not always directly proportional to the severity of pancreatitis. Serial determinations provide the most information.

Amylase functions to break down starches and glycogen into sugars, such as maltose and residual glucose. Increased levels of amylase appear in blood during acute pancreatitis, flare-ups of chronic pancreatitis, or obstruction of the pancreatic ducts. Enteritis, intestinal obstruction, or intestinal perforation may also result in increased serum amylase from increased absorption of intestinal amylase into the bloodstream. In addition, because amylase is excreted by the kidneys, a decrease in GFR for any reason can lead to increased serum amylase. Serum amylase activity greater than three times the reference range usually suggests pancreatitis.
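The threefold guideline can be illustrated with a short calculation. The sketch below assumes that "three times the reference range" means three times the upper limit of the laboratory's own reference interval; that reading, the function names, and the example numbers are assumptions for illustration and are not reference values.

```python
def fold_over_upper_limit(result: float, upper_reference_limit: float) -> float:
    """Express a serum amylase result as a multiple of the upper reference limit."""
    if upper_reference_limit <= 0:
        raise ValueError("upper reference limit must be greater than zero")
    return result / upper_reference_limit


def exceeds_threefold(result: float, upper_reference_limit: float) -> bool:
    """True when the result is more than three times the upper reference limit.

    Assumption: the threefold guideline is applied to the upper limit of the
    reference interval supplied by the laboratory that ran the assay.
    """
    return fold_over_upper_limit(result, upper_reference_limit) > 3.0


# Illustrative numbers only; use the reference interval of the method in use.
print(exceeds_threefold(result=3500.0, upper_reference_limit=1000.0))
print(exceeds_threefold(result=1800.0, upper_reference_limit=1000.0))
```

A result above this multiple only suggests pancreatitis. Intestinal disease and decreased GFR, noted above, can also raise serum amylase, so the flag is a prompt for further evaluation rather than a diagnosis.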

Two amylase test methods are available: the saccharogenic method and the amyloclastic method. The saccharogenic method measures production of reducing sugars as amylase catalyzes the breakdown of starch. The amyloclastic method measures the disappearance of starch as it is broken down to reducing sugars through amylase activity. Calcium-binding anticoagulants, such as EDTA, should not be used because amylase requires the presence of calcium for activity. The presence of lipemia may reduce amylase activity. The saccharogenic method is not ideal for canine samples because maltase in canine samples may artificially elevate assay results. Normal canine and feline amylase values can be up to 10 times higher than those in human beings. Therefore samples may have to be diluted if tests designed for human samples are used.
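When a sample is diluted before testing, the measured value must be multiplied by the dilution factor to recover the result for the undiluted sample. The following is a minimal sketch of that arithmetic; the function names and the example volumes and reading are hypothetical, and the dilution protocol recommended by the test manufacturer should always take precedence.

```python
def dilution_factor(sample_volume: float, diluent_volume: float) -> float:
    """Total volume divided by sample volume (both in the same units)."""
    if sample_volume <= 0 or diluent_volume < 0:
        raise ValueError("sample volume must be positive and diluent volume non-negative")
    return (sample_volume + diluent_volume) / sample_volume


def corrected_result(measured: float, sample_volume: float, diluent_volume: float) -> float:
    """Scale the value measured on the diluted sample back to the undiluted sample."""
    return measured * dilution_factor(sample_volume, diluent_volume)


# One part serum plus nine parts diluent gives a dilution factor of 10.
# The measured value of 250 is an illustrative number, not patient data.
print(dilution_factor(1.0, 9.0))
print(corrected_result(250.0, 1.0, 9.0))
```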

Lipase

Nearly all serum lipase is derived from the pancreas. The function of lipase is to break down the long-chain fatty acids of lipids. Excess lipase is normally filtered through the kidneys, so lipase levels tend to remain normal in the early stages of pancreatic disease. Gradual increases are seen as disease progresses. With chronic, progressive pancreatic disease, damaged pancreatic cells are replaced with connective tissue that cannot produce enzyme. As this occurs, a gradual decrease in both amylase and lipase levels is seen.

Test methods for determination of lipase levels usually are based on hydrolysis of an olive oil emulsion into fatty acids by the lipase present in patient serum. The quantity of sodium hydroxide required to neutralize the fatty acids is directly proportional to lipase activity in the sample. Newer tests that detect canine lipase by immunologic methods are available from some reference laboratories.

Lipase assay may be more sensitive for detecting pancreatitis than is amylase assay. The degree of lipase activity, like amylase activity, is not directly proportional to the severity of pancreatitis. Determinations of blood lipase and amylase activities usually are requested at the same time to evaluate the pancreas.

Increased lipase activity is also seen with renal and hepatic dysfunction, although the exact mechanisms for this are unclear. Steroid administration is correlated with increased lipase activity with no concurrent change in amylase activity.

Amylase and Lipase in Peritoneal Fluid

Comparison of amylase and lipase activity in peritoneal fluid with that in serum may provide additional diagnostic information. A finding of higher amylase and lipase activity in peritoneal fluid than in serum strongly suggests pancreatitis, provided intestinal perforation has first been ruled out.

Trypsin

Trypsin is a proteolytic enzyme that aids digestion by catalyzing the reaction that breaks down the proteins of ingested food. Trypsin activity is more readily detectable in feces than in blood. For this reason, most trypsin analyses are done on fecal samples. Trypsin is normally found in feces, and its absence is abnormal.

Two fecal test methods are used in the laboratory: the test tube method and the x-ray film test. The test tube method involves mixing fresh feces with a gelatin solution. The test solution does not become a gel if trypsin is present in the sample to break down the protein (gelatin). If trypsin is absent, the solution becomes a gel. The x-ray film test uses the gelatin coating on undeveloped x-ray film to test for the presence of trypsin. A strip of x-ray film is placed in a slurry of feces and bicarbonate solution. If trypsin is present in the fecal sample, the gelatin coating is removed from the film upon rinsing with water. If no trypsin is present, the gelatin coating remains on the film after rinsing. The test tube method is considered more accurate than the x-ray film test in evaluating fecal trypsin proteolytic activity.

Only fresh feces should be used. Fecal trypsin activity may be decreased if the patient has recently ingested raw egg whites, soybeans, lima beans, heavy metals, citrate, fluoride, or some organic phosphorus compounds. Calcium, magnesium, cobalt, and manganese in the feces may increase trypsin activity. Proteolytic bacteria in the fecal sample may result in false-positive or apparently normal results, especially in older samples.

Serum Trypsinlike Immunoreactivity

Serum trypsinlike immunoreactivity (TLI) is a radioimmunoassay that uses antibodies to trypsin. The test can detect both trypsinogen and trypsin. The antibodies are species specific. Trypsin and trypsinogen are produced only in the pancreas. With pancreatic injury, trypsinogen is released into the extracellular space and converted to trypsin, which diffuses into the bloodstream. The test is available only for the dog and cat.

TLI provides a sensitive and specific test for diagnosis of exocrine pancreatic insufficiency (EPI) in dogs. Dogs with EPI have a serum TLI of less than 2.5 µg/L. Normal dogs have a range of 5 to 35 µg/L. Dogs with other causes of malassimilation may have normal serum TLI. Dogs with chronic pancreatitis may have normal TLI values or values between 2.5 and 5 µg/L. Normal cats have 14 to 82 µg/L TLI, whereas cats with EPI have less than 8.5 µg/L.
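The cutoffs quoted above can be arranged as a small lookup to show how a result falls into each band. This is a sketch only: the dictionary, function name, and band labels are hypothetical, values are assumed to be in the same units as the cutoffs in the text, and the reference laboratory's own intervals should be used in practice. The text does not describe the feline band between the EPI cutoff and the reference range, so the sketch labels that band without interpreting it.

```python
# Cutoffs as quoted in the text, in the units used there.
TLI_CUTOFFS = {
    "dog": {"epi_below": 2.5, "normal_low": 5.0, "normal_high": 35.0},
    "cat": {"epi_below": 8.5, "normal_low": 14.0, "normal_high": 82.0},
}


def interpret_tli(species: str, tli: float) -> str:
    """Place a serum TLI result into one of the bands described in the text."""
    cutoffs = TLI_CUTOFFS[species]
    if tli < cutoffs["epi_below"]:
        return "consistent with EPI"
    if tli < cutoffs["normal_low"]:
        # In dogs this band may be seen with chronic pancreatitis.
        return "between EPI cutoff and reference range"
    if tli <= cutoffs["normal_high"]:
        return "within reference range"
    return "above reference range"


for species, value in [("dog", 1.8), ("dog", 3.0), ("dog", 20.0), ("cat", 90.0)]:
    print(species, value, interpret_tli(species, value))
```

A value within the reference range does not exclude chronic pancreatitis in dogs, and factors other than pancreatic mass can raise TLI, so the band is a starting point for interpretation rather than a diagnosis.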

Serum TLI decreases in parallel with functional pancreatic mass. The inflammation associated with acute and probably chronic pancreatitis may enhance leakage of trypsinogen and trypsin from the pancreas and increase TLI. Also, decreased GFR increases TLI (trypsinogen is a small molecule that easily passes through the glomerular filter). Serum TLI is an important indicator of functional pancreatic mass. It is most informative if coupled with N-benzoyl-l-tyrosyl-p-aminobenzoic acid (BTPABA) and fecal fat results to characterize and diagnose malassimilation.

Serum TLI increases after eating (especially proteins), although values remain within reference intervals. Therefore food should be withheld for at least 3 hours and preferably 12 hours before taking a blood sample. Exogenous pancreatic enzyme supplementation does not alter TLI. The blood is allowed to clot at room temperature, and the serum is stored at −20° C until assay.

Serum Pancreatic Lipase Immunoreactivity

Serum feline pancreatic lipase immunoreactivity (fPLI) is specific for pancreatitis. Its use is now recommended, in place of the previously validated serum feline trypsinlike immunoreactivity test, as a serum test to diagnose cats with clinical signs of pancreatitis.

Endocrine Pancreas Tests

A variety of tests are available to evaluate the endocrine functions of the pancreas. In addition to the traditional blood glucose tests, other tests now available include fructosamine, β-hydroxybutyrate, and glycosylated hemoglobin. Urinalysis, serum cholesterol, and triglyceride tests also provide information on the function of the pancreas.

Glucose

Regulation of blood glucose levels is complex. Glucagon, thyroxine, growth hormone, epinephrine, and glucocorticoids are all agents favoring hyperglycemia. They boost blood glucose levels by encouraging glycogenolysis, gluconeogenesis, and/or lipolysis while discouraging glucose entry into cells. Insulin is the hypoglycemic hormone. In addition to promoting glucose flux into its target cells, it triggers anabolism, a process that converts glucose to other substances. This regulatory effect prevents the blood glucose concentration from exceeding the renal threshold and prevents glucose from spilling into the urine.

The pancreatic islets respond directly to blood glucose concentrations and release insulin (from the beta cells) or glucagon (from the alpha cells) as needed. Glucagon release also directly stimulates insulin release. Epinephrine is under direct sympathetic neural control; hyperglycemia is one aspect of the classic “fight or flight” state. The other hormones mentioned respond to hypothalamic/pituitary command. At any point in time, most of these agents are acting, shifting the blood glucose concentration up or down.

Because only insulin lowers blood glucose levels, aberrations of insulin action have the most obvious clinical effects. Hypofunction (diabetes mellitus) or hyperfunction (hyperinsulinism) can occur.

The blood glucose level is used as an indicator of carbohydrate metabolism in the body and may also be used as a measure of endocrine function of the pancreas. The blood glucose level reflects the net balance between glucose production, such as dietary intake and conversion from other carbohydrates, and glucose utilization, which is expended energy and conversion to other products. It also may reflect the balance between blood insulin and glucagon levels.

Glucose utilization depends on the amount of insulin and glucagon produced by the pancreas. As the insulin level increases, so does the rate of glucose utilization, resulting in decreased blood glucose levels. Glucagon acts as a stabilizer to prevent blood glucose levels from becoming too low. As the insulin level decreases (as in diabetes mellitus), so does glucose utilization, resulting in increased blood glucose concentration.

Many tests are available for blood glucose. Some of these react only with glucose, whereas others may quantitate all sugars in the blood. End point and kinetic assays are available. The kinetic enzymatic assays tend to be the most accurate and precise. Samples must be taken from a properly fasted animal. Serum and plasma for glucose testing must be separated from the erythrocytes immediately after blood collection. Glucose levels may drop 10% an hour if the sample of plasma is left in contact with erythrocytes at room temperature. Even the use of an SST may not be adequate to prevent this. Mature erythrocytes use glucose for energy and, in a blood sample, they may decrease the glucose level enough to give false-normal results if the original sample had an elevated glucose level. If the sample originally had a normal glucose level, erythrocytes may use enough glucose to decrease the level to below normal or to zero. If the plasma cannot be removed immediately, the anticoagulant of choice is sodium fluoride at 6 to 10 mg/ml of blood. Sodium fluoride may be used as a glucose preservative with EDTA at 2.5 mg/ml of blood. Refrigeration slows glucose utilization by erythrocytes.
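The effect of delayed separation can be illustrated with a rough calculation. The sketch below assumes that the 10% loss applies to whatever glucose remains at the start of each hour (a compounding model); the text gives only the hourly figure, so this model, the function names, and the example values are assumptions. The second function simply scales the sodium fluoride range of 6 to 10 mg/ml to a given blood volume.

```python
def estimated_glucose(initial: float, hours: float, loss_per_hour: float = 0.10) -> float:
    """Estimate glucose remaining after unseparated storage at room temperature.

    Assumption: a constant fractional loss per hour, applied to the amount
    remaining (compounding). Real losses vary with temperature and cell counts.
    """
    if hours < 0:
        raise ValueError("hours must be non-negative")
    return initial * (1.0 - loss_per_hour) ** hours


def sodium_fluoride_range_mg(blood_volume_ml: float) -> tuple[float, float]:
    """Sodium fluoride needed at 6 to 10 mg per ml of blood, as (low, high) in mg."""
    return (6.0 * blood_volume_ml, 10.0 * blood_volume_ml)


# Illustrative starting value of 100 (same units as the assay reports).
for h in (0, 1, 2, 4):
    print(h, round(estimated_glucose(100.0, h), 1))

print(sodium_fluoride_range_mg(3.0))
```

Under this model a sample left unseparated for 4 hours would retain roughly two thirds of its original glucose, which shows how an elevated value can drift toward the reference interval before the test is run.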

Fructosamine

Glucose can bind a variety of structures, including proteins. Fructosamine represents the irreversible reaction of glucose bound to protein, particularly albumin. When glucose concentrations are persistently elevated in blood as in diabetes mellitus, increased binding of glucose to serum proteins occurs. The finding of increased fructosamine indicates a persistent hyperglycemia. Because the half-life of albumin in dogs and cats is 1 to 2 weeks, fructosamine provides an indication of the average serum glucose over that period. Fructosamine levels respond more rapidly to alterations in serum glucose than does glycosylated hemoglobin. However, serum fructosamine may be artifactually reduced in patients with hypoproteinemia.

Glycosylated Hemoglobin

Glycosylated hemoglobin represents the irreversible reaction of hemoglobin bound to glucose. The finding of increased glycosylated hemoglobin indicates a persistent hyperglycemia. The test result is a reflection of the average glucose concentration over the lifespan of an erythrocyte—3 to 4 months in dogs and 2 to 3 months in cats. Patients that are anemic may have artifactually reduced levels of glycosylated hemoglobin.

β-Hydroxybutyrate

Ketone bodies can also be detected in plasma. The ketone produced in greatest abundance in ketoacidotic patients is β-hydroxybutyrate. However, many tests for serum ketones only detect acetone. Tests for β-hydroxybutyrate that use enzymatic, colorimetric methods are now becoming available for use in the veterinary clinic.

Glucose Tolerance

Glucose tolerance tests directly challenge the pancreas with a glucose load and measure insulin’s effect by evaluation of blood or urine glucose concentrations. If adequate insulin is released and its target cells have healthy receptors, the artificially elevated blood glucose level peaks 30 minutes after ingestion and begins to drop, reaching a normal value within 2 hours, and no glucose appears in the urine. A normal blood glucose level at 2 hours postprandial may rule out diabetes mellitus. Prolonged hyperglycemia and glucosuria are consistent with diabetes mellitus. Profound hypoglycemia after challenge may indicate a glucose-responsive, hyperactive beta-cell tumor of the pancreas. This test may be simplified by determining a single 2-hour postprandial glucose.