Sensory Aids for Persons With Visual Impairments

FUNDAMENTAL APPROACHES TO SENSORY AIDS

Augmentation of an Existing Pathway

Use of an Alternative Sensory Pathway

PRINCIPLES OF COMPUTER ADAPTATIONS FOR VISUAL IMPAIRMENTS

GUI Problems and the Blind Computer User

READING AIDS FOR PERSONS WITH VISUAL IMPAIRMENTS

Access to Visual Computer Displays for Individuals With Low Vision

Devices That Provide Automatic Reading of Text

Camera and Scanner Characteristics for Automatic Reading

Braille as a Tactile Reading Substitute

Portable Braille Note Takers and Personal Organizers

Speech as an Auditory Reading Substitute

Synthetic Speech Output Reading Machines

Access to Visual Computer Displays for Individuals Who Are Blind

Studies of Computer Use by Visually Impaired Adults

User Agents for Access to the Internet

Making Mainstream Technologies Accessible

MOBILITY AND ORIENTATION AIDS FOR PERSONS WITH VISUAL IMPAIRMENTS

Electronic Travel Aids for Orientation and Mobility

Global Positioning System–Based Navigation Aids for the Blind

On completing this chapter, you will be able to do the following:

1 Describe the major approaches to sensory substitution, including the advantages and disadvantages of each

2 Describe device use for reading and mobility by persons who have visual impairment

3 Describe how computer outputs are adapted for individuals with visual limitations

4 Describe the major approaches to Internet access for persons with visual impairments

When an individual has a sensory impairment, assistive technologies can assist with the input of information. This chapter emphasizes approaches that are used to either aid or replace seeing and hearing. These include sensory aids that are intended for general use and assistive technologies that are used specifically for providing visual access to computers. Assessment considerations for sensory function are described in Chapter 4. Patients with low vision were surveyed to determine their major needs for assistive devices (Stelmack et al, 2003). The survey covered 63 activities in the categories of travel, food and shopping, communications, household tasks, self-care, recreation and socialization, and contrast. The informants were 149 individuals aged 51 to 96 years (mean 76 years), two thirds of whom were male. Participants were asked whether they could perform each activity independently, whether they used a low-vision device, and whether they thought it was important to use a device to perform the activity independently. The highest-ranked items involved travel (finding a clear path, identifying landmarks, recognizing traffic signals, stepping off a curb), self-care (applying makeup, shaving), reading (large print, signing checks, finding food in the kitchen), and recreation (seeing television, recognizing persons close up); Stelmack et al (2003) provide detailed results. Assistive devices designed to meet the needs identified in the Stelmack survey are discussed in this chapter, beginning with the fundamental principles associated with sensory aids.

FUNDAMENTAL APPROACHES TO SENSORY AIDS

Chapters 2 and 3 describe the human component of the human activity assistive technology (HAAT) model in some detail. Two primary intrinsic enablers of the human in this model are sensing and perception. If there are impairments in either of these functions, it is necessary to use sensory aids. When sensory aids are designed or applied, the level of impairment becomes a critical issue. If there is sufficient residual function in the primary sensory system being aided, the input is augmented to make it useful to the person. For example, eyeglasses magnify (augment) the level of visual information. On the other hand, if there is insufficient residual sensory capability, then the sensory aid must use an alternative sensory pathway. For example, braille (tactile pathway) can be used for reading when vision is not functional. We describe both augmentation and replacement for visual information in this section.

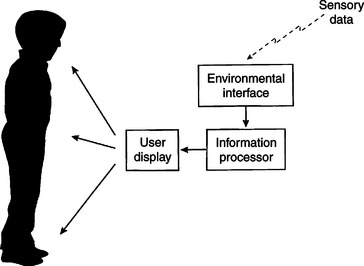

Figure 8-1 shows the major components of a sensory aid based on the parts of the assistive technology component of the HAAT model. The environmental interface detects the sensory data that the human cannot obtain through his or her own sensory system. This is typically a camera for visual data, a microphone for auditory data, and pressure sensors for tactile data. The environmental interface signal is fed to an information processor, the function of which depends on the type of aid. For sensory aids that use the same sensory pathway, the information processor primarily amplifies the signal. Examples include closed-circuit television (CCTV) for visual input and hearing aids for auditory input. In other cases, the information processor may be more complicated. For example, in an auditory substitution reading device, the information processor may take visual information from the sensor, convert it to speech, and then send it to the user as auditory information. In the case of the sensory aid, the human/technology interface is a user display, which portrays the sensory information for the human user. The processed information is presented to the user so that the alternative pathway can process it. For the visual pathway this is a visible display (e.g., a video monitor), for the auditory pathway it is an audio display (e.g., a speaker), and for the tactile pathway it is a vibrating pin or electrode array through which pressure or touch data are provided to the user.
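The three components just described can be pictured as a simple pipeline. The following Python sketch is illustrative only: the class name `SensoryAid` and the stand-in functions `camera`, `text_to_speech_processor`, and `speaker` are hypothetical and do not correspond to any real device or library. It models an auditory substitution reading device in which visual data are sensed, converted, and presented through the auditory pathway.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class SensoryAid:
    """Three-stage sensory aid: sense -> process -> display."""
    environmental_interface: Callable[[], str]   # e.g., camera or microphone
    information_processor: Callable[[str], str]  # amplify or convert the signal
    user_display: Callable[[str], str]           # visual, auditory, or tactile output

    def run(self) -> str:
        raw = self.environmental_interface()
        processed = self.information_processor(raw)
        return self.user_display(processed)


# Hypothetical stand-ins for real hardware and software.
def camera() -> str:
    return "printed text: EXIT"


def text_to_speech_processor(signal: str) -> str:
    # Substitution: visual data are converted for the auditory pathway.
    return signal.replace("printed text: ", "")


def speaker(message: str) -> str:
    return f"[spoken] {message}"


reading_aid = SensoryAid(camera, text_to_speech_processor, speaker)
print(reading_aid.run())
```

An augmentation aid such as a CCTV would use the same structure, but its information processor would enlarge the signal rather than convert it to another modality.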

Augmentation of an Existing Pathway

For someone who has low vision, the primary pathway (i.e., the one normally used for input) is still available; it is just limited. The limitation may be one of several types. The most common type of limitation is one of intensity. For visual information, this limitation means that the size of the input signal is too small to be seen. Eyeglasses are the most common type of aid used for this problem, but other ways can be used to magnify the signal. The second type of impairment is referred to as a frequency or wavelength limitation. For visual input, this manifests as difficulty in discerning colors or the contrast between foreground and background, and this problem can be addressed with filters or by varying contrast (e.g., black on white rather than white on black). Finally, there are field limitations. This term is most commonly used in describing visual loss, and the field may be limited in several ways (see Figure 3-4). The most common approach to problems of this type is to use lenses that are designed to widen the field.

Use of an Alternative Sensory Pathway

When a sensory input modality is so impaired that there can be no useful input of information through that channel, we must substitute an alternative sensory system. The use of braille for reading by persons who are blind is an example of tactile substitution for visual input. Tactile and auditory systems replace the visual system, and visual and tactile systems substitute for auditory input of information. Visual and tactile substitutions for auditory information are discussed in Chapter 9. When this type of substitution is made, the assistive technology practitioner (ATP) must be aware of fundamental differences among the tactile, visual, and auditory systems.

Tactile Substitution.

The tactile system has been used as the basis for many visual substitution systems. Visual information is spatially organized (Nye and Bliss, 1970). This means that visual information is represented in the central nervous system by the relationship of objects to each other in space; that is, the left, right, up, down, far, and near features of objects are preserved. In contrast, the auditory system is temporally organized (Kirman, 1973). This means that it is the time relationships in auditory signals that provide information. For example, it is the temporal sequence of sounds in speech that the auditory system uses to form words and derive meaning. Finally, tactile information is both temporally and spatially organized (Kirman, 1973), and sensory input from the tactile system requires both spatial and temporal cues. For example, the fingers are capable of distinguishing fine features such as those found on coins. However, to distinguish one denomination of coin from another, it is necessary to manipulate them in the hand. This movement of the coins provides temporal (time sequence) information that helps clarify the spatial information, and it is very difficult to distinguish two denominations of coins merely by placing a hand on top of them without movement. This combination of movement and texture is referred to as spatiotemporal information. The combination of tactile and kinesthetic or proprioceptive information is called the haptic sensory system.

Kirman (1973) presents an example that illustrates the differences between visual and tactile information for reading. Print on a page is organized spatially. People read by using saccadic eye movements, which jump from one group of letters to another. With each new point of focus, new information is taken in. This allows the visual system (including the eyes, peripheral pathways, and central nervous system components) to use its spatial feature extraction to recognize shapes as letters, to assemble them into words, and to associate meaning with them. In contrast, a person reading with braille moves his or her hand across the line of raised dots, obtaining both spatial (the organization of the six dots in each braille cell) and temporal (the moving pattern under the finger) information. If the sighted person were to use the method used with braille, the text would constantly move before the eyes, and this would result in a blurred image because the spatial information would be constantly changing. Thus we can say that the movement (temporal aspect) interferes with the visual input of information. On the other hand, if the braille user were to use the approach used by the sighted reader, he or she would place a finger on a character, input the information, and then jump to the next character. This would severely limit the input of braille information because the movement required by the tactile system would be absent. Thus the visual and tactile methods of sensory input are very different, which must be taken into account when one system is substituted for the other.

When vision is used for mobility rather than reading, there are some differences. In this case the visual image is constantly changing as the individual walks. The eyes scan the environment, and information is derived from the spatial arrangement of objects and people and from changes in the person’s position relative to these objects as he or she moves. The visual system (including oculomotor components) functions to stabilize images on the retina for input of data, even during movement. This maximizes input of changing spatial information. Ways in which persons with visual impairments use other senses and assistive devices for mobility are discussed in the section on mobility later in this chapter.

Auditory Substitution.

The auditory system has been used to substitute for visual information in several ways. Some of these have been more successful than others, and the reasons for success or failure illustrate the challenges of substituting one sense for another. The least successful approaches have been those that converted a visual image of letters into a set of tones. One such device was the Stereotoner (Smith, 1972). The environmental interface for this device was a camera consisting of a set of horizontal slits. As the camera passed over a letter, a black area (i.e., a part of a letter) resulted in a tone being produced and a white area (no letter) resulted in silence. As the camera moved over a letter, a series of tones was heard as changing musical chords. Although some individuals were able to use this information at a reading rate of 40 words per minute, the device was generally unsuccessful. Cook (1982) cites several reasons for this. First, the device required the user to recognize a chord pattern, then to assemble that into a letter, and then to put the letters together into a word that was meaningful in the context of the whole sentence. This is a difficult and unnatural process for the auditory system. Second, the necessity to read letter by letter using this approach resulted in a slow input speed and placed additional memory requirements on the user. Finally, the Stereotoner was tiring to the user because of the intense concentration required. The major lesson to be learned from this example is that the auditory system is ideally suited to the receipt of language information in certain forms (e.g., speech), but it is poorly suited to complex signals that represent spatial patterns, as in the case of the Stereotoner. This is the primary reason that reading devices using auditory substitution all use speech as the mode of presentation of information.
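The letter-to-chord conversion described above can be sketched in a few lines of Python. This is a simplified illustration, not a description of the actual Stereotoner circuitry: the number of slits, the tone frequencies in `SLIT_TONES_HZ`, and the bitmap `LETTER_L` are all assumptions chosen for the example.

```python
# Hypothetical tone (Hz) assigned to each horizontal slit, top to bottom.
SLIT_TONES_HZ = [880, 784, 698, 587, 523]

# A 5-row bitmap of the letter "L": "#" is ink (black), "." is paper (white).
LETTER_L = [
    "#...",
    "#...",
    "#...",
    "#...",
    "####",
]


def chords_for_letter(bitmap):
    """Return one chord (list of tones) per column as the camera sweeps left to right."""
    n_cols = len(bitmap[0])
    chords = []
    for col in range(n_cols):
        # A slit over a black area sounds its tone; a slit over white is silent.
        chord = [tone for row, tone in zip(bitmap, SLIT_TONES_HZ) if row[col] == "#"]
        chords.append(chord)
    return chords


for step, chord in enumerate(chords_for_letter(LETTER_L)):
    print(step, chord if chord else "silence")
```

Even for this simple letter, the listener must hold a sequence of chords in memory and map it back to a shape, which suggests why the approach was slow and tiring.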

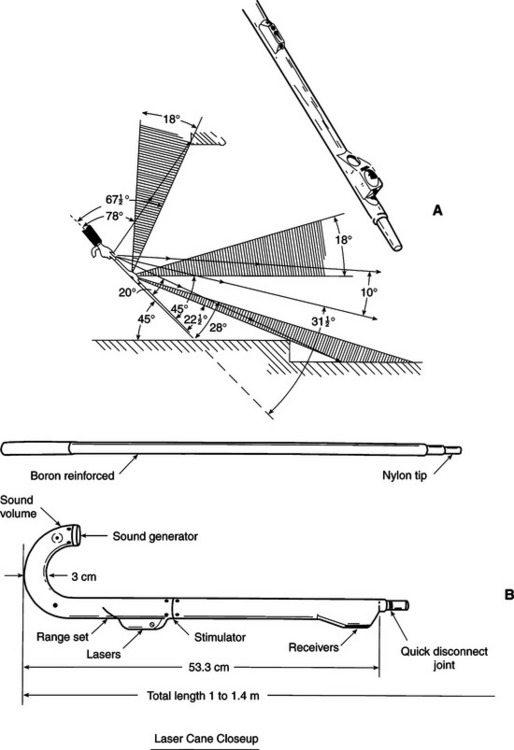

Devices for visual mobility have used auditory substitution with greater success. This is because mobility depends much more on gross cues than on precise spatial information as in reading. In mobility, the problem becomes one of identifying large objects as potential hazards.

PRINCIPLES OF COMPUTER ADAPTATIONS FOR VISUAL IMPAIRMENTS

Computer interaction is bidirectional, and the ATP must understand how computer outputs can be adapted for persons with sensory impairments. User output from a computer is generally provided by a visual display. This type of display is also referred to as soft copy. For both general-purpose computers and special-purpose computers built into assistive devices with displays, video display terminals, flat-panel displays, and liquid crystal displays are generally used as output devices. The other type of output from a computer is in a permanent form, or hard copy, from a printer. Computers also provide auditory outputs in sound, music, or synthetic speech. These outputs are important to individuals who have visual impairments.

Standard visual computer outputs are not suitable for use by persons who have vision impairments. The term low vision indicates that the individual is able to use the visual system for reading but that the standard size, contrast, or spacing are inadequate. The term blind refers to individuals for whom the visual system does not provide a useful input channel for computer output displays or printers. For individuals who are blind, alternative sensory pathways of either audition (hearing) or touch (feeling) must be used to provide input. Because low vision and blindness needs are so different from each other, they are discussed separately.

Graphical User Interface

For a human to interact with a computer, there must be an effective communication channel. The most commonly used channel today, the graphical user interface (GUI), is established for nondisabled users through the keyboard or mouse for input and a visual display or speakers for output. What makes these peripheral elements into a user interface is the way in which they interact with the internal computer programs. Input of data, storage and processing, and output are all handled by the computer operating system. Some types of user interfaces are more suitable for adaptation of the computer to provide physical or visual access to the computer. The ATP must understand the various types of user interfaces and how they affect access.

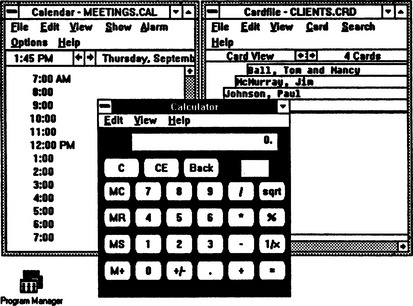

The GUI has three distinguishing features: (1) a mouse pointer, which is moved around the screen, (2) a graphical menu bar, which appears on the screen, and (3) one or more windows, which provide a menu of choices (Hayes, 1990). Movement of the mouse or a mouse equivalent (e.g., keystrokes, trackball, head pointer, or joystick) causes the pointer to move around the screen. Two primary characteristics of GUIs are particularly important in assistive technology applications: (1) the use of graphical menus and icons to which the user can point and click for input instead of using the keyboard and (2) multitasking capabilities, which allow more than one program to be loaded and run simultaneously. The creation of a graphical environment can save typing, reduce effort, and increase accuracy, and the use of icons generally helps with recall and ease of use. The GUI allows the use of windows, which partition the screen into smaller screens, each showing a particular application. When an application or function is opened or run by clicking (or sometimes double clicking), a feature (e.g., a calculator) or application (e.g., a word processor) is displayed in a window. Several windows may be open at the same time. Figure 8-2 shows multiple windows open and examples of menus and dialog boxes used for manipulating data and information. Specific implementations of GUIs have slightly different modes of operation, but the basic principles are similar to those described here.

Figure 8-2 An example of a GUI with several windows open for different applications. (From Microsoft Windows manual, Microsoft Corp., Redmond, Wash.)

The GUI has both positive and negative implications for persons with disabilities. The positive features are those that apply to nondisabled users. The major limitation of GUI use in assistive technology is that the user may not have the necessary physical (eye-hand coordination) and visual skills. In addition, adaptation for alternative input or output devices is often difficult, and adaptations must be redone when changes are made to the basic operating system. The GUI is the standard user interface because of its ease of operation for novices and its consistency of operation for experts. The latter ensures that every application behaves in basically the same way (e.g., screen icons for the same task look the same, operations such as opening and closing files are always the same). Adaptations of the GUI for persons with disabilities are discussed in following sections.

The GUI presents unique and difficult problems to the blind computer user. Early computer user interfaces used a command line interface (CLI) in which commands were typed and then executed by the computer. There are fundamental differences between the ways in which a text-only CLI and a GUI provide output to the video screen. These differences present access problems related both to the ways in which internal control of the computer display is accomplished and to the ways in which the GUI is used by the computer user (Boyd, Boyd, and Vanderheiden, 1990). CLI-type interfaces used a memory buffer to store text characters for display. Because all the displayed text can be represented by an ASCII code, it is relatively easy to use a software program and to divert text from the screen to a speech synthesizer. Early screen readers operated on this principle. However, these screen readers were unable to provide access to charts, tables, or plots with graphical features. This type of system is also limited in the features that can be used with text. For example, all text is the same size, shape, and font. Enlarged characters or alternative graphical forms are not possible with a CLI-type of system, and this limits its usefulness to sighted users. The GUI uses a totally different approach to video display control that creates many more options for the portrayal of graphical information. Because each character or other graphical figure is created as a combination of dots, letters may be of any size, shape, or color and many different graphical symbols can be created. This is useful to sighted computer users because they can rely on “visual metaphors” (Boyd, Boyd, and Vanderheiden, 1990) to control a program. Visual metaphors use familiar objects to represent computer actions. For example, a trash can may be used for files that are to be deleted, and a file cabinet may represent a disk drive. The graphical labels used to portray these functions are referred to as icons.
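The reason a text buffer is easy to divert to speech can be shown with a minimal Python sketch. It assumes a tiny 8-column screen and a stand-in `speak` function; the function names are hypothetical, and no real screen reader or synthesizer interface is implied.

```python
COLUMNS = 8  # hypothetical screen width; real text screens were typically 80 columns


def make_buffer(lines, columns=COLUMNS):
    """Build a flat buffer of ASCII codes, one per character cell, padded with spaces."""
    buffer = []
    for line in lines:
        padded = line[:columns].ljust(columns)
        buffer.extend(ord(ch) for ch in padded)
    return buffer


def read_row(buffer, row, columns=COLUMNS):
    """Recover the text of one screen row from the buffer."""
    cells = buffer[row * columns:(row + 1) * columns]
    return "".join(chr(code) for code in cells).rstrip()


def speak(text):
    """Stand-in for a speech synthesizer; returns what would be spoken."""
    return f"[speech] {text}"


screen = make_buffer(["C:\\>dir", "A.TXT"])
print(speak(read_row(screen, 0)))
print(speak(read_row(screen, 1)))
```

Because every cell holds a character code, the text on any row can be recovered directly. In a GUI the same memory holds only dots, so no such direct lookup is available.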

Another feature of the GUI is that it provides a specific, consistent layout of controls on the screen. This aids the user (especially a novice) in accessing programs because everything is consistent from one application program to another and within an application. Figure 8-2 illustrates a typical GUI with several windows open and an application program running. Note that the icons used are of familiar objects, and each window has a similar look and feel.

GUI Problems and the Blind Computer User

The GUI presents several problems to the blind user. First, the graphical characters are not easily portrayed in alternative modes. Text-to-speech programs and speech synthesizers are designed to convert text to speech output (see Chapter 7). However, they are not well suited to the representation of graphics, including the icons (visual metaphors) used in GUIs. Most icons used in GUIs have text labels with them, and one approach to adaptation is to intercept the label and send it to a text-to-speech voice synthesizer system. The label is then spoken when the icon is selected. Another major problem presented to blind users by GUIs is that screen location, which is important in using a GUI, is not easily conveyed by alternative means. Visual information is spatially organized, and auditory information (including speech) is temporal (time based). It is therefore difficult to portray two-dimensional spatial attributes, such as the screen location of a pointer, by speech alone. An exception to this is a screen location that never changes. For example, some screen readers use speech to indicate the edges of the screen (e.g., right border, top of screen). A more significant problem is that the mouse pointer location on the screen is relative, rather than referenced to an absolute standard location. This means that the only information available to the computer is how far the mouse has moved and the direction of the movement. If there is no visual information available to the user, it is difficult to know where the mouse is pointing.
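The relative nature of mouse input, and the use of fixed screen edges as spoken landmarks, can be illustrated with a short Python sketch. The screen size and the function names `move_pointer` and `edge_announcement` are assumptions made for the example; they do not describe any particular screen reader.

```python
SCREEN_W, SCREEN_H = 640, 480  # hypothetical screen size in pixels


def move_pointer(position, delta):
    """Apply a relative mouse movement and clamp the result to the screen."""
    x = min(max(position[0] + delta[0], 0), SCREEN_W - 1)
    y = min(max(position[1] + delta[1], 0), SCREEN_H - 1)
    return (x, y)


def edge_announcement(position):
    """Return the fixed screen edges the pointer is touching, as they might be spoken."""
    x, y = position
    edges = []
    if x == 0:
        edges.append("left border")
    if x == SCREEN_W - 1:
        edges.append("right border")
    if y == 0:
        edges.append("top of screen")
    if y == SCREEN_H - 1:
        edges.append("bottom of screen")
    return edges


pointer = (320, 240)
for delta in [(200, 0), (200, -100), (0, -300)]:
    pointer = move_pointer(pointer, delta)
    print(pointer, edge_announcement(pointer))
```

The mouse reports only the deltas. The computer can accumulate them into a position, but the user receives a spoken landmark only when the pointer reaches an edge; between edges, speech offers no comparable fixed reference.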

Other challenges presented to the visually impaired user of a GUI include the organization of the screen with elements spatially clustered visually; multitasking in which several windows are open simultaneously, with one possibly occluding another (i.e., visually displayed “on top” although both windows are active); spatial semantics (information presented through position in tables, groupings, etc.); and graphical semantics (information portrayed through visual elements such as font size, colors, style) (Ratanasit and Moore, 2005). The Microsoft application programming interface for accessibility is a set of technologies that facilitate the development of screen readers and other accessibility utilities for Windows. These technologies provide alternative ways to store and access information about the contents of the computer screen. The accessibility APIs also include software driver interfaces that provide a standard mechanism for accessibility utilities to send information to speech devices or refreshable braille displays.

Ratanasit and Moore (2005) reviewed three primary types of nonspeech sound cues used for representing visual icons used in GUIs: (1) auditory icons, (2) earcons, and (3) hearcons. Auditory icons are everyday sounds used to represent graphical objects. For example, a window might be represented by the sound of tapping on a glass window or a text box by the sound of a typewriter. The Screen Access Model and Windows sound libraries are used in some applications. Earcons are abstract auditory labels that do not necessarily have a semantic relationship to the object they represent. Motives are components of earcons such as rhythm (e.g., the length of a musical note, a Latin beat), pitch (e.g., a musical C vs A), timbre (e.g., sound of a type of instrument), and register (e.g., octaves on the musical scale). An example of an earcon is a musical note or string of notes played when a file, window, or program is opened or closed. Different musical instruments may be used to represent different actions, such as a trumpet representing opening a file and a drum representing closing. In evaluations by blind users, earcons associated with musical characteristics were more effective than those using unstructured sounds (i.e., lacking rhythm, pitch and other cues). Hearcons are either nature sounds or musical works or instruments. Hearcons are completed musical sounds such as those produced by a running river or birds or a musical work, whereas earcons are separate audio components. In an evaluation by visually impaired participants, hearcons did not sufficiently portray semantic relationships to be effective. Font types have been represented by male versus female synthesized voices for normal and hyperlink text or softer and louder sounds for normal versus bold font.
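One way to picture how motives combine into earcons is as a small data structure. The Python sketch below is hypothetical: the `Motive` fields mirror the components named above (pitch, register, rhythm, timbre), the note choices are arbitrary, and the frequency formula is the standard equal-tempered tuning with the A in register 4 at 440 Hz.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Motive:
    pitch: str        # note name, e.g., "C" or "A"
    register: int     # octave number
    duration: float   # note length in beats (rhythm)
    timbre: str       # instrument sound


# Semitone offsets from C within one octave.
SEMITONES = {"C": 0, "D": 2, "E": 4, "F": 5, "G": 7, "A": 9, "B": 11}


def frequency_hz(motive):
    """Equal-tempered frequency, with A in register 4 tuned to 440 Hz."""
    midi_note = 12 * (motive.register + 1) + SEMITONES[motive.pitch]
    return 440.0 * 2 ** ((midi_note - 69) / 12)


# Hypothetical earcons: a rising trumpet figure for opening a file,
# a falling drum figure for closing it.
EARCONS = {
    "open_file": [Motive("C", 4, 0.5, "trumpet"), Motive("G", 4, 0.5, "trumpet")],
    "close_file": [Motive("G", 4, 0.5, "drum"), Motive("C", 4, 0.5, "drum")],
}

for action, motives in EARCONS.items():
    print(action, [(m.timbre, round(frequency_hz(m), 1)) for m in motives])
```

Keeping the motives structured in this way makes it straightforward to vary one component, such as timbre, while holding the others constant.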

Another obstacle faced by individuals who are visually impaired is the use of graphical information in tables and graphs. Three primary issues are the size of the table (i.e., providing information about its boundaries), overloading with speech information, and knowledge of current location within the table. Various methods have been developed to represent this information auditorily (Ratanasit and Moore, 2005). Nonspeech sounds are used to provide spatial relationships (e.g., a plucked violin string earcon might be used to represent the lines in a table or graph), and the text-based information contained in the table or graph is provided by synthesized speech. Another technique used is to associate higher pitches with larger numbers and lower pitches with smaller numbers in portraying trends and similar graphical data. Evaluation with visually impaired participants indicated greater success in using tables when nonspeech cues were combined with speech-based information. Another graphical approach is to represent numerical values by pitch, as above, but use a different timbre (instrument sound) for each axis.
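The pitch-mapping technique can be sketched as a linear mapping from data values to musical notes. In the Python example below, the note range (MIDI notes 48 to 84) and the sample data are assumptions chosen for illustration; the studies cited do not specify a particular mapping.

```python
def value_to_midi(value, lo, hi, low_note=48, high_note=84):
    """Map a value in [lo, hi] linearly onto a MIDI note range."""
    if hi == lo:
        # All values are equal, so use the middle of the range.
        return (low_note + high_note) // 2
    fraction = (value - lo) / (hi - lo)
    return round(low_note + fraction * (high_note - low_note))


def sonify(values):
    """Return one MIDI note per data value; larger values give higher pitches."""
    lo, hi = min(values), max(values)
    return [value_to_midi(v, lo, hi) for v in values]


monthly_sales = [10, 20, 40, 30]  # hypothetical data series
print(sonify(monthly_sales))
```

Played in sequence, the notes rise and then fall, conveying the trend in the data without any speech. A second data series could be played with a different timbre to keep the two distinguishable.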

READING AIDS FOR PERSONS WITH VISUAL IMPAIRMENTS

The major problems faced by persons with visual impairments are (1) access to printed reading material, (2) orientation and mobility (i.e., moving about safely and easily), and (3) access to computers, including the Internet. This section first describes reading aids for people with low vision who still obtain information through the visual system. Then tactile and auditory alternatives for people who are blind are discussed. The term reading is used here to include access to all print material, including text, mathematics, and graphical representations (e.g., maps, pictures, drawings, and handwriting). As discussed later, some types of reading have very specialized alternatives (e.g., talking compasses in lieu of maps, talking bar code readers for medicines and food cans).

Magnification Aids

There are three factors related to visual system performance for reading: size, spacing, and contrast. This section discusses the principles of low-vision aids for reading print material. These devices are generally referred to as magnification aids. Magnification may be vertical (size) or horizontal (spacing) or both. Magnification also includes assistive technologies that enhance contrast. There are three categories of magnification aids: (1) optical aids, (2) nonoptical aids, and (3) electronic aids (Servais, 1985). Examples of these are listed in Box 8-1.

Assistive technologies can also be used to enhance visual cues for children who have low vision (Griffin et al, 2002). Color and contrast can be enhanced by using hues (the named color, red, blue, etc.), lightness (perceived intensity), and saturation (perceived differences in color). Deficits in color vision may be difficult to detect in children, and Griffin et al provide the following guidelines for use in visual magnifiers, software, or Web site design for children with low vision: use colors that differ as much as possible in lightness, avoid colors from the ends of the spectrum, avoid white or gray with any color of the same lightness, avoid colors adjacent to each other in the color spectrum, and avoid use of pastel colors. Spatial features are another consideration in enhancing visual access for children with low vision (Griffin et al, 2002). Space includes size, patterns, outlines, and clarity of text and pictures. Optical magnifiers, software programs, and Web sites can address these features.

Optical Aids.

More than 90% of all individuals who have visual impairments have some usable vision (Doherty, 1993). Thus it is important to carefully choose low-vision devices to meet their needs. The National Institute on Disability and Rehabilitation Research has published a booklet describing the clinical assessment methods, equipment, and tools needed for evaluating consumers’ needs and matching them to low-vision devices (Doherty, 1993). With the use of optical aids, individuals with low vision may be able to see print, do work requiring fine detail, or increase the range of their visual fields.

The simplest of optical aids is the hand-held magnifier. Among the advantages of these devices is that they require little training, they are lightweight and small (can fit in a pocket or purse), and they are inexpensive. Some also have a built-in light to increase contrast, and others have several lenses, which can be used alone or in combination, depending on the application. A selection of optical aids is shown in Figure 8-3. Sometimes it is difficult to hold a lens and carry out a task (e.g., a two-handed task such as embroidery). In other cases it may be difficult to hold a magnifier steady (e.g., for someone who is elderly or in poor health). In these situations, stand magnifiers, some of which have a built-in light, are useful. Some magnifiers are mounted on eyeglass frames to free both hands.

One approach to limitations of visual field is the use of field expanders. These are generally prisms or special lenses built into eyeglass frames. When magnifying lenses are used, the expansion of the field reduces the size of the image and a tradeoff occurs. The image is not reduced in size when prism lenses are used to expand the field.

Telescopes assist with distance vision. These may be either worn on the head or held in the hand, and they may be monocular or binocular (Mellor, 1981). They may be used, for example, by students who need to see a chalkboard or an adult who needs to monitor children playing outdoors. Telescopic aids provide an enlarged but narrowed visual field. Head-mounted units may be attached to eyeglass frames or have a separate frame. Head-mounted devices are particularly useful when long periods of wear are necessary, such as when watching television.

Nonoptical Aids.

This approach to magnification is based on changes in the actual material that is to be read (Servais, 1985). Common examples are large-print books or other materials such as menus, programs, and newspapers. High-intensity lamps can significantly increase contrast of reading materials, and high-contrast objects in the environment can aid in localization. For example, brightly colored furniture or dishes can help with visualization. A glass that stands out from a countertop is easier to find and fill with liquid. As Servais (1985) points out, nonoptical aids can be very useful under the right circumstances, but they are limited in application because they are specialized to one or a few tasks.

Electronic Aids.

There are limitations to the amount of magnification and contrast enhancement that can be obtained by optical approaches to magnification. Electronic devices can overcome these limitations. Many electronic low-vision aids are based on CCTV devices. Some manufacturers refer to these devices as video magnifiers. There are two primary advantages of CCTV devices. The first of these is that the image size can be increased much more than for optical aids. Equally important is that the image can be manipulated and controlled. For example, contrast can be dramatically affected by the use of color or reversed images (e.g., white type on black background). The overall brightness of an image can also be controlled in CCTV devices, further increasing contrast.
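The image manipulations mentioned above, reversed polarity and brightness control, are simple operations on pixel values. The Python sketch below treats an image as a small grid of gray levels from 0 (black) to 255 (white). The function names and the sample values are assumptions for illustration and do not describe the electronics of any particular CCTV device.

```python
def reverse_polarity(image, max_level=255):
    """Swap dark and light: black type on white becomes white type on black."""
    return [[max_level - pixel for pixel in row] for row in image]


def adjust_brightness(image, gain, max_level=255):
    """Scale every pixel by a gain factor, clipping at the display maximum."""
    return [[min(max_level, round(pixel * gain)) for pixel in row] for row in image]


# Hypothetical 3 x 3 patch of a page: one dark pixel of type on light paper.
page = [
    [230, 230, 230],
    [230,  40, 230],
    [230, 230, 230],
]

reversed_page = reverse_polarity(page)
print(reversed_page)
print(adjust_brightness(reversed_page, 1.2))
```

After reversal the type is light and the background dark, which reduces the total amount of light reaching the eye while preserving the difference between type and background.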

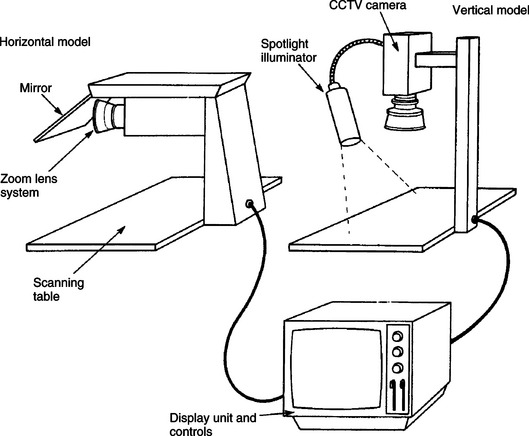

A typical CCTV is shown in Figure 8-4. The major components are a camera (environmental interface), a video display (user display), and a unit that controls the presentation of the image (information processor). The material to be read is placed on a scanning table, which easily moves both left to right and forward and back. There may be mechanical notches that help align the material, and some devices have adjustable margins. When the text is enlarged, the relative position of the material on the page is lost, and a spotlight of high intensity is sometimes used to show the user which part of the page is being imaged. With use of a split video screen, CCTV devices can be operated in conjunction with enlarged computer video displays to allow magnification of both computer data and the CCTV image of standard print material. Other contexts in which CCTV devices are used are to complete job-related tasks, to access educational materials at all levels, and for recreational reading.

Figure 8-4 CCTV system for low-vision assistance. (From Servais SP: Visual aids. In Webster JG et al, editors: Electronic devices for rehabilitation, New York, 1985, John Wiley.)

All CCTV devices have the major features shown in Figure 8-4. An example of a CCTV device in use is shown in Figure 8-5. There is, however, a relatively wide range of features available in specific devices. The two broad categories of CCTVs are desktop and portable. The first category is by far the largest in terms of commercial products. Size and spacing are controlled primarily by two factors in desktop units: (1) size of the video monitor and (2) amount of enlargement provided by the electronics. Typical video monitors range in size from 12 to 19 inches, and maximal electronic magnification ranges from 45 to more than 60 times. There is a major tradeoff between monitor size and overall space required for the unit. Space requirements are often a significant limitation if a computer terminal, printer, and other office equipment must share space with the CCTV. A split-screen system overcomes this space problem to a large degree. CCTV systems often allow access not only to print material but also to the computer video screen. The technology is virtually the same for print or computer output. One such product is Spectrum SVGA, which allows the screen to be split into two. One half is used for CCTV display of printed material and the other is used for enlarged computer output. This system also functions as either a computer screen magnifier only or CCTV only.

A major challenge for people using video magnifiers is navigation around the text because it is often so enlarged that only a portion of a line or two of text is visible. This situation can result in missed words or difficulty in finding the beginning of the next line. One approach is to create a digital image of the page and then let the computer-based magnifier automatically scroll through the text (myReader, Pulse Data Human Ware, Concord, Calif., www.pulsedata.com). Automatic reading can be presented as one long row that scrolls across the screen, as a column of text narrow enough that its full width appears on the screen at once, or as one word at a time, with the user controlling the rate at which each word is displayed. Scrolling rate, magnification, and cursor movement around the text field are all adjustable and controllable by the user.
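The three presentation modes described above can be illustrated with a minimal Python sketch. It is not taken from any commercial product; the function names (`column_mode`, `word_mode`, `ticker_mode`) and the character-based widths are assumptions made for illustration, and a real magnifier would work with the page image and recognized text rather than a plain string.

```python
import textwrap


def column_mode(text, chars_per_line):
    """Reflow text into a narrow column so every line fits on the screen."""
    return textwrap.wrap(text, width=chars_per_line)


def word_mode(text):
    """Yield one word at a time; the caller decides how fast to show them."""
    for word in text.split():
        yield word


def ticker_mode(text, window_chars):
    """Yield successive fixed-width views of one long row that scrolls by."""
    row = " ".join(text.split())
    # At least one view is produced even when the row is shorter than the window.
    for start in range(0, max(1, len(row) - window_chars + 1)):
        yield row[start:start + window_chars]


sample = "Scrolling rate and magnification are adjustable by the user."
for line in column_mode(sample, 20):
    print(line)
```

In each mode the user never has to find the start of the next line manually, which is the navigation problem that these modes are meant to reduce.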

Contrast enhancement is provided either by gray scale or color. In the former approach the foreground and background contrast is adjustable and may be reversed (e.g., black letters on white or white letters on black). Color adds significant contrast enhancement because the user can choose alternative background and foreground colors. Not all persons with visual impairments have the same color vision, and color vision varies with visual field. Having some control over the foreground-background color combination allows the display to be customized to the needs of an individual user. Another advantage of color displays is that the original color of the print material can be retained. Maps with colored areas can be imaged; a preprinted form that calls for a signature “on the red line” shows the line as red, and so on. The major tradeoff with color monitors is that the image is not as sharp as the black and white image, especially at large magnifications. Color CCTVs are also more expensive than their black and white counterparts.

Most desktop CCTVs are relatively large and heavy primarily because of the video monitor. Liquid crystal displays and flat-panel screens have changed this. Flat-panel displays have different characteristics than cathode ray tubes, and the enlarged images provided by these two technologies are not equivalent or equally useable by all individuals. All desktop units must also be plugged into a wall socket for power. Thus it is difficult to transport them or to use them in contexts such as a classroom (unless a separate workstation is established—a common practice), and desktop units are generally kept in one physical location. Some desktop models have very small cameras (e.g., 1-inch diameter, 3 inches long) that can be connected to any video monitor or television set. This facilitates transportation and use in different locations.

Fully portable CCTVs are designed to be carried with the user. The most significant differences between these portable units and desktop CCTVs are size, weight, and battery power. Portable units weigh as little as 1.2 pounds and measure only about 9 × 3 inches for the display and 4 × 2 inches for the camera (for example, Pico, JBliss Imaging Systems, San Jose, Calif., www.jbliss.com; Pocket Viewer, HumanWare, Inc., Concord, Calif., www.humanware.com; Carrymate, Clarity, www.clarityusa.com/; Magnilink, Vision Cue, www.visioncue.com/). Portable units have a hand-held camera that is moved over the page. Maximal magnification varies from 3 to 64 times, and it may be controlled by changing camera lenses or by electronic image enhancement. Some units allow the camera to be connected to a desktop video monitor, standard television set, or portable computer to display the CCTV output. This allows it to be used in a portable or stationary mode, depending on the needs of the user. These cameras are extremely small (e.g., 2 inches × 2 inches × 4 inches, weighing 6 ounces). This flexibility is useful when greater magnification is needed for certain material (e.g., fine print) or at certain times (e.g., at the end of the day, when fatigue is greater) and when the user must travel to different settings during the day.

Access to Visual Computer Displays for Individuals With Low Vision

Screen-magnifying software that enlarges a portion of the screen is the most common adaptation for people who have low vision. The unmagnified screen is referred to as the physical screen. There are three basic modes of operation for screen magnifiers: lens magnification, part-screen magnification, and full-screen magnification (Blenkhorn, Gareth, and Baude, 2002). At any one time the user has access to only the portion of the physical screen that appears in the magnified viewing window. Lens magnification is analogous to holding a hand-held magnifying lens over a part of the screen. The screen magnification program takes one section of the physical screen and enlarges it. This means that the magnification window must move to show the portion of the physical screen in which the changes are occurring. Part-screen magnification is similar to lens magnification, except that the magnified portion is displayed in a separate window, usually at the top or bottom of the screen. The magnification program will follow a particular part of the screen referred to as the focus of the screen (Blenkhorn, Gareth, and Baude, 2002). Typical foci are the location of the mouse pointer, the location of the text-entry cursor, a highlighted item (e.g., an item in a pull-down menu), or a currently active dialog box. Screen magnifiers automatically track the focus and enlarge the relevant portion of the screen. For example, if a navigation or control box is active, then the viewing window can highlight that box. If mouse movement occurs, then the viewing window can track the mouse cursor movement. If text is being typed in, then the viewing window can follow the text entry cursor and highlight that portion of the physical screen.

Full-screen magnifiers enlarge the entire screen, with the center of the enlarged portion being the cursor location. Thus, at any one time the user has access to only the portion of the physical screen that appears in this magnified viewing window. The size of the text in this window, the magnification, varies from 2 to 32 times or more in current magnifier programs. The viewing window must track any changes that occur on the physical screen. The mouse pointer can also be enlarged. Blenkhorn, Gareth, and Baude (2002) describe the design of screen magnification programs, including mouse pointer magnification.
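The relationship between the focus, the magnification, and the portion of the physical screen that is visible can be shown with a short calculation. The following Python sketch is an illustration only and does not reproduce the design described by Blenkhorn and colleagues; the function name `viewing_window`, the pixel coordinate convention, and the clamping rule at the screen edges are assumptions.

```python
def viewing_window(focus_x, focus_y, screen_w, screen_h, magnification):
    """Return (left, top, width, height) of the physical-screen region shown.

    The region is centered on the focus (for example, the mouse pointer or
    text cursor) and clamped so it never extends past the physical screen.
    """
    if magnification < 1:
        raise ValueError("magnification must be at least 1")
    # The visible region shrinks in each dimension by the magnification.
    win_w = screen_w / magnification
    win_h = screen_h / magnification
    left = min(max(focus_x - win_w / 2, 0), screen_w - win_w)
    top = min(max(focus_y - win_h / 2, 0), screen_h - win_h)
    return left, top, win_w, win_h


# Focus at the center of a 1024 x 768 physical screen, 2 times magnification.
print(viewing_window(512, 384, 1024, 768, 2))
```

Because both the width and the height of the visible region are divided by the magnification, the visible area falls with the square of the magnification. At 4 times magnification only one sixteenth of the physical screen is visible at once, which is why reliable tracking of the focus matters so much.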

Adaptations that allow persons with low vision to access the computer screen are available in several commercial forms. Lazzaro (1999) describes several potential methods of achieving computer access. The simplest and least costly are built-in screen enlargement software programs provided by the computer manufacturer. One system for the Macintosh, built in to the operating system, is Zoom. This program allows for magnification from 2 to 20 times and has fast and easy text handling and graphics capabilities. More information is available on the Apple accessibility Web site (http://www.apple.com/education/accessibility/technology). Magnifier (Table 8-1) is a minimal function screen magnification program included in Windows (http://www.microsoft.com/enable/default.aspx). It displays an enlarged portion of the screen (in Windows XP, from 2 to 9 times magnification; in Windows Vista, from 2 to 16 times), uses a part-screen approach and has three focus options: mouse cursor, keyboard entry location, and text editing. Other Magnifier options include inverted (e.g., black background, white letters), changing the location of the magnification pane, and high-contrast modes. For individuals who need only the high-contrast option, high contrast provides many color combination options for text, background, windows, and other GUI features. This is available in the control panels: Accessibility Options for Windows XP, Ease of Access for Windows Vista, and Universal Access for Macintosh. None of these built-in options are intended to replace commercially available full-function screen magnifiers. The mouse pointer settings under the Windows “mouse” control panel provide for changing the size, style, and color combination of all the pointers used during GUI interaction.

TABLE 8-1

Simple Adaptations for Visual Impairment

| Need Addressed | Software Approach |
| --- | --- |
| User cannot see status of CAPS LOCK, NUM LOCK, etc., lights | ToggleKeys |
| User requires greater contrast between foreground and background or greater size of characters on the screen | Magnifier or high contrast color scheme |
| User requires speech output rather than visual output | Narrator* |

Software modifications developed at the Trace Center, University of Wisconsin, Madison. These are included as before-market modifications to Windows and Macintosh operating systems.

*Windows Vista and XP versions differ. Features of Windows XP Narrator are documented on http://www.microsoft.com/enable/training/windowsxp/narratorturnon.aspx. The out-of-box Windows Vista text-to-speech (TTS) engine speaks U.S. English. This voice is called “Microsoft Anna.” In Chinese SKUs of Vista, the TTS engine speaks Mandarin, called “Microsoft Lili.” A different voice, perhaps speaking another language, requires the installation of a third-party TTS engine. Narrator will use any Speech Application Programming Interface (SAPI)–compliant TTS engine installed on Windows Vista and configured to be the default TTS engine. Keyboard commands include reading text (a character at a time, word at a time, line, paragraph, document) and navigating text on the basis of font attributes (for example, moving the cursor to where the font attributes have changed).

Screen-magnifying lenses that are placed over the monitor can also enlarge the information, but limited magnification (about two times) and distortion are the major problems. Increased contrast and reduced glare can be achieved with filters placed over the screen. Large monitors can have the effect of increasing text and graphics size, but the magnification is fixed. Adaptations that include both hardware and software provide the greatest compatibility, but they are also the most expensive alternatives.

Many screen magnification programs are available for use with Windows or Macintosh operating systems (for example, Lunar and Lunar Plus from Dolphin, Computer Access, San Mateo, Calif., www.dolphinusa.com; MAGic from Freedom Scientific, St. Petersburg, Fla., www.freedomsci.com; VIP and ezVIP from JBliss Imaging Systems, San Jose, Calif., www.jbliss.com; Zoom Text, Zoom Text Xtra and BigShot from AI Squared, Manchester Center, Vt., www.aisquared.com; and Galileo, Baum, Germany, www.baum.de/) (see also the Microsoft accessibility Web site, http://www.microsoft.com/enable/default.aspx). These software programs offer wider ranges of magnification and have more features than built-in screen magnifiers. These programs generally offer access to Windows applications, including spreadsheet and word processing, e-mail, and Internet browsers. Many can also run with a screen reader (speech output utility). In some cases the screen reader is bundled with the magnification software, and in other cases the screen magnifier speech output runs in conjunction with a separate screen reader. Magnification of up to 32 times or more is available. The various screen modes described above are available in most screen magnification software. These programs also allow tracking of the mouse pointer, location of keyboard entry, and text editing. The magnification window can be coupled with one or more of these to facilitate navigation for the user. All screen images (including windows, control buttons, and other windows objects) are magnified. Automatic scrolling of the screen (left, right, up, down) is also available to make it easier to read long documents when they are magnified.

For individuals who have low vision or blindness, hard copy (printer) output is also a challenge. If the output is to be read by a person with normal vision, the text can be edited on the screen using the methods described earlier and then printed in a standard printer font size. If, however, the user with visual impairment needs to access the hard copy output, then either an enlarged or a braille printout is desirable. For enlarged print, the most common approach is to use a laser printer coupled with a special software program to create larger characters.

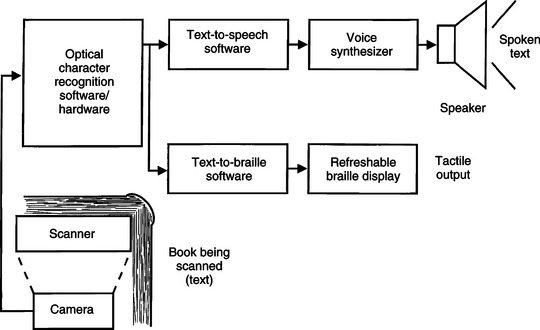

Devices That Provide Automatic Reading of Text

Automatic reading of text requires the three components shown in Figure 8-1: an environmental interface, an information processor, and a user display. The environmental interface is a camera that provides an image of the printed page, and the user display can be either tactile (braille) or speech synthesis. A block diagram showing the major components of an automatic reading machine is presented in Figure 8-6. Device operation involves scanning, optical character recognition (OCR), and the translation of recognized characters by either text-to-braille or text-to-speech conversion (see Figure 8-6). Most reading machines provide speech output, and some provide braille or both braille and speech. Both software and hardware approaches are used for speech synthesis output in much the same way as those used in screen readers for the blind. Synthetic speech for automatic reading systems is available in a variety of languages. Some automatic reading devices use standard personal computers (PCs) with special software for information processing. The PC is interfaced to a scanner (camera with software) and display (refreshable braille or speech synthesis). Current stand-alone (scanner included in the basic system) automatic reading machines offer simple one-button operation to scan a document and have it read. These units also provide manual access to features such as cursor keys to move around in the text, storing and retrieving files, and transferring the text to a computer or a disk. Automatic reading systems can also be used in conjunction with screen readers and Web browsers.

Figure 8-6 The major components of an automatic reading machine for persons with total visual impairment.

Camera and Scanner Characteristics for Automatic Reading.

To input the information into the machine, reading devices may use a flatbed scanner, a hand-held scanner, or a combination of the two (Fruchterman, 1991). Flatbed scanners have a glass plate 18 to 24 inches long and 10 to 14 inches wide. Scanners are usually defined as letter or legal size depending on the dimensions of the flat bed. This type of scanner, also called a desktop scanner, resembles a photocopy machine; however, the thickness is only about 3 to 4 inches. The material to be read is placed on the surface of the glass, and one advantage of this type of unit is that it can scan almost any kind of document, from a single sheet to a bound magazine or book. An automatic document feeder attachment can also be added to many flatbed scanners. This allows multiple sheets to be loaded and scanned. Scanners are widely used for home or business applications such as scanning photographs for use on Web pages or scanning documents for editing when an electronic copy is not available. For this reason, the technology is improving and the prices are falling as a result of the general market demand (Grotta and Grotta, 1998). This has resulted in advances that benefit blind users of automatic reading systems. Hand-held scanners vary in width from 2½ to 8½ inches (Converso and Hocek, 1990). For scanners narrower than the page, the camera must be moved across a line of text and then moved down to the next line, and so on all the way down the page. This can be difficult for a person who is blind because there is no frame of reference to keep the scanner on one line or to move just one line down. Flatbed scanners overcome this problem. The hand-held scanner can image most types of material, including single sheets and bound documents. An additional advantage is that it can be used with a laptop computer to create a portable reading machine.

All scanners consist of a light source and a camera, and some also contain lenses and mirrors to focus the image on the camera (Converso and Hocek, 1990). Grotta and Grotta (1998) describe both the use of charge-coupled device (CCD) imaging electronics and an emerging technology called contact image scanners (CIS). CCD cameras use a lens and mirror arrangement that moves across the document with the light source (usually a fluorescent lamp) and that is used to focus the image on the CCD detector. In contrast, CIS systems have a single row of sensors that is positioned just a few millimeters below the document and moves across it, together with an array of light sources, during the scan. The CIS systems draw less power; have a simpler mechanical design, making it possible to have thinner units; and eliminate the delicate optics of CCD devices. The resolution of CIS systems is not as good as that of CCD devices, but it is rapidly improving. The CCD or CIS array serves as a camera that converts the areas of light and dark to an electronic format, and computer software stores it in memory. Hand-held types have only the camera and light source.

The image that the camera stores consists of an array of black and white or color areas called pixels. The density of these pixels in the computer-stored image measures the quality of the scanner image. The units of measure are dots per inch. Scanners have resolutions from 300 to 4800 dots per inch (Grotta and Grotta, 1998). The other major specification that is used is gray-scale levels (for black and white scanning) and color bit depth for color scanning. Typical gray scale values are 256 levels. Color bit depth varies from 24 to 36 bits (Grotta and Grotta, 1998).
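The practical meaning of these specifications can be seen by working out how much data one scanned page produces. The Python sketch below is a worked example only; the function name `scan_size` is hypothetical, the letter-size page (8.5 by 11 inches) is an assumption chosen for illustration, and the result is the uncompressed size before any file format is applied.

```python
def scan_size(width_in, height_in, dpi, bits_per_pixel):
    """Return (pixels_wide, pixels_high, uncompressed_bytes) for one page."""
    px_w = round(width_in * dpi)
    px_h = round(height_in * dpi)
    total_bits = px_w * px_h * bits_per_pixel
    return px_w, px_h, total_bits // 8


# 256 gray levels need 8 bits per pixel, because 2 ** 8 == 256.
gray = scan_size(8.5, 11, 300, 8)
color = scan_size(8.5, 11, 300, 24)
print(gray)   # (2550, 3300, 8415000)
print(color)  # (2550, 3300, 25245000)
```

Doubling the resolution in dots per inch quadruples the number of pixels, so the highest resolutions quoted above produce very large images. Text recognition generally does not need the upper end of that range.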

Some automatic reading systems have scanners built into them (for example, Ovation, Telesensory, Sunnyvale, Calif., www.telesensory.com; Sara, Freedom Scientific, St. Petersburg, Fla., www.freedomsci.com; Plustek Book Reader, http://www.plustek.com; POET-Compact, Baum, www.baum.de/index-e.php; ScannaR, HumanWare, Concord, Calif., www.humanware.com). These systems include a flatbed scanner, built-in computer, voice output, and hard drive with room for up to 500,000 pages of text. In some cases Digital Audio-Based Information System (DAISY) reading capability for digital books (see below) is included. Scanned documents can be saved in MP3, WAV, or plain text format. Many of these systems require only a single button to be pressed to scan and read a document. Some units also provide multiple languages for spoken output. Other reading systems are software products that include optical character recognition and text-to-speech synthesis and are designed to use external commercial scanners and computers (for example, Open Book, Freedom Scientific, St. Petersburg, Fla., www.freedomsci.com; Reading Advantage, Telesensory, Sunnyvale, Calif., www.telesensory.com; Cicero, Dolphin Products, www.dolphincomputeraccess.com; An Open Book, Handy Tech Elektronik GmbH, Germany, www.handytech.de).

Optical Character Recognition.

The camera and scanner provide an image consisting of an array of pixels. This image is made up of black and white or color dots, and it is not in a form that can be translated into speech or braille. OCR is used to carry out this conversion. OCR systems were developed for businesses to scan print documents into computer-readable form. They are also used in automatic reading devices for persons who are blind.

The OCR is a software program that runs on a standard PC. The primary function of the OCR is to analyze the raw pixel data and assemble it into letters, spaces (to delineate words), and punctuation. Graphics (pictures or drawings and the elaborate characters sometimes used to begin chapters in books) must be removed from the text before output. There are a number of problems that OCR software must solve. The most significant of these is that letter recognition must occur with different print fonts. OCRs that accomplish this are called omnifont OCRs. Most scanners have an OCR product bundled with the scanner. These OCRs provide basic OCR capabilities, but they do not match stand-alone OCR products. Automatic reading systems use the professional stand-alone OCR products to achieve the best possible results. There are several general-purpose commercial omnifont OCR systems commonly used in reading machines for people who are blind. Some companies that provide automatic reading systems have their own proprietary OCR software, and others use professional-quality OCR software developed for business applications. The majority of the commercial software incorporated into automatic reading systems uses either the Xerox or Caere OCR software. Most current scanners use OmniPage LE (Nuance Corp, Burlington, Mass., www.nuance.com), the TextBridge (Nuance Corp) Classic, or proprietary OCR software. All OCR software available separately is compatible with the Windows operating system, and several automatic reading systems use standard PCs, OCR software, and an external scanner. Converso and Hocek (1990) present some guidelines for selecting a scanner and OCR for specific applications. They also include a discussion of computer hardware and software (e.g., word processing) factors to consider when scanners and OCRs are obtained.

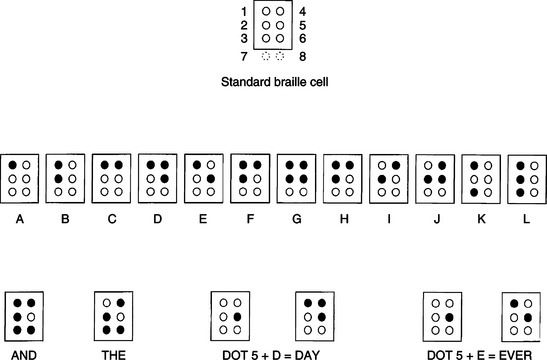

Braille as a Tactile Reading Substitute

The most widely used tactile substitution device for persons with visual impairments is braille. Each braille character consists of a cell of either six or eight dots, as shown in Figure 8-7. The seventh and eighth dots are used to show cursor movement or to provide single-cell presentation of higher-level ASCII codes. This is necessary because the six braille dots can only display 64 different combinations and there are 256 ASCII codes for characters (upper and lower case alphabet, numbers, special symbols, and control characters such as RETURN). Figure 8-7 shows examples of letters and numbers. When text is directly translated into braille letter by letter, it is referred to as Grade 1. Also shown in Figure 8-7 are some braille codes for words (called wordsigns) and word endings. The use of these contractions significantly speeds up the rate of reading, and this type of braille is called Grade 2 or Grade 3, depending on the number of contractions used. Reading rates with Grade 1 braille are about 40 words per minute. With Grade 3, reading speeds can approach 200 words per minute (Allen, 1971). Traditionally, braille has been produced by embossing on heavy paper, and this method is still widely used. For persons who develop skill with it, braille can be a fast and efficient method for accessing print materials.
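The counts of 64 and 256 follow directly from the number of dots, because each dot is either raised or not. The Python sketch below verifies the arithmetic and shows how a set of raised dots can be mapped to a printable character by using the Unicode Braille Patterns block, in which dot n sets bit n-1 above the code point U+2800. The function names are hypothetical, and the use of Unicode here is purely for illustration; it is not how a braille embosser or display is driven.

```python
def cell_patterns(dots):
    """Number of distinct patterns for a cell with `dots` dots.

    The count includes the blank cell, in which no dot is raised.
    """
    return 2 ** dots


def braille_char(raised):
    """Map a set of raised dot numbers (1 to 8) to a Unicode braille pattern."""
    mask = 0
    for dot in raised:
        if not 1 <= dot <= 8:
            raise ValueError("dot numbers run from 1 to 8")
        mask |= 1 << (dot - 1)
    return chr(0x2800 + mask)


print(cell_patterns(6), cell_patterns(8))  # 64 256
# The letters a, b, and c use dots 1; 1 and 2; and 1 and 4.
print(braille_char({1}), braille_char({1, 2}), braille_char({1, 4}))
```

An eight-dot cell therefore has exactly enough patterns to give each of the 256 character codes its own single cell, which is what the six-dot cell cannot do.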

Characteristics of Braille.

There are several disadvantages to the use of braille, especially in embossed form. First, the embossed material is heavy and bulky, and each braille page has significantly less information than a printed page of the same size. For example, a braille version of a 400-page print book would fill four books, each the size of an encyclopedia volume (Mann, 1974). A second disadvantage is that the cost of producing braille in an embossed form is high compared with print materials. For this reason, only a fraction of the total print literature is available in braille form. A third limitation is related to the spatial orientation of visual (print) material. When a person scans for a particular piece of information or edits text, this spatial orientation is used to find the particular piece of text needed. This process is difficult when the embossed braille paper format is used. This is partially because of the bulky nature of the material, but it is also a result of the difficulty that braille readers have in scanning text quickly. Finally, braille embossers do not allow corrections to be made. Once the dot pattern is impressed into the paper, it is not possible to remove it.

Braille itself, regardless of format, has limitations as well. The most significant is that very few persons (fewer than 10%) with severe visual impairment learn to use it. This is partially because more than 65% of all persons who become blind do so after age 65 years (Mann, 1974), and many of these cases are the result of diabetes, which also affects the tactile sense, making braille less desirable than other alternatives such as talking books. Despite all these disadvantages, braille is the modality of choice for many persons with severe visual impairment, and the use of a format other than embossed paper significantly enhances the effectiveness of this modality. One of the most widely used of these alternative formats is a refreshable braille cell. Computer output systems use either a refreshable braille display consisting of raised pins or hard copy by use of braille printers.

Refreshable Braille Displays.

Because braille is represented by a series of dots, raised pins can be substituted for the traditional embossed paper format. This approach, called refreshable braille display, is shown in Figure 8-8. There are several advantages to this format. The most significant of these is that the refreshable display is controlled by an electronic circuit that can be interfaced to computer displays or braille keyboards. This allows information to be stored electronically and greatly reduces the bulk compared with embossed braille. Second, because the text material is in electronic form, it can be edited, searches can be made, and copies of braille material can be easily produced in electronic form (e.g., on CD or removable memory). The refreshable braille cell (or cell array) can also be used as the output mode for an automatic reading machine.

Each refreshable braille cell has a set of small pins arranged in the shape of a standard braille cell. The pins that correspond to the dot pattern for a letter or word sign are raised. Both Grade 1 and Grade 2 braille can be presented on refreshable displays by use of software that converts text from ASCII format to braille. Arrays of from 1 to 80 cells are available.
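A minimal Python sketch of letter-by-letter (Grade 1) conversion follows. It is a deliberate simplification and not the software used in any commercial display: it handles only the 26 letters and the space, ignores capital and number signs, punctuation, and all Grade 2 contractions, and represents each cell as a Unicode braille pattern rather than as pin-control signals. The names `LETTER_DOTS`, `to_cells`, and `display_lines` are hypothetical.

```python
# Standard six-dot patterns for the letters, written as strings of dot numbers.
LETTER_DOTS = {
    "a": "1", "b": "12", "c": "14", "d": "145", "e": "15",
    "f": "124", "g": "1245", "h": "125", "i": "24", "j": "245",
    "k": "13", "l": "123", "m": "134", "n": "1345", "o": "135",
    "p": "1234", "q": "12345", "r": "1235", "s": "234", "t": "2345",
    "u": "136", "v": "1236", "w": "2456", "x": "1346", "y": "13456",
    "z": "1356",
}


def to_cells(text):
    """Translate text to braille cells one letter at a time (Grade 1 only)."""
    cells = []
    for ch in text.lower():  # capitalization is dropped in this sketch
        if ch == " ":
            cells.append(chr(0x2800))  # blank cell
        elif ch in LETTER_DOTS:
            mask = sum(1 << (int(d) - 1) for d in LETTER_DOTS[ch])
            cells.append(chr(0x2800 + mask))
        else:
            raise ValueError(f"no mapping in this sketch for {ch!r}")
    return "".join(cells)


def display_lines(cells, display_width):
    """Split a run of cells into chunks that fit a display of given width."""
    return [cells[i:i + display_width]
            for i in range(0, len(cells), display_width)]


for chunk in display_lines(to_cells("braille can be fast and efficient"), 20):
    print(chunk)
```

The chunking step shows why array size matters: on a 20-cell display the reader must advance the display several times to read what a longer array presents in a single line.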

Stationary refreshable braille displays have arrays with multiple braille cells. Typically the array sizes are 20, 40, or 80 cells (for example, Pulse Data Human Ware, Concord, Calif., http://www.pulsedata.com; Freedom Scientific, St. Petersburg, Fla., www.freedomsci.com; ALVA Series, Vision Cue, Portland, Ore., http://www.visioncue.com/). These arrays, and the hardware and software to control them, typically cost in the range of $3500 to $10,000 depending on the number of cells and the manufacturer. Generally, the standard six-dot format is used for each cell. For an eight-dot cell, the price for a 40-cell array is 20% higher than for the six-dot format. The 80-cell format allows an entire line of a computer screen to be displayed at one time. An eight-dot, 80-cell refreshable display can cost as much as $10,000, a significant increase over the cost of a 40-cell, eight-dot device. Thus price is a major consideration in refreshable braille displays. The refreshable braille arrays we have described generally can be used as an alternative to the screen in desktop computers.

The ALVA (Vision Cue, Portland, Ore., http://www.visioncue.com/) braille terminals provide 44-, 70-, and 80-cell refreshable displays for desktop use and 23- and 44-cell displays for portable applications (battery operated). All versions have eight-dot braille cells. All ALVA models also provide extra status cells that display the location of the system cursor, which line of text is displayed in braille, which attributes are active, and the relationship of those attributes to the characters on the screen. This information can be monitored with the left hand while the right hand reads the text on the braille display. USB and serial ports are available for data transfer. Text is provided in both Grade 1 and Grade 2 braille.

Freedom Scientific (Freedom Scientific, St. Petersburg, Fla., www.freedomsci.com) makes 40- and 80-cell braille displays. The 40-cell unit includes a Braille keyboard. Both the 40- and 80-cell versions have navigation features accessible through a series of buttons on the display. Combinations of buttons are used to enter commands. Another product, the PAC Mate portable Braille display, is a 20-cell refreshable braille display that is connected to any computer through a USB port. This unit uses a seamless design between braille cells that makes the display feel like paper. It works with most Windows-based software packages. Pulse Data Human Ware (Concord, Calif., http://www.pulsedata.com) makes a series of refreshable braille displays, shown in Figure 8-9. The 40-cell and 24-cell Brailliant refreshable braille displays are designed for use with a laptop or desktop computer. The Brailliant 32-, 64-, and 80-cell displays are eight-dot braille displays for desktop computers. All these models are configured for split-window display or as programmable status cells and all include Bluetooth and USB connectivity. The latter are accessed by clicking a sensor located above one of the braille cells to instantly move the mouse pointer or cursor to a new location for editing. Grade 2 braille translation is included on all models.

For computer users who are familiar with braille, this approach can be more effective than screen readers. However, combining braille and speech output may be the most effective approach of all. If done thoughtfully and carefully, the hardware and software designed for braille can be used together with that developed for screen reading with speech synthesis. Supernova (Dolphin Computer Systems, San Mateo, Calif., www.dolphincomputeraccess.com) provides screen magnification (2 to 32 times) and speech and braille output in one package for Windows applications. There are six different viewing modes: full screen, split screen, window, lens, autolens, and line view (for smooth scrolling). Speech output is available letter by letter during typing or word by word. A variety of languages and speech synthesizers can be used with Supernova. “Hooked access” allows parts of the screen, such as the current line of a word processor, to be permanently displayed. Supernova also supports graphic object labeling and provides speech output and a braille layout mode.

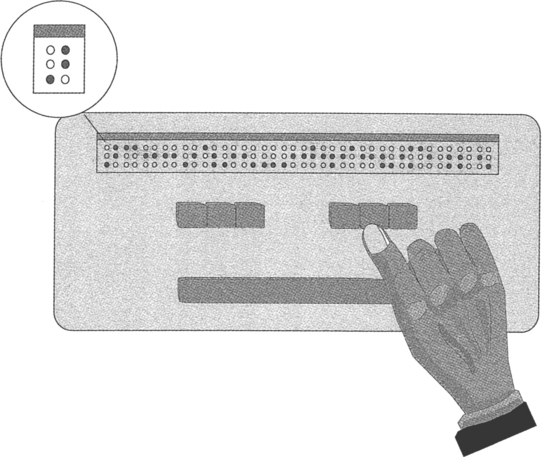

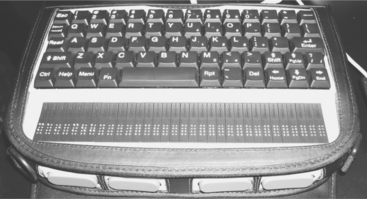

Portable Braille Note Takers and Personal Organizers.

Stand-alone data managers or personal organizers vary in size from a compact 4.5 inches square and about 1.5 inches thick to the size of a laptop computer (approximately 9 × 12 inches) (for example, the Braille Lite Series, Freedom Scientific, St. Petersburg, Fla., www.freedomsci.com; Braille Desk 2000, Artic Technologies, Troy, Mich., www.artictech.com; Braille Wave, Handy Tech Elektronik GmbH, Germany, www.handytech.de; Braille Note and Voice Note, HumanWare, Concord, Calif., www.humanware.com; Aria, Sensory Tools, Robotron Proprietary Limited, St. Kilda, Australia, www.sensorytools.com/products.htm; MPO 0550, Alva Access Group, Oakland, Calif., www.alva-bv.nl/). A typical model is pictured in Figure 8-10.

Some models use a braille keyboard for input and others use a standard QWERTY keyboard. The braille keyboard has one key for each of the six dots in a braille cell. Additional keys are used for eight-dot braille and for control, editing, and data management. Output takes several forms. Synthesized speech is available in all units. Earphone and speaker output for the synthesized speech are also available. Some models include a refreshable Grade 2 braille display (from 8 to 32 braille cells) either alone or paired with synthetic speech. The speech synthesizer and refreshable braille display can also be used as outputs (replacing the output from the video monitor) on the unit or in conjunction with screen reader software on a PC. Additional outputs available on selected models include computer file transfer, Internet, and e-mail access by use of a modem (generally external to the note taker), and print. Some models also dial a telephone automatically from the data in the built-in address book.
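The six-key (or eight-key) braille keyboard can be pictured as chorded input: the keys pressed together name the raised dots of one cell. The following is a minimal sketch of that idea, not the firmware of any note taker. It assumes the dot-to-bit layout of the Unicode Braille Patterns block (beginning at U+2800), and the function names `chord_to_cell` and `chord_to_letter` and the partial letter table are hypothetical, chosen only for illustration.

```python
# Dot number -> bit value, following the Unicode Braille Patterns block,
# where the character for a cell is U+2800 plus the sum of its dot bits.
DOT_BITS = {1: 0x01, 2: 0x02, 3: 0x04, 4: 0x08,
            5: 0x10, 6: 0x20, 7: 0x40, 8: 0x80}

# A few uncontracted (Grade 1) letters keyed by their dots.
# Illustrative only; a real table covers the whole alphabet and punctuation.
LETTERS = {
    frozenset({1}): "a",
    frozenset({1, 2}): "b",
    frozenset({1, 4}): "c",
    frozenset({1, 4, 5}): "d",
    frozenset({1, 5}): "e",
}


def chord_to_cell(dots):
    """Return the Unicode braille character for the dot keys pressed together."""
    mask = 0
    for dot in dots:
        mask |= DOT_BITS[dot]  # raises KeyError for a dot outside 1..8
    return chr(0x2800 + mask)


def chord_to_letter(dots):
    """Look up the print letter for a chord, or '?' if it is not in the table."""
    return LETTERS.get(frozenset(dots), "?")
```

Because a chord is treated as a set, the order in which the keys go down does not matter; only the combination held when the keys are released determines the cell.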

Built-in programs vary somewhat among models. All include some sort of word processing for writing away from a computer (e.g., while sitting by the pool or riding a bus to work), editing documents developed on a PC word processor, and taking notes in class or at meetings. Other programs built into specific models, in various combinations, include a calendar, address book, calculator, timer or watch, e-mail access, Internet browser, and text (ASCII)-to-braille translation. Data are stored in both random-access memory and flash read-only memory (ROM). Removable flash memory cards increase both flexibility and growth potential because their capacity is continually being increased. Flash memory storage connected through USB ports adds to storage capability and provides an additional means of transferring files between the note taker and a PC. Direct transfer through a USB port is also routinely available. Several portable note takers include productivity software such as word processing and e-mail with full access through speech or braille output. MP3 music players and Web access by Bluetooth or WiFi protocols are also available on many units. Some note takers can also be used as computer keyboards through the built-in USB port or can function as cell phones (e.g., the Alva MPO 0550). Storage and manipulation of information may be in the form of braille or print or both. Control may be through additional keys with specific functions or through a speech output menu of choices.

The PAC Mate (Freedom Scientific, St. Petersburg, Fla., www.freedomsci.com) is a fully functional pocket PC with voice and braille options. It includes Microsoft productivity software (e-mail, database, spreadsheet, word processing, scheduling), MP3, Web access, and other features to bring to the blind user the ease of use and functionality that sighted users of portable PCs enjoy.

Speech as an Auditory Reading Substitute

Because reading is based on visual language, it is logical that auditory substitution for reading also uses language—that is, speech. Audio technology is the primary method for information storage and retrieval used by individuals who are blind (Scadden, 1997). All the approaches discussed in this section have speech as the output mode.

Recorded Audio Material.

The oldest and most prevalent use of auditory substitution for persons with visual impairment is recorded material. Recorded audio material is currently distributed on cassette tapes, CDs, and CD-ROMs (for example, Recording for the Blind and Dyslexic, www.rfbd.org; National Library Service for the Blind and Physically Handicapped, Library of Congress, http://www.loc.gov/nls/index.html).

The major type of recorded material is cassette tapes. Several player models are provided by the National Library Service for the Blind and Physically Handicapped. The major features included on some or all of these are playback speeds of 15/16 inch per second (nonstandard, for longer play and copyright protection) and 1⅞ inches per second (the standard used for music tapes), variable speed control, portability, automatic reverse or rewind, and frequency compensation to allow increased speed without a “chipmunk” sound. The variable speed allows the listener to review material faster than it was originally spoken. With practice, it is possible to understand speech at rates up to four times normal. Some people also use this type of machine to record lectures and then review the material in lieu of note taking. Virtually any local library can duplicate cassette tapes to make backup copies for distribution.
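The effect of tape speed and variable-speed playback on listening time is simple arithmetic, sketched below. The example assumes the standard cassette speed of 1⅞ inches per second and the half-speed 15/16 inch per second format; the tape length used in the usage note and the function names `recording_minutes` and `listening_minutes` are hypothetical.

```python
STANDARD_IPS = 1.875   # 1 7/8 inches per second, standard cassette speed
SLOW_IPS = 0.9375      # 15/16 inch per second, long-play talking book speed


def recording_minutes(tape_inches, ips):
    """Minutes of audio that fit on a given length of tape at a given speed."""
    return tape_inches / ips / 60


def listening_minutes(recorded_minutes, speed_factor):
    """Minutes needed to hear a recording played at speed_factor times normal."""
    if speed_factor <= 0:
        raise ValueError("speed_factor must be positive")
    return recorded_minutes / speed_factor
```

For a hypothetical tape side 5062.5 inches long, `recording_minutes(5062.5, STANDARD_IPS)` gives 45 minutes and `recording_minutes(5062.5, SLOW_IPS)` gives 90 minutes, so halving the tape speed doubles the capacity. A practiced listener playing that 90-minute side at twice normal speed would finish it in `listening_minutes(90, 2)`, or 45 minutes.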

The use of CD-ROMs allows a great deal of information to be placed on a single disk. One CD-ROM can store a large amount of data. Reproduction costs are low. The major advantages of CD-ROMs for music are greatly increased fidelity resulting from greater frequency response, smaller size of both player and disks than phonograph records, and indexing, which can be used to find a particular track. These features are being exploited in recorded material for individuals who are blind (Scadden, 1997). The use of digitized audio information allows voice recordings to be mixed with headings that allow easier searching of the text. Multimedia presentations are also commonplace with CDs, allowing both visual and auditory presentation of information, thereby increasing the potential market and reducing price. Audio displays are also being used for the presentation of mathematical information by computers and speech synthesizers and as a substitute for data presentation (e.g., tables, charts) (Scadden, 1997). In this form a book can be loaded into a PC word processor (either Windows or Macintosh based) and displayed on the screen. Because the CD-ROM is basically a storage medium for the computer, sophisticated search strategies can be used to find a particular item or place in the text. For persons with low vision or blindness, the availability of CD-ROM–based reading materials opens up many different options for obtaining access to print materials. For example, with an enlarged screen output, reading material on a CD-ROM can be accessed and presented to a person with low vision by use of a computer. More significant, however, is the use of either braille or speech output from the computer to allow individuals who are blind to read from the CD-ROM.

One of the challenges in any electronic format is standardization. Different countries have different recording formats for talking books on tape, and there are many formats for word processors in digital form. For this reason an international group, the DAISY Consortium (www.daisy.org), has developed an international standard for digital talking books (Kerscher and Hansson, 1998). This standard covers the production, exchange, and use of digital talking books. The goal of the DAISY Consortium is to promote the use of digital books that comply with an international standard. The members of the consortium are associations and organizations across the world that are involved in the provision of reading materials for individuals who are blind. The DAISY standard is hardware platform and operating system independent, and it makes use of the Web accessibility standards developed by the World Wide Web Consortium (W3C). There are several on-line sources for books in the DAISY format (for example, Benetech, www.bookshare.org; National Library Service, U.S. Library of Congress, www.loc.gov/nls; Recording for the Blind and Dyslexic, www.rfbd.org; Dolphin Audio Publishing, www.dolphinaudiopublishing.com). These sites have thousands of titles, including books for children and adults, textbooks, and newspapers. Many of the books are available in both DAISY and Braille Ready Format (BRF) Grade 2 braille format for printing books or using refreshable braille displays. Players for DAISY format CDs are available from several manufacturers (for example, Telex, Burnsville, Minn., www.telex.com; FSReader, Freedom Scientific, St. Petersburg, Fla., www.freedomsci.com; EaseReader, Dolphin Audio Publishing, www.dolphinaudiopublishing.com; Victor, HumanWare, Concord, Calif., www.humanware.com). A typical DAISY format reader is shown in Figure 8-11.
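The structured navigation that a digital talking book offers can be pictured as a list of headings, each with a level and a starting time in the audio. The sketch below is a simplified illustration of that idea only. The `Heading` record, its field names, the helper functions, and the sample entries are all hypothetical, and the code does not read or reproduce the actual DAISY file formats.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Heading:
    level: int            # 1 = chapter, 2 = section, and so on
    title: str
    start_seconds: float  # where this heading begins in the audio


# Hypothetical table of contents, ordered by start time.
SAMPLE_TOC = [
    Heading(1, "Chapter 1", 0.0),
    Heading(2, "Section 1.1", 30.0),
    Heading(2, "Section 1.2", 95.5),
    Heading(1, "Chapter 2", 200.0),
]


def next_heading(toc: List[Heading], position: float,
                 max_level: int = 6) -> Optional[Heading]:
    """Return the first heading after the current position at or above max_level."""
    for heading in toc:
        if heading.start_seconds > position and heading.level <= max_level:
            return heading
    return None


def find_heading(toc: List[Heading], text: str) -> Optional[Heading]:
    """Return the first heading whose title contains text (case-insensitive)."""
    needle = text.lower()
    for heading in toc:
        if needle in heading.title.lower():
            return heading
    return None
```

With a structure like this, a player can skip by section (`max_level=2`) or jump straight to the next chapter (`max_level=1`), which is the kind of movement that linear tape playback cannot provide.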

Synthetic Speech Output Reading Machines.

Auditory output from automatic reading machines is provided by synthetic speech devices. Types of speech synthesis and the conversion of ASCII text into speech (called text-to-speech) are discussed in Chapter 7. Reading machines for persons with visual impairments or learning disabilities use the standard types of speech synthesis. There are a variety of both hardware- and software-based speech synthesizers for use with reading programs or aids (see Chapter 7). Because many reading devices are based on PCs, screen readers (programs that provide synthetic speech output from the computer screen; see Chapter 7) can also be used as reading machines.

There are several ways in which information can be converted to ASCII form for use by a screen reading program. The most common is to use a scanner and OCR program as discussed in this section. A second approach is to obtain CD-ROMs that contain computer-readable written material (Dixon and Mandelbaum, 1990). There are services that make books on disk available to persons who are blind. The computer disks have files that can be loaded into a word processor and then read by using a screen reader program. The CD-ROMs provide significantly greater storage than floppy disks, and they are made available to blind readers by publishers. Dictionaries, almanacs, and encyclopedias are among the many publications available in this format. A major advantage of this type of storage is the indexing and searching capability provided by CD-ROM technology. There is now a large and growing amount of literature (especially the classics) available on the Internet in electronic form (called e-text). Many newspapers put their whole issues on the Internet, as do on-line news and sports services. Individuals who are blind can read this information by using screen readers and accessible Web browsers.

Access to Visual Computer Displays for Individuals Who Are Blind

For individuals who are blind and need to access a computer, the problem is one of providing input through an alternative sensory pathway, auditory or tactile or both. Auditory output is provided by voice synthesizers (hardware or software based), and tactile output is generally provided by refreshable braille displays and embossed hard copy.

Systems that provide voice synthesis output for blind users are generally referred to as screen readers. A computer user who is blind should be able to access all the same graphics and text as a person who is sighted. There are a variety of commercially available speech synthesizers, and many screen readers use their own proprietary speech synthesis software with computer sound cards and are also compatible with refreshable braille displays. Windows includes a basic screen reader utility, Narrator, and a Toggle Keys feature in its accessibility options, which are accessed through the control panel. These features are described in Table 8-1. The Narrator program is a text-to-speech utility for people who are blind or who have low vision; it reads text that is displayed on the screen in an active window, menu options, or text that has been typed into a window. The Toggle Keys option generates a sound when the CAPS LOCK, NUM LOCK, or SCROLL LOCK key is pressed.

A sighted computer user will often scan a screen for a specific piece of information or to obtain a sense of the continuity and flow of the written material, which includes looking for specific screen attributes (such as highlighted or underlined material and features of the GUI). For the user who is blind, duplicating this capability requires that the adapted output system provide reading of text and descriptions of graphics. Screen reader programs must also convey on-screen messages or prompts for user input during program operation. Graphic characters should have text labels attached to them so that they can be read to the consumer by speech synthesis software. Currently available screen reader programs provide navigation assistance by keyboard commands. Examples of typical functions are movement to a particular point in the text, finding the mouse cursor position, providing a spoken description of an on-screen graphic or a special function key, and accessing help information (for example, Screen Reader2 from IBM, Special Needs Systems, Austin, Tex., www.rs6000.ibm.com/sns; Jaws for Windows from Freedom Scientific, St. Petersburg, Fla., www.freedomsci.com; Zoom Text Xtra Level 2 from AI Squared, Manchester Center, Vt., www.aisquared.com; Supernova and Hal from Dolphin Computer Access, San Mateo, Calif., www.dolphinusa.com; Magnum and Magnum Deluxe from Artic Technologies, Troy, Mich.; Protalk32 for Windows, Biolink Computer, Vancouver, Canada, www.biolink.bc.ca; Window Eyes from GW Microsystems, Fort Wayne, Ind., www.gwmicro.com/gwie).
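The role of text labels and screen attributes can be illustrated with a small sketch of how on-screen items might be turned into phrases for a speech synthesizer. This is a hypothetical model, not the design of any commercial screen reader; the `ScreenElement` record, its fields, and the functions `spoken_form` and `read_screen` are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ScreenElement:
    kind: str                    # for example "text", "graphic", or "button"
    text: str = ""               # visible text, if any
    label: Optional[str] = None  # text label attached to a graphic item
    highlighted: bool = False    # a screen attribute worth announcing


def spoken_form(element: ScreenElement) -> str:
    """Build the phrase that would be passed to a speech synthesizer."""
    if element.kind == "text":
        phrase = element.text
    elif element.label:
        phrase = f"{element.kind}: {element.label}"
    else:
        # Without a label there is nothing meaningful to speak.
        phrase = f"unlabeled {element.kind}"
    if element.highlighted:
        phrase = "highlighted, " + phrase
    return phrase


def read_screen(elements: List[ScreenElement]) -> List[str]:
    """Return the phrases for every element in reading order."""
    return [spoken_form(element) for element in elements]
```

The unlabeled case shows why labeling matters: a graphic with no attached text can only be announced as present, not described.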