Disabled Human User of Assistive Technologies

INFORMATION PROCESSING MODEL OF THE ASSISTIVE TECHNOLOGY SYSTEM USER

SENSORY FUNCTION AS RELATED TO ASSISTIVE TECHNOLOGY USE

Control of Posture and Position

PERCEPTUAL FUNCTION AS RELATED TO ASSISTIVE TECHNOLOGY USE

COGNITIVE FUNCTION AND DEVELOPMENT AS RELATED TO ASSISTIVE TECHNOLOGY USE

Developmental Disabilities and Cognitive Deficits

Problem Solving and Decision Making

PSYCHOSOCIAL FUNCTION AS RELATED TO ASSISTIVE TECHNOLOGY USE

Assistive Technology Use Over the Life Span

MOTOR CONTROL AS RELATED TO ASSISTIVE TECHNOLOGY USE

Speed and Accuracy of Movements

Development of Movement Patterns Through Motor Learning

Relationship Between a Stimulus and the Resulting Movement

EFFECTOR FUNCTION AS RELATED TO ASSISTIVE TECHNOLOGY USE

Factors Underlying the Use of Effectors

On completing this chapter, you will be able to do the following:

1 Place the human user of assistive technologies in the proper context relative to the activities and contexts of human performance

2 Describe and apply an information processing model of the disabled human operator of assistive technologies

3 Use basic human factors and neuroscience concepts to describe the interaction between persons with disabilities and assistive devices

4 Describe how disabilities, learning (including experience), age, and changing conditions affect the human performance model and the interaction among the human, the activity, and the context

5 Apply basic principles of human performance to specific application areas (activities) and contexts

In the previous chapter the assistive technology system and the interrelationships among its component parts are described. In this chapter the focus is on the human user of assistive technologies. It is assumed that the reader has a general knowledge of normal human physiology and of disabilities, and therefore the emphasis is on those characteristics of disability that influence the use of assistive technologies. The Disability Statistics Center at the University of California, San Francisco, has provided the following statistics based on the National Health Interview Survey, a continuing national household survey consisting of 49,401 household interviews with 128,412 people in 1992 (www.dsc.ucsf.edu). Data collected include information regarding basic personal assistance needs (i.e., whether people need help with activities of daily living such as bathing, eating, dressing, or getting around inside) and routine personal assistance needs (i.e., whether people need help with instrumental activities of daily living such as household chores, doing necessary business, shopping, or getting around for other purposes) as a result of chronic health conditions.

• Approximately 15% (37.7 million) of the United States’ population have a limitation that affects a major life activity such as working or going to school. These individuals report 1.6 conditions per person on average, for a total of 61 million limiting conditions.

• More than 19 million individuals ages 18 to 69 have physical or mental conditions that keep them from working, attending school, or maintaining a household. Women report a higher number of activity-limiting conditions than do men.

• Minorities, the elderly, and those in lower socioeconomic populations have a greater incidence of disabilities and need greater assistance in both activities of daily living (52% of those needing assistance are older than 65 years) and instrumental activities of daily living (58% are older than 65 years).

• A newborn infant can be expected to have 13 years of limited activity out of a 75-year life expectancy.

• National disability-related costs are more than $170 billion annually.

These statistics indicate that activity-limiting disabilities are widespread, unevenly distributed across the general population, and expensive. Assistive technologies, if appropriately applied, can help to overcome the activity limitations imposed by disabilities. This requires a thorough understanding of human abilities and skills, especially in the presence of a disability.

In designing assistive technology systems, it is important to build on the skills of the user and provide assistive devices that augment or replace functional limitations. Because the goal is to increase functional independence for individuals with disabilities, it is important to focus on remaining function, rather than on lost function. In this chapter a description of the human user of assistive technologies is developed.

INFORMATION PROCESSING MODEL OF THE ASSISTIVE TECHNOLOGY SYSTEM USER

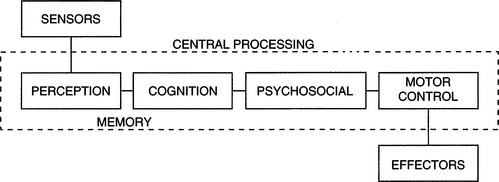

Human factors engineers and psychologists have developed the model shown in Figure 3-1 to describe the human component of a human-machine interaction (Bailey, 1989). This model is useful for describing the human operator of an assistive technology system. The individual blocks shown in Figure 3-1 delineate functional rather than structural components, and they are used to help identify the important considerations in human-machine interaction. Bailey (1989) lists three things that a system designer must know about the user: (1) what can be done (skills), (2) what cannot be done (limitations), and (3) what will be done (motivation). Motivation is directly related to the person’s goals and needs and how well the assistive technology system meets them.

Figure 3-1 An information processing model of the human operator of assistive technologies. Each block represents a group of functions related to the use of technology. Taken together, these components constitute the intrinsic enablers for the human.

Skills and limitations in the three component areas shown in Figure 3-1 are considered when designing assistive technology systems. Taken together, these components constitute the intrinsic enablers for the human. Input from sensors is necessary for obtaining data from the environment, and limitations can arise in both the sensitivity (minimum detectable levels of light, sound, or pressure) and range (allowable variation in size, amplitude, or magnitude of the sensory input). When assistive technology system use is being considered, the visual, auditory, tactile, proprioceptive, kinesthetic, and vestibular sensory systems all play important roles. Sensory data produced by each of these systems are important for the successful use of assistive technologies. Some assistive technologies specifically address sensory loss. For example, reading and mobility systems for the visually impaired and hearing aids for individuals with auditory impairment are designed to compensate for these specific losses (see Chapters 8 and 9). However, sensory function affects virtually all areas of assistive technology application, and it is important to consider sensory function as an integral part of the overall human capabilities required for the successful operation of an assistive technology system.

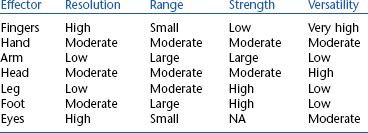

The term effectors will be used to describe the neural, muscular, and skeletal elements of the human body that provide movement or motor output. The result of the movement of the effectors is motor output. These elements work together to allow movement under the control of central processing and in response to sensory input. Limitations can arise from impairments in any element or combinations of them. Effectors provide the motor outputs that can be used for the control of assistive technology systems. Often, assistive technology systems are controlled by hand movements. For example, powered wheelchairs typically use joystick control activated by hand movements, and computers and augmentative communication systems use hand and finger movements for keyboard use. However, other anatomical sites may be used for control, and the components of postural control and reflexes also contribute to the generation of motor output.

Interposed between the sensors and effectors are the central processing functions of perception, cognition, neuromuscular control (including motor planning), and psychological factors. Perception is the interpretation and assignment of meaning to data received from the sensors, and it involves an interaction between information derived from sensed data and information stored in memory based on previous sensory experiences (Bailey, 1989). As Dunn (1991) points out, sensory and perceptual function provides the mechanisms by which an individual interacts with the environment. It is the combination and interpretation of data from all the sensory systems that provide a meaningful picture of the environment and our interaction with it.

The term cognition refers to attention, memory, problem solving, decision making, learning, language, and other related tasks. As pointed out by Duchek (1991), virtually all aspects of performance are based on cognitive function, including performance that uses assistive technology systems and human performance in general. For example, the use of a powered wheelchair requires several types of cognitive function. The human operator must visually scan the environment, process the sensory data, make decisions as to the direction of movement desired, and activate the corresponding effector to cause the motion of the wheelchair in the desired direction. Once in motion, the user must attend to the environment to avoid obstacles and hazards and make instantaneous decisions regarding speed and direction. The user may also be required to engage in problem solving to negotiate a tight space or recover from an error. Cognitive processes involved in this example include attention, decision making, problem solving, language (e.g., spatial concepts such as left, right, forward, back), and memory. Without these capabilities, it would be difficult to control a powered wheelchair effectively.

It is also sometimes difficult to separate cognitive performance from sensory or motor performance. For example, an individual using an electric feeding device (see Chapter 14) requires sensory input to locate food on a plate, decision making to select the desired food item to be eaten, sufficient motor skills to activate a control interface that directs the spoon to the plate to pick up food and move it to the mouth, and monitoring of the path of the spoon as it travels. Because this is a complex set of tasks, it is difficult to determine whether failure to complete them successfully is caused by a sensory or a perceptual problem (e.g., difficulty in separating the food from the background of the plate), a cognitive problem (e.g., forgetting what the sequence of tasks is or inability to attend long enough to complete the task), or a motor limitation (e.g., inability to activate the control interface or inability to physically remove the food from the spoon because of lack of oral-motor control).

Motor control is the result of the integration of sensory, perceptual, and cognitive components into a motor pattern that is executed by the effectors. This process involves many degrees of feedback and feed-forward control, and there are many current theories relating to the precise mechanisms involved (see Burgess, 1989, for example). The term motor control refers to the central processing components of effector regulation. These components may be in the brain or spinal cord, and smooth, precise movements are possible only through integration of information from the sensors, other central nervous system (CNS) components (e.g., perception, decision making), and feedback from the effectors.

The term motor planning is used to describe the process by which purposeful movements are executed to accomplish a task (Warren, 1991). This is a central processing activity that requires the highest level of motor control. For example, the tasks of writing, eating, using a hand tool, and typing all require motor planning for successful completion. At first, the learner must concentrate on each step to learn a task. Motor learning occurs as the task is practiced over and over, and many tasks become automatic with practice (i.e., we are no longer aware of the individual steps in the task). However, although the task may become automatic or subconscious, motor planning is still involved; an individual with CNS damage may lose this ability. Thus motor output involves sensory data collection (from internal and external sensors), interpretation and integration of these data (perception), conscious planning of a movement (not always necessary), development of a movement pattern that is responsive to the plan and consistent with the sensory data (motor control), and execution of the movement (effectors). Motor control is discussed in detail later in this chapter.

Psychosocial function consists of identity, self-protection, and motivation. These factors are related to the acceptance of a disability, the approach a person takes to the assistive technology, and how effective the technology can be for the person. Concepts from self-identity and self-protection are used to describe how a person with a disability might interact with assistive technologies and how successful he or she is likely to be in using them. Motivation greatly influences how much an individual works to develop skill in using an assistive technology and the degree to which he or she is successful in that use.

Limitations in function can occur in any of these areas as a result of trauma, disease, or a congenital condition. A major goal of assessment for the purpose of designing assistive technology systems is to identify the disabled person’s skills in the areas of sensory function, central processing, and motor output and control.

SENSORY FUNCTION AS RELATED TO ASSISTIVE TECHNOLOGY USE

In this section the major sensory systems that are involved in assistive technology system use are described. The emphasis is on human sensory performance and how it affects use of assistive technologies to compensate for sensory limitations. These compensatory technologies are discussed in succeeding chapters.

Visual Function

Visual function is important (but not essential) for the effective use of assistive technology systems, especially regarding access systems. For example, in using augmentative communication systems, individual items must be found in arrays of vocabulary elements, scanning cursors must be tracked, and visual feedback is often used to signify successful message generation. Likewise, to use a powered wheelchair, visual scanning of the environment must be present, and there must be adequate acuity and visual field to guide the chair around obstacles effectively, safely, and efficiently. For individuals who have visual impairments, reading print material or computer displays can be difficult or impossible, and assistive technologies can be of help.

When an individual’s primary disability is visual, it is obvious that the assistive technology must accommodate needs in this area. Often other modalities must be used, typically auditory or tactile senses; general purpose visual substitution systems for mobility and reading are discussed in Chapter 8. However, as Cress et al (1981) point out, the incidence of visual impairment in individuals with severe physical disabilities may be as high as 75% to 90%. Often these visual difficulties are not identified or treated. Because assistive technology application is so dependent on the use of visual input, visual function must be carefully evaluated (see Chapter 4), and it is necessary to specify and design systems to account for special visual requirements. Several types of measurements are typically used to assess visual capability. These include visual acuity (target size), visual range or field size, visual tracking (following a target), and visual scanning (finding a specific visual target in a field of several targets). Each of these is important in the use of assistive technology systems; how they are measured is described in Chapter 4.

Visual Acuity.

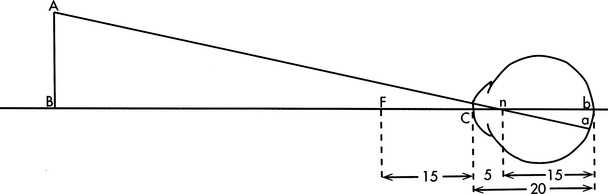

The term visual acuity is used to refer to all those aspects of the visual system that are related to focusing an image on the retina and extracting sensory data from that image. Three factors are important in this process: (1) size of the object, (2) contrast between the object and the background, and (3) spacing between the object and surrounding background objects. One way to measure the size of an object is to determine the visual angle formed by that object when it is viewed at a known distance. Figure 3-2 illustrates the concept of visual angle. Visual angles of common objects include 13 minutes of arc for pica-typed letters, 2 degrees for a quarter held at arm’s length, and 1 second for a quarter at 3 miles (Bailey, 1989). The minimal visual angle threshold for the eye is approximately 1 second of arc; however, the recommended visual angle for ease of viewing in normal light is 15 minutes of arc (21 minutes in reduced light) (Bailey, 1989).

Figure 3-2 The visual angle is the angle just in front of the cornea, C, formed by object AB. (From Ruch TC, Patton HD: Physiology and biophysics, ed 19, Philadelphia, 1966, WB Saunders.)
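The visual angle concept can be illustrated with a short calculation. The following is a minimal sketch, assuming the standard geometric relation (angle = 2·arctan(size / (2 × distance))); the function names, the quarter diameter of 24.26 mm, and the 0.5-m viewing distance are illustrative assumptions and do not come from Bailey (1989).

```python
import math

QUARTER_DIAMETER_M = 0.02426   # assumed diameter of a US quarter (about 24.26 mm)
METERS_PER_MILE = 1609.344


def visual_angle_arcmin(object_size_m: float, distance_m: float) -> float:
    """Visual angle subtended by an object, in minutes of arc."""
    angle_rad = 2.0 * math.atan(object_size_m / (2.0 * distance_m))
    return math.degrees(angle_rad) * 60.0


def min_size_for_angle_m(angle_arcmin: float, distance_m: float) -> float:
    """Object size (meters) needed to subtend a given visual angle at a distance."""
    angle_rad = math.radians(angle_arcmin / 60.0)
    return 2.0 * distance_m * math.tan(angle_rad / 2.0)


if __name__ == "__main__":
    # A quarter viewed at 3 miles subtends roughly 1 second of arc.
    arcsec = visual_angle_arcmin(QUARTER_DIAMETER_M, 3 * METERS_PER_MILE) * 60.0
    print(f"Quarter at 3 miles: {arcsec:.2f} seconds of arc")

    # Symbol height needed to reach the recommended 15 minutes of arc
    # on a display viewed from a hypothetical distance of 0.5 m.
    height_mm = min_size_for_angle_m(15.0, 0.5) * 1000.0
    print(f"Symbol height for 15 arcmin at 0.5 m: {height_mm:.2f} mm")
```

Used this way, the recommended visual angle can be converted into a minimum symbol size for a display or selection array once the user's viewing distance is known.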

Visual angle describes only the size of an object that is detectable. Contrast between the object and the background is equally important, and the visual threshold of interest is brightness. The minimal detectable brightness for normal human vision is a single candle seen at 30 miles on a dark, clear night (Bailey, 1989). This distance translates into measurable units of 10⁻⁶ millilamberts. For comparison, a tungsten filament light bulb emits 1 million millilamberts, and white paper has a brightness of 10 millilamberts in good reading light. The absolute value of the emission or reflection of light from an object is not as important as the degree to which the object differs from the background. The visual system functions best when contrast is high (Dunn, 1991). Busy visual fields have too many competing objects for the visual system to extract important visual data. In later chapters the implications of these aspects to assistive technology system assessment and design are discussed.

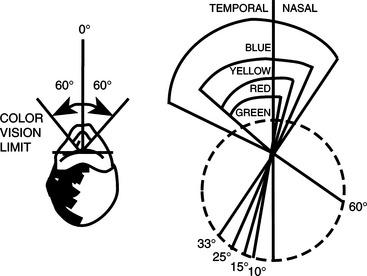

The eye is sensitive to colors in the visual spectrum (from violet to red), but it is not equally sensitive to all colors in this range. Also, different areas on the retina are sensitive to different colors (Bailey, 1989). If the eye is fixed and not allowed to rotate, the limits of color vision are 60 degrees to each side of the midline. Within this range, the response of the retina to colors is not equal for all wavelengths (colors). Figure 3-3 illustrates that blue objects are visible over the entire 60-degree range, whereas yellow, red, and green objects are recognizable only at points closer to the fixed (center) point of vision, which has implications for the design of systems for individuals who rely on peripheral vision or who have difficulty moving their eyes to track objects. If green or red is used, the person’s ability to see the object may be limited; visibility can be increased by using blue or yellow. Contrast can also be created by using different colors for foreground and background.

Visual Field.

With the head and eyes fixed on a central point, the normal range of peripheral vision in the right eye is 70 degrees to the left and 104 degrees to the right (Bailey, 1989). If the eyes are allowed to rotate but the head remains fixed, the range is 166 degrees to each side of the central point.

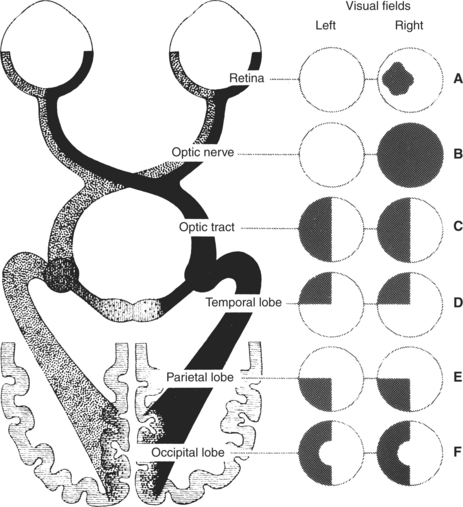

This typical visual field may be altered in several ways by disease or injury to the eyes, visual pathways, or brain. The most common types of visual field deficits are shown in Figure 3-4. Visual loss may occur in one or more of the quadrants of the left or right field. Dunn (1991) discusses the major causes of these losses. These types of losses are common in persons with disabilities such as cerebral palsy, traumatic brain injury, and diseases affecting the eyes and visual system. When assistive technology systems are specified and designed, the size and nature of the individual’s visual field must be taken into account.

Figure 3-4 Types of visual field deficits. A, Retinal lesion: blind spot in the affected eye. B, Optic nerve lesion: partial or complete blindness in that eye. C, Optic tract or lateral geniculate lesion: blindness in the opposite half of both visual fields. D, Temporal lobe lesion: blindness in the upper quadrants of both visual fields on the side opposite the lesion. E, Parietal lobe lesion: contralateral blindness in the corresponding lower quadrants of both eyes. F, Occipital lobe lesion: contralateral blindness in the corresponding half of each visual field, but with macular sparing. (From Umphred DA: Neurological rehabilitation, ed 2, St Louis, 1990, Mosby, p 721. Courtesy Smith Kline & French Laboratories, Philadelphia.)

Visual Tracking and Scanning.

Visual tracking is the ability to follow a moving object. This skill is necessary for many assistive technology tasks. Visual scanning differs from visual tracking in that the object does not move; instead the eyes are moved to different parts of a scene to find a specific object or location within the scene. Oculomotor function is required for normal vision and for assistive technology applications in which the eyes are used as an effector (see the section on motor control in this chapter). Conjunctive eye movements are those in which the eyes move together (e.g., saccades, vestibulo-ocular reflexes, optokinetic reflexes, and smooth-slow pursuit). In disjunctive eye movements the eyes do not track together, as in vergence during refocusing. These motor behaviors all need appropriate alignment of the eye muscles in addition to an intact motor system, and this is often not the case in persons with disabilities.

Eye movements are typically classified into two sets of systems: those that stabilize the retinal image and those that transfer gaze to a new target. The optokinetic and vestibulo-ocular reflexes are in the first category. All head movements serve as adequate stimuli for these reflexes; that is, the head movement serves as the input that generates the reflex. These reflexes may be impaired for many individuals with disabilities who have difficulty maintaining a stable head or trunk position. Smooth pursuit eye movements also serve to stabilize the retinal image. Transfer of gaze is accomplished by saccades, vergence, and head movements.

Visual Accommodation.

In the normal eye at rest, distant objects are focused on the retina. As the object is brought closer, the image falls in front of the retina unless the curvature of the lens is changed. The process by which the ciliary muscles change the curvature of the lens and hence the focal point of the eye is called visual accommodation. Accommodation is quantified by determining the change in the power of the lens of the eye as objects are brought closer. The power is calculated as the reciprocal of the focal distance of the eye, and it is measured in diopters (D). The closest point at which an object can still be focused is called the near point. For a person less than 20 years of age with normal visual accommodation, the near point is approximately 10 cm and the accommodation is approximately 12 D. As individuals age, their accommodative ability decreases. For example, at age 50 years the near point is at approximately 30 cm and the accommodation is reduced to less than 2 D; this situation leads to the prescription of reading glasses. Many types of disabilities affect accommodation; limitations in accommodation are referred to as accommodative insufficiency, which can be a significant factor when assistive technologies are used. For example, if a person is using a keyboard device with a visual display, the separation of these two system components may require constant accommodation as visual gaze is directed at the keyboard and then at the display and back to the keyboard. Appropriate placement of the keyboard and visual display can reduce the amount of accommodation that is required and can result in significantly improved overall system performance.
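The effect of component placement on accommodation can be estimated numerically. The sketch below assumes the simple optical relation that the focusing demand for a target, in diopters, is the reciprocal of the viewing distance in meters; the function names and the keyboard and display distances are hypothetical values chosen only for illustration.

```python
def focusing_demand_diopters(distance_m: float) -> float:
    """Focusing demand (D) for a target at the given distance: 1 / distance in meters."""
    if distance_m <= 0:
        raise ValueError("viewing distance must be positive")
    return 1.0 / distance_m


def accommodation_shift_diopters(distance_a_m: float, distance_b_m: float) -> float:
    """Change in focusing demand (D) when gaze moves between two viewing distances."""
    return abs(focusing_demand_diopters(distance_a_m)
               - focusing_demand_diopters(distance_b_m))


if __name__ == "__main__":
    # Hypothetical layout: keyboard at 0.40 m, display at 0.60 m.
    far_apart = accommodation_shift_diopters(0.40, 0.60)
    # Hypothetical revised layout: display moved to 0.44 m.
    close_together = accommodation_shift_diopters(0.40, 0.44)
    print(f"Keyboard 0.40 m, display 0.60 m: shift of {far_apart:.2f} D")
    print(f"Keyboard 0.40 m, display 0.44 m: shift of {close_together:.2f} D")
```

Under these assumed distances, bringing the display close to the plane of the keyboard reduces the required shift from about 0.83 D to about 0.23 D, which is the kind of reduction the placement recommendation above is aiming for.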

Common Visual Deficits.

Visual limitations are common in many types of disabilities. Two studies in this section illustrate how these limitations can affect the design of assistive technology systems. One example is of a congenital disability (cerebral palsy) and one example is of an adventitious disability (traumatic brain injury).

Duckman (1979) studied ocular function in a population of 25 children with cerebral palsy. He found that 92% of the children had ocular motor dysfunction of some type: 40% had significant refractive errors, 56% had strabismus, 100% had accommodative insufficiency, 100% had poor directional concepts, and 78% had visual perception dysfunction. These results parallel other reports in the literature, and they indicate that the visual system is far from normal in this population. Duckman states that the poor directional concepts were so severe that “most children did not even have a concept of direction on their own bodies” (p. 1015).

The high degree of accommodative insufficiency was not expected by Duckman, and he stated that “these children almost demonstrated ‘paralyses’ of accommodation” (p. 1015). Most of the children were unable to make shifts of as little as 0.25 D in their accommodative systems. This finding has direct bearing on tasks that require frequent redirection of gaze, such as looking at a keyboard to find the desired character and then looking at a display or screen to monitor the selections. It also helps explain the success of systems in which eye gaze is used in one plane only (e.g., vertical) rather than requiring movement horizontally and vertically (Goosens and Crain, 1987).

These considerations dictate that great care must be exercised when persons with disabilities are asked to perform visual tasks. For example, communication systems using eye gaze as a method of indicating choices typically rely on printed targets (e.g., “yes” or “no”) to which the eyes must be directed (Goosens and Crain, 1987). Given the slow movements, tracking asymmetries, and difficulties with accommodation, it is not surprising that the use of these approaches is difficult for severely disabled persons and that development of these skills can take many hours of practice (see Light, Beesley, and Collier, 1988, for example).

Tychsen and Lisberger (1986) have shown that flaws in the visuomotor systems underlie deficits in the processing of visual motion. They note that the misalignment of the eye muscles (strabismus) in early life results in a permanent misalignment of the horizontal axes for both eyes, even after surgical correction of the muscle defect. Further, their tests demonstrate (1) a nasal-temporal asymmetry in the rate of smooth pursuit eye movement, given a horizontally moving target and (2) a vertical asymmetry in smooth pursuit, given a vertically moving target. Psychophysical judgments by their subjects revealed that targets were seen to move more rapidly in one direction than in the other when the targets were traveling at the same speed.

Padula (1988) describes a similar situation for individuals with traumatic brain injuries. He describes a posttrauma vision syndrome with characteristics of exotropia, exophoria, accommodative dysfunction, convergence insufficiency, low blink rate (related to attention level), spatial disorientation, and balance and posture difficulties. Individuals with this syndrome typically have diplopia (double vision), movement of objects located in the periphery, visual memory problems, poor tracking ability, and poor concentration and attention. Padula also describes remarkable improvement in functional ability when prism lens glasses are used by these individuals. These characteristics and symptoms are similar to those described by Duckman (1979) for cerebral palsy.

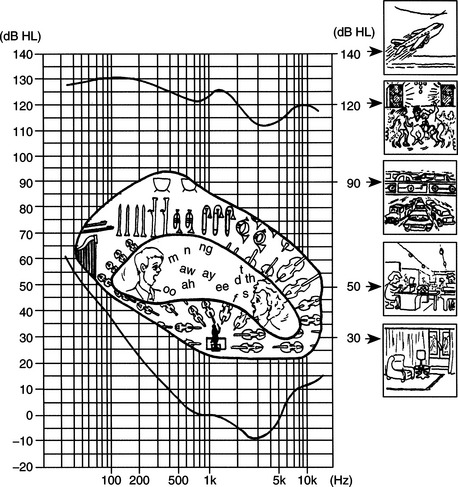

Auditory Function

Several types of auditory function are important for the use of assistive technology systems. Auditory thresholds include both the amplitude and frequency of audible sounds. The amplitude of sound is measured in decibels (dB). This unit is based on the logarithm of the ratio of the sound pressure being heard to the smallest sound pressure detectable by the ear (20 micropascals). This minimal threshold is equivalent to the ticking of a watch under quiet conditions at 20 feet away. Because decibels are calculated logarithmically, each 20-dB increase in sound pressure level corresponds to a tenfold increase in the amplitude of the sound. Figure 3-5 shows sound pressure levels for a variety of typical sounds (Bailey, 1989).

Figure 3-5 The sensitivity of the human ear to frequency is shown on the plot. This curve is normalized to 0 dB at 1000 Hz. The reference pressure is 0.0002 dynes/cm2. Along each side and in the center of the plot are shown frequencies and intensities of common sounds and speech. (From Ballantyne D: Handbook of audiological techniques, London, 1990, Butterworth-Heinemann.)
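The decibel scale can be made concrete with a brief calculation. This is a minimal sketch, assuming the standard definition of sound pressure level, SPL = 20·log10(p / p0), with the reference pressure p0 = 20 micropascals (equivalent to 0.0002 dynes/cm2); the function names are illustrative.

```python
import math

P_REF_PA = 20e-6  # reference pressure: 20 micropascals (0.0002 dynes/cm2)


def spl_db(pressure_pa: float) -> float:
    """Sound pressure level in dB relative to the 20-micropascal reference."""
    return 20.0 * math.log10(pressure_pa / P_REF_PA)


def pressure_ratio(delta_db: float) -> float:
    """Factor by which sound pressure amplitude changes for a given change in dB."""
    return 10.0 ** (delta_db / 20.0)


if __name__ == "__main__":
    print(f"Reference pressure: {spl_db(P_REF_PA):.1f} dB")
    print(f"Ten times the reference pressure: {spl_db(10 * P_REF_PA):.1f} dB")
    print(f"A 20-dB increase multiplies pressure by {pressure_ratio(20.0):.1f}")
    print(f"A 6-dB increase multiplies pressure by {pressure_ratio(6.0):.2f}")
```

Under this definition, a 20-dB step corresponds to a tenfold change in sound pressure amplitude, and a step of about 6 dB corresponds to a doubling.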

The concept of sound pressure level and the values shown in Figure 3-5 are particularly important in consideration of the context for assistive technology use. One example of the application of these principles is Carolyn’s use of an augmentative communication device that has voice synthesis output.

Impairment of auditory function has two major effects: loss of input information and inability to monitor speech output. The latter can result in significant difficulties in oral communication. There are several assistive technology approaches to providing oral communication assistance to persons who have an auditory impairment. One approach is to provide feedback, either visually or tactilely, that represents the person’s speech patterns and relates them to typical speech. A second approach is to provide alternatives to oral communication, such as visual displays that are read by the listener. These and other approaches are discussed in Chapter 9.

Auditory Thresholds.

The typical range of frequencies that can be heard by the human ear is 20 to 20,000 hertz (Hz) (Bailey, 1989). The ear does not respond equally to all frequencies in this range, however, and Figure 3-5 shows the response curve of a normal ear. The vertical axis of Figure 3-5 is the sound pressure measured in decibels. The horizontal axis shows the frequencies of sound applied. The curve in this figure is the minimal threshold for detecting the sound for each frequency. The tone presented at 1000 Hz requires an intensity of 6.5 dB to sound as loud as a tone presented at 250 Hz with an intensity of 24.5 dB. This curve illustrates why alarms and other audible indicators usually have a frequency near 1000 Hz.

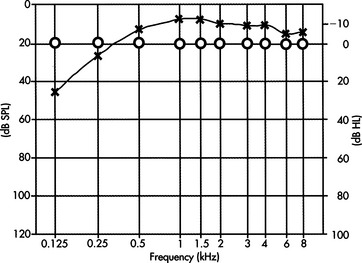

There are several types of tests that audiologists use in assessing hearing. Pure tone audiometry presents pure (one-frequency) tones to each ear and determines the threshold of hearing for that person. The intensity of the tone is raised in 5-dB increments until it is heard; then it is lowered in 5-dB increments until it is no longer heard. The threshold is the intensity at which the person indicates that he or she hears the tone 50% of the time. A typical audiogram is shown in Figure 3-6. On the curve shown in Figure 3-6, all values are displayed as hearing loss, and the “normal” level is shown as 0-dB loss. The curve of Figure 3-5 is incorporated into the plot of Figure 3-6. Thus for 125 Hz, a tone of 90.5 dB was heard 50% of the time in the right ear (45.5-dB threshold from Figure 3-5 added to 45-dB loss from Figure 3-6). At 1000 Hz the threshold presented was 36.5 dB. This test gives the audiologist information regarding the range of frequencies over which the person can hear.

Figure 3-6 Typical audiogram test results for pure-tone testing. SPL, Sound pressure level. (From Ballantyne D: Handbook of audiological techniques, London, 1990, Butterworth-Heinemann.)
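The arithmetic that links the threshold curve of Figure 3-5 to the audiogram of Figure 3-6 can be sketched as follows. The threshold values at 125, 250, and 1000 Hz are those quoted in the text; the function names are illustrative, and the 30-dB loss at 1000 Hz is not stated directly but is derived here from the 36.5-dB presented level.

```python
# Normal-ear detection thresholds (dB SPL) quoted in the text for Figure 3-5.
NORMAL_THRESHOLD_DB_SPL = {125: 45.5, 250: 24.5, 1000: 6.5}


def presented_level_db_spl(frequency_hz: int, hearing_loss_db: float) -> float:
    """Tone level (dB SPL) at threshold: normal threshold plus measured hearing loss."""
    return NORMAL_THRESHOLD_DB_SPL[frequency_hz] + hearing_loss_db


def hearing_loss_db(frequency_hz: int, presented_db_spl: float) -> float:
    """Hearing loss (dB) implied by the level at which the tone is heard 50% of the time."""
    return presented_db_spl - NORMAL_THRESHOLD_DB_SPL[frequency_hz]


if __name__ == "__main__":
    # 125 Hz: 45.5-dB normal threshold plus 45-dB loss.
    print(f"125 Hz presented level: {presented_level_db_spl(125, 45.0)} dB SPL")
    # 1000 Hz: presented level of 36.5 dB implies the loss plotted on the audiogram.
    print(f"1000 Hz hearing loss: {hearing_loss_db(1000, 36.5)} dB")
```

The audiogram therefore plots only the second term, the loss relative to the normal threshold, which is why the normal level appears as a flat 0-dB line regardless of frequency.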

Although the frequencies presented in the pure tone test are in the range of speech (125 to 8000 Hz), this test alone does not indicate the person’s ability to understand speech. To evaluate this function, the audiologist uses a speech recognition threshold test. In this evaluation, speech is presented, either live or recorded, at varying intensity levels, and the person’s ability to understand it is determined. The person is asked to repeat either words or sentences presented at these varying intensities.

Hearing Loss.

On the basis of these and other tests, the audiologist determines both the degree of hearing loss and the type of loss. Four types of hearing loss are typically defined (Mann, 1974). These are (1) conductive loss associated with pathological defects of the middle ear, (2) sensorineural loss associated with defects in the cochlea or auditory nerve, (3) centrally induced damage to the auditory cortex of the brain, and (4) functional deafness resulting from perceptual deficits rather than physiological conditions. Auditory impairment is considered slight if the loss is between 20 and 30 dB, mild if from 30 to 45 dB, moderate if from 45 to 60 dB, severe if from 60 to 75 dB, profound if from 75 to 90 dB, and extreme if from 90 to 110 dB (Stach, 1998). Selection of hearing aids for these types and magnitudes of loss is discussed in Chapter 9.

Somatosensory Function

Somatosensory function plays an essential role in the design and selection of assistive technology systems. One view of the role of the somatosensory system is to provide information regarding “where the body ends and where the world begins” (Dunn, 1991, p. 239). As the major interface for many assistive devices, the somatosensory system plays a critical role in determining the effectiveness of assistive technology interventions. The close relationship between the motor and sensory systems is also evident in the decreased control capability exhibited in the presence of somatosensory impairment. For example, persons who have Hansen’s disease (leprosy) lose peripheral sensation, which results in a loss of feedback to the motor system, and fine motor abilities are significantly compromised. Poor fine motor abilities can result in significantly compromised capabilities relative to the control of assistive technologies. Somatosensory input is received from receptors in the periphery and includes pressure, hot-cold, tactile, and kinesthetic responses.

When sensation is lost, as in spinal cord injury, somatosensory input is absent and tissue damage can result from externally applied pressures such as those generated in sitting. The inability to perceive pressure or discomfort is especially important in the design of seating systems and cushions (see Chapter 6).

Control of Posture and Position

Adequate control of posture and position in space is fundamental to the successful use of assistive technologies. Movement of the limbs or head requires adjustment by the internal sensory and motor control systems to maintain a functional posture. Accommodation to external forces such as gravity or movement also requires constant adjustment. This control of posture and body position in space is an integrative function of the visual, vestibular, proprioceptive, and kinesthetic senses and the motor components of the trunk, pelvis, and extremities. As discussed in Chapter 6, a fundamental requirement for the effective use of assistive technologies is that the user be positioned appropriately.

The vestibular system provides information regarding how the body interacts with the environment (Dunn, 1991). This information is integrated with other sensory data to affect control of body position and to accommodate changes brought about by movement or changing environmental data. The sensory data provided by the vestibular system are used to relate internal sensory and motor maps to the external world. Humans constantly change their position in space to achieve greater functional control (e.g., compensating for upper extremity movement or changes in balance when picking up an object) or greater comfort or to move from place to place. When sensory or motor impairments are present, assistive technologies can be used to help compensate for postural deficits. Likewise, the design of assistive technology systems must take into account any postural deficits present.

When changes in body position occur because of internal forces (e.g., reaching for a keyboard) or external factors (e.g., increasing the load on the arm by lifting an object), a sophisticated control system provides the necessary compensatory mechanical, neural, and sensory changes (Lee, 1989). This control system features both feedback (sensory data affect motor output) and feed-forward (internal commands alter the motor system, with sensory changes following) components. Seating and positioning systems can be designed for individuals who lack the motor or sensory system function adequate for postural control to help stabilize the person and facilitate functional tasks (see Chapter 6). However, many of these systems are static, providing only one fixed position for the individual. As Kangas (1991) points out, static positioning is inconsistent with normal posture, which is dynamic and varies widely with different functional tasks. Kangas also defines a functional position, which allows movement but also stabilizes the individual to facilitate function (see Chapter 6).
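The distinction between feedback and feed-forward components can be illustrated with a toy calculation. The following is a minimal sketch under strong simplifying assumptions: posture is reduced to a single deviation from upright, the units are arbitrary, and the function `simulate_lean` and its parameters are hypothetical rather than a physiological model from the literature cited here.

```python
def simulate_lean(load, gain, anticipated_load=0.0, dt=0.1, steps=200):
    """Return the final deviation from upright in a toy first-order model.

    load: constant external disturbance (e.g., an object held in the hand).
    gain: strength of the feedback correction.
    anticipated_load: disturbance the feed-forward command prepares for.
    """
    deviation = 0.0
    for _ in range(steps):
        feedback = gain * deviation      # correction driven by sensed error
        feed_forward = anticipated_load  # correction issued in advance
        deviation += dt * (load - feedback - feed_forward)
    return deviation


# Feedback alone settles with a residual lean (about load / gain).
feedback_only = simulate_lean(load=1.0, gain=2.0)
# An accurate feed-forward command cancels the load before an error builds.
with_feed_forward = simulate_lean(load=1.0, gain=2.0, anticipated_load=1.0)
print(round(feedback_only, 3), with_feed_forward)
```

In this sketch, feedback acts only after a deviation has been sensed, whereas the feed-forward term acts on an expectation of the load, which is why experience with a task matters to postural control.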

As discussed earlier, Padula (1988) describes the use of prism glasses, which allow the individual to place visual and vestibular data in the proper relationship to each other. In some cases assistive technologies can be used to alter sensory perception and affect motor performance. In one case an individual had continual neck flexion and only lifted his head for short periods. When he was fitted with prism lenses, he immediately lifted his head and brought it in line with his torso. Similarly, individuals who demonstrate a consistent left- or right-leaning posture have been brought to midline by the use of horizontally oriented prism lenses. In motor-disabled children and adults with disabilities such as traumatic brain injury, these lenses have resulted in postural corrections independent of any additional technological intervention such as seating systems.

The visual-vestibular coupling also can be exploited in other ways. Vestibular and visual functions are closely related. The degree of this coupling is directly connected to the degree of self-produced locomotion (Campos and Bertenthal, 1987). Self-produced locomotion allows much greater correlation of visual and vestibular feedback, which has obvious implications for dependent versus independent mobility using assistive technologies. Sensory input provided by the vestibular system (in concert with visual and proprioceptive data) is significantly different when an individual is in control of his or her own movement than when he or she is a passive “passenger.” A common example of this phenomenon is the observation that the driver of a car on a winding road rarely gets carsick, whereas passengers often do. Likewise, a person with a disability who is pushed in a wheelchair receives different vestibular input than when he or she is propelling the chair.

A recurring theme in this chapter is that prior experiences of the human user of assistive technology systems play a major role in both the specification and design of the system and in its success. A classic study done with newborn kittens illustrates this point for the postural control system (Held and Hein, 1963). Kittens and their mothers were reared in total darkness from birth to the initiation of visual exposure at 8 to 12 weeks of age. A special carousel was used to provide equivalent movement experiences for each kitten. Two littermates were used in each set of experiments. One kitten was allowed to move on its own; the other was moved passively by the motion of the first kitten. Only the kittens that had active movement showed fear of heights, whereas the passively moved kittens did not. These results indicate that development involving movement depends in large measure on the degree to which that movement is self-generated. An example of an assistive technology application in which these concepts are important is dependent mobility (e.g., the person is pushed by an attendant) compared with independent powered mobility.

The importance of postural and position control has other implications for the application of assistive technologies. Given that self-generated movement provides different information than passive movement, it is not surprising that children who are given access to a powered mobility system often initially spend a great deal of time turning in circles. If there is an attempt to “correct” this behavior, the child may be deprived of important vestibular, visual, and kinesthetic development. If, however, the child is allowed to experiment with the powered wheelchair and obtain the new sensory experiences associated with self-propelled locomotion, there will be greater success in getting the child to be accurate and safe with the wheelchair (Kangas, 1991).

PERCEPTUAL FUNCTION AS RELATED TO ASSISTIVE TECHNOLOGY USE

Perception adds meaning to sensory data. Human interpretation of sensory events is based on both physiological function and prior sensory and perceptual experiences. Assistive technologies can affect perceptual experience in many ways, some positive and some negative. Because the use of these technologies is often a new experience, a novice user who has a disability is likely to have significantly different perceptions of events and device interactions than do either more experienced users or nondisabled assistive technology practitioners (ATPs). In this section the implications of perceptual function to assistive technology use are explored.

All sensory systems have both physical and perceptual thresholds. The term threshold is used to describe the minimal level of input that results in an output from a sensory system. For example, the auditory system can be described in terms of the amplitude and frequency of the input information. These are physical parameters that describe the thresholds associated with sensory function. Auditory perceptual thresholds are described as loudness (related to amplitude) and pitch (related to frequency). The perceived loudness and pitch differ from individual to individual and are typical of perceptual thresholds that are often referred to as psychophysical parameters. Sensitivity to sound varies from person to person, and an acceptable sound for one person (e.g., a teenager listening to a rock band) may be perceived as uncomfortably loud by another person (e.g., a parent listening to the same rock music).

A major perceptual task is separating information about one portion of an image from the rest of the image, for example, picking one person out of a crowd or identifying one object in a picture when there are many objects present. This type of task is referred to as figure-ground discrimination because the desired object (figure) is extracted from the background (ground). Good figure-ground skill is important for many assistive technology-related activities, such as selecting one symbol out of an array of symbols on a communication device. Many disabilities interfere with the ability to make figure-ground discriminations.

Auditory localization refers to the ability to identify the spatial origin of a sound; it is based on a comparison of sound from the two ears. Separation of one source of sound from others in a noisy environment is also important for successful task completion and for the effective use of assistive technology devices in varying contexts. For example, a user of a powered wheelchair must be able to identify the location of a sound (e.g., street noise, a person approaching, a voice calling to her) if she is to respond to it. This ability is also what allows us to focus on one speaker at a party in which many conversations are going on simultaneously. Dunn (1991) uses the term auditory figure-ground discrimination to describe this capability.

Making discriminations of physical parameters is a perceptual task. Estimates of length, distance, and time are examples of such discriminations. Time estimates are an important part of assistive technology use, especially when single-switch scanning is used. Accurate estimates of time require active participation in the task (Bailey, 1989). An active person generally overestimates time (i.e., thinks time has passed faster), and a passive person underestimates time (i.e., thinks it has passed slower). This occurrence is a formal recognition of the old saying “time flies when you’re having fun.” It also underscores the importance of making the human user of assistive technologies an active participant in the training process. For example, computer-based games are often used to develop switch skills. In this approach, the disabled child is required to activate a switch to obtain interesting graphic or auditory results. Using this approach, the child may activate the switch many times in a session to obtain new results, and a training session of 30 minutes may pass very quickly. Conversely, if the switch is connected to less interesting results, such as a single light or tone, and the child is asked to practice hitting it, the training session time may drag for both the child and the teacher.
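The dependence of single-switch scanning on time estimation can be seen in a simple model of the selection process. The following is a minimal sketch, assuming a linear scan in which each item is highlighted for a fixed interval and the scan wraps around; the function `scanned_item`, the symbol list, and the 1.5-second interval are hypothetical values chosen for illustration.

```python
def scanned_item(items, scan_interval_s, press_time_s):
    """Return the item highlighted at the moment the switch is pressed.

    Items are highlighted one at a time, each for scan_interval_s seconds,
    and the scan returns to the first item after the last.
    """
    step = int(press_time_s // scan_interval_s)
    return items[step % len(items)]


symbols = ["eat", "drink", "play", "rest"]

# "play" is highlighted from 3.0 s up to (but not including) 4.5 s.
on_time = scanned_item(symbols, 1.5, 3.2)
# Misjudging the interval by a little over a second selects the next item.
late = scanned_item(symbols, 1.5, 4.6)
print(on_time, late)
```

Because the selection depends entirely on when the switch is pressed, a user who misjudges the passage of time will make selection errors even when motor control of the switch is good.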

One of the major accomplishments of early childhood development is independent mobility, and early perceptual development is directly related to the acquisition of this skill. In children with motor disabilities, independent mobility is often dependent on the use of assistive technologies. Campos and Bertenthal (1987) studied the relationship between independent locomotion and perceptual development. They point out the importance of considering both growth and learning as important aspects of development. Campos and Bertenthal used an experimental paradigm that measured fear of heights (as determined by heart rate increases) in children who had developed locomotion and in those who were prelocomotor. They found that height wariness was greater in children who were independently mobile than in those who were not. They also found that the height wariness of prelocomotor infants (less than 12 months old) who had used walkers was higher than that of those who had not. In a related experiment, they studied a motorically disabled infant who had a cast and brace preventing independent mobility. When the cast and brace were removed, they found that the infant’s wariness of heights increased. These and other studies demonstrate the relationship between motor experience and perceptual development and the role of assistive technologies in each. Kermoian (1998) describes evidence relating early mobility to cognitive development in young children as they actively engage in their environment. Typically developing children use creeping, crawling, and walking to obtain environmental interaction beyond their arm’s reach. This interaction fosters cognitive and language development. Children who have mobility limitations can achieve similar benefits from the early use of assistive technologies for mobility (see Chapter 12).

Assistive technologies can also provide erroneous sensory data, that is, data that are not consistent with other environmental information available to the person. A classic example of this phenomenon is the use of prism glasses that reverse the image on the eye, creating a mirror image of the environment (Bailey, 1989). When these glasses are first put on, the world is reversed and the person becomes disoriented. However, as the glasses are worn for longer periods, the sensory perception is brought into conformance with the sensory data and the person begins to function as if the visual image were not reversed. When the glasses are removed, the person is initially disoriented, and a period of adjustment is required to bring sensory perception into line with the new, “normal” data.

Bailey (1989) describes another study in which subjects who wore prism glasses that displaced the visual image several inches to the left or right were asked to reach for a target. Once again, they adjusted the sensory perception to match the data, and they were able to access the targets accurately after a few minutes of practice. The most interesting result of this experiment, however, came when the glasses were removed. The subjects consistently missed the targets in the opposite direction from the original displacement provided by the glasses. Analysis of these results revealed that it was kinesthetic perception rather than visual perception that was altered, and the effect persisted for a much longer time than the original visual disorientation had. It was also determined that if one hand was observed doing a task during the wearing of the glasses and the other was not, only the hand that was observed with altered visual input was affected.

These experiments have profound implications for the application of assistive technologies. Because individuals with disabilities often have significantly different sensory experiences and sensory maps of the world than do able-bodied persons, it is difficult to predict the perceptual experience that an assistive technology system will provide to the person. Perceptual differences may result from the sensory input, as in the prism glasses experiment. For example, a person with an altered visual field may not receive visual data that provide a complete picture of the environment. If that person acts on the limited sensory data, he or she may make errors in using an assistive device. Because these errors will be reflected in motor performance, it is difficult to identify them as perceptual rather than motor. An individual who has a motor disability may have difficulty keeping the head aligned with the horizon (i.e., have a tilt of the head to the left or right), which affects sensory input. If the individual then attempts to use a computer input system that requires horizontal and vertical movement (relative to the horizon) to move a cursor on the screen, he or she may have difficulty because the sensory data provided regarding the external world are not consistent with the way in which the cursor moves on the screen. To improve performance, the sensory (visual and kinesthetic) data must be brought into conformance with the perceptual information. This conformance can be accomplished in several ways, such as orienting the screen to the same angle as the head or providing learning time that allows the person to adapt the perception of the computer task to the task of head movement.
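The mismatch between a tilted head and a horizon-referenced cursor can be expressed as a rotation. The following is a minimal sketch, assuming a two-dimensional movement vector and a hypothetical 15-degree head tilt; rotating the sensed movement in software is offered here only as an analogue of orienting the screen to the angle of the head, not as a method described in the sources cited above.

```python
import math


def rotate(dx, dy, angle_deg):
    """Rotate the vector (dx, dy) counterclockwise by angle_deg degrees."""
    a = math.radians(angle_deg)
    return (dx * math.cos(a) - dy * math.sin(a),
            dx * math.sin(a) + dy * math.cos(a))


head_tilt_deg = 15.0  # hypothetical tilt of the head relative to the horizon

# A movement the user experiences as "straight across" in the head's frame...
felt = (1.0, 0.0)
# ...is registered by a horizon-referenced device as a diagonal movement.
sensed = rotate(*felt, head_tilt_deg)
# Rotating the sensed movement back by the tilt restores the intended direction.
corrected = rotate(*sensed, -head_tilt_deg)
print(sensed, corrected)
```

Without the correction, the cursor drifts vertically whenever the user intends a purely horizontal movement, which appears as a motor error although its origin is perceptual.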

COGNITIVE FUNCTION AND DEVELOPMENT AS RELATED TO ASSISTIVE TECHNOLOGY USE

Cognitive performance plays an important role in the use of assistive technologies. In this section those aspects of cognitive performance that most often affect the design and implementation of assistive technology systems are described. There are several problems associated with adequately assessing the cognitive abilities necessary for the control of assistive technology systems. The most important of these is that the assistive technology often provides a function for which the person has no experience base. In the use of a powered wheelchair, the disabled human operator may have never been responsible for his or her own mobility and may not have experience in making the required decisions. A second difficulty is that there are many cases of effective technology use that would not have been expected given the measurable cognitive function of the user.

Cognitive Development

To specify and design assistive technology systems for children, it is important to understand some fundamental concepts of cognitive development and to relate these to the use of assistive technologies by children. With the passage of federal legislation relating to early intervention and special education, services are being provided to very young (birth to 3 years) children (see Chapter 1). Many children in this age group have special needs that can be aided by assistive technologies. Although many of the principles discussed can be applied directly to this population, there are unique characteristics that must also be considered. These characteristics are discussed in this section.

Changes that occur in a child arise from both environmental influences (experience) and biological maturation (Santrock, 1997). Growth can be defined as change arising from physical development of the CNS. The term learning is used to refer to changes that occur because of contact with some environmental influence. Development is a function of both growth and learning. A careful consideration of development, both current status and developmental change, is crucial to the successful application of assistive technology systems.

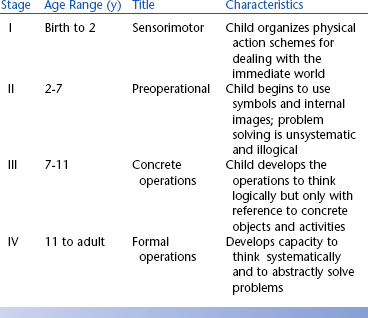

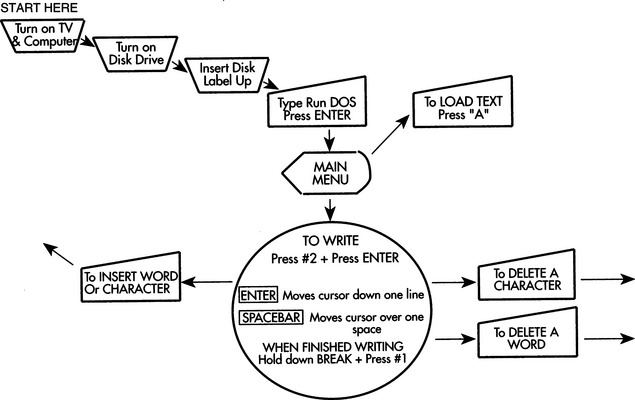

Although there are many theories of cognitive development, the work of Jean Piaget (see Brainerd, 1978, for example) is particularly useful because of its emphasis on object manipulation in the early years and the consideration of alternative methods of problem solving as the child grows into an adult. The major stages of development proposed by Piaget are shown in Table 3-1. Although there is some controversy regarding the details of Piaget’s theory, the four basic stages shown in Table 3-1 provide a useful framework for us to consider in applying assistive technologies to solve problems of children with disabilities. One of the major factors illustrated in Table 3-1 is the change in problem-solving approaches and abilities as a child develops. The very young child does not approach problems in the same way as the adult, which must be considered in the design of assistive technology systems.

One of the major controversies regarding Piaget’s theory is the age at which symbolic representation emerges. This skill, necessary for cognitive functions such as problem solving, was believed by Piaget to begin with the preoperational stage (stage II in Table 3-1). However, recent work has shown that infants as young as 6 months old develop symbolic representation (Mandler, 1990). These skills are acquired by observation and by direct manipulation of objects. For example, 9-month-old infants have been shown to be capable of imitating actions that they have observed but not practiced. Infants are also able to remember, after a short delay, where objects have been placed. These and other similar results indicate that very young children (less than 9 months old) are capable of forming symbolic representations of objects and manipulating these representations to carry out tasks.

Goldenberg (1979) applies the idea of observational learning to the case of children whose motor abilities are severely limited and who have limited capability for further motor development. He proposes two hypothetical situations: (1) a child whose only motor response is eye movement and (2) a child whose only response is raising an eyebrow. The first child may engage the environment through movements of the eyes that cause an image to move on the retina. This motor action may or may not lead to interaction, depending on whether someone in the child’s environment interprets the eye movements as meaningful and uses them as a basis for communication. In the second case, the child’s action does not manipulate the environment for the child, but again its interpretation by another person may allow interaction with the environment. In each of these cases the provision of an assistive device that is sensitive to the motor actions of the child may enable development. However, in each case the importance of observational learning prevents us from saying that development is not occurring.

From the point of view of assistive technology systems, the early manipulation of objects and the use of tools are of particular importance. Table 3-2 summarizes some of the early skills in these areas. It is clear from Table 3-2 that at a very early age the normally developing child can and does interact with objects and can use an object as a tool to achieve a desired result; thus it is not surprising that assistive technologies have been used successfully with very young children. Brinker and Lewis (1982a) used the concept of co-occurrences, the provision of a contingent result when the child carries out a purposeful action, to foster the development of interaction skills in infants and very young children. They used a microcomputer to arrange events so that they could be consistently controlled by an infant’s behaviors; therefore, the infant was led to believe that the world was controllable (Brinker and Lewis, 1982b). The infant used switch activation to control graphics, toys, and tape recordings of songs or voices. Data on the number of switch activations and observable behaviors (e.g., facial expressions, reaching for a toy) of the infant showed that children as young as 3 months old would develop purposeful movements to cause the contingent result. Given the skills shown in Table 3-2, these results are not surprising.

TABLE 3-2

Early Object Manipulation and Tool Use in Typically Developing Children During the Sensorimotor Period of Development (Birth to 2 Years)

| Developmental Age (mo) | Actions |
| --- | --- |
| 5 | Reinitiates familiar game during pause |
| 6 | Finds object hidden behind or under screen |
| 6 | Imitates novel body movement |
| 6-8 | Transfers object hand-to-hand |
| 7 | Leans forward to look for a dropped object |
| 8-10 | Anticipates circular trajectory of an object |
| 8 | Drops one object to reach for another |
| 8-9 | Moves to obtain object out of reach |
| 8-10 | Pulls support to obtain object without demonstration |
| 9 | Uses one object as a container for another |
| 12 | Pulls string to obtain object without demonstration |
| 12-14 | Retrieves object by pouring if container is too small for hand |
| 12-15 | Holds mechanical toy that another person has started |
| 13-15 | Uses string to obtain object against gravity |
| 15 | Moves around barrier to obtain object |
| 15-18 | Uses tool as extension of body to obtain object |
| 15-18 | Finds object where last seen or usually kept |
| 15-19 | Opens box to obtain object without demonstration or seeing object placed in box |
| 18-20 | Imitates two action combinations |
| 19-20 | Anticipates result of actions and adjusts behavior accordingly to situations and problems |
| 21 | Attempts to activate mechanical toy without demonstration |
| 22 | Anticipates means/end and result of applied means |

The direct manipulation of objects by robotic systems controlled by the child is an attractive contingent result in a computer-controlled and switch-activated system for very young children. Cook, Liu, and Hoseit (1990) developed a system that allowed a very young child to interact with a small robotic arm by a single-switch activation. They investigated whether both nondisabled and disabled children would use the robotic arm as a tool. Cook et al. used a continuous playback mode in which a movement was played back sequentially as long as the switch was depressed, and the arm stopped when the switch was released. Typical tasks used were bringing a cracker within reach of the child and tipping a cup to reveal its contents. A child attempting to retrieve an object was judged to be using the robotic arm as a tool if he or she pressed the switch to bring the object closer (in the continuous mode), reached for the object, and, if it was still out of reach, pressed the switch again. Repeated use of this sequence of actions indicated the use of the robotic arm as a tool to retrieve the object. Fifty percent of the disabled children (all those with a standardized cognitive age level score of 7 to 9 months or greater) and 100% of the nondisabled children did interact with the arm and use it as a tool to obtain objects out of reach. Gross and fine motor skill levels were less related to success in using the robotic arm than were the levels in cognitive and language areas. This study illustrates the careful application of assistive technology to match the developmental level.
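The continuous playback mode can be sketched in a few lines. The following is a minimal illustration, assuming the stored movement is a list of arm positions and the switch is sampled once per time step; the function `continuous_playback`, the position values, and the sampling scheme are hypothetical and are not taken from the system built by Cook, Liu, and Hoseit.

```python
def continuous_playback(trajectory, switch_samples):
    """Step through a stored movement only while the switch is depressed.

    trajectory: stored arm positions, in playback order.
    switch_samples: one boolean per time step (True = switch depressed).
    Returns the arm position at each time step.
    """
    index = 0
    positions = []
    for pressed in switch_samples:
        if pressed and index < len(trajectory) - 1:
            index += 1                       # advance along the stored movement
        positions.append(trajectory[index])  # arm holds still when released
    return positions


stored_movement = [0, 10, 20, 30, 40]  # hypothetical positions, arbitrary units
# Press, release to reach for the object, then press again to bring it closer.
presses = [True, True, False, False, True, True, True]
path = continuous_playback(stored_movement, presses)
print(path)  # [10, 20, 20, 20, 30, 40, 40]
```

The press, pause, and press-again pattern in `presses` corresponds to the sequence of actions that was taken as evidence of tool use.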

As children grow and develop, they are able to deal with objects and schemes of action more symbolically. These emerging skills affect the way in which assistive technology systems are specified and designed for children who are between 2 and 6 years of age (in the second stage shown in Table 3-1). For example, augmentative communication systems that require the use of symbols can be designed and the vocabulary included can be expanded over that of the stage I child. More complicated operational features such as two- and three-sequence tasks can also be included. As concepts of time begin to develop, sequential selection of objects, such as that required in scanning, can be used. For the preoperational child, it is also important for us to consider other characteristics (Brainerd, 1978). For example, children in this age range typically exhibit centration, focusing on only one aspect of an object. Often this is a surface feature such as color or flashing lights; thus assistive technology systems must be designed carefully so that the most striking features are also the most important to their use. Children in this stage also exhibit animism, attributing life and consciousness to inanimate objects. This characteristic can be exploited by making devices fun to use and giving them names. A final example is the failure of children in this stage to separate play and reality; they apply the same ground rules to each situation. If this characteristic is taken into consideration, a communication device can be used, for example, to create strange sounds (e.g., a belch), and we will not insist on always saying things properly. This approach can help the child develop skills in an interesting way and then apply them to other situations, such as moving to a given destination. Examples of characteristics of the preoperational child and their implications for assistive technology use are shown in Table 3-3.

TABLE 3-3

Characteristics of the Preoperational Child That Influence Assistive Technology Use

| Characteristic | Assistive Technology Implications |
| --- | --- |
| Symbolic representation | Augmentative communication, use of language concepts in control of devices |
| Sequencing | Multiple symbol communication, multistep control of systems |
| Centration | Child may focus on color, size, or shape rather than function of assistive device |
| Animism | Give assistive devices a personality with names, etc. |
| Play equals reality | Make use of play routines to accomplish functional goals |

Assistive technologies can play a role in cognitive development for children in this stage as well. Verburg (1987) studied 10 children aged 2 to 5 years who were provided with a miniature powered vehicle. The changes in scores on a developmental profile over the course of learning to use the powered vehicle were used to determine the effect of the device on cognitive development. Changes in scores were calculated in months, and those that exceeded the number of months of the training period were taken to indicate cognitive growth. For example, if a study lasted 3 months and the child’s difference in beginning and ending scores was 5 months, it was decided that development had occurred as a result of the experiment. Five categories of development were used: physical, self-help, social, academic, and communication. The major effects of the use of the vehicle were in the social and academic categories, with 7 of the 10 children showing gains greater than the length of the study. Communication (three children), self-help (two children), and physical (one child) showed smaller gains. This study illustrates the importance of assistive technologies in enabling learning and associated development. An added benefit of Verburg’s study was that parental protectiveness decreased as the children became more independently mobile.
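The criterion used to decide whether development had occurred is a simple comparison. The following is a minimal sketch of that comparison; the function name `shows_growth` and the starting score of 24 months are hypothetical, and only the 5-month gain over a 3-month study comes from the example in the text.

```python
def shows_growth(start_score_months, end_score_months, study_length_months):
    """True if the developmental gain exceeds the time that elapsed."""
    gain = end_score_months - start_score_months
    return gain > study_length_months


# The example from the text: a 5-month gain over a 3-month study.
print(shows_growth(24, 29, 3))  # True
# A 3-month gain over 3 months only keeps pace with elapsed time.
print(shows_growth(24, 27, 3))  # False
```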

The older child (stage III in Table 3-1) has significantly more ways of using assistive technologies (Brainerd, 1978), and this can be captured in the specification and design process. For example, decentration is now common, and “optional” features that are secondary but useful can be included without the concern that they will distract the child from successful use of the device. For instance, a powered wheelchair controller with a high- and a low-speed feature will be more understandable to a child in stage III than to a child in stage II. A major advance for children in this stage is the ability to apply logical operations to concrete (real and observable) problems. The emergence of these skills has a direct influence on the design of augmentative communication systems to be used for writing in school. Features of word processors that allow editing of text can be included, and the child can be expected to learn to use features such as printing and saving text. It is important, however, that the design of training materials for the use of assistive technology systems be based on concrete, real situations rather than more abstract concepts. Operational principles should also be concrete. This caveat does not mean that they must be “simple” but that they rely on a logical problem-solving approach that focuses on real properties of objects and situations. Among the skills of children in this stage are the abilities to carry out complex tasks consisting of several steps, recognize that processes are reversible, categorize objects, combine classes of objects and extract their common properties, recognize that problems may be solved in more than one way, and reason deductively. Success in specifying and designing assistive technology systems for children in this stage of development is directly related to how carefully these and other characteristics of this age group are considered.

The adolescent (stage IV) is in transition between deductive, concrete problem solving and the inductive, systematic reasoning characteristic of adults. A key change in this stage is that problem solving and reasoning are systematic rather than random as in previous stages. The design of assistive technology systems for individuals in the early part of this age range (11 to 15 years) must include consideration of the transition from concrete to formal operations because most individuals alternate between these two during this period. The problem solving and decision making required for the use of systems can be more inductive, but allowance for basic operation that is concrete must be made.

In summary, the specification and design of assistive technology systems for children are not just a matter of simplifying the features of adult systems. Instead there are specific characteristics of children in various age groups that must be taken into account to ensure the effectiveness of systems selected for them. By taking into account the nature of childhood and its unique “lifestyle,” assistive technologies can be made fun as well as useful. This design feature increases the likelihood that they will be effective. Finally, not only is the human component different in the case of children but there are activities and contexts that are unique to childhood. By incorporating the unique features of these other two components of the total system, its efficacy can be further improved.

Developmental Disabilities and Cognitive Deficits

When developmental delay or cognitive impairment caused by trauma (e.g., traumatic brain injury) is being considered, it is tempting to relate an individual’s functional capability to the stages of development, such as those presented in Table 3-1. From the point of view of assistive technology use, this strategy is undesirable for several reasons. First, the individual who has a disability has a significantly different nervous system than the nondisabled person for whom the developmental sequences have been established. The developmental delay or cognitive impairment is the result of other factors, and these must be taken into account when evaluating the level of cognitive functioning. Second, it is often true that an individual with cognitive impairment exhibits significant skill in one area but has severe deficits in others. Development in the presence of an abnormal nervous system is best considered as divergent from the path considered to be typical. This is in contrast to the view that development is proceeding along the same “typical” path but is delayed. Assistive technology application is most effective when individual skills are determined through assessment (see Chapter 4) and the system characteristics emerge from this assessment.

Individuals with congenital or adventitious cognitive impairments may have difficulties with attention, memory, problem solving, language, and other areas. When assistive technology systems are designed for these individuals, it is important to give careful attention to the cognitive demands that use of the device places on the person and to include learning and operational aids within the total system. It is generally not the goal to make things simpler for someone with a cognitive deficit but to make them different. For example, individuals who have a learning disability may benefit from alternative modes of information presentation. Often auditory information is more easily assimilated than visual information. Examples of approaches for individuals with memory loss and problem-solving limitations are described next.

Memory

Memory is important for effective use of assistive technologies. When assistive technology systems are specified and designed, the role of human memory in successful use must be considered. Human memory is often considered to have three components: (1) sensory memory, (2) short-term memory, and (3) long-term memory (Bailey, 1989). Each type of memory plays a role in the use of assistive technologies. Sensory memory describes the storage of sensory data for a very brief time after the removal of the stimulus. For our purposes, the most important types of sensory memory are visual and auditory. The afterimage that traces the path of a moving sparkler in the dark is an example of sensory memory. Visual sensory memory, typically in the form of an image, lasts for about 250 milliseconds (one fourth of a second) (Bailey, 1989). Some assistive devices make use of this type of memory in their design. One example is the Pathfinder (Prentke Romich Co., Wooster, Ohio) augmentative communication system. In this device a set of 128 lights is arranged in a matrix 16 lights wide by 8 lights high. A detector is placed on the user’s head, and when it is aimed at one of the lights, the Pathfinder detects it and the choice labeled by that light is activated. The device turns on the lights one at a time from the upper left corner to the lower right corner, row by row. However, although only one light is turned on at a time, the user actually sees all the lights as being dimly lit. This effect results in part from sensory memory, and without it this input method would not be feasible. Auditory sensory memory is often in the form of an echo of the original input data that lasts for up to 5 seconds (Bailey, 1989).
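The row-by-row lighting sequence described above can be sketched in a few lines. This is an illustration of the scan order only, not the device's actual firmware; all names are hypothetical. The timing function rests on an additional assumption that is not stated in the text: that one full sweep of the matrix should repeat within the roughly 250-millisecond span of visual sensory memory.

```python
COLUMNS = 16  # lights per row, as described in the text
ROWS = 8      # number of rows, as described in the text


def scan_order():
    """Yield (row, column) pairs from upper left to lower right, row by row."""
    for row in range(ROWS):
        for col in range(COLUMNS):
            yield row, col


def light_position(step):
    """Return the (row, column) of the light that is on at a given step.

    The sweep is assumed to restart at the upper left after the last light.
    """
    return divmod(step % (ROWS * COLUMNS), COLUMNS)


def dwell_ms_per_light(sweep_ms=250.0):
    """Time available per light if one sweep must fit in sweep_ms.

    ASSUMPTION: tying the sweep time to the 250 ms figure for visual
    sensory memory is an illustrative choice, not a device specification.
    """
    return sweep_ms / (ROWS * COLUMNS)
```

Under that assumption each of the 128 lights would be on for a little under 2 milliseconds per sweep, which suggests why a single light at a time can still appear as a dimly lit array.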

Short-term memory is sometimes referred to as working memory (Bailey, 1989). Its duration is generally up to about 20 to 30 seconds, and it is used for temporary storage of information necessary to complete a task. This form of memory allows us to carry out many tasks associated with assistive technologies. In assistive technologies, short-term memory is used for seldom-used device operational sequences that are looked up in a manual when needed (e.g., how to replace batteries in a hearing aid) or for remembering a piece of information briefly (e.g., a telephone number to be dialed). Because the capacity of short-term memory is approximately seven items, it is important to restrict the amount of information required to be stored in short-term memory. Individuals have difficulty remembering more than seven items if they do not have the opportunity to rehearse and transfer the information to long-term memory. Information stored in short-term memory arises from both external and internal sources. For example, reading this sentence requires using stored information regarding letters and their combination into words, together with visual input from the page. Information in short-term memory is generally believed to be stored in an encoded form. The code may be a form that makes use of longer-term stored information or one that is more easily recalled than the original form of the information. There is evidence that some visual information, such as words, is actually stored in auditory form, by memory of their sounds rather than what they look like. This evidence has implications for individuals who are unable to use oral language or who have not heard oral language because of a congenital hearing impairment, and this must be taken into account when assistive technology systems are designed for them.

When designing systems, several steps can be taken to help the human operator maximize use of short-term memory. One strategy involves grouping information into short sequences and use of patterns that are related to stored information. For example, an assistive device for writing may have several functions, such as entering text, storing text, and printing. If the system is designed so that each of these tasks follows a similar, consistent sequence of actions, then the use of the system will be more easily learned. Bailey (1989) also discusses the use of rehearsal and patterns in codes as aids to users of systems. Rehearsal is the repeating of a new piece of information (e.g., a phone number) to ensure that it is not forgotten. Another strategy groups number or letter sequences into short (three- or four-character) groups and includes similar patterns in the groups. Examples of useful patterns for numbers are groups that end in the same number; for letters the groups may spell short words or be remembered as acronyms.
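The grouping strategy described above can be illustrated with a short sketch. The function names are hypothetical, and the limit of seven items is taken from the approximate short-term memory capacity cited earlier in this section; it is a rule of thumb, not a precise threshold.

```python
SHORT_TERM_CAPACITY = 7  # approximate number of items, per the text


def chunk(sequence, size=4):
    """Split a number or letter sequence into short groups of `size` characters."""
    if size < 1:
        raise ValueError("group size must be at least 1")
    return [sequence[i:i + size] for i in range(0, len(sequence), size)]


def fits_short_term_memory(sequence, size=4):
    """Check whether the grouped sequence stays within about seven items."""
    return len(chunk(sequence, size)) <= SHORT_TERM_CAPACITY


# A 12-digit code is 12 separate items if remembered digit by digit,
# but only 3 items when grouped into four-character chunks.
groups = chunk("123456789012", size=4)
```

Grouping does not shorten the code itself; it reduces the number of separate items the user must hold at once.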

Long-term memory stores information that has lasting value. Whereas short-term memory holds “throwaway” information that is used only once, long-term memory is important for things used often. Examples of the use of long-term memory in assistive technologies include recalling codes used for storage of information, remembering how to turn on a device and use its features, and remembering where to go and how to get there with a powered wheelchair. Long-term memory differs from the other two types primarily in the duration of the stored information. Information in long-term memory is considered permanent, even though we are sometimes unable to recall it. There is evidence indicating that loss of information from long-term memory is a problem of access rather than actual loss of stored information (Bailey, 1989). Designers of assistive technology systems need to be aware of several memory processes related to remembering and forgetting: (1) encoding, (2) storage, and (3) retrieval. Each of these plays a major role in the design and use of assistive technology systems.

Encoding is the way in which information to be stored is organized, and it is important in retrieval of the stored information. System designers can help with this process by relating steps, tasks, or information to be remembered to the person’s experience. Because each person has unique, and sometimes limited, experiences, careful attention must be paid to assessing the best ways to encode information for easy retrieval. For example, with speed dialing, in which one digit is used as a code for a stored phone number, it may be easier if phone numbers for certain people are recalled by letters instead of by the digit. Mom’s number could be stored under M, sister Tammy under T, work under W, and so on. This method of encoding helps with recall because there is a relationship between the stored number and the code.
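The speed-dialing example above amounts to a lookup keyed by a meaningful letter rather than an arbitrary digit. The sketch below is illustrative only: the function names are hypothetical, the phone numbers are fictitious, and using the first letter of a label as the code is one possible convention among many.

```python
def store(directory, label, number):
    """Store a number under the first letter of a meaningful label."""
    key = label[0].upper()
    if key in directory:
        # Two labels sharing a first letter would make the code ambiguous,
        # so the caller must choose a different label.
        raise ValueError(f"code {key!r} is already in use")
    directory[key] = number


def recall(directory, label):
    """Retrieve a number using the same letter code."""
    return directory[label[0].upper()]


speed_dial = {}
store(speed_dial, "Mom", "555-0101")    # fictitious numbers
store(speed_dial, "Tammy", "555-0102")
store(speed_dial, "Work", "555-0103")
```

The sketch also shows a practical limit of this encoding: two contacts whose labels begin with the same letter cannot share a code, so labels must be chosen with the individual's own associations in mind.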

There are many theories regarding how and why we forget. From a systems design point of view, these are important, especially in relation to training individuals to use assistive technologies. One of the most important factors affecting forgetting is what the person does between the time the information is learned and the time that it is used (Bailey, 1989). The term interference is used to describe the process of forgetting. Bailey discusses two types of interference: proactive and retroactive. Proactive interference occurs when information acquired before the learning of new material interferes with the use of the new material in performance. This type of interference often occurs in assistive technology system use. For example, Tom has learned to use one type of mechanical feeder, which requires that a switch be pushed to the right to rotate the plate and to the left to raise the spoon to mouth level. The spoon action is automatic once the switch has been activated. A new feeder is introduced that gives Tom more control because the second switch must be continuously pressed to scoop the food and raise the spoon, and the spoon can be stopped at any point and restarted. This process can make eating more efficient because, if the spoon misses the food, it is not necessary to go through an entire cycle before trying again to get food. Tom experiences proactive interference if, following his previously learned strategy, he persists in pushing the second switch only once rather than maintaining switch activation until the food reaches mouth level. Even if Tom is able to adapt to the new strategy, he may revert to the old strategy if he is tired or stressed.

Retroactive interference occurs when a person learns to do task A, then learns task B, and finally is asked to perform task A. He or she may forget how to do task A because of concentrating on task B. This situation can occur when a person is trained to use an assistive technology system that has multiple functions or tasks. This type of problem can be avoided by allowing enough practice and use time for task A before task B is introduced. For example, a person with a visual impairment is being trained to use a screen reader. This is a device that provides speech output instead of visual output. The person has learned how to scan through the text by using the arrow keys on the keyboard (task A). Now he is trained to save a file and retrieve it (task B). When he goes back to task A, he may have forgotten how to do it or forgotten details of this task. This is called retroactive interference.