Applying the Outcomes of Needs Identification and Physical-Sensory Evaluations to Control Interface Selection

In Figure 7-9 we list specific information related to human/technology interface selection that is an outcome of the needs identification process. This information reveals particular factors that should be considered during interface selection. For example, identifying the activity the consumer wants to perform tells us how large an input domain is required and which control interfaces to consider. If the consumer needs a power wheelchair and is not interested in using a computer, it is not necessary to determine whether he or she can use a keyboard. Alternatively, the consumer may need to perform several functional activities (e.g., communication, mobility, and environmental control), which affects the selection of an interface. In such situations, the ATP should consider whether to use a separate control interface for each function or a single integrated control for all of them.

The information gathered during the physical-sensory skills evaluation gives us a profile of the user’s skills in the areas shown in Figure 7-9. This information can be used to determine the acceptable parameters for potential control interfaces. The range measurement determines the consumer’s minimal and maximal comfortable reach and defines the geometrical requirements for the individual’s workspace. It indicates where a control interface (or interfaces) can be placed and the maximal distance allowed between the extreme outer edges of the interface (e.g., the overall size of a keyboard or switch array). The resolution measurement provides data on the consumer’s ability to control his or her movement to select targets, and therefore on how small and how closely spaced an interface’s targets can be.
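To make the link between these measurements and candidate interfaces concrete, the sketch below screens interfaces against a simplified skill profile. It is illustrative only and makes several assumptions not found in the text: range is reduced to a single straight-line band between minimal and maximal comfortable reach, resolution is reduced to the smallest target size the consumer selects reliably, and the names `SkillProfile`, `CandidateInterface`, `fits_profile`, and `screen`, as well as all of the measurements, are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SkillProfile:
    """Hypothetical, simplified summary of a physical-sensory evaluation."""
    min_reach_cm: float   # closest comfortable reach
    max_reach_cm: float   # farthest comfortable reach
    min_target_cm: float  # smallest target selected reliably (resolution)


@dataclass
class CandidateInterface:
    name: str
    overall_span_cm: float  # distance between the extreme outer edges
    target_size_cm: float   # size of an individual key or switch


def fits_profile(profile: SkillProfile, iface: CandidateInterface) -> bool:
    # Range: the whole interface must lie inside the comfortable reach band.
    usable_span = profile.max_reach_cm - profile.min_reach_cm
    within_range = iface.overall_span_cm <= usable_span
    # Resolution: each target must be at least as large as the smallest
    # target the consumer can reliably select.
    within_resolution = iface.target_size_cm >= profile.min_target_cm
    return within_range and within_resolution


def screen(profile: SkillProfile, candidates: list) -> list:
    """Return the names of candidates worth taking into comparative testing."""
    return [c.name for c in candidates if fits_profile(profile, c)]


# Hypothetical measurements, for illustration only.
profile = SkillProfile(min_reach_cm=10, max_reach_cm=40, min_target_cm=2.5)
candidates = [
    CandidateInterface("standard keyboard", overall_span_cm=45, target_size_cm=1.9),
    CandidateInterface("expanded keyboard", overall_span_cm=28, target_size_cm=3.8),
    CandidateInterface("mini keyboard", overall_span_cm=18, target_size_cm=1.2),
]
print(screen(profile, candidates))  # ['expanded keyboard']
```

A screen like this only narrows the field; it does not replace observing the consumer, and it ignores factors such as target spacing and placement angle that a real evaluation would consider.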

Given this information on the consumer’s skills, candidate control interfaces can be selected whose number and spacing of targets and size of individual switches or keys match the consumer’s range and resolution. Once candidate interfaces have been selected, comparative testing is conducted. Comparative testing tells the ATP how fast the consumer can enter input with each control interface and how accurate that input is. Methods for carrying out comparative testing are described in Chapter 4. During comparative testing, it is also critical that the ATP gather subjective information from the consumer on each interface that is evaluated, such as how easy or difficult it is to use.
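The speed and accuracy obtained in comparative testing can be summarized per interface as in the sketch below. The metric definitions here (correct selections per minute, and correct selections as a percentage of attempted selections) are assumptions chosen for illustration rather than the specific procedures of Chapter 4, and the `Trial` record, the `summarize` function, and the trial data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Trial:
    interface: str
    attempted: int   # selections the consumer made
    correct: int     # selections that hit the intended target
    seconds: float   # elapsed time for the trial


def summarize(trials):
    """Pool trials per interface and return {interface: (rate, accuracy)}.

    rate     = correct selections per minute
    accuracy = percentage of attempted selections that were correct
    """
    totals = {}
    for t in trials:
        attempted, correct, seconds = totals.get(t.interface, (0, 0, 0.0))
        totals[t.interface] = (attempted + t.attempted,
                               correct + t.correct,
                               seconds + t.seconds)
    summary = {}
    for name, (attempted, correct, seconds) in totals.items():
        rate = correct / (seconds / 60.0)
        accuracy = 100.0 * correct / attempted
        summary[name] = (round(rate, 1), round(accuracy, 1))
    return summary


# Hypothetical trial data, for illustration only.
trials = [
    Trial("expanded keyboard", attempted=20, correct=18, seconds=60.0),
    Trial("expanded keyboard", attempted=20, correct=19, seconds=60.0),
    Trial("trackball", attempted=20, correct=14, seconds=45.0),
]
for name, (rate, acc) in summarize(trials).items():
    print(f"{name}: {rate} correct/min, {acc}% accurate")
# expanded keyboard: 18.5 correct/min, 92.5% accurate
# trackball: 18.7 correct/min, 70.0% accurate
```

In this made-up example the two interfaces are nearly equal in speed but differ sharply in accuracy, which is why both numbers are reported; the consumer’s own report of ease or difficulty of use is then weighed alongside them.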

Control Enhancers: Interface Positioning, Arm Supports, Mouthsticks, Head Pointers, and Hand Pointers

Control enhancers are aids and strategies that enhance or extend the physical control (range and resolution) a person has available to use a control interface. In some cases, a person’s control may be enhanced to the extent that he or she can select directly. In other cases, control enhancers can minimize fatigue. Control enhancers include strategies, such as varying the position or the characteristics of the control interface, and devices, such as mouthsticks, head and hand pointers, and arm supports.

Both the person and the control interface should be positioned to maximize function; the importance of proper positioning is discussed in Chapter 6. A person’s position should be observed before and during the control interface evaluation. If inadequate positioning appears to be affecting the person’s ability to control an interface, it should be addressed before the evaluation continues. The position of the control interface also affects the person’s ability to activate it: changing its height or angle even slightly may improve the person’s control.

As control interfaces become more sophisticated, control-enhancing features are being built into the interface itself. For example, certain joysticks have a feature called tremor dampening that allows the joystick to be adjusted for people who have tremors. Tremor-dampening joysticks distinguish between tremors, which are faster and smaller, and intentional movements, which are slower and larger. The joystick is adjusted so that tremors are disregarded and only intentional movements are detected. This adjustment can enable an individual who might otherwise be unable to operate a joystick to control a power wheelchair. A similar feature, called filter keys, is available in Windows. When filter keys is turned on, brief keystrokes are ignored and the rate at which a held key repeats is slowed.
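The principle behind both features can be sketched in a few lines. Commercial tremor-dampening algorithms are not described in the text and are likely proprietary, so the sketch below substitutes a generic technique: a first-order low-pass filter (an exponential moving average), which passes slow, large movements and attenuates fast oscillations, followed by a deadband that discards small residual deflections. The filter keys sketch models only the "ignore brief keystrokes" behavior with an acceptance threshold; it does not model the slowed repeat rate. The function names, the parameters `alpha`, `deadband`, and `acceptance_ms`, and their default values are hypothetical illustrations, not the settings of any real joystick or of Windows.

```python
def dampen(samples, alpha=0.1, deadband=0.15):
    """Hypothetical tremor damping: low-pass filter plus deadband.

    samples  : joystick deflections in the range -1.0..1.0, one per tick
    alpha    : smoothing factor (0 < alpha <= 1); smaller values suppress
               fast oscillations such as tremor more strongly, at the cost
               of a slower response to intentional movement
    deadband : filtered deflections smaller than this are treated as zero
    """
    out, y = [], 0.0
    for x in samples:
        y += alpha * (x - y)  # follows slow changes, damps fast ones
        out.append(y if abs(y) >= deadband else 0.0)
    return out


def filter_keys(events, acceptance_ms=300):
    """Hypothetical filter keys: accept a key only if held long enough.

    events: list of (key, press_time_ms, release_time_ms) tuples
    """
    return [key for key, down, up in events if up - down >= acceptance_ms]


tremor = [0.3 * (-1) ** i for i in range(200)]  # fast, small oscillation
push = [0.8] * 200                              # slow, large intentional push
print(max(abs(v) for v in dampen(tremor)))      # 0.0 (tremor ignored)
print(round(dampen(push)[-1], 2))               # 0.8 (intent passes through)
print(filter_keys([("a", 0, 50), ("b", 100, 500), ("c", 600, 640)]))  # ['b']
```

The design trade-off mirrors the clinical one: the stronger the smoothing and the larger the deadband, the more tremor is rejected, but the more sluggish the control feels for intentional movement, which is why such features are adjusted for each user.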

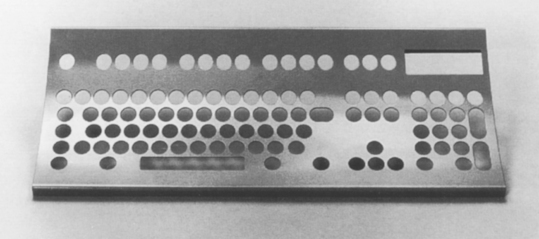

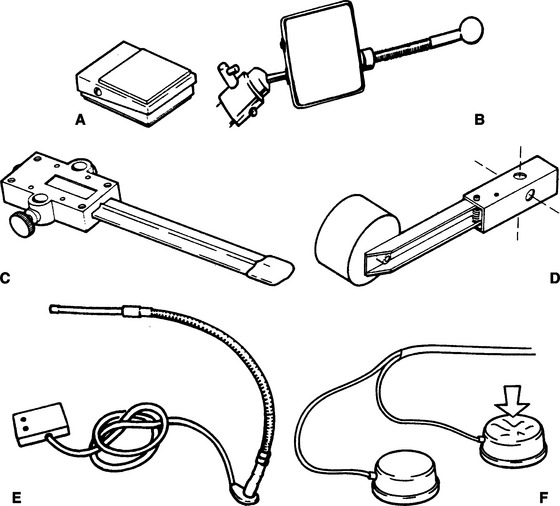

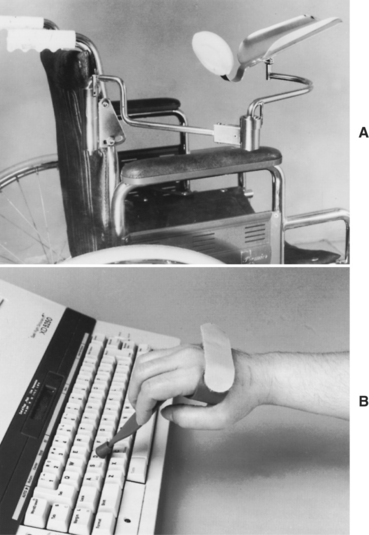

Individuals who have weakness in the arm may not have enough strength to reach the full range of a keyboard. A mobile arm support (Figure 7-10, A), which supports the arm and assists arm movements by eliminating some of the effects of gravity, may then allow the individual to access a keyboard. For the individual who has the gross motor ability to move his or her arm and hand around a keyboard but has difficulty extending and isolating a finger to depress a key, a pointing aid may help. Commercially available aids can be strapped onto the hand to assist in pointing, such as the typing aid shown in Figure 7-10, B. In some cases a pointing aid must be custom fabricated to fit the consumer’s hand appropriately. These custom-fabricated aids range from complex hand splints to simple tools such as a pencil with an enlarged eraser.

Figure 7-10 Control enhancers. A, Mobile arm support used to enhance the control in the upper extremity for accessing a control interface. B, Typing aid used to enhance a person’s ability to point and access a keyboard. (Courtesy Sammons Preston Co., Bolingbrook, Ill.)

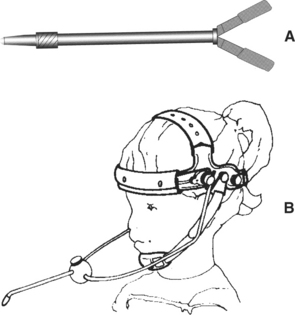

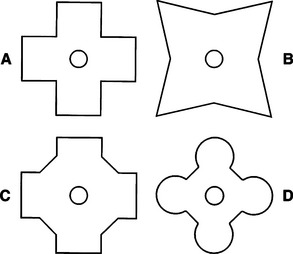

For individuals who lack functional movement in their arms and hands, a mouthstick or head pointer (Figure 7-11) can be used with head and neck movement to access a keyboard or perform other manipulation tasks (e.g., dialing a telephone number or turning pages in a book) (see Chapter 14). A head pointer consists of a rod with a rubber tip attached to a band that is worn around the top of the head; the individual uses the end of the rod to depress keys. To depress keys with a head pointer, the individual must be able to move the head not only vertically and horizontally but also in a third dimension: forward and backward. There are also light pointers that can be worn on the head or held in the hand to control devices. One advantage of head-mounted light pointers is that the user does not need to move the head forward or backward. Light pointers are described in greater detail in the section on pointing interfaces.

Mouthsticks are often used by individuals who have quadriplegia as a result of a spinal cord injury. A mouthstick consists of a pointer attached to a mouthpiece. The user grips the mouthpiece between the teeth and moves the head to manipulate control interfaces or other objects. The shaft of the mouthstick can be made from a wooden dowel, plastic, or aluminum. In some cases, interchangeable tips for different functions (e.g., painting, writing, typing) can be inserted into the distal end of the shaft. The mouthpiece can be a standard U shape that is gripped between the teeth or a custom-made insert. Puckett et al (1988) identify a number of criteria for mouthstick design, and mouthsticks are also available from several suppliers. Use of a mouthstick requires good oral-motor control; training to develop these skills is discussed later in this chapter.

The consumer’s range and resolution with the control enhancer can be determined using the same methods discussed in Chapter 4. In some cases, particularly when the consumer’s range needs to be extended (e.g., when the head is the likely control site), it is apparent at the beginning of the evaluation that the consumer will benefit from using a control enhancer. When the user has adequate range but resolution is in question, it may not be obvious during the physical-sensory evaluation whether a control enhancer will be beneficial. In these cases we recommend comparative testing of candidate interfaces with and without the control enhancer, which gives the ATP objective data on the enhancer’s effectiveness. Certain control interfaces, however, cannot be activated with a control enhancer. These include displays specially designed for use with light pointers, eye-controlled systems, and capacitive switches that require skin contact for activation (Lee and Thomas, 1990).